Cloudera Manager 5.11 stuck at Distributed on CentOS 7 in Vmware 12.x

Created on 04-26-2017 01:37 AM - edited 09-16-2022 04:30 AM

I have been trying to install Cloudera Manager for the last 7 days, and the installation is stuck while distributing the parcels.

OS : CentOS 7

Virtualization : VMware 12

Settings preconfigured on the three nodes are as follows:

/etc/hosts

127.0.0.1 localhost

192.168.10.194 master

192.168.10.89 slave1

192.168.10.90 slave2

/etc/hostname

master (on the slave machines, it is the slave's own hostname)

/etc/sysconfig/network

NETWORKING=yes

HOSTNAME=master

RES_OPTIONS="single-request-reopen"

Disabled the firewall and SELinux; iptables is not installed on CentOS 7.

Methods already tried:

Skipped the Java installation step: not working.

No Java installed (removed all Java from the system): not working.
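
The /etc/hosts entries listed above can be sanity-checked with a small script. This is only a sketch: it parses the text exactly as posted (the `HOSTS_TEXT` constant is copied from the question, and the helper names `parse_hosts` and `loopback_names` are made up for illustration) and flags any name that maps to a loopback address, which is a common pitfall when a cluster hostname ends up on the 127.0.0.1 line.

```python
# Hosts entries copied from the question; on a real node you would read /etc/hosts instead.
HOSTS_TEXT = """\
127.0.0.1 localhost
192.168.10.194 master
192.168.10.89 slave1
192.168.10.90 slave2
"""


def parse_hosts(text):
    """Return {hostname: ip} from /etc/hosts-style text (first mapping wins)."""
    mapping = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line:
            continue
        ip, *names = line.split()
        for name in names:
            mapping.setdefault(name, ip)
    return mapping


def loopback_names(mapping):
    """Names that resolve to a loopback address."""
    return sorted(n for n, ip in mapping.items() if ip.startswith("127.") or ip == "::1")


hosts = parse_hosts(HOSTS_TEXT)
for name in ("master", "slave1", "slave2"):
    print(name, "->", hosts.get(name, "MISSING"))
print("loopback names:", loopback_names(hosts))
```

With the entries as posted, only `localhost` should appear in the loopback list; if `master` or a slave name showed up there, that would be worth fixing before retrying.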

Log :

[26/Apr/2017 13:22:23 +0000] 6023 MainThread agent INFO CM server guid: 366ec894-eadd-4e1b-92e4-c1fe81acfc1c

[26/Apr/2017 13:22:23 +0000] 6023 MainThread agent INFO Using parcels directory from server provided value: /opt/cloudera/parcels

[26/Apr/2017 13:22:24 +0000] 6023 MainThread parcel INFO Agent does create users/groups and apply file permissions

[26/Apr/2017 13:22:24 +0000] 6023 MainThread parcel_cache INFO Using /opt/cloudera/parcel-cache for parcel cache

[26/Apr/2017 13:22:24 +0000] 6023 MainThread agent ERROR Caught unexpected exception in main loop.

Traceback (most recent call last):

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/cmf-5.11.0-py2.7.egg/cmf/agent.py", line 710, in __issue_heartbeat

self._init_after_first_heartbeat_response(resp_data)

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/cmf-5.11.0-py2.7.egg/cmf/agent.py", line 948, in _init_after_first_heartbeat_response

self.client_configs.load()

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/cmf-5.11.0-py2.7.egg/cmf/client_configs.py", line 713, in load

new_deployed.update(self._lookup_alternatives(fname))

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/cmf-5.11.0-py2.7.egg/cmf/client_configs.py", line 434, in _lookup_alternatives

return self._parse_alternatives(alt_name, out)

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/cmf-5.11.0-py2.7.egg/cmf/client_configs.py", line 446, in _parse_alternatives

path, _, _, priority_str = line.rstrip().split(" ")

ValueError: too many values to unpack
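
The last frame of the traceback unpacks a space-split line into exactly four names, so any line with more than four space-separated tokens raises this error. The sketch below reproduces that behaviour in isolation. The two sample lines are hypothetical, not taken from this system; the second assumes an `alternatives` listing that carries extra fields (such as a family name) between the path and the priority. `parse_strict` and `parse_tolerant` are illustrative names, not Cloudera functions.

```python
def parse_strict(line):
    """Same unpacking pattern as the failing line in the traceback."""
    path, _, _, priority_str = line.rstrip().split(" ")
    return path, int(priority_str)


def parse_tolerant(line):
    """Illustrative alternative: take the first token as path, the last as priority."""
    tokens = line.split()
    return tokens[0], int(tokens[-1])


# Hypothetical sample lines (assumptions for illustration only).
OLD_STYLE = "/usr/java/example-jdk/bin/java - priority 10"
NEW_STYLE = "/usr/lib/jvm/example-openjdk/bin/java - family example-openjdk priority 1800131"

print(parse_strict(OLD_STYLE))      # four tokens: unpacks cleanly
try:
    parse_strict(NEW_STYLE)         # six tokens: unpacking fails
except ValueError as exc:
    print("ValueError:", exc)
print(parse_tolerant(NEW_STYLE))    # tolerant version still finds path and priority
```

This only illustrates why the agent's parser could choke on the alternatives entries; it is not a patch for the agent. On Python 3 the message also includes the expected count.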

Server.log

2017-04-26 13:33:19,577 INFO ProcessStalenessDetector-0:com.cloudera.cmf.service.config.components.ProcessStalenessDetector: Staleness check done. Duration: PT0.003S

2017-04-26 13:33:19,577 INFO ProcessStalenessDetector-0:com.cloudera.cmf.service.config.components.ProcessStalenessDetector: Staleness check execution stats: average=0ms, min=0ms, max=0ms.

2017-04-26 13:33:19,750 INFO NodeConfiguratorThread-0-2:com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave2: New state is a done state

2017-04-26 13:33:19,750 INFO NodeConfiguratorThread-0-2:com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave2: Transitioning from WAIT_FOR_HEARTBEAT (PT4.021S) to COMPLETE

2017-04-26 13:33:19,750 INFO NodeConfiguratorThread-0-2:net.schmizz.sshj.transport.TransportImpl: Disconnected - BY_APPLICATION

2017-04-26 13:35:43,883 INFO StaleEntityEviction:com.cloudera.server.cmf.StaleEntityEvictionThread: Reaped total of 0 deleted commands

2017-04-26 13:35:43,884 INFO StaleEntityEviction:com.cloudera.server.cmf.StaleEntityEvictionThread: Found no commands older than 2015-04-27T08:05:43.883Z to reap.

2017-04-26 13:35:43,884 INFO StaleEntityEviction:com.cloudera.server.cmf.StaleEntityEvictionThread: Wizard is active, not reaping scanners or configurators

2017-04-26 13:35:53,072 INFO NodeConfiguratorThread-0-1:com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave1: Transitioning from PACKAGE_INSTALL cloudera-manager-agent (PT847.701S) to PACKAGE_INSTALL cloudera-manager-daemons

2017-04-26 13:35:54,074 INFO NodeConfiguratorThread-0-1:com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave1: Transitioning from PACKAGE_INSTALL cloudera-manager-daemons (PT1.002S) to INSTALL_JCE

2017-04-26 13:35:54,075 INFO NodeConfiguratorThread-0-1:com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave1: Transitioning from INSTALL_JCE (PT0.001S) to AGENT_CONFIGURE

2017-04-26 13:35:54,075 INFO NodeConfiguratorThread-0-1:com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave1: Transitioning from AGENT_CONFIGURE (PT0S) to AGENT_START

2017-04-26 13:35:54,684 INFO NodeConfiguratorThread-0-1:com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave1: Transitioning from AGENT_START (PT0.609S) to SCRIPT_SUCCESS

2017-04-26 13:35:54,684 INFO NodeConfiguratorThread-0-1:com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave1: Transitioning from SCRIPT_SUCCESS (PT0S) to WAIT_FOR_HEARTBEAT

2017-04-26 13:35:58,413 INFO 1020143030@agentServer-0:com.cloudera.server.cmf.AgentProtocolImpl: Setting default rackId for host 28fea9dc-c720-406a-a1cc-045e2d00e28f: /default

2017-04-26 13:35:58,423 INFO 1020143030@agentServer-0:com.cloudera.server.cmf.AgentProtocolImpl: [DbHost{id=3, hostId=28fea9dc-c720-406a-a1cc-045e2d00e28f, hostName=slave1}] Added to rack group: /default

2017-04-26 13:35:58,440 INFO 1020143030@agentServer-0:com.cloudera.cmf.service.ServiceHandlerRegistry: Executing command ProcessStalenessCheckCommand BasicCmdArgs{args=[First reason why: com.cloudera.cmf.model.DbHost.name (#3) has changed]}.

2017-04-26 13:35:58,453 INFO ProcessStalenessDetector-0:com.cloudera.cmf.service.config.components.ProcessStalenessDetector: Running staleness check with QUICK_CHECK for 0/0 roles.

2017-04-26 13:35:58,453 INFO ProcessStalenessDetector-0:com.cloudera.cmf.service.config.components.ProcessStalenessDetector: Staleness check done. Duration: PT0.002S

2017-04-26 13:35:58,453 INFO ProcessStalenessDetector-0:com.cloudera.cmf.service.config.components.ProcessStalenessDetector: Staleness check execution stats: average=0ms, min=0ms, max=0ms.

2017-04-26 13:35:58,704 INFO NodeConfiguratorThread-0-1:com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave1: New state is a done state

2017-04-26 13:35:58,704 INFO NodeConfiguratorThread-0-1:com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave1: Transitioning from WAIT_FOR_HEARTBEAT (PT4.020S) to COMPLETE

2017-04-26 13:35:58,704 INFO NodeConfiguratorThread-0-1:net.schmizz.sshj.transport.TransportImpl: Disconnected - BY_APPLICATION

2017-04-26 13:36:02,548 INFO 1418155107@scm-web-4:com.cloudera.server.cmf.components.OperationsManagerImpl: Creating cluster cluster with version CDH 5 and rounded off version CDH 5.0.0

2017-04-26 13:36:02,615 INFO 1418155107@scm-web-4:com.cloudera.cmf.service.ServiceHandlerRegistry: Executing command ProcessStalenessCheckCommand BasicCmdArgs{args=[First reason why: com.cloudera.cmf.model.DbHost.cluster (#1) has changed]}.

2017-04-26 13:36:02,707 INFO ProcessStalenessDetector-0:com.cloudera.cmf.service.config.components.ProcessStalenessDetector: Running staleness check with QUICK_CHECK for 0/0 roles.

2017-04-26 13:36:02,708 INFO ProcessStalenessDetector-0:com.cloudera.cmf.service.config.components.ProcessStalenessDetector: Staleness check done. Duration: PT0.003S

2017-04-26 13:36:02,709 INFO ProcessStalenessDetector-0:com.cloudera.cmf.service.config.components.ProcessStalenessDetector: Staleness check execution stats: average=0ms, min=0ms, max=0ms.

2017-04-26 13:36:06,583 INFO ParcelUpdateService:com.cloudera.parcel.components.ParcelManagerImpl: Downloading parcels of CDH:5.11.0-1.cdh5.11.0.p0.34 for distros [RHEL7]

2017-04-26 13:36:06,820 INFO ParcelUpdateService:com.cloudera.parcel.components.ParcelDownloaderImpl: Preparing to download: https://archive.cloudera.com/cdh5/parcels/5.11/CDH-5.11.0-1.cdh5.11.0.p0.34-el7.parcel

2017-04-26 13:38:58,375 INFO 1020143030@agentServer-0:com.cloudera.server.common.MonitoringThreadPool: agentServer: execution stats: average=9ms, min=0ms, max=162ms.

2017-04-26 13:38:58,375 INFO 1020143030@agentServer-0:com.cloudera.server.common.MonitoringThreadPool: agentServer: waiting in queue stats: average=0ms, min=0ms, max=37ms.

2017-04-26 13:45:43,916 INFO StaleEntityEviction:com.cloudera.server.cmf.StaleEntityEvictionThread: Reaped total of 0 deleted commands

2017-04-26 13:45:43,921 INFO StaleEntityEviction:com.cloudera.server.cmf.StaleEntityEvictionThread: Found no commands older than 2015-04-27T08:15:43.918Z to reap.

2017-04-26 13:45:43,923 INFO StaleEntityEviction:com.cloudera.server.cmf.StaleEntityEvictionThread: Wizard is active, not reaping scanners or configurators

2017-04-26 13:45:53,551 INFO ScmActive-0:com.cloudera.server.cmf.components.ScmActive: (119 skipped) ScmActive completed successfully.

2017-04-26 13:48:58,867 INFO 442631510@agentServer-8:com.cloudera.server.common.MonitoringThreadPool: agentServer: execution stats: average=9ms, min=0ms, max=162ms.

2017-04-26 13:48:58,868 INFO 442631510@agentServer-8:com.cloudera.server.common.MonitoringThreadPool: agentServer: waiting in queue stats: average=0ms, min=0ms, max=37ms.

2017-04-26 13:55:43,941 INFO StaleEntityEviction:com.cloudera.server.cmf.StaleEntityEvictionThread: Reaped total of 0 deleted commands

2017-04-26 13:55:43,944 INFO StaleEntityEviction:com.cloudera.server.cmf.StaleEntityEvictionThread: Found no commands older than 2015-04-27T08:25:43.942Z to reap.

2017-04-26 13:55:43,945 INFO StaleEntityEviction:com.cloudera.server.cmf.StaleEntityEvictionThread: Wizard is active, not reaping scanners or configurators

2017-04-26 13:58:59,329 INFO 1193173764@agentServer-11:com.cloudera.server.common.MonitoringThreadPool: agentServer: execution stats: average=8ms, min=1ms, max=96ms.

2017-04-26 13:58:59,329 INFO 1193173764@agentServer-11:com.cloudera.server.common.MonitoringThreadPool: agentServer: waiting in queue stats: average=0ms, min=0ms, max=37ms.

2017-04-26 14:00:00,035 INFO com.cloudera.cmf.scheduler-1_Worker-1:com.cloudera.cmf.service.ServiceHandlerRegistry: Executing command GlobalPoolsRefresh BasicCmdArgs{scheduleId=1, scheduledTime=2017-04-26T08:30:00.000Z}.

2017-04-26 14:00:00,049 INFO com.cloudera.cmf.scheduler-1_Worker-1:com.cloudera.cmf.scheduler.CommandDispatcherJob: Skipping scheduled command 'GlobalPoolsRefresh' since it is a noop.
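
The NodeConfiguratorProgress lines in the server log above record how long each installation step took, as ISO-8601 durations such as PT847.701S. A rough sketch for pulling those out and finding the slowest step follows; the three sample lines are copied from the log above, the helper name `step_durations` is made up, and the pattern only handles seconds-only durations of the form seen here.

```python
import re

# Three lines copied from the server log above.
LOG_LINES = [
    "2017-04-26 13:33:19,750 INFO NodeConfiguratorThread-0-2:"
    "com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave2: "
    "Transitioning from WAIT_FOR_HEARTBEAT (PT4.021S) to COMPLETE",
    "2017-04-26 13:35:53,072 INFO NodeConfiguratorThread-0-1:"
    "com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave1: "
    "Transitioning from PACKAGE_INSTALL cloudera-manager-agent (PT847.701S) "
    "to PACKAGE_INSTALL cloudera-manager-daemons",
    "2017-04-26 13:35:54,684 INFO NodeConfiguratorThread-0-1:"
    "com.cloudera.server.cmf.node.NodeConfiguratorProgress: slave1: "
    "Transitioning from AGENT_START (PT0.609S) to SCRIPT_SUCCESS",
]

# host, finished step, seconds spent in it, next step
PATTERN = re.compile(
    r"NodeConfiguratorProgress: (\S+): Transitioning from (.+?) "
    r"\(PT([\d.]+)S\) to (.+)$"
)


def step_durations(lines):
    """Return a list of (host, step, seconds) for every transition line."""
    steps = []
    for line in lines:
        match = PATTERN.search(line)
        if match:
            host, step, seconds, _next_step = match.groups()
            steps.append((host, step, float(seconds)))
    return steps


slowest = max(step_durations(LOG_LINES), key=lambda s: s[2])
print(slowest)
```

In this excerpt, the agent package install on slave1 dominates at roughly 14 minutes, which points at slow package download rather than a failure in the later steps.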

I tried this solution: https://community.cloudera.com/t5/Cloudera-Manager-Installation/Problem-with-cloudera-agent/td-p/476... (I don't know what to do with alternatives).

There is no disk space constraint.

I think many people with little experience installing Cloudera over the Internet run into this problem. Please share the proper steps to resolve this issue, as I have been trying to resolve it for the last ten days. Please help.

Created on 04-26-2017 02:53 AM - edited 04-26-2017 02:54 AM

Could you run the following command on each host:

rpm -qa | grep -i "jdk"

and paste the output here, please?

Also, did you meet all the prerequisites?

Created 04-26-2017 06:53 AM

copy-jdk-configs-1.2-1.el7.noarch

java-1.7.0-openjdk-headless-1.7.0.131-2.6.9.0.el7_3.x86_64

java-1.8.0-openjdk-headless-1.8.0.131-2.b11.el7_3.x86_64

java-1.8.0-openjdk-1.8.0.131-2.b11.el7_3.x86_64

java-1.7.0-openjdk-1.7.0.131-2.6.9.0.el7_3.x86_64

[root@master user]#

Created 04-26-2017 06:54 AM

[root@master user]# rpm -qa | grep -i "jdk"

copy-jdk-configs-1.2-1.el7.noarch

java-1.7.0-openjdk-headless-1.7.0.131-2.6.9.0.el7_3.x86_64

java-1.8.0-openjdk-headless-1.8.0.131-2.b11.el7_3.x86_64

java-1.8.0-openjdk-1.8.0.131-2.b11.el7_3.x86_64

java-1.7.0-openjdk-1.7.0.131-2.6.9.0.el7_3.x86_64

[root@master user]#

What are the prerequisites? In my question I posted exactly the steps I have done, no more and no less. Please check and let me know if you need anything else.

Created 04-26-2017 07:02 AM

Try removing all Cloudera packages (server, agent, daemons) from all nodes.

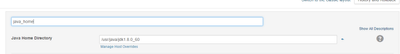

Remove all OpenJDK packages. Install the Oracle JDK, e.g. jdk1.8.0_60. Set the JAVA_HOME variable.

Then install the Cloudera Manager server on your CM node, start Cloudera Manager, go to the CM settings, and set the java_home parameter. After that, go back to your installation and try to distribute the parcels.
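
For the "remove all OpenJDK packages" step, it can help to first list exactly which installed packages would be affected. The sketch below filters the `rpm -qa | grep -i "jdk"` output posted earlier in this thread (copied into `RPM_OUTPUT`); the helper name `openjdk_packages` is made up for illustration, and the script only prints names, it removes nothing.

```python
# Output of `rpm -qa | grep -i "jdk"` as posted from the master node.
RPM_OUTPUT = """\
copy-jdk-configs-1.2-1.el7.noarch
java-1.7.0-openjdk-headless-1.7.0.131-2.6.9.0.el7_3.x86_64
java-1.8.0-openjdk-headless-1.8.0.131-2.b11.el7_3.x86_64
java-1.8.0-openjdk-1.8.0.131-2.b11.el7_3.x86_64
java-1.7.0-openjdk-1.7.0.131-2.6.9.0.el7_3.x86_64
"""


def openjdk_packages(text):
    """Return the package names that contain 'openjdk' (case-insensitive)."""
    return [pkg for pkg in text.split() if "openjdk" in pkg.lower()]


for pkg in openjdk_packages(RPM_OUTPUT):
    print(pkg)
```

On the posted output this selects the four java-1.7.0 and java-1.8.0 OpenJDK packages and leaves copy-jdk-configs out; the same check would need to be repeated on each slave, since only the master's output was shared.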

Created on 04-26-2017 07:26 AM - edited 04-26-2017 07:28 AM

Super Ji, I have removed the JDK/Java-related packages from the master node and, to my surprise, the parcels started distributing.

But they are still not completely distributed: distribution is at 67% and unpacking at 0%, and it has been stuck there for a long time.

Out of the three nodes, only two started distributing and unpacking; the third has not even started and seems stuck. What could the problem be? Or do I have to start everything from the beginning?

Created on 04-26-2017 07:30 AM - edited 04-26-2017 07:32 AM

Could you provide the logs (/var/log/cloudera-scm-server/cloudera-scm-server.log) from the CM node, please?

Created on 04-26-2017 07:37 AM - edited 04-26-2017 07:42 AM

I have posted logs below

SCM-SERVER.log

SCM AGENT LOG :

Created 04-26-2017 07:43 AM

Grab the logs from all the other nodes as well, please (/var/log/cloudera-scm-agent/cloudera-scm-agent.log).

Created 04-26-2017 07:47 AM

Are you able to establish a telnet connection from the CM node to slave1?

telnet slave1.dev.local 9000
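
If telnet is not installed on the CM node, the same reachability check can be approximated in a few lines of Python. This is a minimal sketch: `can_connect` is an illustrative helper name, and the host and port to pass in are the ones from the telnet command above (whether `slave1.dev.local` resolves in this environment is an assumption, since the posted /etc/hosts only lists the short name `slave1`).

```python
import socket


def can_connect(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False
```

Calling `can_connect("slave1.dev.local", 9000)` from the CM node mirrors the telnet test; a `False` result does not distinguish between a closed port, a firewall drop, and a hostname that does not resolve, so trying the short name `slave1` as well may narrow it down.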