LOAD DATA time for parquet file depends on partition/table size

Labels: Apache Impala

Created on 01-18-2019 12:49 PM - edited 09-16-2022 07:04 AM

Hi,

I noticed that the time LOAD DATA takes to load a single file into a partitioned, internal Parquet table is proportional to the partition and table size.

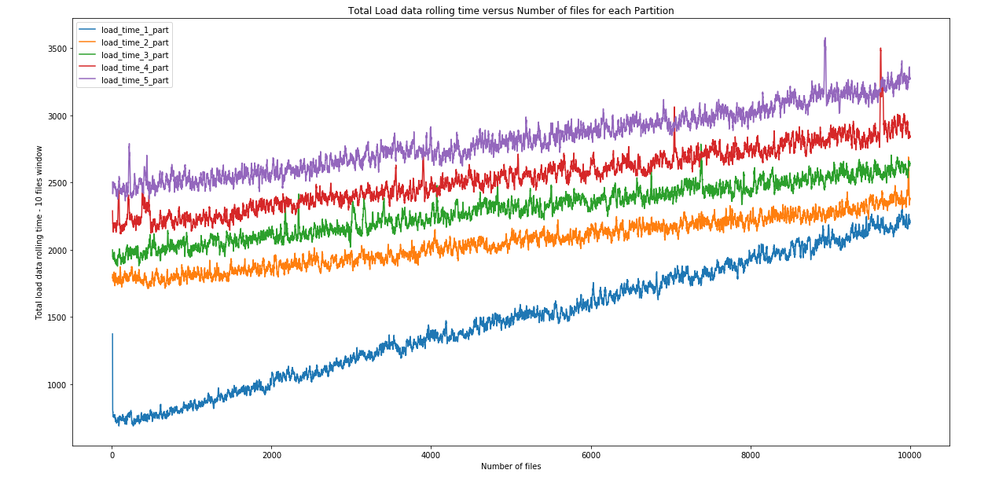

I then ran an experiment in which I repeatedly ran LOAD DATA on the same Parquet file (around 1 MB in size, renamed uniquely each time) until one partition was filled with 10k files. I then continued filling the next partition, until 5 partitions each held 10k files (a full partition is around 12 GB in size).
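The experiment described above could be sketched roughly as follows. This is a minimal sketch, not the original script: the `execute` callable, the table name, the partition column and the file paths are all hypothetical placeholders. In practice `execute` would be whatever runs a statement against Impala (for example through Ibis). Only the `LOAD DATA INPATH ... INTO TABLE ... PARTITION (...)` statement shape is standard Impala syntax.

```python
import time
from typing import Callable, List


def load_data_sql(path: str, table: str, part_col: str, part_val: int) -> str:
    """Build an Impala LOAD DATA statement for a single file and partition."""
    return (
        f"LOAD DATA INPATH '{path}' "
        f"INTO TABLE {table} PARTITION ({part_col}={part_val})"
    )


def time_loads(execute: Callable[[str], None], paths: List[str], table: str,
               part_col: str, part_val: int) -> List[float]:
    """Run one LOAD DATA per file and return the elapsed seconds of each call."""
    timings = []
    for path in paths:
        sql = load_data_sql(path, table, part_col, part_val)
        start = time.perf_counter()
        execute(sql)  # hypothetical: e.g. a thin wrapper around an Impala client
        timings.append(time.perf_counter() - start)
    return timings


if __name__ == "__main__":
    # Stand-in executor so the sketch runs without a cluster.
    issued: List[str] = []
    files = [f"/tmp/staging/file_{i}.parq" for i in range(3)]  # hypothetical paths
    print(time_loads(issued.append, files, "my_db.my_table", "part", 1))
    print(issued[0])
```

Plotting the returned timings against the running file count per partition would give a chart comparable to the one in this post.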

The results are the following:

LOAD DATA time increases slightly within a partition, and when a new partition is started the time continues from where the previous partition left off.

Note: we are using the Ibis framework to run LOAD DATA.

Could you explain a bit about the Impala LOAD DATA internals to account for the increase in time as the table grows?

Thanks,

Paulo.

Created 01-22-2019 09:05 AM

This affected us as well. Here are some potentially related bugs. My understanding of the internals is that LOAD DATA does a full table refresh. If you run LOAD DATA with a partition, ideally it would do only an incremental refresh instead of a full one, and you would get closer to flat times.

https://issues.apache.org/jira/browse/IMPALA-7330

https://issues.apache.org/jira/browse/IMPALA-7854

What version of Impala are you using?

Created 01-23-2019 12:48 PM

Hi,

Thanks for your answer.

I enabled debug-level logging while calling LOAD DATA through the Ibis framework and saw a cmd=listStatus call for every partition in the table, followed by a call to cmd=open, which is the final step in handling the LOAD DATA statement. I am running LOAD DATA with both the table and the partition specified.
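To check how many of these calls a single LOAD DATA triggers, the `cmd=` tokens in the captured log can be tallied. The snippet below is a small sketch that assumes each log line contains a `cmd=<name>` token, as seen above. The sample lines are made up for illustration, and real log lines carry more fields.

```python
import re
from collections import Counter
from typing import Iterable

# Matches tokens such as cmd=listStatus or cmd=open anywhere in a line.
CMD_RE = re.compile(r"\bcmd=(\w+)")


def count_commands(lines: Iterable[str]) -> Counter:
    """Count occurrences of each cmd=<name> token in the given log lines."""
    counts: Counter = Counter()
    for line in lines:
        match = CMD_RE.search(line)
        if match:
            counts[match.group(1)] += 1
    return counts


if __name__ == "__main__":
    # Made-up sample lines, one per partition plus the final open.
    sample = [
        "cmd=listStatus src=/warehouse/t/part=1",
        "cmd=listStatus src=/warehouse/t/part=2",
        "cmd=open src=/staging/file_0.parq",
    ]
    print(count_commands(sample))
```

If the listStatus count matches the number of partitions in the table, that supports the observation that every partition is listed on each load.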

The listStatus command is mentioned in the Impala code here: https://github.com/apache/impala/search?q=listStatus&unscoped_q=listStatus. It is hard to follow the whole thing, but I suppose those calls are used as a "light refresh" to identify modified files in each partition.

If listStatus performance depends on the number of files in the partition (but probably not on its size) and it is called for every partition in the table, I can see why the LOAD DATA runtime increases as the chart suggests.
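This reasoning can be written down as a toy cost model. It only illustrates the hypothesis and is not Impala's actual implementation: it assumes each LOAD DATA lists every partition and that a listing costs a fixed overhead plus a per-file term. The constants `per_partition_s` and `per_file_s` are invented for the example.

```python
from typing import Dict


def modeled_load_seconds(files_per_partition: Dict[str, int],
                         per_partition_s: float = 0.01,
                         per_file_s: float = 0.00002) -> float:
    """Modeled LOAD DATA time if every partition is listed on each load."""
    return sum(per_partition_s + per_file_s * n
               for n in files_per_partition.values())


if __name__ == "__main__":
    table: Dict[str, int] = {}
    for part in range(1, 4):
        name = f"part={part}"
        # Fill each partition in steps and print the modeled time at each step.
        for file_count in (1, 5_000, 10_000):
            table[name] = file_count
            print(name, file_count, round(modeled_load_seconds(table), 4))
```

Under these assumptions the modeled time rises slowly while a partition fills, takes a small step when a new partition appears, and then continues from the previous level, which is the shape described in the original post.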

I think you have also noticed that REFRESH time grows A LOT as the partition size increases (for instance, on the same partition containing 10k files in my experiment it would take more than 40 seconds), so I'm reasonably happy with this workflow using LOAD DATA, despite the seemingly unavoidable degradation in performance as the table grows.

It can also be noticed that the REFRESH runtime does not show a small jump when a new partition is added. Rather, it grows only as the partition being refreshed grows.

Other workflows using Hive or direct upload into HDFS would require REFRESH, which would become impractical in the long term as the partition grows.

Impala version is 2.11.

Created 01-23-2019 12:53 PM
