MKDirs failed to create file

Created on 12-10-2015 05:23 AM - edited 09-16-2022 02:52 AM

Hello,

I am trying to run a basic query in Hive, and it fails with the error "Mkdirs failed to create file":

Query:

select name from batting;

Error Log:

Error: java.lang.RuntimeException: org.apache.hadoop.hive.ql.metadata.HiveException: Hive Runtime Error while processing row {"id":29,"name":"PA Patel","runs":205,"high_score":57,"average":20.5,"strike_rate":110.81,"sixes":1,"team":"Bangalore"}

at org.apache.hadoop.hive.ql.exec.ExecMapper.map(ExecMapper.java:161)

at org.apache.hadoop.mapred.MapRunner.run(MapRunner.java:54)

at org.apache.hadoop.mapred.MapTask.runOldMapper(MapTask.java:399)

at org.apache.hadoop.mapred.MapTask.run(MapTask.java:334)

at org.apache.hadoop.mapred.YarnChild$2.run(YarnChild.java:152)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:396)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1332)

at org.apache.hadoop.mapred.YarnChild.main(YarnChild.java:147)

Caused by: org.apache.hadoop.hive.ql.metadata.HiveException: Hive Runtime Error while processing row {"id":29,"name":"PA Patel","runs":205,"high_score":57,"average":20.5,"strike_rate":110.81,"sixes":1,"team":"Bangalore"}

at org.apache.hadoop.hive.ql.exec.MapOperator.process(MapOperator.java:548)

at org.apache.hadoop.hive.ql.exec.ExecMapper.map(ExecMapper.java:143)

... 8 more

Caused by: org.apache.hadoop.hive.ql.metadata.HiveException: java.io.IOException: Mkdirs failed to create file:/tmp/training/hive_2015-12-10_08-07-28_115_8039040536647708382/_task_tmp.-ext-10001

at org.apache.hadoop.hive.ql.io.HiveFileFormatUtils.getHiveRecordWriter(HiveFileFormatUtils.java:237)

at org.apache.hadoop.hive.ql.exec.FileSinkOperator.createBucketFiles(FileSinkOperator.java:477)

at org.apache.hadoop.hive.ql.exec.FileSinkOperator.processOp(FileSinkOperator.java:525)

at org.apache.hadoop.hive.ql.exec.Operator.process(Operator.java:471)

at org.apache.hadoop.hive.ql.exec.Operator.forward(Operator.java:762)

at org.apache.hadoop.hive.ql.exec.SelectOperator.processOp(SelectOperator.java:84)

at org.apache.hadoop.hive.ql.exec.Operator.process(Operator.java:471)

at org.apache.hadoop.hive.ql.exec.Operator.forward(Operator.java:762)

at org.apache.hadoop.hive.ql.exec.TableScanOperator.processOp(TableScanOperator.java:83)

at org.apache.hadoop.hive.ql.exec.Operator.process(Operator.java:471)

at org.apache.hadoop.hive.ql.exec.Operator.forward(Operator.java:762)

at org.apache.hadoop.hive.ql.exec.MapOperator.process(MapOperator.java:531)

... 9 more

Caused by: java.io.IOException: Mkdirs failed to create file:/tmp/training/hive_2015-12-10_08-07-28_115_8039040536647708382/_task_tmp.-ext-10001

at org.apache.hadoop.fs.ChecksumFileSystem.create(ChecksumFileSystem.java:434)

at org.apache.hadoop.fs.ChecksumFileSystem.create(ChecksumFileSystem.java:420)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:805)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:786)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:685)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:674)

at org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat.getHiveRecordWriter(HiveIgnoreKeyTextOutputFormat.java:80)

at org.apache.hadoop.hive.ql.io.HiveFileFormatUtils.getRecordWriter(HiveFileFormatUtils.java:246)

at org.apache.hadoop.hive.ql.io.HiveFileFormatUtils.getHiveRecordWriter(HiveFileFormatUtils.java:234)

... 20 more

Execution failed with exit status: 2

Obtaining error information

I checked the permissions on /tmp/training: Owner and Group were set to 'Can Create Files and Directories' and Others to 'Can Access Files'. I changed Others to 'Can Create Files and Directories' as well (this is a test pseudo-node environment, so changing permissions is not a concern). However, the error persists.

I am on Hive 0.9 with CDH 4.1.1. Any help would be appreciated, as I am fairly new to troubleshooting Hive issues.

Thanks

snm1523

Created 12-19-2015 10:16 AM

hadoop fs -ls /tmp/training

hadoop fs -ls -d /tmp/training

hadoop fs -ls -d /tmp

Note that CDH4 is way past its EOL (End Of Life) and is no longer supported by Cloudera. It is recommended to use CDH5 instead.

Created 12-28-2015 07:04 AM

Hello Harsh,

Apologies for the very late reply; I was tied up with other issues.

I am using the Cloudera-udacity 4.1.1 VM, which comes preconfigured with that version.

I am logged in as the user training.

When I tried the commands, I found that the folder training did not exist; a folder named 'hive-training' was present instead. So I renamed hive-training to training in HDFS and was then able to run the commands.

[training@localhost ~]$ hadoop fs -ls /tmp/training

[training@localhost ~]$ hadoop fs -ls -d /tmp/training

Found 1 items

drwxr-xr-x - training supergroup 0 2015-12-09 01:59 /tmp/training

[training@localhost ~]$ hadoop fs -ls -d /tmp/

Found 1 items

drwxrwxrwt - hdfs supergroup 0 2015-12-28 09:52 /tmp

[training@localhost ~]$

I also noticed that the Hive warehouse is stored under /user. Below is the output of a few additional commands:

[training@localhost ~]$ hadoop fs -ls /user

Found 5 items

drwxrwxrwt - yarn supergroup 0 2014-07-27 09:35 /user/history

drwxr-xr-x - hue supergroup 0 2013-09-05 20:08 /user/hive

drwxr-xr-x - hue hue 0 2013-09-10 10:37 /user/hue

drwxr-xr-x - training supergroup 0 2015-12-08 22:36 /user/training

drwxr-xr-x - training supergroup 0 2014-07-27 03:52 /user/trial

[training@localhost ~]$ hadoop fs -ls /user/training

Found 19 items

drwx------ - training supergroup 0 2015-12-28 09:53 /user/training/.staging

-rw-r--r-- 1 training supergroup 2144 2015-12-08 22:12 /user/training/Batting.csv

drwxr-xr-x - training supergroup 0 2015-12-08 22:36 /user/training/hive_case

drwxr-xr-x - training supergroup 0 2014-07-27 07:35 /user/training/out

drwxr-xr-x - training supergroup 0 2014-07-27 08:20 /user/training/out1

drwxr-xr-x - training supergroup 0 2014-07-27 09:01 /user/training/out10

drwxr-xr-x - training supergroup 0 2014-07-27 09:10 /user/training/out11

drwxr-xr-x - training supergroup 0 2014-07-27 09:11 /user/training/out12

drwxr-xr-x - training supergroup 0 2014-07-27 09:45 /user/training/out13

drwxr-xr-x - training supergroup 0 2014-07-27 09:35 /user/training/out19

drwxr-xr-x - training supergroup 0 2014-07-27 08:15 /user/training/out2

drwxr-xr-x - training supergroup 0 2014-07-27 09:37 /user/training/out20

drwxr-xr-x - training supergroup 0 2014-07-27 08:21 /user/training/out3

drwxr-xr-x - training supergroup 0 2014-07-27 08:25 /user/training/out4

drwxr-xr-x - training supergroup 0 2014-07-27 08:38 /user/training/out5

drwxr-xr-x - training supergroup 0 2014-07-27 08:43 /user/training/out6

drwxr-xr-x - training supergroup 0 2014-07-27 08:45 /user/training/out7

drwxr-xr-x - training supergroup 0 2014-07-27 08:58 /user/training/out8

drwxr-xr-x - training supergroup 0 2014-07-27 08:59 /user/training/out9

[training@localhost ~]$ hadoop fs -ls /user/hive

Found 1 items

drwxrwxrwx - hue supergroup 0 2015-12-09 02:00 /user/hive/warehouse

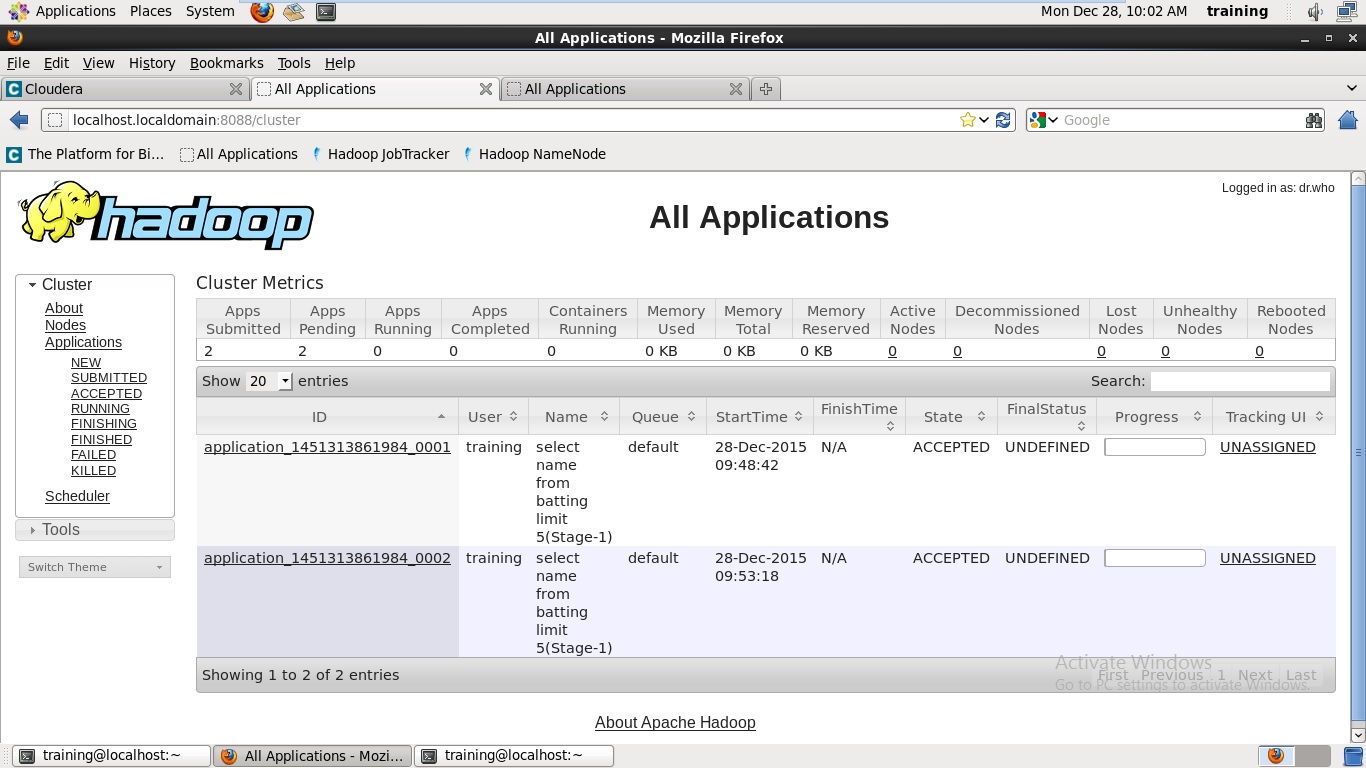

In addition, I am able to execute select * from batting; successfully. However, select name from batting; no longer returns an error; instead the job just stays in the pending state. I am also unable to kill the job, as I get the error below.

[training@localhost ~]$ hadoop job -kill application_1451313861984_0001

DEPRECATED: Use of this script to execute mapred command is deprecated.

Instead use the mapred command for it.

Exception in thread "main" java.lang.IllegalArgumentException: JobId string : application_1451313861984_0001 is not properly formed

at org.apache.hadoop.mapreduce.JobID.forName(JobID.java:156)

at org.apache.hadoop.mapreduce.tools.CLI.run(CLI.java:273)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:70)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:84)

at org.apache.hadoop.mapred.JobClient.main(JobClient.java:1250)

Lastly, I have attached a screenshot of Applications Manager page which shows that the jobs are in pending state.

Please advise.

Thanks

snm1523

Created 01-03-2016 09:38 PM

> Caused by: java.io.IOException: Mkdirs failed to create file:/tmp/training/hive_2015-12-10_08-07-28_115_8039040536647708382/_task_tmp.-ext-10001

> at org.apache.hadoop.fs.ChecksumFileSystem.create(ChecksumFileSystem.java:434)

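The file: scheme in the path and the ChecksumFileSystem frame indicate that the failing path is on the local filesystem rather than HDFS. Can you therefore ensure that the local /tmp directory exists on the root filesystem of every cluster host, with drwxrwxrwt permissions? Also try clearing out the local directory /tmp/training on every host and re-running the query.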
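The permission check suggested above could be scripted roughly as follows. This is a minimal sketch, assuming GNU coreutils `stat` (as on a Linux-based VM) and that it is run on each host in turn; the helper name `has_tmp_mode` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: verify that a local directory has the sticky, world-writable
# mode (drwxrwxrwt) that /tmp normally carries.
# Assumes GNU stat; has_tmp_mode is an illustrative helper name.

has_tmp_mode() {
    local dir="$1"
    [ -d "$dir" ] || return 1
    # %a prints the octal mode, e.g. 1777 for drwxrwxrwt
    [ "$(stat -c '%a' "$dir")" = "1777" ]
}

if has_tmp_mode /tmp; then
    echo "/tmp: mode OK"
else
    echo "/tmp: missing or not mode 1777"
fi

# To clear stale Hive scratch data (destructive; run on every host):
#   rm -rf /tmp/training/*
```

The `rm` line is left as a comment on purpose, since it deletes the Hive logs that live in the same directory.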

> instead the job is just going in pending state

If you look at your RM screenshot, it shows 0 active nodes. This means your NodeManager is unavailable, dead, or not started, so the RM has no resources to allocate (hence the hang in the PENDING state: it is waiting for a NodeManager to come along and satisfy the application's resource request). You may want to restart the NodeManager service and/or check its logs in case it went down for some FATAL reason.
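Two hedged follow-ups to the points above. The earlier `hadoop job -kill` failure occurs because that command expects a `job_...` ID, whereas the ID passed was in the YARN `application_...` form; the two share the same numeric suffix. The sketch below converts one to the other (the helper name `app_to_job_id` is hypothetical) and lists, as comments, commands that may help confirm the NodeManager state. The `yarn` subcommand and the `hadoop-yarn-nodemanager` service name are assumptions about a packaged CDH install and may differ on your version.

```shell
#!/usr/bin/env bash
# Sketch: map a YARN application ID to the matching MapReduce job ID.
# app_to_job_id is an illustrative helper, not part of Hadoop.

app_to_job_id() {
    local app_id="$1"
    case "$app_id" in
        application_*) echo "job_${app_id#application_}" ;;
        *) echo "not an application ID: $app_id" >&2; return 1 ;;
    esac
}

app_to_job_id application_1451313861984_0001

# Possible follow-up commands (assumed to be available; verify locally):
#   mapred job -kill "$(app_to_job_id application_1451313861984_0001)"
#   yarn node -list                                # registered NodeManagers
#   sudo service hadoop-yarn-nodemanager restart   # packaged CDH service name
```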

Created 01-11-2016 06:57 PM

Thank you for the help, Harsh. I found that the NM service was down; I restarted it and it's working now.

Created on 01-15-2016 05:37 PM - edited 01-15-2016 05:38 PM

Hello Harsh,

I am back with the same issue, using a fresh copy of the VM.

1. I have verified that the /tmp/training folder exists.

2. I have cleaned out the folder and tried the same query again; it still does not work.

3. I have checked the permissions of the tmp and training folders, and they seem correct:

drwxrwxrwt. 34 root root 4096 Jan 15 20:29 tmp

drwxrwxrwx 2 training training 4096 Jan 15 20:28 training

4. The NodeManager service is also running.

I still get the error below when executing select name from batting;

hive> select name from batting;

Total MapReduce jobs = 1

Launching Job 1 out of 1

Number of reduce tasks is set to 0 since there's no reduce operator

WARNING: org.apache.hadoop.metrics.jvm.EventCounter is deprecated. Please use org.apache.hadoop.log.metrics.EventCounter in all the log4j.properties files.

Execution log at: /tmp/training/training_20160115203535_f4fdd957-7680-48e3-b7d4-ac26a73b48b8.log

Job running in-process (local Hadoop)

Hadoop job information for null: number of mappers: 1; number of reducers: 0

2016-01-15 20:35:18,502 null map = 0%, reduce = 0%

2016-01-15 20:35:34,393 null map = 100%, reduce = 0%

Ended Job = job_1452671681915_0035 with errors

Error during job, obtaining debugging information...

Examining task ID: task_1452671681915_0035_m_000000 (and more) from job job_1452671681915_0035

Unable to retrieve URL for Hadoop Task logs. Does not contain a valid host:port authority: local

Task with the most failures(4):

-----

Task ID:

task_1452671681915_0035_m_000000

URL:

Unavailable

-----

Diagnostic Messages for this Task:

Error: java.lang.RuntimeException: org.apache.hadoop.hive.ql.metadata.HiveException: Hive Runtime Error while processing row {"id":29,"name":"PA Patel","runs":205,"high_score":57,"average":20.5,"strike_rate":110.81,"sixes":1,"team":"Bangalore"}

at org.apache.hadoop.hive.ql.exec.ExecMapper.map(ExecMapper.java:161)

at org.apache.hadoop.mapred.MapRunner.run(MapRunner.java:54)

at org.apache.hadoop.mapred.MapTask.runOldMapper(MapTask.java:399)

at org.apache.hadoop.mapred.MapTask.run(MapTask.java:334)

at org.apache.hadoop.mapred.YarnChild$2.run(YarnChild.java:152)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:396)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1332)

at org.apache.hadoop.mapred.YarnChild.main(YarnChild.java:147)

Caused by: org.apache.hadoop.hive.ql.metadata.HiveException: Hive Runtime Error while processing row {"id":29,"name":"PA Patel","runs":205,"high_score":57,"average":20.5,"strike_rate":110.81,"sixes":1,"team":"Bangalore"}

at org.apache.hadoop.hive.ql.exec.MapOperator.process(MapOperator.java:548)

at org.apache.hadoop.hive.ql.exec.ExecMapper.map(ExecMapper.java:143)

... 8 more

Caused by: org.apache.hadoop.hive.ql.metadata.HiveException: java.io.IOException: Mkdirs failed to create file:/tmp/training/hive_2016-01-15_20-35-09_549_8437051062805036433/_task_tmp.-ext-10001

at org.apache.hadoop.hive.ql.io.HiveFileFormatUtils.getHiveRecordWriter(HiveFileFormatUtils.java:237)

at org.apache.hadoop.hive.ql.exec.FileSinkOperator.createBucketFiles(FileSinkOperator.java:477)

at org.apache.hadoop.hive.ql.exec.FileSinkOperator.processOp(FileSinkOperator.java:525)

at org.apache.hadoop.hive.ql.exec.Operator.process(Operator.java:471)

at org.apache.hadoop.hive.ql.exec.Operator.forward(Operator.java:762)

at org.apache.hadoop.hive.ql.exec.SelectOperator.processOp(SelectOperator.java:84)

at org.apache.hadoop.hive.ql.exec.Operator.process(Operator.java:471)

at org.apache.hadoop.hive.ql.exec.Operator.forward(Operator.java:762)

at org.apache.hadoop.hive.ql.exec.TableScanOperator.processOp(TableScanOperator.java:83)

at org.apache.hadoop.hive.ql.exec.Operator.process(Operator.java:471)

at org.apache.hadoop.hive.ql.exec.Operator.forward(Operator.java:762)

at org.apache.hadoop.hive.ql.exec.MapOperator.process(MapOperator.java:531)

... 9 more

Caused by: java.io.IOException: Mkdirs failed to create file:/tmp/training/hive_2016-01-15_20-35-09_549_8437051062805036433/_task_tmp.-ext-10001

at org.apache.hadoop.fs.ChecksumFileSystem.create(ChecksumFileSystem.java:434)

at org.apache.hadoop.fs.ChecksumFileSystem.create(ChecksumFileSystem.java:420)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:805)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:786)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:685)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:674)

at org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat.getHiveRecordWriter(HiveIgnoreKeyTextOutputFormat.java:80)

at org.apache.hadoop.hive.ql.io.HiveFileFormatUtils.getRecordWriter(HiveFileFormatUtils.java:246)

at org.apache.hadoop.hive.ql.io.HiveFileFormatUtils.getHiveRecordWriter(HiveFileFormatUtils.java:234)

... 20 more

Execution failed with exit status: 2

Obtaining error information

Task failed!

Task ID:

Stage-1

Logs:

/tmp/training/hive.log

FAILED: Execution Error, return code 2 from org.apache.hadoop.hive.ql.exec.MapRedTask

Could you please help with this?

Thanks

snm1523

Created 01-15-2016 06:06 PM

ls -l /tmp/training/

Also try the below before you run your query:

set hadoop.tmp.dir=.;

And before you run the Hive CLI:

export HADOOP_CLIENT_OPTS="-Djava.io.tmpdir=."
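Note that the setting only reaches the JVM if the variable is exported; a plain assignment stays local to the shell and is not inherited by child processes such as the `hive` launcher. A small illustration of the difference, using a throwaway variable name (`DEMO_OPTS`, hypothetical) rather than the real one:

```shell
#!/usr/bin/env bash
# Sketch: a child process sees a variable only when it has been exported.
# DEMO_OPTS is a stand-in name used purely for illustration.

unset DEMO_OPTS
DEMO_OPTS="-Djava.io.tmpdir=."          # plain assignment: shell-local
plain="$(bash -c 'echo "${DEMO_OPTS:-unset}"')"

export DEMO_OPTS                         # now part of the environment
exported="$(bash -c 'echo "${DEMO_OPTS:-unset}"')"

echo "without export: $plain"
echo "with export:    $exported"
```

The first line reports `unset` and the second reports the assigned value, which is why the `export` keyword in the command above matters.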

Created on 01-15-2016 06:23 PM - edited 01-15-2016 06:56 PM

Thank you for the prompt response, Harsh.

Output of ls -l /tmp/training:

[training@localhost ~]$ ls -l /tmp/training/

total 36

-rw-rw-r-- 1 training training 3175 Jan 15 21:14 hive_job_log_training_201601152114_2081367348.txt

-rw-rw-r-- 1 training training 3175 Jan 15 21:15 hive_job_log_training_201601152115_1734130449.txt

-rw-rw-r-- 1 training training 9762 Jan 15 21:15 hive.log

-rw-rw-r-- 1 training training 15704 Jan 15 21:05 training_20160115210505_a8632532-ad5d-40c8-8265-b0147c38655c.log

Also, even after setting HADOOP_CLIENT_OPTS before running the CLI and setting hadoop.tmp.dir=.; I get the same error:

[training@localhost ~]$ HADOOP_CLIENT_OPTS="-Djava.io.tmpdir=."

[training@localhost ~]$ hive

Logging initialized using configuration in file:/etc/hive/conf.dist/hive-log4j.properties

Hive history file=/tmp/training/hive_job_log_training_201601152151_801169159.txt

hive> use case_ipl;

OK

Time taken: 1.814 seconds

hive> select * from bowling_no_partition limit 5;

OK

1 RV Uthappa Kolkata 36.4 299 16 18.68 8.15 13.7

2 GJ Maxwell Punjab 14.2 113 3 37.66 7.88 28.6

4 DR Smith Chennai 13.0 135 4 33.75 10.38 19.5

7 SK Raina Chennai 8.0 43 4 10.75 5.37 12.0

8 JP Duminy Delhi 47.5 334 13 25.69 6.98 22.0

Time taken: 0.806 seconds

hive> select name from bowling_no_partition;

Total MapReduce jobs = 1

Launching Job 1 out of 1

Number of reduce tasks is set to 0 since there's no reduce operator

WARNING: org.apache.hadoop.metrics.jvm.EventCounter is deprecated. Please use org.apache.hadoop.log.metrics.EventCounter in all the log4j.properties files.

Execution log at: /tmp/training/training_20160115215252_e2cea1ec-fc8e-46e4-ac82-6357d76ce6d1.log

Job running in-process (local Hadoop)

Hadoop job information for null: number of mappers: 1; number of reducers: 0

2016-01-15 21:52:25,188 null map = 0%, reduce = 0%

2016-01-15 21:52:41,173 null map = 100%, reduce = 0%

Ended Job = job_1452912287088_0002 with errors

Error during job, obtaining debugging information...

Examining task ID: task_1452912287088_0002_m_000000 (and more) from job job_1452912287088_0002

Unable to retrieve URL for Hadoop Task logs. Does not contain a valid host:port authority: local

Task with the most failures(4):

-----

Task ID:

task_1452912287088_0002_m_000000

URL:

Unavailable

-----

Diagnostic Messages for this Task:

Error: java.lang.RuntimeException: org.apache.hadoop.hive.ql.metadata.HiveException: Hive Runtime Error while processing row {"id":1,"name":"RV Uthappa","team":"Kolkata","overs":36.4,"runs":299,"wickets":16,"avg":18.68,"economy":8.15,"strike_rate":13.7}

at org.apache.hadoop.hive.ql.exec.ExecMapper.map(ExecMapper.java:161)

at org.apache.hadoop.mapred.MapRunner.run(MapRunner.java:54)

at org.apache.hadoop.mapred.MapTask.runOldMapper(MapTask.java:399)

at org.apache.hadoop.mapred.MapTask.run(MapTask.java:334)

at org.apache.hadoop.mapred.YarnChild$2.run(YarnChild.java:152)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:396)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1332)

at org.apache.hadoop.mapred.YarnChild.main(YarnChild.java:147)

Caused by: org.apache.hadoop.hive.ql.metadata.HiveException: Hive Runtime Error while processing row {"id":1,"name":"RV Uthappa","team":"Kolkata","overs":36.4,"runs":299,"wickets":16,"avg":18.68,"economy":8.15,"strike_rate":13.7}

at org.apache.hadoop.hive.ql.exec.MapOperator.process(MapOperator.java:548)

at org.apache.hadoop.hive.ql.exec.ExecMapper.map(ExecMapper.java:143)

... 8 more

Caused by: org.apache.hadoop.hive.ql.metadata.HiveException: java.io.IOException: Mkdirs failed to create file:/tmp/training/hive_2016-01-15_21-52-16_114_6229068466610181659/_task_tmp.-ext-10001

at org.apache.hadoop.hive.ql.io.HiveFileFormatUtils.getHiveRecordWriter(HiveFileFormatUtils.java:237)

at org.apache.hadoop.hive.ql.exec.FileSinkOperator.createBucketFiles(FileSinkOperator.java:477)

at org.apache.hadoop.hive.ql.exec.FileSinkOperator.processOp(FileSinkOperator.java:525)

at org.apache.hadoop.hive.ql.exec.Operator.process(Operator.java:471)

at org.apache.hadoop.hive.ql.exec.Operator.forward(Operator.java:762)

at org.apache.hadoop.hive.ql.exec.SelectOperator.processOp(SelectOperator.java:84)

at org.apache.hadoop.hive.ql.exec.Operator.process(Operator.java:471)

at org.apache.hadoop.hive.ql.exec.Operator.forward(Operator.java:762)

at org.apache.hadoop.hive.ql.exec.TableScanOperator.processOp(TableScanOperator.java:83)

at org.apache.hadoop.hive.ql.exec.Operator.process(Operator.java:471)

at org.apache.hadoop.hive.ql.exec.Operator.forward(Operator.java:762)

at org.apache.hadoop.hive.ql.exec.MapOperator.process(MapOperator.java:529)

... 9 more

Caused by: java.io.IOException: Mkdirs failed to create file:/tmp/training/hive_2016-01-15_21-52-16_114_6229068466610181659/_task_tmp.-ext-10001

at org.apache.hadoop.fs.ChecksumFileSystem.create(ChecksumFileSystem.java:434)

at org.apache.hadoop.fs.ChecksumFileSystem.create(ChecksumFileSystem.java:420)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:805)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:786)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:685)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:674)

at org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat.getHiveRecordWriter(HiveIgnoreKeyTextOutputFormat.java:80)

at org.apache.hadoop.hive.ql.io.HiveFileFormatUtils.getRecordWriter(HiveFileFormatUtils.java:246)

at org.apache.hadoop.hive.ql.io.HiveFileFormatUtils.getHiveRecordWriter(HiveFileFormatUtils.java:234)

... 20 more

Execution failed with exit status: 2

Obtaining error information

Task failed!

Task ID:

Stage-1

Logs:

/tmp/training/hive.log

FAILED: Execution Error, return code 2 from org.apache.hadoop.hive.ql.exec.MapRedTask

hive> set hadoop.tmp.dir=.;

hive> select name from bowling_no_partition;

Total MapReduce jobs = 1

Launching Job 1 out of 1

Number of reduce tasks is set to 0 since there's no reduce operator

WARNING: org.apache.hadoop.metrics.jvm.EventCounter is deprecated. Please use org.apache.hadoop.log.metrics.EventCounter in all the log4j.properties files.

Execution log at: /tmp/training/training_20160115215252_1aa2c785-f1ba-45db-b3c1-4a2f45a26be6.log

Job running in-process (local Hadoop)

Hadoop job information for null: number of mappers: 1; number of reducers: 0

2016-01-15 21:52:58,406 null map = 0%, reduce = 0%

2016-01-15 21:53:15,462 null map = 100%, reduce = 0%

Ended Job = job_1452912287088_0003 with errors

Error during job, obtaining debugging information...

Examining task ID: task_1452912287088_0003_m_000000 (and more) from job job_1452912287088_0003

Unable to retrieve URL for Hadoop Task logs. Does not contain a valid host:port authority: local

Task with the most failures(4):

-----

Task ID:

task_1452912287088_0003_m_000000

URL:

Unavailable

-----

Diagnostic Messages for this Task:

Error: java.lang.RuntimeException: org.apache.hadoop.hive.ql.metadata.HiveException: Hive Runtime Error while processing row {"id":1,"name":"RV Uthappa","team":"Kolkata","overs":36.4,"runs":299,"wickets":16,"avg":18.68,"economy":8.15,"strike_rate":13.7}

at org.apache.hadoop.hive.ql.exec.ExecMapper.map(ExecMapper.java:161)

at org.apache.hadoop.mapred.MapRunner.run(MapRunner.java:54)

at org.apache.hadoop.mapred.MapTask.runOldMapper(MapTask.java:399)

at org.apache.hadoop.mapred.MapTask.run(MapTask.java:334)

at org.apache.hadoop.mapred.YarnChild$2.run(YarnChild.java:152)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:396)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1332)

at org.apache.hadoop.mapred.YarnChild.main(YarnChild.java:147)

Caused by: org.apache.hadoop.hive.ql.metadata.HiveException: Hive Runtime Error while processing row {"id":1,"name":"RV Uthappa","team":"Kolkata","overs":36.4,"runs":299,"wickets":16,"avg":18.68,"economy":8.15,"strike_rate":13.7}

at org.apache.hadoop.hive.ql.exec.MapOperator.process(MapOperator.java:548)

at org.apache.hadoop.hive.ql.exec.ExecMapper.map(ExecMapper.java:143)

... 8 more

Caused by: org.apache.hadoop.hive.ql.metadata.HiveException: java.io.IOException: Mkdirs failed to create file:/tmp/training/hive_2016-01-15_21-52-49_840_1514077634964084555/_task_tmp.-ext-10001

at org.apache.hadoop.hive.ql.io.HiveFileFormatUtils.getHiveRecordWriter(HiveFileFormatUtils.java:237)

at org.apache.hadoop.hive.ql.exec.FileSinkOperator.createBucketFiles(FileSinkOperator.java:477)

at org.apache.hadoop.hive.ql.exec.FileSinkOperator.processOp(FileSinkOperator.java:525)

at org.apache.hadoop.hive.ql.exec.Operator.process(Operator.java:471)

at org.apache.hadoop.hive.ql.exec.Operator.forward(Operator.java:762)

at org.apache.hadoop.hive.ql.exec.SelectOperator.processOp(SelectOperator.java:84)

at org.apache.hadoop.hive.ql.exec.Operator.process(Operator.java:471)

at org.apache.hadoop.hive.ql.exec.Operator.forward(Operator.java:762)

at org.apache.hadoop.hive.ql.exec.TableScanOperator.processOp(TableScanOperator.java:83)

at org.apache.hadoop.hive.ql.exec.Operator.process(Operator.java:471)

at org.apache.hadoop.hive.ql.exec.Operator.forward(Operator.java:762)

at org.apache.hadoop.hive.ql.exec.MapOperator.process(MapOperator.java:529)

... 9 more

Caused by: java.io.IOException: Mkdirs failed to create file:/tmp/training/hive_2016-01-15_21-52-49_840_1514077634964084555/_task_tmp.-ext-10001

at org.apache.hadoop.fs.ChecksumFileSystem.create(ChecksumFileSystem.java:434)

at org.apache.hadoop.fs.ChecksumFileSystem.create(ChecksumFileSystem.java:420)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:805)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:786)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:685)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:674)

at org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat.getHiveRecordWriter(HiveIgnoreKeyTextOutputFormat.java:80)

at org.apache.hadoop.hive.ql.io.HiveFileFormatUtils.getRecordWriter(HiveFileFormatUtils.java:246)

at org.apache.hadoop.hive.ql.io.HiveFileFormatUtils.getHiveRecordWriter(HiveFileFormatUtils.java:234)

... 20 more

Execution failed with exit status: 2

Obtaining error information

Task failed!

Task ID:

Stage-1

Logs:

/tmp/training/hive.log

FAILED: Execution Error, return code 2 from org.apache.hadoop.hive.ql.exec.MapRedTask

hive>

Thanks

snm1523

Created 01-18-2016 06:59 PM

Hello,

I was able to find a solution to this issue. You need to add the property below to mapred-site.xml, depending on your Hadoop version:

Hadoop 1.x:

<property>

<name>mapred.job.tracker</name>

<value>localhost:9101</value>

</property>

Hadoop 2.x:

<property>

<name>mapreduce.jobtracker.address</name>

<value>localhost:9101</value>

</property>

Thanks

snm1523
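The log line `Does not contain a valid host:port authority: local` in the earlier output suggests that the job tracker address was still resolving to `local` before this change. Below is a minimal sketch for checking whether either property is already present in a config file. The helper name `has_property` and the `MAPRED_SITE` override variable are hypothetical, and the default path `/etc/hadoop/conf/mapred-site.xml` is an assumed location that may differ on your install. It does a plain text match rather than parsing the XML.

```shell
#!/usr/bin/env bash
# Sketch: report whether a Hadoop-style XML config file names a property.
# has_property is an illustrative helper; it does a fixed-string match on
# the <name> element and does not validate the XML.

has_property() {
    local file="$1" name="$2"
    [ -f "$file" ] && grep -qF "<name>${name}</name>" "$file"
}

# MAPRED_SITE is a hypothetical override; the default path is an assumption.
conf="${MAPRED_SITE:-/etc/hadoop/conf/mapred-site.xml}"
for prop in mapred.job.tracker mapreduce.jobtracker.address; do
    if has_property "$conf" "$prop"; then
        echo "$prop: present in $conf"
    else
        echo "$prop: not found in $conf"
    fi
done
```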