Cloudera 5.4.x cluster randomly reports "Clock Offset Bad" with working NTP Server

Created on 08-24-2015 06:32 PM - edited 09-16-2022 02:39 AM

Cloudera Community,

I have two different Cloudera Manager (CM) managed clusters that I upgraded from 5.3.3 to 5.4.1 and 5.4.3, respectively. On both clusters I get random "Clock Offset Bad" messages throughout the day. I checked the times on all nodes and they are all in sync. I also checked the NTP configuration and it appears to be correct. I did not have these issues when running 5.3.3. How does the CM agent check for a bad clock offset? Did something change between 5.3.3 and 5.4.x?

I'm running:

Cluster 1

OS: Red Hat Enterprise Linux 6.6

CDH: 5.4.1

CM: 5.4.1

Cluster 2

OS: Red Hat Enterprise Linux 6.6

CDH: 5.4.3

CM: 5.4.3

Thanks.

Created on 11-16-2015 11:20 PM - edited 11-16-2015 11:33 PM

Hi,

I had the same issue on a 10-node cluster built on a VMware hypervisor, with all 10 VMs running on that hypervisor.

I did not see the clock offset issue when I built a Cloudera cluster on physical machines without a hypervisor. I suspect a time-sync latency of about 3 minutes when running on the hypervisor.

Please let us know whether running a Hadoop cluster on a hypervisor is recommended.

Created 10-06-2016 07:45 AM

Sometimes these are false alerts. Can you check your system load at the time you received these alerts (CLOCK_OFFSET, DNS_HOST_RESOLUTION, WEB_METRIC, etc.)?

sar -q -f /var/log/sa/sa10

Use the command above, replacing sa10 with the file for the day on which you received the alerts.
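As a small illustration of picking the right file, the sketch below builds the sar command for a given date. It assumes the default sysstat naming on RHEL 6, where the file suffix is the two-digit day of the month; the helper names `sa_file_for` and `sar_load_command` are hypothetical.

```python
from datetime import date


def sa_file_for(day: date, sa_dir: str = "/var/log/sa") -> str:
    """Return the sysstat data file for a day, assuming the saDD naming scheme."""
    return f"{sa_dir}/sa{day.day:02d}"


def sar_load_command(day: date) -> list:
    """Build the argument list for the load-average report of that day."""
    return ["sar", "-q", "-f", sa_file_for(day)]


if __name__ == "__main__":
    # Example: alerts were received on 10 October 2016.
    print(" ".join(sar_load_command(date(2016, 10, 10))))
```

Note that sysstat normally keeps only a limited number of days of history, so older files may already have been rotated away.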

Track down the load and check what unusual thing happened on that host during that window.

If you see a spike in the load, check the disk I/O utilization to see whether any spindles are reaching 100%. If they are, the system load is the culprit.

You may need to increase the thresholds for that particular host in CM: Hosts > All Hosts > select the host name > Configuration, then look for Host Clock Offset Thresholds or Host DNS Resolution Duration Thresholds.

With the current thresholds, a host under high load may pause for a while or send a delayed response to the scm-server (the response includes the host's health check reports while the CM scm-agent is running). When the scm-server does not receive these health check reports in time because the host is busy, alerts flood your inbox.

Created 10-06-2016 08:29 AM

Hello everyone,

A few things based on previous comments:

(1)

The = at the beginning indicates that the ntpd daemon on that host is polling the NTP server to get time. It does not mean it is synchronized, though. Any host in the list of NTP servers can be used to synchronize time.

(2)

If there is no * at the beginning of a line in the output when the agent runs "ntpdc -np", you will get an offset alert if you have enabled them.

(3)

Hadoop functions require that times be synchronized as closely as possible, so the intent of the Clock Offset health alert is to inform cluster administrators that there could be a problem. If you verify that there is no problem (perhaps the NTP server is having some temporary trouble), you can adjust the thresholds or disable the alert altogether until the problem is resolved.

(4)

What Naveen1 refers to is when the agent host is overwhelmed and cannot service the agent's health check requests in due time, so multiple health checks can become bad or unknown. I would not consider them 'false', since something as simple as getting NTP info or resolving a hostname really did fail. In that case, the host is likely overloaded to the point where it is in crisis and basic commands cannot run. To me that warrants some investigation into host/service tuning, adding RAM, adding new hosts to the cluster, etc., as it is unlikely to improve on its own and will cause more severe service problems in your cluster.

If you only see the clock offset health alert failing by itself, it is either:

- the offset exceeds the threshold configured in CM's Host Clock Offset Thresholds

- ntpdc -np output did not have a "*" at the beginning of a line

For either, you can decide whether you want to receive the alerts once you have confirmed that system time is in sync across the cluster. To shut off the alerts, set:

"Host Clock Offset Thresholds" Critical: Never

BOTTOM LINE:

The alert is there to warn you that it seems something very bad is happening so you can check it out. If you know things are OK or don't care, you can shut off the alert.

If you want the alerts on, then ntpdc -np needs to return expected results. ntpdc queries ntpd, so ntpd needs to be healthy, too.
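The two failure conditions described above can be sketched in Python. This is only an illustration, not the Cloudera Manager agent's actual code: the sample output, the assumed column layout (offset in seconds in the seventh column), the function names, and the threshold values are all assumptions.

```python
from typing import Optional

# Hypothetical "ntpdc -np" output; addresses and values are made up.
SAMPLE = """\
     remote           local      st poll reach  delay   offset    disp
=======================================================================
=10.0.0.2        10.0.0.5         3 1024  377 0.00101  0.001200 0.13000
*10.0.0.1        10.0.0.5         3 1024  377 0.00093 -0.000790 0.12741
"""


def synced_offset_seconds(ntpdc_output: str) -> Optional[float]:
    """Return the offset of the peer marked with '*', or None if there is none."""
    for line in ntpdc_output.splitlines():
        if line.startswith("*"):
            fields = line.split()
            return float(fields[6])  # assumed position of the offset column
    return None


def clock_offset_status(ntpdc_output: str, warn_s: float, crit_s: float) -> str:
    """Classify the offset against warning and critical thresholds (seconds)."""
    offset = synced_offset_seconds(ntpdc_output)
    if offset is None:
        return "BAD: no synchronized peer"
    if abs(offset) >= crit_s:
        return "BAD: offset exceeds critical threshold"
    if abs(offset) >= warn_s:
        return "CONCERNING: offset exceeds warning threshold"
    return "GOOD"


if __name__ == "__main__":
    # Threshold values here are placeholders, not CM defaults.
    print(clock_offset_status(SAMPLE, warn_s=0.5, crit_s=10.0))
```

Running the same check by hand on an affected host at the moment an alert fires shows which of the two conditions triggered it.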

NOTE: chrony is supported in Cloudera Manager 5.8 and up, so if you use chrony, support should be seamless.

Cheers,

Ben

Created 10-06-2016 09:13 AM

Created 11-14-2016 12:25 PM

We are getting the same issue after upgrading from CDH 5.2 to CDH 5.7.

It looks like this is an issue with CDH 5.4.x and above (I am not sure whether it also occurs for those who install CDH 5.4.x or above directly, without upgrading from a lower version).

I don't want to shut off this alert. Please let me know if there is any other way to fix this issue.

Thanks

Kumar

Created 06-07-2017 09:01 PM

Hi

I was able to fix the issue. We have a dedicated switch for the Hadoop cluster, and I configured the NTP server to sync with the switch. It all works fine now 🙂

Not everyone may be able to do this, but I would suggest configuring an NTP server for accurate time sync.

Alternatively, you might set up a dedicated NTP server for the Hadoop cluster. Usually, NTP sync should also work fine with domain controllers.

[root@blrrndaclxx ~]# ntpq -p
     remote           refid      st t when poll reach   delay   offset  jitter
==============================================================================
*ntpbigdata.rnd. 172.xx.xx.111    4 u  574 1024  377    0.931   -0.790   3.597
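For readers unfamiliar with this output: the leading * marks the peer that ntpd is currently synchronized to, ntpq reports the offset in milliseconds, and the reach column is an octal bitmask of the last eight polls. The sketch below decodes that bitmask; the function names are hypothetical.

```python
def decode_reach(reach_octal: str) -> list:
    """Decode the octal 'reach' register into eight booleans.

    Index 0 is the most recent poll; True means the poll got a reply.
    """
    value = int(reach_octal, 8)
    return [bool((value >> i) & 1) for i in range(8)]


def successful_polls(reach_octal: str) -> int:
    """Count how many of the last eight polls succeeded."""
    return sum(decode_reach(reach_octal))


if __name__ == "__main__":
    # 377 (octal) is 11111111 in binary: all of the last eight polls succeeded.
    print(successful_polls("377"))
```

A reach value of 377, as in the output above, means the server answered every one of the last eight polls.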

Created 07-06-2017 01:54 AM

I used an NTP server on Ubuntu 16.04 and hit the same problem. It seems that ntp disables mode 7 by default in this version, so it does not support the command ntpdc -np. I edited /etc/ntp.conf and added this as the first line:

enable mode7

Then I restarted ntp, and it worked fine.
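To check several hosts for this setting, something like the following sketch could be used. It assumes the directive is spelled as the single token `mode7` on an `enable` or `disable` line, as in the ntp.conf documentation, and that the last matching line wins; `mode7_enabled` is a hypothetical helper, not part of any ntp tooling.

```python
def mode7_enabled(conf_text: str) -> bool:
    """Return True if the configuration text enables mode 7 queries."""
    enabled = False
    for raw in conf_text.splitlines():
        # Drop trailing comments, then split the directive into tokens.
        tokens = raw.split("#", 1)[0].split()
        if len(tokens) >= 2 and tokens[0] in ("enable", "disable"):
            if "mode7" in tokens[1:]:
                enabled = tokens[0] == "enable"
    return enabled


if __name__ == "__main__":
    example = "enable mode7\nserver 0.pool.ntp.org iburst\n"
    print(mode7_enabled(example))
```

On a real host the text would come from reading /etc/ntp.conf.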

Created 02-15-2018 03:33 PM

Hi,

I have the same clock offset issue. I use a Cloudera QuickStart VM with:

CDH 5.12

Red Hat Enterprise Linux 6 (64 bits)

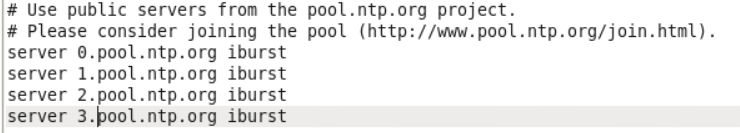

My VM is hosted somewhere on OVH servers, so to be safe I simply used the following in my ntp.conf file:

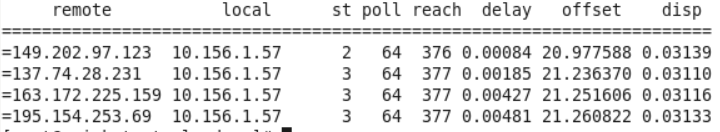

I restarted my ntp daemon with "service ntp restart". For a while the sync is OK with one of the NTP servers, and then it is lost after about a minute. Here is the output of ntpdc -p:

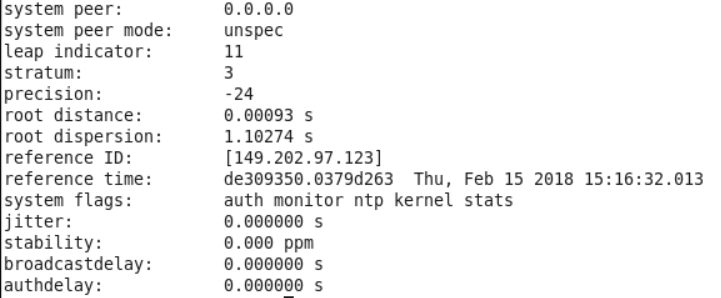

and ntpdc -c sysinfo:

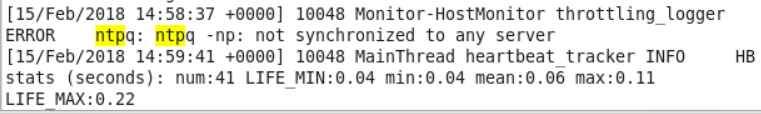

In the /var/log/cloudera-scm-agent/cloudera-scm-agent.log file, I don't see the ntpq command, but there are still some warnings from the host manager about having no NTP server in sync:

My drift in /var/lib/ntp/drift is constant and equal to 499.985.

Any ideas, please?

Best regards, Sélim
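One observation on the drift value reported above: ntpd limits its frequency correction to roughly ±500 PPM, so a drift file pinned at 499.985 suggests the daemon has hit that limit and cannot discipline the clock, which is a pattern sometimes seen on virtual machines. The sketch below flags such a value; the 1 PPM margin and the function name are assumptions.

```python
MAX_FREQ_PPM = 500.0  # ntpd's frequency correction limit, in parts per million


def drift_is_saturated(drift_text: str, margin_ppm: float = 1.0) -> bool:
    """Return True if the drift file value sits at ntpd's frequency limit."""
    ppm = float(drift_text.split()[0])
    return abs(ppm) >= MAX_FREQ_PPM - margin_ppm


if __name__ == "__main__":
    # Value taken from the post above; normally read from /var/lib/ntp/drift.
    print(drift_is_saturated("499.985"))
```

If the check returns True, the underlying clock source (for a VM, the hypervisor's timekeeping) is worth investigating before tuning ntpd or the CM thresholds.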
