Cloudera Community: Community Articles

Created on 12-14-2018 06:45 PM - edited 08-17-2019 05:22 AM

IoT Series: Sensors: Utilizing Breakout Garden Hat: Part 1 - Introduction

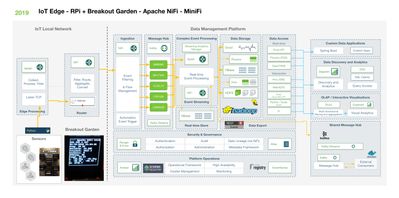

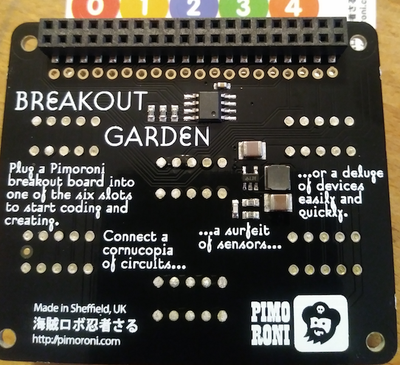

An easy option for adding, removing and prototyping sensor reads on a standard Raspberry Pi with no special wiring.

Hardware Component List:

- Raspberry Pi

- USB Power Cable

- Pimoroni Breakout Garden Hat

- 1.12" Mono OLED Breakout 128x128 White/Black Screen

- BME680 Air Quality, Temperature, Pressure, Humidity Sensor

- LSM303D 6DoF Motion Sensor (X, Y, Z Axes)

- BH1745 Luminance and Color Sensor

- LTR-559 Light and Proximity Sensor 0.01 lux to 64,000 lux

- VL53L1X Time of Flight (TOF) Sensor Pew Pew Lasers!

Software Component List:

- Raspbian

- Python 2.7

- Java 8 (JDK)

- Apache NiFi

- MiniFi

Source Code:

https://github.com/tspannhw/minifi-breakoutgarden

- Shell Script (https://github.com/tspannhw/minifi-breakoutgarden/blob/master/runbrk.sh)

- Python (https://github.com/tspannhw/minifi-breakoutgarden/blob/master/brk.py)

Summary

Our Raspberry Pi has a Breakout Garden Hat with five sensors and one small display. The display shows the latest reading and updates constantly. For debugging purposes, it also shows the IP address so I can connect as needed.
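One common way to find the IP address to put on the display is a socket trick. The following is a minimal sketch, not necessarily what brk.py does. "Connecting" a UDP socket sends no packets. It only makes the OS choose an outbound route, and `getsockname()` then reports the local address. The probe address 8.8.8.8 is an arbitrary assumption, and the function falls back to 127.0.0.1 when no route exists.

```python
import socket


def get_ip_address(probe_host="8.8.8.8", probe_port=80):
    """Return the IPv4 address of the interface used for outbound traffic.

    connect() on a UDP socket sends nothing; it only selects a route, after
    which getsockname() reports the local address. The probe host is an
    arbitrary assumption. Falls back to loopback when there is no route.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        sock.connect((probe_host, probe_port))
        return sock.getsockname()[0]
    except (OSError, socket.error):
        return "127.0.0.1"
    finally:
        sock.close()


if __name__ == "__main__":
    print(get_ip_address())
```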

We currently run the script via nohup; once it goes into constant use, I will switch to a Linux service that runs on startup.

The Python script initializes connections to all of the sensors and then enters an infinite loop. On each pass it reads their values, builds a JSON packet and sends it via TCP/IP over port 5005 to a listener. The MiniFi 0.5.0 Java agent uses ListenTCP on that port to capture these messages and filter them based on alarm values. If a reading is outside the checked parameters, we send the message via Site-to-Site (S2S) over HTTP(S) to an Apache NiFi server.
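Here is a minimal sketch of the sending side. It assumes newline-delimited JSON: ListenTCP splits incoming data on a line separator, so one JSON object per line is a safe framing. The helper names, the one-second interval and the `read_sensors` callable are hypothetical, and this is not the code in brk.py. It avoids Python 3-only syntax, so it should also run under the Python 2.7 listed above.

```python
import json
import socket
import time
import uuid


def build_record(readings):
    """Copy a dict of sensor readings and add an id and a local timestamp."""
    record = dict(readings)
    record["uuid"] = str(uuid.uuid4())
    record["systemtime"] = time.strftime("%m/%d/%Y %H:%M:%S")
    return record


def send_record(record, host="127.0.0.1", port=5005, timeout=5.0):
    """Send one record as a single newline-terminated JSON line over TCP."""
    payload = (json.dumps(record) + "\n").encode("utf-8")
    sock = socket.create_connection((host, port), timeout=timeout)
    try:
        sock.sendall(payload)
    finally:
        sock.close()


def run_forever(read_sensors, interval=1.0, **send_kwargs):
    """Hypothetical main loop: read, wrap, send, sleep, repeat."""
    while True:
        try:
            send_record(build_record(read_sensors()), **send_kwargs)
        except (OSError, socket.error) as err:
            # Keep looping if the MiniFi listener is briefly unavailable.
            print("send failed: %s" % err)
        time.sleep(interval)
```

On the Pi, `run_forever(my_read_sensors)` (with `my_read_sensors` being whatever returns a dict of readings) would stream one record per second to the agent on port 5005. Opening a short-lived connection per record keeps the sketch simple. A long-lived connection would also work, because ListenTCP splits messages on newlines rather than on connections.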

We also have a USB webcam (Sony PlayStation 3 Eye) capturing images; MiniFi reads those and sends them to NiFi as well.

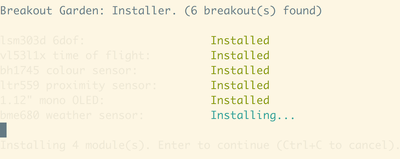

The first step is easy: plug the Pimoroni Breakout Garden Hat into the Raspberry Pi and connect our six breakouts (five sensors plus the OLED display).

Next, do the standard installation of Python, Java 8 and MiniFi; I also recommend installing OpenCV. Make sure everything is plugged in securely and in the correct orientation before you power on the Raspberry Pi.

Download MiniFi (Java) here: https://nifi.apache.org/minifi/download.html

Install Python pip:

curl https://bootstrap.pypa.io/get-pip.py -o get-pip.py

sudo python get-pip.py

Install the Breakout Garden library:

wget https://github.com/pimoroni/breakout-garden/archive/master.zip

unzip master.zip

cd breakout-garden-master

sudo ./install.sh
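Once everything is connected, it can help to confirm that each breakout answers on the I2C bus before you start MiniFi. The sketch below probes a small table of addresses with the `smbus` module (typically installed as python-smbus on Raspbian). The addresses are the chips' usual defaults as I understand them. Some breakouts have an alternate address selected by a jumper, so check each product page. The table and function names are illustrative.

```python
# Usual default I2C addresses (assumed; some breakouts offer an alternate).
EXPECTED_DEVICES = {
    0x1D: "LSM303D motion sensor",
    0x23: "LTR-559 light/proximity sensor",
    0x29: "VL53L1X time-of-flight sensor",
    0x38: "BH1745 colour sensor",
    0x3C: "1.12in OLED display",
    0x76: "BME680 air quality sensor",
}


def report(present):
    """Split the expected devices into (found, missing) name lists."""
    found = sorted(n for a, n in EXPECTED_DEVICES.items() if a in present)
    missing = sorted(n for a, n in EXPECTED_DEVICES.items() if a not in present)
    return found, missing


def scan(bus_number=1):
    """Return the expected addresses that acknowledge a one-byte read."""
    import smbus  # imported here so report() works without the hardware
    bus = smbus.SMBus(bus_number)
    present = set()
    try:
        for addr in EXPECTED_DEVICES:
            try:
                bus.read_byte(addr)
                present.add(addr)
            except IOError:
                pass  # no device acknowledged this address
    finally:
        bus.close()
    return present
```

On the Pi, `print(report(scan()))` lists what was found and what is missing. `i2cdetect -y 1` from i2c-tools gives the same information for every address.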

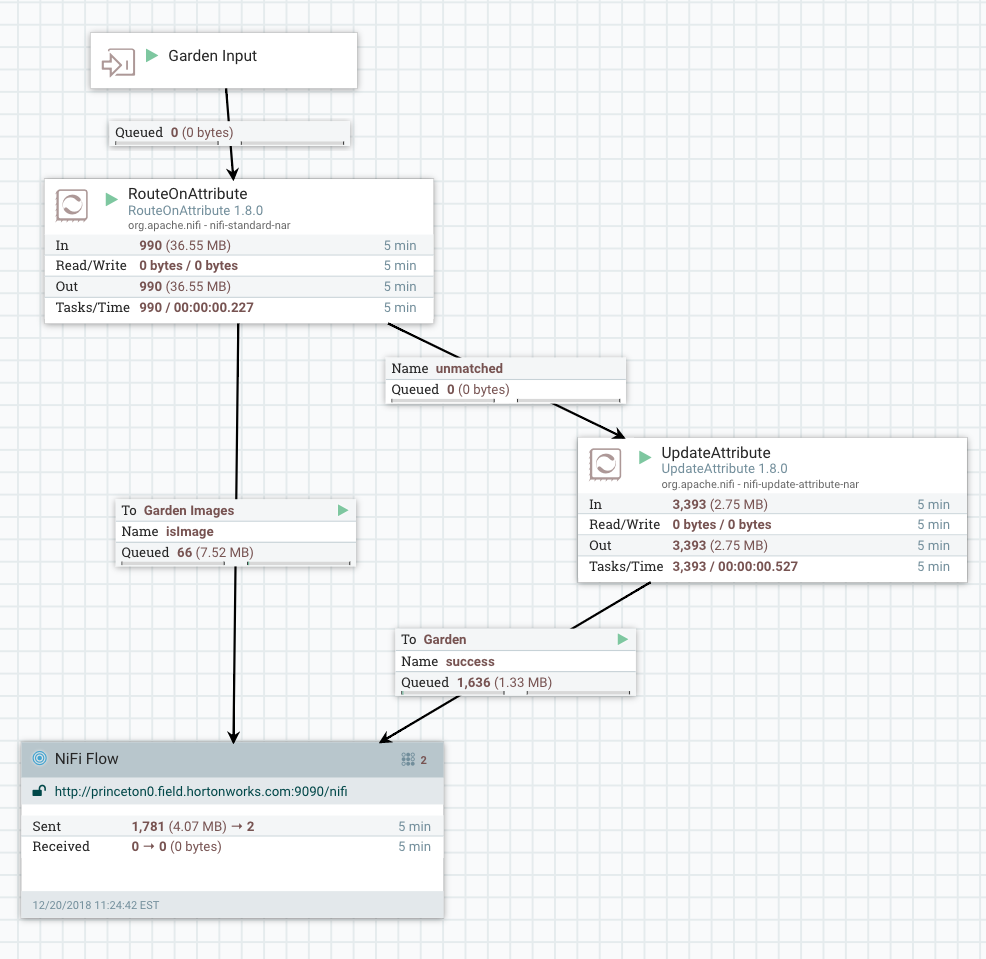

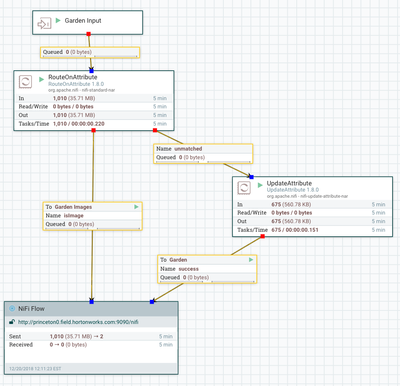

Running In NiFi

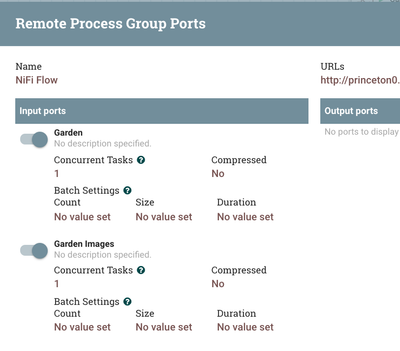

First we build our MiniFi Flow:

We have two objectives: listen for TCP/IP JSON messages from our running Python sensor collector and gather images captured by the PS3 Eye USB Webcam.

We then add content type and schema information to the attributes. We also extract a few values from the JSON stream to use for alerting.

We extract: $.cputemp, $.VL53L1X_distance_in_mm, $.bme680_humidity, $.bme680_tempf

These values are now attached to our flowfile as attributes; the flowfile content itself is unchanged. We can now route on them.

We route on a few alarm conditions:

${cputemp:gt(100)}

${humidity:gt(60)}

${tempf:gt(80)}

${distance:gt(0)}
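The extract-and-route step can be mirrored in plain Python, which is handy for trying out thresholds before you change the flow. This is a sketch, not the MiniFi flow itself. In NiFi terms it corresponds roughly to EvaluateJsonPath plus RouteOnAttribute, although the article does not name the processors. The attribute names follow the expressions above. The mapping from each JSON field to its attribute name is an assumption.

```python
import json

# JSON field -> attribute name used in the alarm expressions (assumed mapping).
EXTRACTS = {
    "cputemp": "cputemp",
    "VL53L1X_distance_in_mm": "distance",
    "bme680_humidity": "humidity",
    "bme680_tempf": "tempf",
}

# Mirrors ${cputemp:gt(100)}, ${humidity:gt(60)}, ${tempf:gt(80)}, ${distance:gt(0)}.
THRESHOLDS = {"cputemp": 100, "humidity": 60, "tempf": 80, "distance": 0}


def extract_attributes(json_text):
    """Pull the alerting fields out of one record as floats; the record is untouched."""
    record = json.loads(json_text)
    return {attr: float(record[field])
            for field, attr in EXTRACTS.items() if field in record}


def triggered_alarms(attributes, thresholds=THRESHOLDS):
    """Return the conditions whose value is strictly greater than its limit."""
    return sorted(name for name, limit in thresholds.items()
                  if name in attributes and attributes[name] > limit)
```

The conversion to float matters because the example record carries some readings as strings (`"82.74"`) and others as numbers (`134`). Fed the example record below, this flags `tempf` (82.74 > 80) and `distance` (134 > 0).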

We can easily add more conditions or change the threshold values. We could also populate these thresholds from an HTTP or file lookup.
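Loading the thresholds from a file could look like the following sketch. The file name and JSON layout are hypothetical, and an HTTP lookup could return the same JSON object instead. Values in the file override built-in defaults, so a partial file still yields a complete set. A misspelled key is rejected rather than silently ignored.

```python
import json

# Defaults mirror the alarm expressions used in the flow.
DEFAULT_THRESHOLDS = {"cputemp": 100.0, "humidity": 60.0, "tempf": 80.0, "distance": 0.0}


def load_thresholds(path, defaults=DEFAULT_THRESHOLDS):
    """Merge thresholds from a JSON file such as {"tempf": 85} over the defaults.

    A missing or unreadable file falls back to the defaults; unknown keys raise
    ValueError so that a typo cannot quietly disable an alarm.
    """
    thresholds = dict(defaults)
    try:
        with open(path) as handle:
            overrides = json.load(handle)
    except IOError:
        return thresholds
    unknown = set(overrides) - set(defaults)
    if unknown:
        raise ValueError("unknown threshold(s): %s" % ", ".join(sorted(unknown)))
    for name, value in overrides.items():
        thresholds[name] = float(value)
    return thresholds
```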

If any of these conditions is met, we send the flowfile to our local Apache NiFi router. The router can then do further analysis with the fuller NiFi processor set, including TensorFlow, MXNet, record processing and lookups.

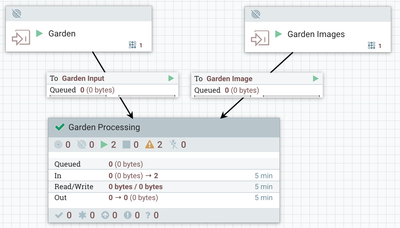

Local NiFi Routing

For now, we are just splitting the images from the JSON and sending each to a different remote port on our cloud NiFi cluster.

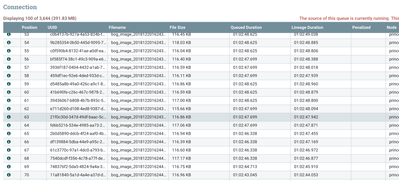

These then arrive in the cloud.

Here you can see a list of the flowfiles waiting to be processed (I haven't written that part yet). We are getting a few per second. If we needed to, we could scale to something like 100,000 a second by adding nodes, and Cloudbreak can do that scaling for you.

In part 2, we will start processing these data streams and images. We will also add Apache MXNet and TensorFlow at various points on the edge, router and cloud, using Python and the built-in deep learning NiFi processors I have authored. We will also break apart these records and send each sensor's values to its own Kafka topic to be processed with Kafka Streams, Druid, Hive and HBase.
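Breaking a record apart per sensor could be sketched as grouping its fields by name prefix. Topic names follow the sensor names (bme680, bh1745, lsm303d, vl53l1x, ltr559). The prefix-matching rule and the shared fields copied into every message (uuid, host, systemtime) are assumptions, not the part 2 implementation. Publishing to Kafka is left out.

```python
# Field-name prefix (lower-cased) -> Kafka topic name.
SENSOR_TOPICS = {
    "bme680_": "bme680",
    "bh1745_": "bh1745",
    "lsm303d_": "lsm303d",
    "vl53l1x_": "vl53l1x",
    "ltr559_": "ltr559",
}

# Copied into every per-sensor message so the pieces can be re-joined later (assumed).
SHARED_FIELDS = ("uuid", "host", "systemtime")


def split_by_sensor(record):
    """Return {topic: message}, one message per sensor present in the record."""
    messages = {}
    for key, value in record.items():
        for prefix, topic in SENSOR_TOPICS.items():
            if key.lower().startswith(prefix):
                if topic not in messages:
                    messages[topic] = {f: record[f] for f in SHARED_FIELDS if f in record}
                messages[topic][key] = value
                break
    return messages
```

Matching on the lower-cased name handles the mixed casing in the record (`BH1745_red`, `VL53L1X_distance_in_mm`, `bme680_tempf`). Each message could then go to its topic with any Kafka producer, for example a PublishKafka processor in NiFi.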

As part of our loop, we also write the current values to our little screen.
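Writing to the screen could be done with luma.oled, which appears in the references. This sketch assumes the 1.12" breakout is an SH1106 at I2C address 0x3C and a luma.oled version whose `sh1106` driver accepts a 128x128 size. `rotate=2` is a guess about how the breakout is mounted. Which readings to show, and the 21-character line limit, are illustrative choices.

```python
# Readings to show, as (label, JSON field) pairs; the selection is illustrative.
DISPLAY_FIELDS = [
    ("IP", "ipaddress"),
    ("Temp F", "bme680_tempf"),
    ("Hum %", "bme680_humidity"),
    ("Dist mm", "VL53L1X_distance_in_mm"),
    ("Lux", "ltr559_lux"),
    ("CPU C", "cputemp"),
]


def format_lines(record, max_chars=21):
    """Turn a record into short 'label: value' lines, truncated to max_chars.

    21 characters assumes PIL's default font (about 6 pixels wide) on 128 pixels.
    """
    lines = []
    for label, key in DISPLAY_FIELDS:
        if key in record:
            lines.append("{0}: {1}".format(label, record[key])[:max_chars])
    return lines


def open_display():
    """Create the luma.oled device for the OLED breakout (needs the hardware)."""
    from luma.core.interface.serial import i2c
    from luma.oled.device import sh1106
    # Address 0x3C, the 128x128 size and rotate=2 are assumptions about this breakout.
    return sh1106(i2c(port=1, address=0x3C), width=128, height=128, rotate=2)


def show(device, lines, line_height=12):
    """Redraw the whole screen with one text line per row."""
    from luma.core.render import canvas
    with canvas(device) as draw:
        for row, text in enumerate(lines):
            draw.text((0, row * line_height), text, fill="white")
```

Creating the device once with `open_display()` and calling `show(device, format_lines(record))` on every pass avoids re-initializing the display each time.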

Example Record

{
  "systemtime" : "12/19/2018 22:15:56",
  "BH1745_green" : "4.0",
  "ltr559_prox" : "0000",
  "end" : "1545275756.7",
  "uuid" : "20181220031556_e54721d6-6110-40a6-aa5c-72dbd8a8dcb2",
  "lsm303d_accelerometer" : "+00.06g : -01.01g : +00.04g",
  "imgnamep" : "images/bog_image_p_20181220031556_e54721d6-6110-40a6-aa5c-72dbd8a8dcb2.jpg",
  "cputemp" : 51.0,
  "BH1745_blue" : "9.0",
  "te" : "47.3427119255",
  "bme680_tempc" : "28.19",
  "imgname" : "images/bog_image_20181220031556_e54721d6-6110-40a6-aa5c-72dbd8a8dcb2.jpg",
  "bme680_tempf" : "82.74",
  "ltr559_lux" : "006.87",
  "memory" : 34.9,
  "VL53L1X_distance_in_mm" : 134,
  "bme680_humidity" : "23.938",
  "host" : "vid5",
  "diskusage" : "8732.7",
  "ipaddress" : "192.168.1.167",
  "bme680_pressure" : "1017.31",
  "BH1745_clear" : "10.0",
  "BH1745_red" : "0.0",
  "lsm303d_magnetometer" : "+00.04 : +00.34 : -00.10",
  "starttime" : "12/19/2018 22:15:09"
}
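The example record hints at how its identifiers are built. The `uuid` field begins with a UTC timestamp: 20181220031556 matches the `end` epoch 1545275756.7. `systemtime` appears to be local time, five hours behind. A random UUID follows the timestamp, and both image names reuse the combined id. The sketch below reproduces that naming. It is inferred from the record and not confirmed against brk.py.

```python
import time
import uuid


def make_ids(epoch_seconds=None, random_id=None):
    """Build the uuid and image-name fields in the style of the example record.

    The record id is a UTC timestamp (YYYYmmddHHMMSS) joined to a random UUID.
    """
    if epoch_seconds is None:
        epoch_seconds = time.time()
    if random_id is None:
        random_id = str(uuid.uuid4())
    stamp = time.strftime("%Y%m%d%H%M%S", time.gmtime(epoch_seconds))
    record_id = "{0}_{1}".format(stamp, random_id)
    return {
        "uuid": record_id,
        "imgname": "images/bog_image_{0}.jpg".format(record_id),
        "imgnamep": "images/bog_image_p_{0}.jpg".format(record_id),
    }
```

Calling `make_ids(1545275756.7, "e54721d6-6110-40a6-aa5c-72dbd8a8dcb2")` reproduces the three values in the record above. Putting the timestamp first makes the image files sort chronologically by name.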

NiFi Templates

Let's Build Those Topics Now

/usr/hdp/current/kafka-broker/bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic bme680

/usr/hdp/current/kafka-broker/bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic bh1745

/usr/hdp/current/kafka-broker/bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic lsm303d

/usr/hdp/current/kafka-broker/bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic vl53l1x

/usr/hdp/current/kafka-broker/bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic ltr559

Hopefully your environment can support a replication factor of 3, 5 or 7 and many partitions. I have only one Kafka broker, so this is what we are starting with.

Reference

- https://shop.pimoroni.com/collections/breakout-garden

- https://github.com/pimoroni/breakout-garden/tree/master/examples

- https://shop.pimoroni.com/products/breakout-garden-hat

- https://github.com/pimoroni/bme680-python

- https://learn.pimoroni.com/bme680

- https://shop.pimoroni.com/products/bh1745-luminance-and-colour-sensor-breakout

- https://github.com/pimoroni/bh1745-python

- https://shop.pimoroni.com/products/vl53l1x-breakout

- https://github.com/pimoroni/vl53l1x-python/tree/master/examples

- https://shop.pimoroni.com/products/ltr-559-light-proximity-sensor-breakout

- https://github.com/pimoroni/ltr559-python

- https://shop.pimoroni.com/products/bme680-breakout

- https://github.com/pimoroni/bme680

- https://shop.pimoroni.com/products/lsm303d-6dof-motion-sensor-breakout

- https://github.com/pimoroni/lsm303d-python

- https://shop.pimoroni.com/products/1-12-oled-breakout

- https://github.com/rm-hull/luma.oled

- http://www.diegoacuna.me/how-to-run-a-script-as-a-service-in-raspberry-pi-raspbian-jessie/