Host Monitor Fails To Start on Host After Spark2 upgrade

Labels: Apache Kafka, Apache Spark, Cloudera Manager

Created on 02-28-2019 01:19 PM - edited 09-16-2022 07:12 AM

All-

I have a cluster of 3 VMs in a lab. Memory/RAM is not an issue. I recently went to upgrade to Spark 2.3, which I have done before without issues. Originally I could not get Spark2 to start: it would fail on the 7th of 8 configuration updates (Kafka). Unfortunately, I seem to be having numerous issues this time around. In researching my issue with Spark 2 not starting, I came across the following post: https://community.cloudera.com/t5/Cloudera-Manager-Installation/deployClientConfig-on-service-Spark-...

One of the employee posts said the following:

We've seen this issue occasionally; you can work around the problem by deleting any empty alternatives under /var/lib/alternatives and restarting cloudera-scm-agent ("service cloudera-scm-agent restart"). The agent will then recreate any missing alternatives.
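Before deleting anything, it may help to list which files under /var/lib/alternatives are actually empty. The following is a minimal sketch, not part of the quoted workaround; the function name find_empty_files is made up for illustration, and it only reports files, it does not remove them.

```python
import os


def find_empty_files(directory):
    """Return sorted paths of zero-byte regular files directly inside directory."""
    empty = []
    for name in os.listdir(directory):
        path = os.path.join(directory, name)
        # Only plain files count; subdirectories and symlinks are skipped.
        if os.path.islink(path) or not os.path.isfile(path):
            continue
        if os.path.getsize(path) == 0:
            empty.append(path)
    return sorted(empty)

# Example call (directory taken from the quoted workaround):
#   for path in find_empty_files("/var/lib/alternatives"):
#       print(path)
```

Reviewing the printed list first makes it easier to see exactly what would be removed before restarting the agent.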

This was the "beginning of the end" for me. I did exactly what it stated, but upon restarting the agent I had numerous issues within the Cloudera UI, mostly related to the Host Monitor and Service Monitor not loading, but only on VM1; VM2 and VM3 start and restart just fine. For whatever reason, rebooting also seems to have messed up my MySQL server auditing plugin. MySQL wouldn't start, which I traced back to the server auditing plugin being set to on while its .so file was missing. I had to manually reinstall it, comment out the server auditing section in my.cnf, reinstall the server auditing plugin, and restart. I have no clue how any of this was removed, as nothing had been touched in MySQL for some time. Anyway, I finally got MySQL running again, but there is still no Host Monitor for VM1.

The logs have been mostly unhelpful and, for the most part, appear consistent with the logs on the working VMs. The cloudera-scm-server logs don't state much of anything except an error about /cmf/role/80/tail, yet I have no /cmf directory on any of my VMs, even the ones with working agents.

The agent logs just show a bunch of informational messages that don't seem relevant and then end with "WARNING: Stopping Daemon" every time I try to restart the agent.

The firehose logs don't seem to have been updated for a week, but the last entries in the Host Monitor log are:

2019-02-22 16:00:39,241 INFO com.cloudera.cmf.cdhclient.util.CDHUrlClassLoader: Detected that this program is running in a JAVA 1.8.0_172 JVM. CDH5 jars will be loaded from:lib/cdh5

2019-02-22 16:00:39,511 INFO com.cloudera.enterprise.ssl.SSLFactory: Using configured truststore for verification of server certificates in HTTPS communication.

2019-02-22 16:00:39,895 INFO com.cloudera.cmf.BasicScmProxy: Using encrypted credentials for SCM

2019-02-22 16:00:39,910 INFO com.cloudera.cmf.BasicScmProxy: Authenticated to SCM.

2019-02-22 16:00:39,916 INFO com.cloudera.cmf.BasicScmProxy: Failed request to SCM: 302

2019-02-22 16:00:39,916 WARN com.cloudera.cmon.firehose.Main: No descriptor fetched from https://*TRUNCATED** on after 1 tries, sleeping for 2 secs

2019-02-22 16:00:40,068 WARN com.cloudera.cmf.event.publish.EventStorePublisherWithRetry: Failed to publish event: SimpleEvent{attributes={ROLE=[mgmt-HOSTMONITOR-ebd5bcd145a4882ab03d338b502c108c], HOSTS=[*TRUNCATED**], ROLE_TYPE=[HOSTMONITOR], CATEGORY=[LOG_MESSAGE], EVENTCODE=[EV_LOG_EVENT], SERVICE=[mgmt], SERVICE_TYPE=[MGMT], LOG_LEVEL=[WARN], HOST_IDS=[*TRUNCATED**], SEVERITY=[IMPORTANT]}, content=No descriptor fetched from http://*TRUNCATED** on after 1 tries, sleeping for 2 secs, timestamp=1550869239916}

2019-02-22 16:00:41,963 WARN com.cloudera.cmon.firehose.Main: No descriptor fetched from http://*TRUNCATED** on after 2 tries, sleeping for 2 secs

2019-02-22 16:00:43,965 WARN com.cloudera.cmon.firehose.Main: No descriptor fetched from http://*TRUNCATED** on after 3 tries, sleeping for 2 secs

2019-02-22 16:00:45,968 WARN com.cloudera.cmon.firehose.Main: No descriptor fetched from http://*TRUNCATED** on after 4 tries, sleeping for 2 secs

2019-02-22 16:00:47,970 WARN com.cloudera.cmon.firehose.Main: No descriptor fetched from http://*TRUNCATED** on after 5 tries, sleeping for 2 secs

Any ideas?

Created 03-08-2019 10:11 AM

Hi @krieger ,

Could you please run this command and see if it returns any result?

# grep '^[[:blank:]]' /etc/cloudera-scm-agent/config.ini

The command above checks whether any line in /etc/cloudera-scm-agent/config.ini starts with a space or tab. See the example below, which shows a leading space in front of server_port=7182. This will cause the config parser to fail.

# grep '^[[:blank:]]' /etc/cloudera-scm-agent/config.ini

 server_port=7182

From the content you pasted, it looks like there is a leading space before the line "use_tls=0". If the command does return that line, could you please remove the leading space and then try restarting the agent again?
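To illustrate why a single leading space matters, here is a minimal sketch in Python. It assumes Python 3's standard configparser, which may not behave identically to the parser the agent itself uses; the sample content and the host name cm.example.com are made up for illustration. The helper find_indented_lines mirrors the grep above, and the parser part shows the indented line being swallowed as a continuation of the previous option, so server_port is never defined.

```python
import configparser


def find_indented_lines(text):
    """Return (line_number, line) pairs for lines starting with a space or tab.

    This mirrors grep '^[[:blank:]]', where [[:blank:]] is space or tab.
    """
    return [
        (number, line)
        for number, line in enumerate(text.splitlines(), start=1)
        if line[:1] in (" ", "\t")
    ]


# Hypothetical config.ini content; note the leading space on the third line.
SAMPLE = "[General]\nserver_host=cm.example.com\n server_port=7182\n"

parser = configparser.ConfigParser()
parser.read_string(SAMPLE)

# The indented line is treated as a continuation of server_host,
# so the server_port option does not exist after parsing.
print(find_indented_lines(SAMPLE))
print(parser.has_option("General", "server_port"))
print(repr(parser.get("General", "server_host")))
```

In this sketch the parser does not raise an error; it silently folds the indented line into the previous value, which is why a plain grep for leading blanks is a quick way to spot the problem.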

Thanks and hope this helps,

Li

Li Wang, Technical Solution Manager

Was your question answered? Make sure to mark the answer as the accepted solution.

If you find a reply useful, say thanks by clicking on the thumbs up button.

Learn more about the Cloudera Community:

Created 03-01-2019 10:28 AM

Hi @krieger,

We need some more details to assist with this issue. Upgrading to Spark 2.3 should not, by itself, cause issues with HMON (Host Monitor).

Could you please answer a few questions:

1) What is the CM/CDH version you have in the lab?

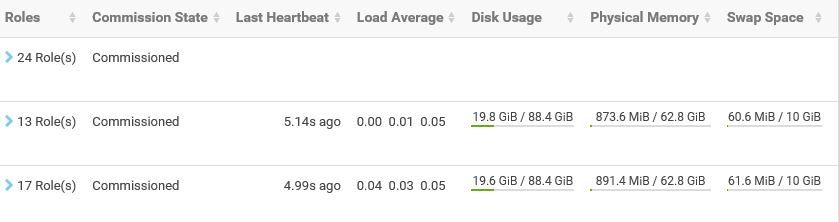

2) Where is the HMON role installed? On which host? If you can, send us a screenshot of the CM hosts page.

3) It sounds like VM2 and VM3 are working fine, but not VM1. Is VM1 CM agent running or not?

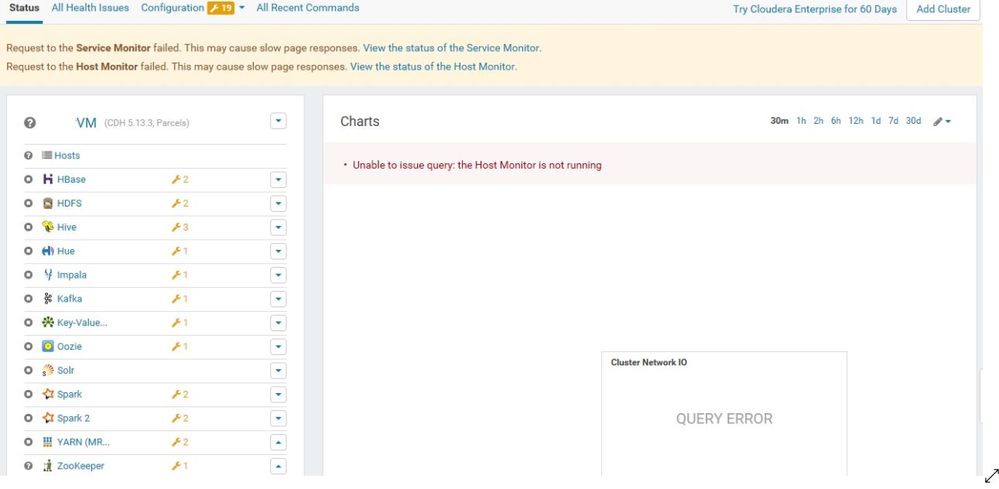

4) Is HMON showing down/stopped from CM UI? If you can, send us a screenshot of CM homepage.

Could you please also send us the agent log from the non-working host and from a working one, so we can compare them and find out why the agent is not starting on one of the hosts?

Thanks,

Li

Created on 03-01-2019 11:54 AM - edited 03-01-2019 11:56 AM

Thanks for your reply!! Please see below:

1) What is the CM/CDH version you have in the lab?

CM: Cloudera Express 5.13.2

CDH: 5.13.3

2) Where is the HMON role installed? On which host? If you can, send us a screenshot of the CM hosts page.

HMON is installed on VM1. A screenshot of the CM Hosts page is below.

3) It sounds like VM2 and VM3 are working fine, but not VM1. Is VM1 CM agent running or not?

Correct, VM2 and VM3 are fine, or appear to be. VM1 does not throw any errors when starting the agent other than "WARNING: Stopping Daemon" in the agent logs. So it does not appear that the agent is running, and I can't seem to get it to start.

4) Is HMON showing down/stopped from CM UI? If you can, send us a screenshot of CM homepage.

HMON appears to be down. Restart attempts fail. SMON is also down and won't restart. Screenshot attached.

Requested logs below:

VM1 (non-working) Agent log:

[01/Mar/2019 14:02:30 +0000] 11699 MainThread __init__ INFO Agent UUID file was last modified at 2018-05-17 15:45:14.694427

[01/Mar/2019 14:02:30 +0000] 11699 MainThread agent INFO SCM Agent Version: 5.13.2

[01/Mar/2019 14:02:30 +0000] 11699 MainThread agent INFO Agent Protocol Version: 4

[01/Mar/2019 14:02:30 +0000] 11699 MainThread agent INFO Using Host ID: 256e9061-379a-4544-95ec-6fe3b3f1dc0f

[01/Mar/2019 14:02:30 +0000] 11699 MainThread agent INFO Using directory: /run/cloudera-scm-agent

[01/Mar/2019 14:02:30 +0000] 11699 MainThread agent INFO Using supervisor binary path: /usr/lib64/cmf/agent/build/env/bin/supervisord

[01/Mar/2019 14:02:30 +0000] 11699 MainThread agent INFO Neither verify_cert_file nor verify_cert_dir are configured. Not performing validation of server certificates in HTTPS communication. These options can be configured in this agent's config.ini file to enable certificate validation.

[01/Mar/2019 14:02:30 +0000] 11699 MainThread agent INFO Agent Logging Level: INFO

[01/Mar/2019 14:02:30 +0000] 11699 MainThread agent INFO No command line vars

[01/Mar/2019 14:02:30 +0000] 11699 MainThread agent INFO Found database jar: /usr/share/java/mysql-connector-java.jar

[01/Mar/2019 14:02:30 +0000] 11699 MainThread agent INFO Missing database jar: /usr/share/java/oracle-connector-java.jar (normal, if you're not using this database type)

[01/Mar/2019 14:02:30 +0000] 11699 MainThread agent INFO Found database jar: /usr/share/cmf/lib/postgresql-9.0-801.jdbc4.jar

[01/Mar/2019 14:02:30 +0000] 11699 Dummy-1 daemonize WARNING Stopping daemon.

VM2 (working) Agent log:

[01/Mar/2019 13:24:00 +0000] 13895 MainThread agent ERROR Heartbeating to *Truncated*:7182 failed.

Traceback (most recent call last):

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/cmf-5.13.2-py2.7.egg/cmf/agent.py", line 1417, in _send_heartbeat

self.master_port)

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/avro-1.6.3-py2.7.egg/avro/ipc.py", line 469, in __init__

self.conn.connect()

File "/usr/lib64/python2.7/httplib.py", line 824, in connect

self.timeout, self.source_address)

File "/usr/lib64/python2.7/socket.py", line 571, in create_connection

raise err

error: [Errno 111] Connection refused

[01/Mar/2019 13:24:35 +0000] 13895 MonitorDaemon-Reporter throttling_logger ERROR (9 skipped) Error sending messages to firehose: mgmt-SERVICEMONITOR-ebd5bcd145a4882ab03d338b502c108c

Traceback (most recent call last):

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/cmf-5.13.2-py2.7.egg/cmf/monitor/firehose.py", line 120, in _send

self._port)

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/avro-1.6.3-py2.7.egg/avro/ipc.py", line 469, in __init__

self.conn.connect()

File "/usr/lib64/python2.7/httplib.py", line 824, in connect

self.timeout, self.source_address)

File "/usr/lib64/python2.7/socket.py", line 571, in create_connection

raise err

error: [Errno 111] Connection refused

[01/Mar/2019 13:25:35 +0000] 13895 MonitorDaemon-Reporter throttling_logger ERROR (9 skipped) Error sending messages to firehose: mgmt-HOSTMONITOR-ebd5bcd145a4882ab03d338b502c108c

Traceback (most recent call last):

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/cmf-5.13.2-py2.7.egg/cmf/monitor/firehose.py", line 120, in _send

self._port)

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/avro-1.6.3-py2.7.egg/avro/ipc.py", line 469, in __init__

self.conn.connect()

File "/usr/lib64/python2.7/httplib.py", line 824, in connect

self.timeout, self.source_address)

File "/usr/lib64/python2.7/socket.py", line 571, in create_connection

raise err

error: [Errno 111] Connection refused

[01/Mar/2019 13:26:31 +0000] 13895 MainThread heartbeat_tracker INFO HB stats (seconds): num:40 LIFE_MIN:0.00 min:0.00 mean:0.05 max:0.64 LIFE_MAX:0.40

[01/Mar/2019 13:28:31 +0000] 13895 MainThread throttling_logger INFO (14 skipped) Identified java component java8 with full version JAVA_HOME=/usr/java/default java version "1.8.0_172" Java(TM) SE Runtime Environment (build 1.8.0_172-b11) Java HotSpot(TM) 64-Bit Server VM (build 25.172-b11, mixed mode) for requested version misc.

[01/Mar/2019 13:28:31 +0000] 13895 MainThread throttling_logger INFO (14 skipped) Identified java component java8 with full version JAVA_HOME=/usr/java/jdk1.8.0_172 java version "1.8.0_172" Java(TM) SE Runtime Environment (build 1.8.0_172-b11) Java HotSpot(TM) 64-Bit Server VM (build 25.172-b11, mixed mode) for requested version 8.

[01/Mar/2019 13:34:35 +0000] 13895 MonitorDaemon-Reporter throttling_logger ERROR (9 skipped) Error sending messages to firehose: mgmt-SERVICEMONITOR-ebd5bcd145a4882ab03d338b502c108c

Traceback (most recent call last):

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/cmf-5.13.2-py2.7.egg/cmf/monitor/firehose.py", line 120, in _send

self._port)

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/avro-1.6.3-py2.7.egg/avro/ipc.py", line 469, in __init__

self.conn.connect()

File "/usr/lib64/python2.7/httplib.py", line 824, in connect

self.timeout, self.source_address)

File "/usr/lib64/python2.7/socket.py", line 571, in create_connection

raise err

error: [Errno 111] Connection refused

[01/Mar/2019 13:35:35 +0000] 13895 MonitorDaemon-Reporter throttling_logger ERROR (9 skipped) Error sending messages to firehose: mgmt-HOSTMONITOR-ebd5bcd145a4882ab03d338b502c108c

Traceback (most recent call last):

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/cmf-5.13.2-py2.7.egg/cmf/monitor/firehose.py", line 120, in _send

self._port)

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/avro-1.6.3-py2.7.egg/avro/ipc.py", line 469, in __init__

self.conn.connect()

File "/usr/lib64/python2.7/httplib.py", line 824, in connect

self.timeout, self.source_address)

File "/usr/lib64/python2.7/socket.py", line 571, in create_connection

raise err

error: [Errno 111] Connection refused

[01/Mar/2019 13:36:31 +0000] 13895 MainThread heartbeat_tracker INFO HB stats (seconds): num:40 LIFE_MIN:0.00 min:0.03 mean:0.04 max:0.06 LIFE_MAX:0.40

[01/Mar/2019 13:44:35 +0000] 13895 MonitorDaemon-Reporter throttling_logger ERROR (9 skipped) Error sending messages to firehose: mgmt-SERVICEMONITOR-ebd5bcd145a4882ab03d338b502c108c

Traceback (most recent call last):

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/cmf-5.13.2-py2.7.egg/cmf/monitor/firehose.py", line 120, in _send

self._port)

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/avro-1.6.3-py2.7.egg/avro/ipc.py", line 469, in __init__

self.conn.connect()

File "/usr/lib64/python2.7/httplib.py", line 824, in connect

self.timeout, self.source_address)

File "/usr/lib64/python2.7/socket.py", line 571, in create_connection

raise err

error: [Errno 111] Connection refused

[01/Mar/2019 13:56:32 +0000] 13895 MainThread heartbeat_tracker INFO HB stats (seconds): num:40 LIFE_MIN:0.00 min:0.03 mean:0.03 max:0.05 LIFE_MAX:0.40

[01/Mar/2019 13:58:33 +0000] 13895 MainThread throttling_logger INFO (14 skipped) Identified java component java8 with full version JAVA_HOME=/usr/java/default java version "1.8.0_172" Java(TM) SE Runtime Environment (build 1.8.0_172-b11) Java HotSpot(TM) 64-Bit Server VM (build 25.172-b11, mixed mode) for requested version misc.

[01/Mar/2019 13:58:33 +0000] 13895 MainThread throttling_logger INFO (14 skipped) Identified java component java8 with full version JAVA_HOME=/usr/java/jdk1.8.0_172 java version "1.8.0_172" Java(TM) SE Runtime Environment (build 1.8.0_172-b11) Java HotSpot(TM) 64-Bit Server VM (build 25.172-b11, mixed mode) for requested version 8.

[01/Mar/2019 14:03:18 +0000] 13895 CP Server Thread-12 _cplogging INFO *TRUNCATED IP* - - [01/Mar/2019:14:03:18] "GET /heartbeat HTTP/1.1" 200 2 "" "NING/1.0"

[01/Mar/2019 14:03:18 +0000] 13895 MainThread process INFO [1491-zookeeper-server] Updating process: False {u'running': (True, False), u'run_generation': (1, 2)}

[01/Mar/2019 14:03:18 +0000] 13895 MainThread process INFO Deactivating process 1491-zookeeper-server

[01/Mar/2019 14:03:19 +0000] 13895 MainThread agent INFO Triggering supervisord update.

[01/Mar/2019 14:03:19 +0000] 13895 Metadata-Plugin navigator_plugin INFO stopping Metadata Plugin for zookeeper-server with pipelines []

[01/Mar/2019 14:03:19 +0000] 13895 Metadata-Plugin navigator_plugin_pipeline INFO Stopping Navigator Plugin Pipeline '' for zookeeper-server (log dir: None)

[01/Mar/2019 14:03:22 +0000] 13895 Audit-Plugin navigator_plugin INFO stopping Audit Plugin for zookeeper-server with pipelines []

[01/Mar/2019 14:03:22 +0000] 13895 Audit-Plugin navigator_plugin_pipeline INFO Stopping Navigator Plugin Pipeline '' for zookeeper-server (log dir: None)

[01/Mar/2019 14:03:35 +0000] 13895 CP Server Thread-11 _cplogging INFO *TRUNCATED IP* - - [01/Mar/2019:14:03:35] "GET /heartbeat HTTP/1.1" 200 2 "" "NING/1.0"

[01/Mar/2019 14:03:35 +0000] 13895 MainThread process INFO [1491-zookeeper-server] Updating process.

[01/Mar/2019 14:03:35 +0000] 13895 MainThread process INFO Deleting process 1491-zookeeper-server

[01/Mar/2019 14:03:35 +0000] 13895 MainThread agent INFO Triggering supervisord update.

[01/Mar/2019 14:03:35 +0000] 13895 MainThread agent INFO [1491-zookeeper-server] Orphaning process

[01/Mar/2019 14:03:35 +0000] 13895 MainThread util INFO Using generic audit plugin for process zookeeper-server

[01/Mar/2019 14:03:35 +0000] 13895 MainThread util INFO Creating metadata plugin for process zookeeper-server

[01/Mar/2019 14:03:35 +0000] 13895 MainThread util INFO Using specific metadata plugin for process zookeeper-server

[01/Mar/2019 14:03:35 +0000] 13895 MainThread util INFO Using generic metadata plugin for process zookeeper-server

[01/Mar/2019 14:03:35 +0000] 13895 MainThread agent INFO [1496-zookeeper-server] Instantiating process

[01/Mar/2019 14:03:35 +0000] 13895 MainThread process INFO [1496-zookeeper-server] Updating process: True {}

[01/Mar/2019 14:03:35 +0000] 13895 MainThread process INFO First time to activate the process [1496-zookeeper-server].

[01/Mar/2019 14:03:35 +0000] 13895 MainThread agent INFO Created /run/cloudera-scm-agent/process/1496-zookeeper-server

[01/Mar/2019 14:03:35 +0000] 13895 MainThread agent INFO Chowning /run/cloudera-scm-agent/process/1496-zookeeper-server to zookeeper (991) zookeeper (988)

[01/Mar/2019 14:03:35 +0000] 13895 MainThread agent INFO Chmod'ing /run/cloudera-scm-agent/process/1496-zookeeper-server to 0751

[01/Mar/2019 14:03:35 +0000] 13895 MainThread agent INFO Created /run/cloudera-scm-agent/process/1496-zookeeper-server/logs

[01/Mar/2019 14:03:35 +0000] 13895 MainThread agent INFO Chowning /run/cloudera-scm-agent/process/1496-zookeeper-server/logs to zookeeper (991) zookeeper (988)

[01/Mar/2019 14:03:35 +0000] 13895 MainThread agent INFO Chmod'ing /run/cloudera-scm-agent/process/1496-zookeeper-server/logs to 0751

[01/Mar/2019 14:03:35 +0000] 13895 MainThread process INFO [1496-zookeeper-server] Refreshing process files: None

[01/Mar/2019 14:03:35 +0000] 13895 MainThread parcel INFO prepare_environment begin: {u'SPARK2': u'2.3.0.cloudera4-1.cdh5.13.3.p0.611179', u'CDH': u'5.13.3-1.cdh5.13.3.p0.2', u'KAFKA': u'3.0.0-1.3.0.0.p0.40'}, [u'cdh'], [u'cdh-plugin', u'zookeeper-plugin']

[01/Mar/2019 14:03:35 +0000] 13895 MainThread parcel INFO The following requested parcels are not available: {}

[01/Mar/2019 14:03:35 +0000] 13895 MainThread parcel INFO Obtained tags ['spark2'] for parcel SPARK2

[01/Mar/2019 14:03:35 +0000] 13895 MainThread parcel INFO Obtained tags ['cdh', 'impala', 'kudu', 'sentry', 'solr', 'spark'] for parcel CDH

[01/Mar/2019 14:03:35 +0000] 13895 MainThread parcel INFO Obtained tags ['kafka'] for parcel KAFKA

[01/Mar/2019 14:03:35 +0000] 13895 MainThread parcel INFO prepare_environment end: {'CDH': '5.13.3-1.cdh5.13.3.p0.2'}

[01/Mar/2019 14:03:35 +0000] 13895 MainThread __init__ INFO Extracted 10 files and 0 dirs to /run/cloudera-scm-agent/process/1496-zookeeper-server.

[01/Mar/2019 14:03:35 +0000] 13895 MainThread process INFO [1496-zookeeper-server] Evaluating resource: {u'io': None, u'named_cpu': None, u'tcp_listen': None, u'dynamic': True, u'rlimits': None, u'install': None, u'file': None, u'memory': None, u'directory': {u'path': u'/var/log/zookeeper/stacks', u'bytes_free_warning_threshhold_bytes': 0, u'group': u'zookeeper', u'user': u'zookeeper', u'mode': 493}, u'cpu': None, u'contents': None}

[01/Mar/2019 14:03:35 +0000] 13895 MainThread process INFO reading limits: {u'limit_memlock': None, u'limit_fds': None}

[01/Mar/2019 14:03:35 +0000] 13895 MainThread process INFO [1496-zookeeper-server] Launching process. one-off False, command zookeeper/zkserver.sh, args [u'2', u'/var/lib/zookeeper']

[01/Mar/2019 14:03:35 +0000] 13895 MainThread agent INFO Triggering supervisord update.

[01/Mar/2019 14:03:35 +0000] 13895 MainThread process INFO Begin audit plugin refresh

[01/Mar/2019 14:03:35 +0000] 13895 MainThread navigator_plugin INFO Scheduling a refresh for Audit Plugin for zookeeper-server with pipelines []

[01/Mar/2019 14:03:35 +0000] 13895 MainThread process INFO Begin metadata plugin refresh

[01/Mar/2019 14:03:35 +0000] 13895 MainThread navigator_plugin INFO Scheduling a refresh for Metadata Plugin for zookeeper-server with pipelines []

[01/Mar/2019 14:03:35 +0000] 13895 MainThread __init__ INFO Instantiating generic monitor for service ZOOKEEPER and role SERVER

[01/Mar/2019 14:03:35 +0000] 13895 MainThread process INFO Begin monitor refresh.

[01/Mar/2019 14:03:35 +0000] 13895 MainThread abstract_monitor INFO Refreshing GenericMonitor ZOOKEEPER-SERVER for None

[01/Mar/2019 14:03:35 +0000] 13895 MainThread __init__ INFO New monitor: (<cmf.monitor.generic.GenericMonitor object at 0x7f5eb86282d0>,)

[01/Mar/2019 14:03:35 +0000] 13895 MainThread process INFO Daemon refresh complete for process 1496-zookeeper-server.

[01/Mar/2019 14:03:37 +0000] 13895 Audit-Plugin navigator_plugin INFO Refreshing Audit Plugin for zookeeper-server with pipelines []

[01/Mar/2019 14:03:37 +0000] 13895 Audit-Plugin navigator_plugin_pipeline INFO Stopping Navigator Plugin Pipeline '' for zookeeper-server (log dir: None)

[01/Mar/2019 14:03:39 +0000] 13895 Metadata-Plugin navigator_plugin INFO Refreshing Metadata Plugin for zookeeper-server with pipelines []

[01/Mar/2019 14:03:39 +0000] 13895 Metadata-Plugin navigator_plugin_pipeline INFO Stopping Navigator Plugin Pipeline '' for zookeeper-server (log dir: None)

[01/Mar/2019 14:03:57 +0000] 13895 MonitorDaemon-Scheduler __init__ INFO Monitor ready to report: ('GenericMonitor ZOOKEEPER-SERVER for zookeeper-SERVER-b1c728f2c1cd8ee0802ebb322237a17a',)

[01/Mar/2019 14:04:36 +0000] 13895 MonitorDaemon-Reporter throttling_logger ERROR (10 skipped) Error sending messages to firehose: mgmt-SERVICEMONITOR-ebd5bcd145a4882ab03d338b502c108c

Traceback (most recent call last):

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/cmf-5.13.2-py2.7.egg/cmf/monitor/firehose.py", line 120, in _send

self._port)

File "/usr/lib64/cmf/agent/build/env/lib/python2.7/site-packages/avro-1.6.3-py2.7.egg/avro/ipc.py", line 469, in __init__

self.conn.connect()

File "/usr/lib64/python2.7/httplib.py", line 824, in connect

self.timeout, self.source_address)

File "/usr/lib64/python2.7/socket.py", line 571, in create_connection

raise err

error: [Errno 111] Connection refused
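The repeated "[Errno 111] Connection refused" entries above generally mean that nothing was listening on the target port at the time of the attempt. A minimal sketch for checking this from another host follows; the function name is made up for illustration, and the host in the usage comment is a placeholder because the real one is truncated in the log. Port 7182 is the heartbeat port shown in the log line above.

```python
import socket


def port_accepts_connections(host, port, timeout=2.0):
    """Return True if a TCP connection to (host, port) succeeds within timeout."""
    try:
        # create_connection raises OSError (e.g. connection refused, timeout)
        # when the connection cannot be established.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False

# Example call with a placeholder host name:
#   port_accepts_connections("cm-server-host", 7182)
```

A False result only says the connection could not be made; it does not distinguish a stopped service from a firewall rule or a wrong host name.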

Created 03-01-2019 03:11 PM

Hi @krieger,

Thanks for your response.

I was not able to find any clear error messages in the VM1 agent log. Could you please run the commands below on the VM1 host and send us the output?

1. ps -ef | grep cmf-agent

2. service cloudera-scm-agent status

Thanks,

Li

Created 03-04-2019 01:46 PM

Sure!

1. root 8454 8426 0 16:41 pts/0 00:00:00 grep --color=auto cmf-agent

2. ● cloudera-scm-agent.service - LSB: Cloudera SCM Agent

Loaded: loaded (/etc/rc.d/init.d/cloudera-scm-agent; bad; vendor preset: disabled)

Active: active (exited) since Fri 2019-03-01 14:02:30 EST; 3 days ago

Docs: man:systemd-sysv-generator(8)

Process: 11650 ExecStop=/etc/rc.d/init.d/cloudera-scm-agent stop (code=exited, status=0/SUCCESS)

Process: 11669 ExecStart=/etc/rc.d/init.d/cloudera-scm-agent start (code=exited, status=0/SUCCESS)

Mar 01 14:02:29 vm1 systemd[1]: Starting LSB: Cloudera SCM Agent...

Mar 01 14:02:29 vm1 su[11684]: (to root) root on none

Mar 01 14:02:30 vm1 cloudera-scm-agent[11669]: Starting cloudera-scm-agent: [ OK ]

Mar 01 14:02:30 vm1 systemd[1]: Started LSB: Cloudera SCM Agent.
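Note that the only line returned by ps above is the grep command itself, even though systemd reports the service as "active (exited)". A minimal sketch of that check in Python follows, assuming the ps -ef output has already been captured as text; the function name agent_processes is made up for illustration.

```python
def agent_processes(ps_output, needle="cmf-agent"):
    """Return ps lines that mention needle, ignoring the grep command itself."""
    matches = []
    for line in ps_output.splitlines():
        # A line whose fields include "grep" is the search command, not the agent.
        if needle in line and "grep" not in line.split():
            matches.append(line)
    return matches


# The ps line posted above: only the grep itself matched.
POSTED = "root 8454 8426 0 16:41 pts/0 00:00:00 grep --color=auto cmf-agent"

print(agent_processes(POSTED))  # an empty list means no agent process was found
```

An empty result here is consistent with the agent log ending in "Stopping daemon": the init script returned OK, but no agent process stayed running.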

Created 03-04-2019 02:17 PM

Hi @krieger,

Since it looks like the agent is not running correctly, I suggest trying the "Hard Stopping and Restarting Agents" procedure described in the Cloudera Manager documentation.

Please note: a hard restart will kill all running managed service processes on the host(s) where the command is run.

Thanks,

Li

Created 03-05-2019 11:53 AM

Thanks! I have tried the hard restart a few times, including just now per your instructions. Unfortunately, nothing seems to change and the results are the same.

Created 03-05-2019 02:45 PM

Li Wang, Technical Solution Manager

Created 03-07-2019 09:22 AM

I have, and I just tried again with no resolution. Thanks again for the help.

Created 03-07-2019 09:51 AM

Hi @krieger ,

Could you please check whether /etc/cloudera-scm-agent/config.ini exists on VM1? If it does, could you please send us its content to review?

Thanks,

Li
