How to read JSON from S3 then edit JSON using NiFi

- Labels: Apache NiFi

Created on 06-22-2022 06:14 AM - edited 06-22-2022 05:48 PM


I am using NiFi 1.6.0 and trying to copy a file from S3 into Redshift. The JSON file on S3 looks like this:

[

{"a":1,"b":2},

{"a":3,"b":4}

]

However, this gives an error because of the '[' and ']' in the file (https://stackoverflow.com/a/45348425).

I need to convert the JSON from the format above into one that looks like this:

{"a":1,"b":2}

{"a":3,"b":4}

So basically, I am trying to remove the '[' and ']' and replace '},' with '}'.

The file has over 14 million rows (30 MB in size).

How can I achieve this using NiFi?

Created on 06-22-2022 07:31 PM - edited 06-22-2022 07:32 PM


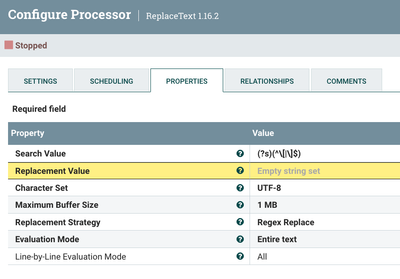

Try this:

This is the regex:

(?s)(^\[|\]$)You may have to increase the buffer size to accommodate your file.

Cheers,

André
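To see what the regex above matches, here is a minimal sketch that applies it outside NiFi. It uses Python's `re` module rather than the Java regex engine NiFi runs on; for this particular pattern the two should behave the same, but treat that as an assumption. The `strip_outer_brackets` name and the sample string are made up for illustration.

```python
import re

# Same pattern as in the reply: a '[' at the very start of the content,
# or a ']' at the very end (optionally followed by one final newline).
BRACKETS = re.compile(r"(?s)(^\[|\]$)")


def strip_outer_brackets(text: str) -> str:
    """Remove the opening '[' and closing ']' of a JSON array held in one string."""
    return BRACKETS.sub("", text)


# Hypothetical sample mirroring the file layout from the question.
sample = '[\n{"a":1,"b":2},\n{"a":3,"b":4}\n]'
result = strip_outer_brackets(sample)
print(repr(result))
```

Note that `^\[` only matches when '[' is the first character of the content, so leading whitespace or a byte-order mark would leave the bracket in place. The pattern also has to see the whole file at once, which is presumably why the buffer size needs to be large enough to hold it.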


Created on 06-22-2022 06:56 PM - edited 06-22-2022 06:57 PM


I will try this solution, but I think the queue to the PutDatabaseRecord processor will get clogged very quickly because there are over 14 million rows in this file.

I tried ReplaceText and was able to replace '},' with '}' within seconds. Can you tell me how to remove the first line '[' and the last line ']'?

Thanks
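For reference, the whole conversion described here (drop the '[' and ']' lines, turn '},' into '}') can be sketched as a line-by-line pass that never holds the full file in memory. This is plain Python, not a NiFi processor configuration, and it assumes every JSON object sits on its own line as in the sample from the question. The `array_lines_to_ndjson` name is hypothetical.

```python
from typing import Iterable, Iterator


def array_lines_to_ndjson(lines: Iterable[str]) -> Iterator[str]:
    """Yield one JSON object per line from a JSON array written one object per line."""
    for raw in lines:
        line = raw.strip()
        # Skip blank lines and the lines holding only the array brackets.
        if line in ("", "[", "]"):
            continue
        # Drop the trailing comma that separates array elements.
        if line.endswith("},"):
            line = line[:-1]
        yield line


# Hypothetical input mirroring the file layout from the question.
source_lines = ["[", '{"a":1,"b":2},', '{"a":3,"b":4}', "]"]
converted = list(array_lines_to_ndjson(source_lines))
print("\n".join(converted))
```

Because it is a generator, the same function can be fed an open file handle and written out line by line. It would not handle objects that span several lines or a file where the whole array is on a single line.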
