Solr Swap Memory exceeding threshold

Created on 03-22-2022 03:08 AM - last edited on 04-21-2026 12:59 AM by GrazittiAPI

Hi Everyone!

I've been receiving the following warning in Cloudera Manager.

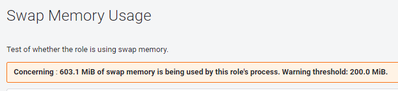

"603.1 MiB of swap memory is being used by this role's process. Warning threshold: 200.0 MiB."

I have tried deleting documents from the ranger_audits Solr collection by going to the following location and running the following query.

http://<HOSTNAME>:8993/solr/#/ranger_audits/documents

<delete><query>evtTime:[* TO NOW-0DAYS]</query></delete>

But it's not having any effect on the Swap memory usage.

P.S. Swappiness is set to 1. When I run

cat /proc/sys/vm/swappiness

the output is 1.

Cluster Details :

Solr: 8.4.1

Hadoop 3.1.1.7.1.6.0-297

CDH-7.1.6

Created 03-29-2022 01:00 AM

Hello @AzfarB

Thanks for using Cloudera Community. Based on the post, your team observed the Solr-Infra JVM reporting a WARNING for more than 200MB of swap space being utilised. Restarting the Solr-Infra JVM made the WARNING go away.

Note that swapping isn't bad in general; this has been discussed in detail by the community in [1] & [2]. Deleting ranger_audits documents won't affect it either, because the swap in question is used by the Solr JVM process, as documented in [3]. Indexed documents aren't held in memory unless they are cached, so deleting them is not guaranteed to reduce the swapping.

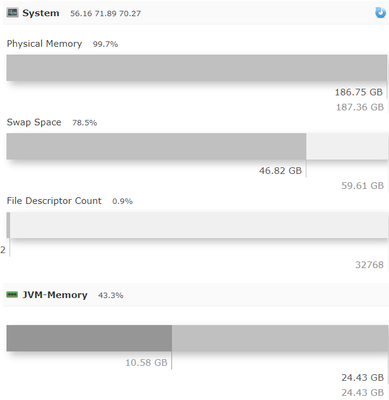

As your screenshot shows, the host itself is running short on memory (~99% utilised) and overall swap usage is ~80% at ~47GB, of which Solr-Infra contributes <1GB. As documented in the links below, your team can focus on host-level usage and consider increasing the swap warning threshold from 200MB to at least 10% of the heap, i.e. 2GB.
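The threshold suggestion above comes down to simple arithmetic. The following is only an illustrative sketch: the 20 GiB heap is an assumption inferred from "10% of the heap, i.e. 2GB", and the function names are hypothetical helpers, not part of Cloudera Manager.

```python
MIB_PER_GIB = 1024


def warning_threshold_mib(heap_gib, fraction=0.10):
    """Swap warning threshold in MiB, taken as a fraction of the JVM heap."""
    return heap_gib * MIB_PER_GIB * fraction


def exceeds_threshold(swap_used_mib, threshold_mib):
    """True if the role's swap usage would raise the warning."""
    return swap_used_mib > threshold_mib


if __name__ == "__main__":
    swap_used_mib = 603.1          # value quoted in the warning message
    current_threshold_mib = 200.0  # threshold quoted in the warning message
    # Assumption: a 20 GiB heap, implied by "10% of the heap, i.e. 2GB".
    proposed_threshold_mib = warning_threshold_mib(20.0)

    print("warns at current threshold: ",
          exceeds_threshold(swap_used_mib, current_threshold_mib))
    print("warns at proposed threshold:",
          exceeds_threshold(swap_used_mib, proposed_threshold_mib))
```

With these figures, the reported 603.1 MiB is above the current 200 MiB threshold but well below a threshold set at 10% of the assumed heap.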

One additional point concerns why the Solr-Infra restart cleared the WARNING. This needs to be examined from the host perspective: how much memory was freed, and whether overall swap usage at host level dropped after the Solr-Infra restart, as opposed to only the Solr-Infra WARNING being suppressed.
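One way to compare host-level and per-process swap before and after a restart is to read the standard Linux /proc files. The sketch below is a rough illustration, not how Cloudera Manager necessarily computes its figure: it assumes a Linux host, uses the `SwapTotal`/`SwapFree` fields of /proc/meminfo and the `VmSwap` field of /proc/<pid>/status, and leaves the Solr process ID for you to supply. The helper names are hypothetical.

```python
import re
from pathlib import Path


def parse_kib_field(text, field):
    """Return the kB value of a 'Field:   123 kB' line, or 0 if the field is absent."""
    match = re.search(rf"^{re.escape(field)}:\s+(\d+)\s*kB", text, re.MULTILINE)
    return int(match.group(1)) if match else 0


def host_swap_used_kib(meminfo_text):
    """Host-wide swap in use: SwapTotal minus SwapFree from /proc/meminfo."""
    total = parse_kib_field(meminfo_text, "SwapTotal")
    free = parse_kib_field(meminfo_text, "SwapFree")
    return total - free


def process_swap_kib(status_text):
    """Swap used by one process: VmSwap from /proc/<pid>/status."""
    return parse_kib_field(status_text, "VmSwap")


def read_swap(pid):
    """Read both figures from the live system (Linux only)."""
    meminfo = Path("/proc/meminfo").read_text()
    status = Path(f"/proc/{pid}/status").read_text()
    return host_swap_used_kib(meminfo), process_swap_kib(status)
```

Calling `read_swap(pid)` with the Solr process ID before and after the restart would show whether the host total actually dropped or only the per-process figure was reset.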

Regards, Smarak

[2] https://chrisdown.name/2018/01/02/in-defence-of-swap.html

[3] https://blog.cloudera.com/apache-solr-memory-tuning-for-production/

Created 03-22-2022 03:25 AM

@AzfarB ,

Which other roles are running on the same node as this Solr role?

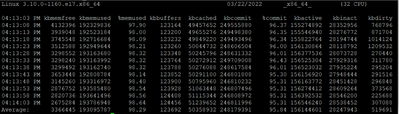

Could you provide the output of a "sar -r 5 12" command?

Cheers,

André

Created 03-22-2022 06:25 AM

Hi @araujo,

The problem went away as soon as I restarted Solr, but I'd still like to understand what it was and if there's a better/permanent solution.

Please find below the output of the "sar -r 5 12" command you mentioned.

This is the master node and there are quite a number of roles currently running here. Please find their list below.

HBASE Gateway

HIVE Gateway

HDFS Gateway

HIVE on TEZ Gateway

Hue Server

Kafka Gateway

Oozie Server

Ranger Admin

Ranger Tagsync

Ranger Usersync

Solr Server

Spark Gateway

SQOOP_Client Gateway

Streams Messaging Manager Rest Admin Server

Streams Messaging Manager UI Server

Tez Gateway

YARN Gateway

Created 03-22-2022 10:03 AM

Hi @AzfarB

I think it is quite common for modern Linux kernels to move some inactive pages into swap to reduce the memory footprint.

As long as your application or Solr is not having any performance issues, I would not be too concerned about the swapping and would just increase the threshold.

rgds,

Ram.

Created 03-27-2022 10:56 PM

@AzfarB, have any of the replies helped resolve your issue? If so, can you please mark the appropriate reply as the solution? It will make it easier for others to find the answer in the future.

Regards,

Vidya Sargur,Community Manager

Created 04-08-2022 12:17 AM

Hello @AzfarB

We hope the above post has helped answer your concerns and offered an action plan for further review. We are marking the post as resolved for now. If you have any further concerns, feel free to raise them in a new post and we shall get back to you accordingly.

Regards, Smarak