S3 integration in Hue error : ValueError: Invalid S3 URI: S3A

- Labels: Cloudera Hue

Created on 05-14-2019 06:51 AM - edited 09-16-2022 07:23 AM

Hello,

I've set up a cluster with CDH 6.2.0 (1 master, 4 slaves), installed using parcels. Everything works well except the connection between Hue and S3.

I've followed the instructions from this guide.

The hosts are running Ubuntu 18.04, and the Python version is 2.7.15rc1.

When I try to browse my buckets in the Hue file browser, I get this error in the GUI: "Unknown error occurred".

The Hue server logs show the complete error:

Internal Server Error: /filebrowser/view=S3A:/
Traceback (most recent call last):
  File "/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hue/build/env/lib/python2.7/site-packages/Django-1.11-py2.7.egg/django/core/handlers/exception.py", line 41, in inner
    response = get_response(request)
  File "/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hue/build/env/lib/python2.7/site-packages/Django-1.11-py2.7.egg/django/core/handlers/base.py", line 249, in _legacy_get_response
    response = self._get_response(request)
  File "/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hue/build/env/lib/python2.7/site-packages/Django-1.11-py2.7.egg/django/core/handlers/base.py", line 187, in _get_response
    response = self.process_exception_by_middleware(e, request)
  File "/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hue/build/env/lib/python2.7/site-packages/Django-1.11-py2.7.egg/django/core/handlers/base.py", line 185, in _get_response
    response = wrapped_callback(request, *callback_args, **callback_kwargs)
  File "/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hue/build/env/lib/python2.7/site-packages/Django-1.11-py2.7.egg/django/utils/decorators.py", line 185, in inner
    return func(*args, **kwargs)
  File "/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hue/apps/filebrowser/src/filebrowser/views.py", line 201, in view
    stats = request.fs.stats(path)
  File "/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hue/desktop/core/src/desktop/lib/fs/proxyfs.py", line 119, in stats
    return self._get_fs(path).stats(path)
  File "/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hue/desktop/libs/aws/src/aws/s3/__init__.py", line 52, in wrapped
    return fn(*args, **kwargs)
  File "/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hue/desktop/libs/aws/src/aws/s3/s3fs.py", line 256, in stats
    stats = self._stats(path)
  File "/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hue/desktop/libs/aws/src/aws/s3/s3fs.py", line 157, in _stats
    key = self._get_key(path, validate=True)
  File "/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hue/desktop/libs/aws/src/aws/s3/s3fs.py", line 131, in _get_key
    bucket_name, key_name = s3.parse_uri(path)[:2]
  File "/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hue/desktop/libs/aws/src/aws/s3/__init__.py", line 68, in parse_uri
    raise ValueError("Invalid S3 URI: %s" % uri)
ValueError: Invalid S3 URI: S3A:

S3A seems to be the root URI. Here is the ajax URL requested by Hue, giving this error:

http://*****.com:8889/filebrowser/view=S3A://?format=json&sortby=name&descending=false&pagesize=100&pagenum=1&_=1557827867345

I know the credentials setup is okay, for two reasons:

- I'm able to list a bucket's contents with hadoop fs -ls

- I can browse my buckets in Hue on another cluster running CDH 5.13, with the same API access. This very same URL, except for the hostname, works fine on my other cluster.

I've tried:

- disabling / enabling S3Guard

- disabling / enabling the S3 Connector for this cluster, in Administration > External accounts

- forcing the S3 endpoint in the S3 Connector configuration (in my case, s3.eu-west-1.amazonaws.com)

- disabling the firewall on the server running Hue

- changing the node where the Hue server is running

- playing with the URL by setting different paths, including a bucket and a resource

- adding the key ID / secret in the safety valve, as explained here

I'm running out of ideas, and I wasn't able to find anything on the forum, Stack Overflow, or Jira.

Any help or suggestion would be appreciated

Thank you

Edit (1)

As the error suggests, the problem seems to come from the S3 URI, where a slash is missing.

No matter which URI I test, the double slash in S3A:// is transformed into a single slash (/). As a result, the requested URI for the root node is S3A:/. It doesn't match the variables S3_ROOT and S3A_ROOT in __init__.py.
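To illustrate the mismatch, here is a minimal sketch. It assumes the parser only accepts a scheme followed by at least two slashes; the regular expression and the helper `is_valid` are simplified stand-ins written for this example, not Hue's actual code.

```python
import re

# Assumption: an illustrative pattern, not the one shipped in aws/s3/__init__.py.
# It encodes "s3 or s3a scheme, two or more slashes, optional bucket and key".
S3_URI_RE = re.compile(r"^s3a?://+(?P<bucket>[^/]*)/*(?P<key>.*)$", re.IGNORECASE)


def parse_uri(uri):
    """Return (bucket, key), or raise the same kind of error seen in the traceback."""
    match = S3_URI_RE.match(uri)
    if match is None:
        raise ValueError("Invalid S3 URI: %s" % uri)
    return match.group("bucket"), match.group("key")


def is_valid(uri):
    """Hypothetical helper: True when parse_uri accepts the URI."""
    try:
        parse_uri(uri)
        return True
    except ValueError:
        return False


print(is_valid("S3A://"))            # True: root URI with both slashes
print(is_valid("S3A://bucket/key"))  # True
print(is_valid("S3A:/"))             # False: the slash was collapsed
```

Under that assumption, any URI that reaches the parser with a single slash after the scheme is rejected, whatever bucket or key follows it.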

I've noticed that the Django framework version differs between CDH 5.13 and 6.2.0. I don't know if it's related, as I'm not a Python developer, but I'll continue to investigate and hopefully find a workaround.

Created on 06-18-2019 09:21 PM - edited 06-18-2019 09:26 PM

Hi @jmarcopoulos,

Do you have Cloudera Manager for your cluster? If yes, you can update the following config.

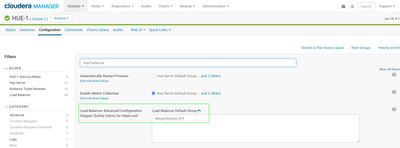

It turns out that on Debian or Ubuntu, you need to add "MergeSlashes OFF" to Hue's Load Balancer Advanced Configuration Snippet (Safety Valve) for httpd.conf, click "Save Changes", and restart Hue to avoid this missing-slash error in the S3 file browser.

Alternatively, you can use Hue's server port (8888) instead of Hue's load balancer port (8889) to work around this issue.

This issue seems to occur only on Debian and Ubuntu. CentOS/Red Hat and SLES work fine.
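As a rough model of why the load balancer port behaves differently from the server port, the sketch below collapses consecutive slashes the way a proxy with slash merging enabled would. The functions `merge_slashes` and `forward` are hypothetical names for this illustration and do not reproduce Apache's implementation.

```python
import re


def merge_slashes(url_path):
    """Collapse every run of two or more '/' into a single '/'."""
    return re.sub(r"/{2,}", "/", url_path)


def forward(url_path, merge):
    """Model a proxy hop: rewrite the path only when slash merging is on."""
    return merge_slashes(url_path) if merge else url_path


requested = "/filebrowser/view=S3A://"

# With merging on (the load balancer case described above), the path that
# reaches Hue has lost one slash and matches the "Internal Server Error" line.
print(forward(requested, merge=True))   # /filebrowser/view=S3A:/

# With merging off, or when talking to the Hue server directly, it is unchanged.
print(forward(requested, merge=False))  # /filebrowser/view=S3A://
```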

Hope this helps!

Weixia

Created 05-15-2019 08:13 AM

I've put in a dirty workaround: adding a slash when the path begins with s3a:/, so that the path ends up with two slashes.

I can now use the filebrowser with S3.

File apps/filebrowser/src/filebrowser/views.py, line 183:

    path = path.replace("s3a:/", "s3a://").replace("S3A:/", "S3A://")

I would prefer a cleaner solution, because the day Hue gets updated, my workaround will probably disappear.
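One caveat with the one-line patch: if a path ever arrives with both slashes intact (for example through port 8888), the plain `replace` turns `S3A://bucket` into `S3A:///bucket`. A slightly more defensive variant, as a sketch only, restores the slash just when exactly one is present. The name `restore_s3a_slashes` is hypothetical and not part of Hue.

```python
import re

# Matches an s3a scheme at the start of the path followed by exactly one slash.
# The negative lookahead leaves paths that already have two slashes untouched.
_SINGLE_SLASH_RE = re.compile(r"^(s3a):/(?!/)", re.IGNORECASE)


def restore_s3a_slashes(path):
    """Return the path with 's3a:/' repaired to 's3a://', preserving case."""
    return _SINGLE_SLASH_RE.sub(r"\1://", path, count=1)


print(restore_s3a_slashes("S3A:/"))             # S3A://
print(restore_s3a_slashes("s3a:/bucket/key"))   # s3a://bucket/key
print(restore_s3a_slashes("S3A://bucket/key"))  # S3A://bucket/key (unchanged)
print(restore_s3a_slashes("/user/hue"))         # /user/hue (unchanged)
```

Like the original patch, this would still be overwritten by a Hue upgrade, so it does not replace a configuration-level fix.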

Created 06-25-2019 03:00 AM

Hello,

I've added the parameter in the safety valve, and it works fine.

Thank you