- HDF/HDP Twitter Sentiment Analysis End-to-End Solu...

Created on 08-03-2018 06:54 PM - edited 08-17-2019 06:51 AM

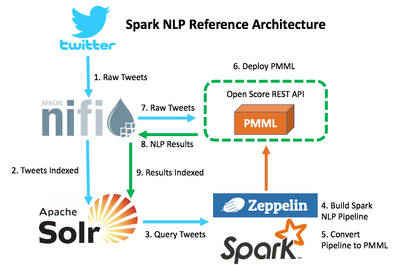

This tutorial extends my earlier work. The goal is to give the reader a framework for a complete end-to-end solution for sentiment analysis on streaming Twitter data. The solution uses several components of the HDF/HDP stack, including NiFi, Solr, Spark, and Zeppelin, along with libraries available in the open source community. The included Reference Architecture depicts the order of events for the solution.

*Note: Before starting this tutorial, the reader would be best served by reading my other tutorials listed below:

- Twitter Sentiment using Spark Core NLP in Apache Zeppelin

- Connecting Solr to Spark - Apache Zeppelin Notebook

*Prerequisites

- Established Apache Solr collections called "Tweets" and "TweetSentiment"

- Downloaded and installed OpenScoring

- Completed this Tutorial: Build and Convert a Spark NLP Pipeline into PMML in Apache Zeppelin
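If the two collections do not exist yet, they can be created from the command line. The following is a minimal sketch, assuming Solr's bundled `bin/solr` script is used; the `SOLR_BIN` variable and its default path are placeholders, not something this tutorial specifies. It creates the collections with default settings only, so any schema changes described in the earlier tutorials still have to be applied separately.

```shell
# Assumption: SOLR_BIN is the path to Solr's control script; /opt/solr is only a placeholder.
SOLR_BIN="${SOLR_BIN:-/opt/solr/bin/solr}"

create_tweet_collections() {
  # Create the two collections this tutorial expects, stopping at the first failure.
  for name in Tweets TweetSentiment; do
    "$SOLR_BIN" create -c "$name" || return 1
  done
}
```

Run `create_tweet_collections` as the user that owns the Solr installation.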

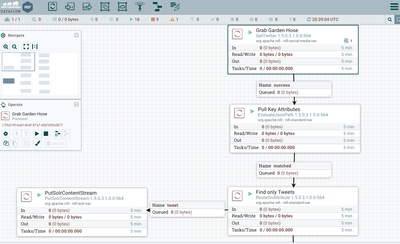

Step 1 - Download and deploy the NiFi flow.

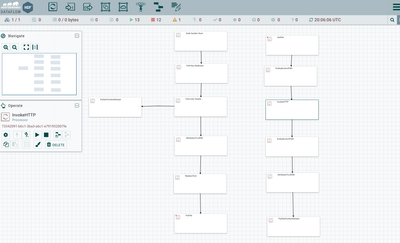

The NiFi Flow can be found here: tweetsentimentflow.xml

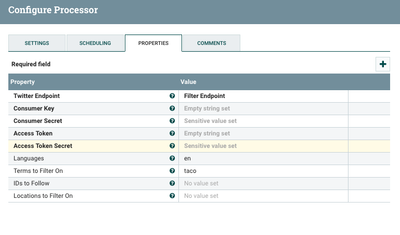
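The template can also be uploaded without the UI, through NiFi's REST API. This is a sketch under assumptions: NiFi is taken to be listening on `localhost:8080` without authentication, and `NIFI_API` and `upload_flow_template` are names made up for this example. Adjust the host and port to match your HDF install.

```shell
# Assumptions: unsecured NiFi on localhost:8080; change NIFI_API for your cluster.
NIFI_API="${NIFI_API:-http://localhost:8080/nifi-api}"

upload_flow_template() {
  # Upload the template file ($1) to the root process group as a multipart form.
  curl -s -F "template=@$1" \
       "$NIFI_API/process-groups/root/templates/upload"
}
```

Calling `upload_flow_template tweetsentimentflow.xml` only registers the template; it still has to be dragged onto the canvas before the processors appear.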

Step 2 - In the NiFi flow, configure the "Get Garden Hose" NiFi processor with your valid Twitter API credentials.

- Consumer Key

- Consumer Secret

- Access Token

- Access Token Secret

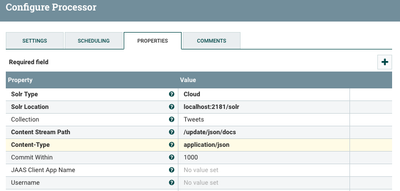

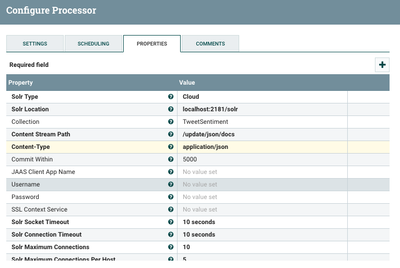

Step 3 - In the NiFi flow, configure both of the "PutSolrContentStream" NiFi processors with the location of your Solr instance. In this example, the Solr instance is located on the same host.

Step 4 - From the command line, start the OpenScoring server. Please note, OpenScoring was downloaded to the /tmp directory in this example.

$ java -jar /tmp/openscoring/openscoring-server/target/openscoring-server-executable-1.4-SNAPSHOT.jar --port 3333

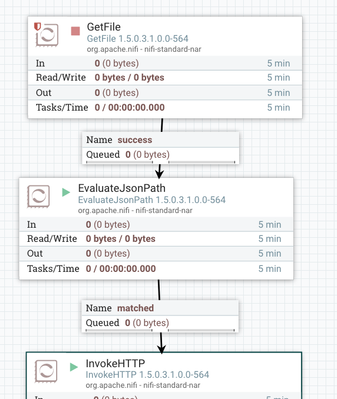
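Before moving on, it can help to confirm that the server is answering requests. The sketch below polls the model listing endpoint until it responds. It assumes the server runs on the local host, and `OPENSCORING_URL` and `wait_for_openscoring` are illustrative names, not part of OpenScoring.

```shell
# Port 3333 matches the --port flag used in Step 4; the host is assumed to be local.
OPENSCORING_URL="${OPENSCORING_URL:-http://localhost:3333/openscoring}"

wait_for_openscoring() {
  # Poll the model listing endpoint once per second, up to $1 attempts (default 30).
  attempts="${1:-30}"
  while [ "$attempts" -gt 0 ]; do
    if curl -sf -o /dev/null "$OPENSCORING_URL/model"; then
      return 0
    fi
    attempts=$((attempts - 1))
    sleep 1
  done
  return 1
}
```

For example, `wait_for_openscoring 10 && echo "OpenScoring is up"` waits at most about ten seconds.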

Step 5 - Start building a corpus of Tweets, which will be used to train the Spark Pipeline model in the following steps. In the NiFi flow, turn on the processors that move the raw Tweets into Solr, and make sure the "Get File" processor remains turned off until the model has been trained and deployed.

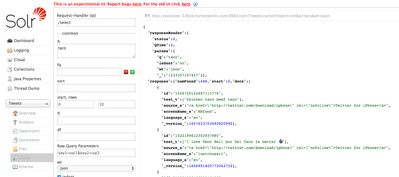

Step 7 - Validate that the flow is working as expected by querying the Tweets Solr collection in the Solr UI.
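The same check can be made from the command line with Solr's select handler. This is a rough sketch, assuming Solr is reachable on its default port 8983 on the local host; `SOLR_URL` and the two helper functions are names introduced only for this example.

```shell
# Assumption: Solr is reachable at localhost:8983 (its default port).
SOLR_URL="${SOLR_URL:-http://localhost:8983/solr}"

sample_docs_url() {
  # Build a select URL returning the first $2 documents (default 5) of collection $1.
  printf '%s/%s/select?q=*:*&rows=%s&wt=json\n' "$SOLR_URL" "$1" "${2:-5}"
}

show_sample_tweets() {
  # Fetch a handful of raw Tweets to confirm that documents are being indexed.
  curl -s "$(sample_docs_url Tweets 5)"
}
```

If `show_sample_tweets` returns documents, the raw Tweets are reaching Solr.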

Step 8 - Follow the tutorial Build and Convert a Spark NLP Pipeline into PMML in Apache Zeppelin, and save the PMML object as TweetPipeline.pmml on the same host that is running OpenScoring.

Step 9 - Use OpenScoring to deploy the PMML model based on the Spark Pipeline.

$ curl -X PUT --data-binary @TweetPipeline.pmml -H "Content-type: text/xml" http://localhost:3333/openscoring/model/TweetPipe

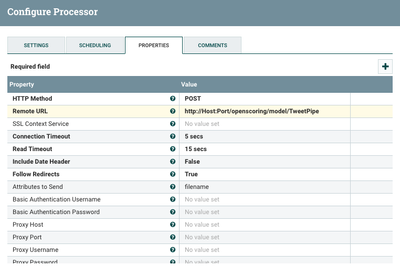
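To confirm the deployment, the model can be read back with a GET request against the same URL. This is a sketch assuming the server from Step 4 is still running on the local host; `model_summary` is a hypothetical helper name.

```shell
# Same base URL as the PUT request in Step 9; the host is assumed to be local.
OPENSCORING_URL="${OPENSCORING_URL:-http://localhost:3333/openscoring}"

model_summary() {
  # Fetch the JSON summary of the model deployed under the id given in $1.
  curl -s "$OPENSCORING_URL/model/$1"
}
```

`model_summary TweetPipe` should return a JSON description of the model. The input field names it reports are the ones a scoring request has to supply.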

Step 10 - In the NiFi flow, configure the "InvokeHTTP" NiFi processor with the host and location of the OpenScoring API endpoint.
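To see what the processor is expected to do, the equivalent request can be sent by hand. The sketch below posts one JSON evaluation request to the deployed model. The field name `text`, the sample sentence, and both function names are assumptions made for illustration, so replace `text` with whatever input field your PMML model declares.

```shell
# Host is assumed to be local; the model id TweetPipe comes from Step 9.
OPENSCORING_URL="${OPENSCORING_URL:-http://localhost:3333/openscoring}"

write_sample_request() {
  # Write a one-record evaluation request to the file named in $1.
  # "text" is a hypothetical input field name, not one taken from the model.
  cat > "$1" <<'EOF'
{"id": "sample-1", "arguments": {"text": "I love this new phone"}}
EOF
}

score_sample() {
  # POST the request file ($1) to the TweetPipe model, as InvokeHTTP would.
  curl -s -X POST -H "Content-Type: application/json" \
       --data-binary "@$1" "$OPENSCORING_URL/model/TweetPipe"
}
```

For example, `write_sample_request /tmp/sample.json && score_sample /tmp/sample.json` prints the scoring response. The URL passed to curl here is the value the "InvokeHTTP" processor needs, with `localhost` replaced by your OpenScoring host.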

Step 11 - In the NiFi flow, enable all of the processors, and validate that the flow is working as expected by querying the TweetSentiment Solr collection in the Solr UI.
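One quick way to confirm that scored Tweets keep arriving is to watch the document count grow. This is a minimal sketch, assuming Solr on its default port 8983 on the local host and a JSON response; `SOLR_URL`, `extract_num_found`, and `count_docs` are names introduced for this example.

```shell
# Assumption: Solr is reachable at localhost:8983 (its default port).
SOLR_URL="${SOLR_URL:-http://localhost:8983/solr}"

extract_num_found() {
  # Pull the numFound value out of a Solr JSON response read from stdin.
  sed -n 's/.*"numFound": *\([0-9][0-9]*\).*/\1/p'
}

count_docs() {
  # Print the number of documents currently in collection $1 (rows=0 skips the documents).
  curl -s "$SOLR_URL/$1/select?q=*:*&rows=0&wt=json" | extract_num_found
}
```

Running `count_docs TweetSentiment` twice, a few seconds apart, should show a rising number while the flow is active.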