HDFS Space Consumption During Rolling Upgrade

Created on 06-16-2016 11:41 PM - edited 08-17-2019 11:59 AM

Summary

HDFS Rolling Upgrade lets administrators upgrade the software of individual components in an HDFS cluster independently. During the upgrade window, HDFS does not physically delete blocks. Normal block deletion resumes after the administrator finalizes the upgrade. A common source of operational problems is forgetting to finalize an upgrade. If left unaddressed, HDFS will eventually run out of storage capacity, and attempts to delete files will not free space. To avoid this problem, always finalize HDFS rolling upgrades in a timely fashion.

This information applies to both Ambari Rolling Upgrade and Ambari Express Upgrade.

Rolling Upgrade Block Handling

The high-level workflow of a rolling upgrade for the administrator is:

- Initiate rolling upgrade.

- Perform software upgrade on individual nodes.

- Run typical workloads and validate new software works.

- If validation is successful, finalize the upgrade.

- If validation discovers a problem, revert to the prior software via one of 2 options:

  - Rollback - Restore prior software and restore cluster data to its pre-upgrade state.

  - Downgrade - Restore prior software, but preserve data changes that occurred during the upgrade window.

The Apache Hadoop documentation on HDFS Rolling Upgrade covers the specific commands in more detail.
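As a rough illustration of the initiate, check, and finalize steps above, the sketch below wraps the `hdfs dfsadmin -rollingUpgrade` subcommands `prepare`, `query`, and `finalize`. It assumes the `hdfs` client is on the PATH and that the caller has HDFS superuser rights. The helper names `rolling_upgrade_cmd` and `run_rolling_upgrade` are hypothetical. Rollback and downgrade involve restarting daemons and are not covered here, and an Ambari-managed cluster would normally drive these steps through Ambari instead.

```python
import subprocess

# Subcommands accepted by `hdfs dfsadmin -rollingUpgrade`.
ACTIONS = ("query", "prepare", "finalize")


def rolling_upgrade_cmd(action):
    """Build the argument list for one `hdfs dfsadmin -rollingUpgrade` call."""
    if action not in ACTIONS:
        raise ValueError(f"unsupported action: {action!r}")
    return ["hdfs", "dfsadmin", "-rollingUpgrade", action]


def run_rolling_upgrade(action):
    """Run the command and return its standard output.

    Assumes an HDFS client is installed and configured for the cluster.
    """
    result = subprocess.run(
        rolling_upgrade_cmd(action),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout

# Example (hypothetical session): print(run_rolling_upgrade("query"))
```

Keeping the command construction separate from execution makes it possible to log or review the exact command before it touches the cluster.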

To satisfy the requirements of Rollback, HDFS will not delete blocks during a rolling upgrade window, which is the time between initiating the rolling upgrade and finalizing it. During this window, DataNodes handle block deletions by moving the blocks to a special directory named "trash" instead of physically deleting them. While the blocks reside in trash, they are not visible to clients performing reads. Thus, the files are logically deleted, but the blocks still consume physical space on the DataNode volumes. If the administrator chooses to roll back, the DataNodes restore these blocks from the trash directory to restore the cluster's data to its pre-upgrade state.

After the upgrade is finalized, normal block deletion processing resumes. Blocks previously saved to trash will be physically deleted. New deletion activity will result in a physical delete, not moving the block to trash. Block deletion is asynchronous, so there may be propagation delays between the user deleting a file and the space being freed as reported by tools like "hdfs dfsadmin -report".
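The space accounting described above can be pictured with a toy model. This is not HDFS code: the class `ToyDataNode` and all of its members are hypothetical, and it deliberately ignores asynchronous deletion and everything else a real rollback does. It only shows why deletes free no space until the upgrade is finalized.

```python
class ToyDataNode:
    """Toy model of one DataNode's block storage (illustration only)."""

    def __init__(self):
        self.finalized = {}  # block id -> size in bytes, visible to clients
        self.trash = {}      # block id -> size in bytes, logically deleted
        self.upgrade_in_progress = False

    def add_block(self, block_id, size):
        self.finalized[block_id] = size

    def delete_block(self, block_id):
        size = self.finalized.pop(block_id)
        if self.upgrade_in_progress:
            # Kept on disk so that a rollback can restore it.
            self.trash[block_id] = size

    def finalize_upgrade(self):
        self.upgrade_in_progress = False
        self.trash.clear()  # space is released only at this point

    def rollback(self):
        self.upgrade_in_progress = False
        self.finalized.update(self.trash)  # logically deleted blocks come back
        self.trash.clear()

    def used_bytes(self):
        return sum(self.finalized.values()) + sum(self.trash.values())


dn = ToyDataNode()
dn.add_block("blk_1", 128)
dn.upgrade_in_progress = True
dn.delete_block("blk_1")
print(dn.used_bytes())  # 128: the delete freed nothing
dn.finalize_upgrade()
print(dn.used_bytes())  # 0: space is freed after finalization
```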

Impact on HDFS Space Utilization

An important consequence of this behavior is that during a rolling upgrade window, HDFS space utilization will rise continuously. Attempting to free space by deleting files will be ineffective, because the blocks will be moved to the trash directory instead of physically deleted.

Please also note that this behavior applies not only to files that existed before the upgrade, but also to new files created during the upgrade window. All deletes are handled by moving the blocks to trash.

An administrator might notice that even after deleting a large number of files, various tools continue to report high space consumption. This includes "hdfs dfsadmin -report", JMX metrics (which are consumed by Apache Ambari), and the NameNode web UI.

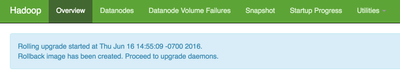

If a cluster shows these symptoms, check whether a rolling upgrade is still waiting to be finalized. There are multiple ways to check this. The "hdfs dfsadmin -rollingUpgrade query" command will report "Proceed with rolling upgrade", and the "Finalize Time" will be shown as not finalized.

    > hdfs dfsadmin -rollingUpgrade query
    QUERY rolling upgrade ...
    Proceed with rolling upgrade:
    Block Pool ID: BP-1273075337-10.22.2.98-1466102062415
    Start Time: Thu Jun 16 14:55:09 PDT 2016 (=1466114109053)
    Finalize Time: <NOT FINALIZED>

The NameNode web UI will display a banner at the top stating "Rolling upgrade started".
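For scripted monitoring, the text printed by "hdfs dfsadmin -rollingUpgrade query" can be scanned for the finalize field. The sketch below assumes the output has the same field labels as the sample in this article, which may differ between Hadoop versions. The function names are hypothetical.

```python
import re

FIELD_PATTERN = re.compile(r"\s*(Block Pool ID|Start Time|Finalize Time):\s*(.*)")


def parse_rolling_upgrade_query(output):
    """Extract the labelled fields from `-rollingUpgrade query` output."""
    fields = {}
    for line in output.splitlines():
        match = FIELD_PATTERN.match(line)
        if match:
            fields[match.group(1)] = match.group(2).strip()
    return fields


def is_unfinalized(output):
    """True if the output describes an upgrade that has not been finalized."""
    fields = parse_rolling_upgrade_query(output)
    return fields.get("Finalize Time") == "<NOT FINALIZED>"
```

If the command reports no rolling upgrade at all, no "Finalize Time" field is found and `is_unfinalized` returns False.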

JMX metrics also expose "RollingUpgradeStatus", which will have a "finalizeTime" of 0 if the upgrade has not been finalized.
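A minimal sketch of that JMX check follows. It assumes the response body is a JSON object with a top-level "beans" array whose NameNodeInfo entry carries "RollingUpgradeStatus"; the article's sample elides the surrounding structure, so treat that wrapper as an assumption. The function names are hypothetical, and the address passed to `fetch_jmx` must be the NameNode HTTP address of your own cluster.

```python
import json
from urllib.request import urlopen

JMX_QUERY = "/jmx?qry=Hadoop:service=NameNode,name=NameNodeInfo"


def rolling_upgrade_status(jmx_text):
    """Return the RollingUpgradeStatus object from a /jmx response, or None."""
    beans = json.loads(jmx_text).get("beans", [])  # assumed wrapper layout
    for bean in beans:
        status = bean.get("RollingUpgradeStatus")
        if status:
            return status
    return None


def upgrade_pending_finalize(jmx_text):
    """True if a rolling upgrade is reported with a finalizeTime of 0."""
    status = rolling_upgrade_status(jmx_text)
    return status is not None and status.get("finalizeTime") == 0


def fetch_jmx(namenode_http_address):
    """Fetch the NameNodeInfo bean, e.g. fetch_jmx("http://10.22.2.98:9870")."""
    with urlopen(namenode_http_address + JMX_QUERY, timeout=10) as response:
        return response.read().decode("utf-8")
```

Separating the HTTP fetch from the parsing keeps the check usable on saved JMX dumps as well as on a live NameNode.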

    > curl 'http://10.22.2.98:9870/jmx?qry=Hadoop:service=NameNode,name=NameNodeInfo'
    ...
    "RollingUpgradeStatus" : {
      "blockPoolId" : "BP-1273075337-10.22.2.98-1466102062415",
      "createdRollbackImages" : true,
      "finalizeTime" : 0,
      "startTime" : 1466114109053
    },
    ...

DataNode Disk Layout

This section explores the layout on disk for DataNodes that have logically deleted blocks during a rolling upgrade window. The following discussion uses a small testing cluster containing only one file.

This shows a typical disk layout on a DataNode volume hosting exactly one block replica:

    data/dfs/data/current
    ├── BP-1273075337-10.22.2.98-1466102062415
    │   ├── current
    │   │   ├── VERSION
    │   │   ├── dfsUsed
    │   │   ├── finalized
    │   │   │   └── subdir0
    │   │   │       └── subdir0
    │   │   │           ├── blk_1073741825
    │   │   │           └── blk_1073741825_1001.meta
    │   │   └── rbw
    │   ├── scanner.cursor
    │   └── tmp
    └── VERSION

The block file and its corresponding metadata file are in the "finalized" directory.

If this file were deleted during a rolling upgrade window, then the block file and its corresponding metadata file would move to the trash directory:

    data/dfs/data/current
    ├── BP-1273075337-10.22.2.98-1466102062415
    │   ├── RollingUpgradeInProgress
    │   ├── current
    │   │   ├── VERSION
    │   │   ├── dfsUsed
    │   │   ├── finalized
    │   │   │   └── subdir0
    │   │   │       └── subdir0
    │   │   └── rbw
    │   ├── scanner.cursor
    │   ├── tmp
    │   └── trash
    │       └── finalized
    │           └── subdir0
    │               └── subdir0
    │                   ├── blk_1073741825
    │                   └── blk_1073741825_1001.meta
    └── VERSION

As a reminder, block deletion activity in HDFS is asynchronous. It may take several minutes after running the "hdfs dfs -rm" command before the block moves from finalized to trash.
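To inspect a DataNode volume for this layout, a short script can look for the "RollingUpgradeInProgress" marker and list the block files sitting under "trash". This is a sketch based only on the directory layout shown above. The function names are hypothetical, and the argument is the volume's "current" directory (for example "data/dfs/data/current").

```python
from pathlib import Path


def pools_with_upgrade_marker(volume_current_dir):
    """Names of block pool directories containing RollingUpgradeInProgress."""
    return sorted(
        pool.name
        for pool in Path(volume_current_dir).glob("BP-*")
        if (pool / "RollingUpgradeInProgress").exists()
    )


def trash_block_files(volume_current_dir):
    """Map each block pool that has a trash directory to its trashed files."""
    found = {}
    for pool in sorted(Path(volume_current_dir).glob("BP-*")):
        trash_dir = pool / "trash"
        if trash_dir.is_dir():
            found[pool.name] = sorted(
                path.name for path in trash_dir.rglob("blk_*") if path.is_file()
            )
    return found
```

Both block files and their ".meta" companions match the "blk_*" pattern, so each logically deleted replica shows up as two entries.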

One way to determine extra space consumption by logically deleted files is to run a "du" command on the trash directory.

    > du -hs data/dfs/data/current/BP-1273075337-10.22.2.98-1466102062415/trash
    8.0K data/dfs/data/current/BP-1273075337-10.22.2.98-1466102062415/trash

Assuming relatively even data distribution across nodes in the cluster, if this shows that a significant proportion of the volume's capacity is consumed by the trash directory, then that is a sign that the unfinalized rolling upgrade is the source of the space consumption.
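The proportion mentioned above can be estimated with a small script. This sketch sums apparent file sizes, so its total can differ slightly from what "du" reports, since "du" counts allocated disk blocks. The function names are hypothetical. When no capacity is given, it uses the total size of the filesystem that holds the trash directory, which is assumed to be the DataNode volume.

```python
import os
import shutil


def directory_bytes(path):
    """Sum the apparent sizes of all regular files under path."""
    total = 0
    for root, _dirs, files in os.walk(path):
        for name in files:
            total += os.path.getsize(os.path.join(root, name))
    return total


def trash_fraction(trash_dir, capacity_bytes=None):
    """Fraction of the volume's capacity occupied by trash_dir."""
    if capacity_bytes is None:
        # Total size of the filesystem containing trash_dir.
        capacity_bytes = shutil.disk_usage(trash_dir).total
    return directory_bytes(trash_dir) / capacity_bytes
```

A result close to zero suggests the space is being consumed elsewhere. A large fraction points at the unfinalized rolling upgrade.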

Conclusion

Finalize those upgrades!

Created on 12-30-2016 02:58 PM

@Chris Nauroth Hi, how soon does the normal block deletion start once the upgrade is finalized?

Created on 12-30-2016 07:02 PM

@Joshua Adeleke , there is a statement in the article that "block deletion activity in HDFS is asynchronous". This statement also applies when finalizing an upgrade. Since this processing happens asynchronously, it's difficult to put an accurate wall clock estimate on it. In my experience, I've generally seen the physical on-disk block deletions start happening 2-5 minutes after finalizing an upgrade.

Created on 12-30-2016 08:20 PM

@Chris Nauroth Then there must be an issue if after 7 hours there is still no reduction in HDFS storage and it still keeps the /trash/finalized folder...