Incremental Fetch in NiFi with QueryDatabaseTable

Created on 08-16-2016 09:56 PM - edited on 02-12-2020 06:33 AM by SumitraMenon

NiFi is most effectively used as an "always-on" system, meaning that data flows are usually left running continuously. Batch processing is more difficult and usually requires some user intervention (such as stopping a source processor).

For relational databases (RDBMS), a common use case is to migrate, replicate, or otherwise move data from the source RDBMS to some target. If ExecuteSQL is used to get all data from a table, for example, the processor executes the query each time it is triggered to run and returns whatever results correspond to the query.

If the goal is simply to move all rows to some target, ExecuteSQL could be started and then immediately stopped, so that it executes only once and all results are sent down the flow.

However, a more common use case is that the source database is being updated by some external process (user, webapp, ERP/CRM/etc. system). To get the new rows, the table needs to be queried again. But because the "old" rows have already been moved, re-running the same query would send many duplicate rows through the flow.

As an alternative, the QueryDatabaseTable processor allows you to specify column(s) in a table that are increasing in value (such as an "ID" or "timestamp" column), and the processor will only retrieve rows from the table whose values in those columns are greater than the maximum value(s) observed so far.

To illustrate, consider the following database table called "users":

| id | name | email | age |
|----|------|-------|-----|
| 1 | Joe | joe@fakemail.com | 42 |
| 2 | Mary | mary@fakemail.com | 24 |
| 3 | Matt | matt@fakemail.com | 38 |

Here, QueryDatabaseTable would be configured to use a table name of "users" and a "Maximum-Value Column" of "id". When the processor runs the first time, it will not have seen any values from the "id" column and thus all rows will be returned. The query executed is:

SELECT * FROM users

However, after that query has completed, QueryDatabaseTable stores the maximum value it has seen for the "id" column; namely, 3.

Now let's say QueryDatabaseTable has been scheduled to run every 5 minutes, and the next time it runs, the table looks like this:

| id | name | email | age |
|----|------|-------|-----|
| 1 | Joe | joe@fakemail.com | 42 |
| 2 | Mary | mary@fakemail.com | 24 |
| 3 | Matt | matt@fakemail.com | 38 |
| 4 | Armando | armando@fakemail.com | 20 |
| 5 | Jim | jeff@fakemail.com | 30 |

Because QueryDatabaseTable had stored the maximum value of 3 for the "id" column, this time when the processor runs it executes the following query:

SELECT * FROM users WHERE id > 3

Now you will see just the last two rows (the recently added ones) are returned, and QueryDatabaseTable has stored the new maximum value of 5.
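The two runs above can be reproduced outside NiFi with a small simulation. This is only a sketch of the idea, not NiFi's implementation: it uses Python's built-in sqlite3 module, and the `fetch_new_rows` helper and the `state` dictionary are hypothetical stand-ins for the processor and its stored state.

```python
import sqlite3


def fetch_new_rows(conn, table, max_col, state):
    """Return rows whose max_col exceeds the stored maximum, then update state.

    Hypothetical helper: `state` is a plain dict standing in for processor state.
    """
    last_max = state.get(max_col)
    if last_max is None:
        # First run: no maximum observed yet, so fetch everything.
        sql, params = f"SELECT * FROM {table}", ()
    else:
        # Later runs: only rows beyond the last observed maximum.
        # (Table and column names cannot be bound as parameters; the value can.)
        sql, params = f"SELECT * FROM {table} WHERE {max_col} > ?", (last_max,)
    cur = conn.execute(sql, params)
    col_names = [d[0] for d in cur.description]
    rows = cur.fetchall()
    if rows:
        idx = col_names.index(max_col)
        state[max_col] = max(row[idx] for row in rows)
    return rows


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, email TEXT, age INTEGER)"
)
conn.executemany(
    "INSERT INTO users VALUES (?, ?, ?, ?)",
    [
        (1, "Joe", "joe@fakemail.com", 42),
        (2, "Mary", "mary@fakemail.com", 24),
        (3, "Matt", "matt@fakemail.com", 38),
    ],
)

state = {}
first_run = fetch_new_rows(conn, "users", "id", state)   # all three rows

conn.executemany(
    "INSERT INTO users VALUES (?, ?, ?, ?)",
    [
        (4, "Armando", "armando@fakemail.com", 20),
        (5, "Jim", "jeff@fakemail.com", 30),
    ],
)
second_run = fetch_new_rows(conn, "users", "id", state)  # only ids 4 and 5
third_run = fetch_new_rows(conn, "users", "id", state)   # nothing new

print(len(first_run), sorted(r[0] for r in second_run), third_run, state)
```

The third call returns no rows because nothing has an "id" above the stored maximum, which mirrors the flow sitting idle until new data arrives.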

Here is the concept applied to a NiFi flow:

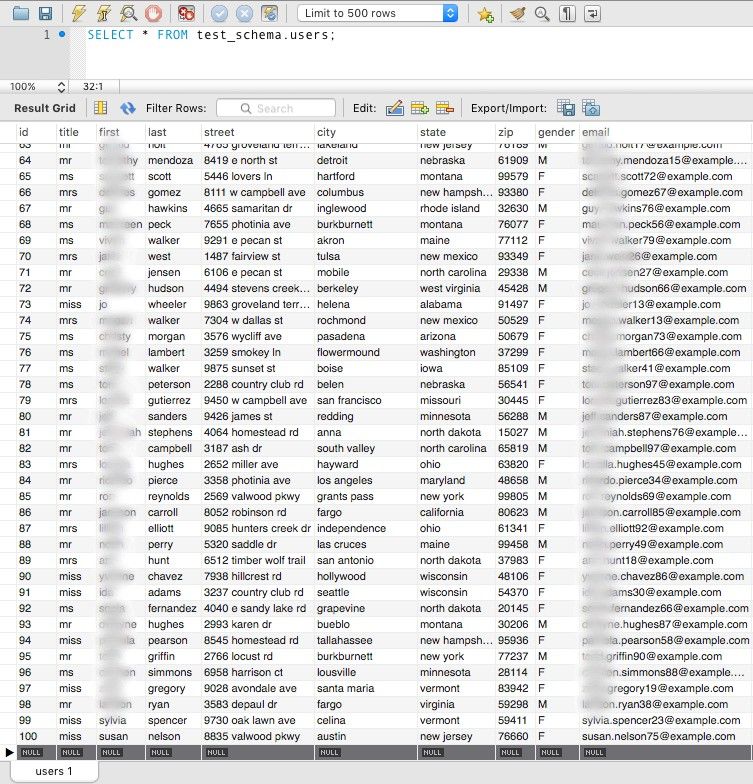

For this example, I have a "users" table containing many attributes about randomly generated users, and there are 100 records to start with.

If we run QueryDatabaseTable, we see that after the SplitAvro we get 100 separate flow files:

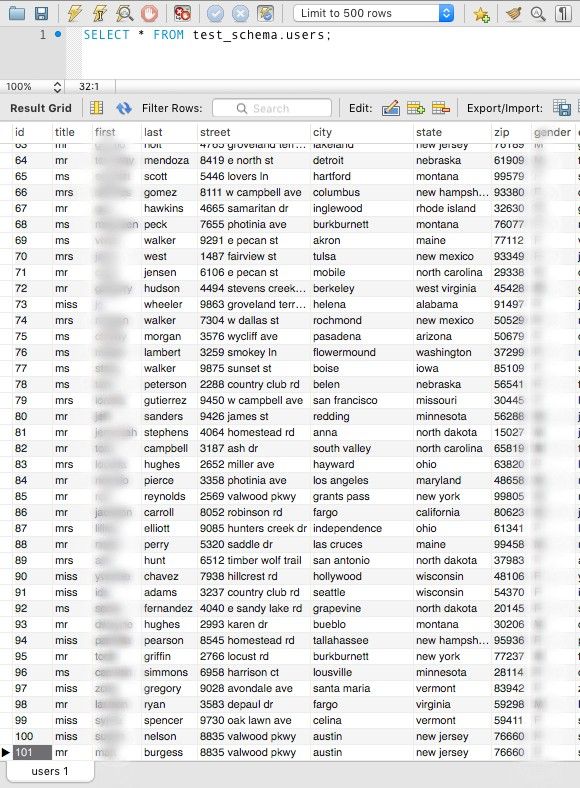

The flow remains this way until new data comes in (with an "id" value larger than 100). Once such a row is added, you can see the additional record move through the flow:

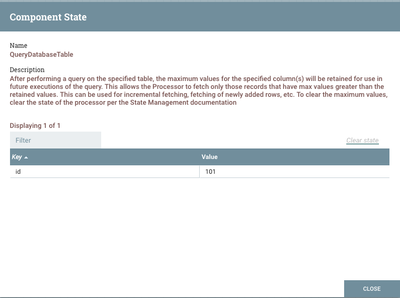

This approach works for any column that has increasing values, such as timestamps. If you want to clear out the maximum value(s) from the processor's state, right-click on the processor and choose View State. Then you can click "Clear State" in the dialog.
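To make the effect of clearing state concrete, here is a minimal sketch using a timestamp column instead of an id. It is an illustration only: the rows are an in-memory list rather than a database, and the `updated_at` column, the `fetch_since` helper, and the `state` dictionary are all hypothetical names.

```python
from datetime import datetime

# Hypothetical rows with an increasing timestamp column.
rows = [
    {"id": 1, "updated_at": datetime(2016, 8, 16, 9, 0)},
    {"id": 2, "updated_at": datetime(2016, 8, 16, 9, 5)},
    {"id": 3, "updated_at": datetime(2016, 8, 16, 9, 10)},
]


def fetch_since(rows, max_col, state):
    """Return rows newer than the stored maximum and remember the new maximum."""
    last_max = state.get(max_col)
    new_rows = [r for r in rows if last_max is None or r[max_col] > last_max]
    if new_rows:
        state[max_col] = max(r[max_col] for r in new_rows)
    return new_rows


state = {}
first = fetch_since(rows, "updated_at", state)   # all rows: nothing seen yet
second = fetch_since(rows, "updated_at", state)  # empty: no newer timestamps

state.clear()                                    # stands in for "Clear State"
third = fetch_since(rows, "updated_at", state)   # all rows are fetched again

print(len(first), len(second), len(third))
```

Once the stored maximum is gone, the next run behaves like the very first one, so anything downstream should be prepared to receive rows it has already seen.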

Hopefully this article has shown how to use QueryDatabaseTable to do incremental fetching of database tables in NiFi. Please let me know how/if this works for you. Cheers!

Created on 08-16-2016 11:01 PM

Very good information, thanks for sharing, Matt. Can't wait to see your next one on GenerateTableFetch :)

Created on 01-20-2017 09:07 PM

This is really helpful. I tried it in NiFi 1.1.1, but it is not showing any value on the View State page. Is that because the implementation changed in version 1.1.1?

When I tried the GenerateTableFetch processor, though, it did show the value.

I configured the table name as below, with Maximum-value Columns set to "nid":

(SELECT nid,node_type_id FROM cnbc_publish.node where type IN ('blogpost', 'cnbcnewsstory', 'partnerstory', 'wirestory', 'pressrelease', 'sponsored') and first_pub_date between 1476489600 and 1479744000 ) nd

Created on 01-20-2017 10:13 PM

Can you please provide the template file for the example above?

Created on 03-15-2017 07:38 AM

Hi Matt,

The above article really helps, but I have a requirement to filter the data. I am looking for an option to add a WHERE clause.

Regards

Bhanu Chidigam

Created on 08-24-2017 07:09 AM

Really helpful! But I have a question:

How do I specify a starting value for the "Maximum-Value Column"? In your example, if I only want to get the data with an "id" value larger than 50, how do I do that?

Created on 08-24-2017 06:25 PM

In QueryDatabaseTable, you'd set the Maximum-Value Column to "id" and add a dynamic property named "initial.maxvalue.id" with a value of 50. Make sure state has been cleared before running; the first time the processor executes, it will grab all rows with id > 50. The same capability is not yet available for GenerateTableFetch (NIFI-4283), but it is coming soon.
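As a rough sketch of how an initial maximum value could interact with stored state: the `build_query` function below is hypothetical, handles numeric bounds only, and assumes that a stored maximum takes precedence over the initial value, which is consistent with the advice to clear state first. It is not NiFi's actual code.

```python
def build_query(table, max_col, state, initial_max_values=None):
    """Build the fetch query for one maximum-value column (numeric bounds only).

    Assumption: a value already stored in `state` wins over the initial value,
    so the initial value only matters when state is empty.
    """
    initial_max_values = initial_max_values or {}
    if max_col in state:
        bound = state[max_col]
    else:
        bound = initial_max_values.get(max_col)
    if bound is None:
        # Nothing stored and no initial value: fetch the whole table.
        return f"SELECT * FROM {table}"
    return f"SELECT * FROM {table} WHERE {max_col} > {bound}"


# State is empty, so the initial value of 50 is used.
print(build_query("users", "id", {}, {"id": 50}))

# State already holds 120, so the initial value is ignored.
print(build_query("users", "id", {"id": 120}, {"id": 50}))
```

Under that assumption, leaving old state in place would silently override the initial value, which is why clearing state matters before the first run.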

Created on 08-25-2017 06:07 AM

Thumbs up!

Created on 10-31-2017 07:39 PM

@Matt Burgess Can we run a join query across different tables here? I looked at the properties for QueryDatabaseTable, and there is no property for entering a query. Any help for the case where the query is a join of two different tables?

Created on 11-01-2017 01:13 PM

This feature was added in NiFi 1.4.0 (NIFI-4257)

Created on 11-22-2017 07:39 AM

Thanks Matt, one more good article from you.

If my NiFi instance goes down or the processor stops for some reason, what will the stored column values be after a restart? Will they be reset, or will the updated values be kept?

I am also eagerly waiting for this processor to handle actual CDC data from the database change log file. I hope that will solve many use cases and may reduce the cost of other CDC-based tools.