org.apache.hadoop.hive.ql.metadata.HiveException disappears when I add a LIMIT clause to the query

Created on 07-20-2016 03:41 PM - edited 08-19-2019 01:37 AM


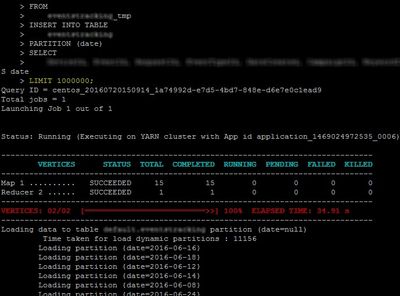

I have a query that inserts records from one table into another, approximately 1.5 million records.

When I run the query, most of the map tasks fail and the job aborts, displaying several exceptions.

There is nothing wrong with the data being inserted or with the table schema, since the failed rows can be inserted successfully in an isolated query. The datanodes and YARN have plenty of space left, and connectivity is not a problem: the cluster is hosted on AWS EC2 and the machines are in the same virtual private cloud.

But here is the strange thing: if I add a LIMIT clause to the original query, the job executes properly! The limit value is large enough to include all the records, so in practice it limits nothing.

Cluster specs: the cluster is small, for testing purposes, and consists of 3 machines: the ambari-server and 2 datanodes/nodemanagers with 8 GB RAM each. YARN memory is 12.5 GB. Remaining HDFS disk space is 40 GB.

Thanks in advance for your time,

Created 07-20-2016 06:14 PM


It looks like your datanodes are dying from too many open files. Check the nofile setting for the "hdfs" user in /etc/security/limits.d/.

If you want to bypass that particular problem by changing the query plan, try:

set hive.optimize.sort.dynamic.partition=true;
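To make the open-files check concrete, here is a minimal sketch that lists the nofile entries configured for a user in the limits directory. The function name `nofile_entries` is made up for illustration, and the sketch assumes the files follow the usual `<domain> <type> <item> <value>` layout of limits.conf; it only reads the configuration and does not show what a running process actually got.

```shell
#!/bin/sh
# Hypothetical helper: print the nofile entries for one user.
# Usage: nofile_entries USER [DIR]   (DIR defaults to /etc/security/limits.d)
nofile_entries() {
    user="$1"
    dir="${2:-/etc/security/limits.d}"
    for f in "$dir"/*.conf; do
        # Skip the unexpanded glob when the directory has no .conf files
        [ -f "$f" ] || continue
        # Fields: <domain> <type> <item> <value>; comment lines are ignored
        awk -v u="$user" -v f="$f" '
            $1 !~ /^#/ && $1 == u && $3 == "nofile" { print f ": " $2 " " $4 }
        ' "$f"
    done
}

# Show what is configured for the "hdfs" user
nofile_entries hdfs
```

If nothing is printed, no per-user nofile entry exists in that directory and the system default applies. On Linux, the limit a running datanode actually has can be read from the "Max open files" line of /proc/<pid>/limits, where <pid> is the datanode's process ID.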
