Archives of Support Questions (Read Only)

How to create a correct spark-yarn-client interpreter on Zeppelin (Spark version 1.5.2, HDP version 2.3.4, Zeppelin version 0.6.0)

- Labels: Apache Zeppelin

Created on 09-06-2016 09:23 AM - edited 08-19-2019 01:44 AM

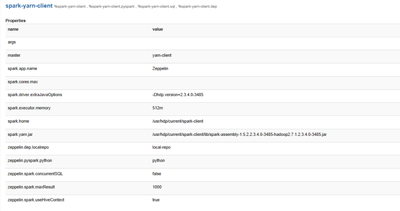

my spark-yarn-client interpreter

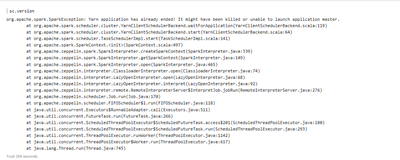

run sc.version

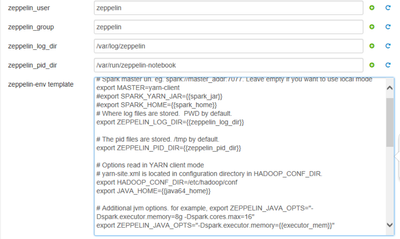

my zeppelin-env

zeppelin-zeppelin-master.easted.out in /var/log/zeppelin

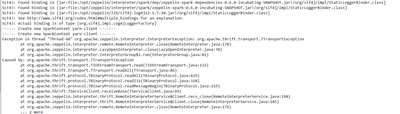

SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/opt/zeppelin/interpreter/spark/dep/zeppelin-spark-dependencies-0.6.0-incubating-SNAPSHOT.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/opt/zeppelin/interpreter/spark/zeppelin-spark-0.6.0-incubating-SNAPSHOT.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/opt/zeppelin/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
------ Create new SparkContext local[*] -------
Java HotSpot(TM) 64-Bit Server VM warning: ignoring option MaxPermSize=512m; support was removed in 8.0
Java HotSpot(TM) 64-Bit Server VM warning: ignoring option MaxPermSize=512m; support was removed in 8.0
SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/opt/zeppelin/interpreter/spark/dep/zeppelin-spark-dependencies-0.6.0-incubating-SNAPSHOT.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/opt/zeppelin/interpreter/spark/zeppelin-spark-0.6.0-incubating-SNAPSHOT.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/opt/zeppelin/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
------ Create new SparkContext yarn-client -------
------ Create new SparkContext yarn-client -------
Exception in thread "Thread-60" org.apache.zeppelin.interpreter.InterpreterException: org.apache.thrift.transport.TTransportException
    at org.apache.zeppelin.interpreter.remote.RemoteInterpreter.close(RemoteInterpreter.java:178)
    at org.apache.zeppelin.interpreter.LazyOpenInterpreter.close(LazyOpenInterpreter.java:78)
    at org.apache.zeppelin.interpreter.InterpreterGroup$1.run(InterpreterGroup.java:81)
Caused by: org.apache.thrift.transport.TTransportException
    at org.apache.thrift.transport.TIOStreamTransport.read(TIOStreamTransport.java:132)
    at org.apache.thrift.transport.TTransport.readAll(TTransport.java:86)
    at org.apache.thrift.protocol.TBinaryProtocol.readAll(TBinaryProtocol.java:429)
    at org.apache.thrift.protocol.TBinaryProtocol.readI32(TBinaryProtocol.java:318)
    at org.apache.thrift.protocol.TBinaryProtocol.readMessageBegin(TBinaryProtocol.java:219)
    at org.apache.thrift.TServiceClient.receiveBase(TServiceClient.java:69)
    at org.apache.zeppelin.interpreter.thrift.RemoteInterpreterService$Client.recv_close(RemoteInterpreterService.java:198)
    at org.apache.zeppelin.interpreter.thrift.RemoteInterpreterService$Client.close(RemoteInterpreterService.java:185)
    at org.apache.zeppelin.interpreter.remote.RemoteInterpreter.close(RemoteInterpreter.java:176)
    ... 2 more
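The log above records each SparkContext the interpreter tried to create, first with `local[*]` and later with `yarn-client`. A minimal sketch for pulling just those master values out of the log is shown here; the helper name `spark_masters` is hypothetical, and the log path is assembled from the file name and directory given in the post.

```shell
#!/bin/sh
# Hypothetical helper: print the master of every "Create new SparkContext"
# entry found in the given log file, one per line, in order of appearance.
spark_masters() {
    grep -o 'Create new SparkContext [^ ]*' "$1" \
        | sed 's/^Create new SparkContext //'
}

# Path built from the post: file name plus /var/log/zeppelin.
LOG=/var/log/zeppelin/zeppelin-zeppelin-master.easted.out

# Only read the log when it exists and is readable on this host.
if [ -r "$LOG" ]; then
    spark_masters "$LOG"
fi
```

Seeing which masters were attempted, and in what order, can help confirm whether the interpreter setting actually reached the Spark interpreter process.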

Created 10-07-2016 02:58 PM

Does it work from the spark-shell?

I would explicitly define SPARK_HOME in the zeppelin_env_content (export SPARK_HOME=/usr/hdp/current/spark-client).

You could also try "yarn-cluster" in the interpreter screen.
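As a rough sketch of the two suggestions above (set SPARK_HOME explicitly, then test from the spark-shell), the lines below could go into the Zeppelin environment settings. The SPARK_HOME path is the one given in this reply. The HADOOP_CONF_DIR value and the `check_spark_home` helper are assumptions added for illustration and may differ on your cluster.

```shell
#!/bin/sh
# SPARK_HOME as suggested in the reply (HDP client symlink).
export SPARK_HOME=/usr/hdp/current/spark-client

# Assumption: location of the Hadoop/YARN client configs on this host.
# Spark on YARN needs HADOOP_CONF_DIR or YARN_CONF_DIR to point at them.
export HADOOP_CONF_DIR=/etc/hadoop/conf

# Hypothetical helper: succeed only if the directory looks like a Spark install.
check_spark_home() {
    [ -x "$1/bin/spark-submit" ]
}

if check_spark_home "$SPARK_HOME"; then
    echo "SPARK_HOME looks usable: $SPARK_HOME"
    # Manual check outside Zeppelin, using the same master as the interpreter.
    echo "Next, try: $SPARK_HOME/bin/spark-shell --master yarn-client"
else
    echo "No executable bin/spark-submit under $SPARK_HOME" >&2
fi
```

If the spark-shell fails in yarn-client mode as well, the problem is likely in the Spark or YARN setup rather than in Zeppelin itself.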

Created 09-12-2016 02:12 AM

I think the problem may be caused by YARN; check the YARN configuration items.

Created 10-07-2016 04:56 PM

Ignore my comment on "yarn-cluster"; that only applies to the livy-server.

Created 10-08-2016 05:36 AM

Thanks for your answer, I have resolved the problem.

Created 10-11-2016 02:38 AM

How did you solve this? I ran into a similar problem.