Archives of Support Questions (Read Only)

livy2 Zeppelin issue

Created 08-15-2018 12:42 PM

Hi all,

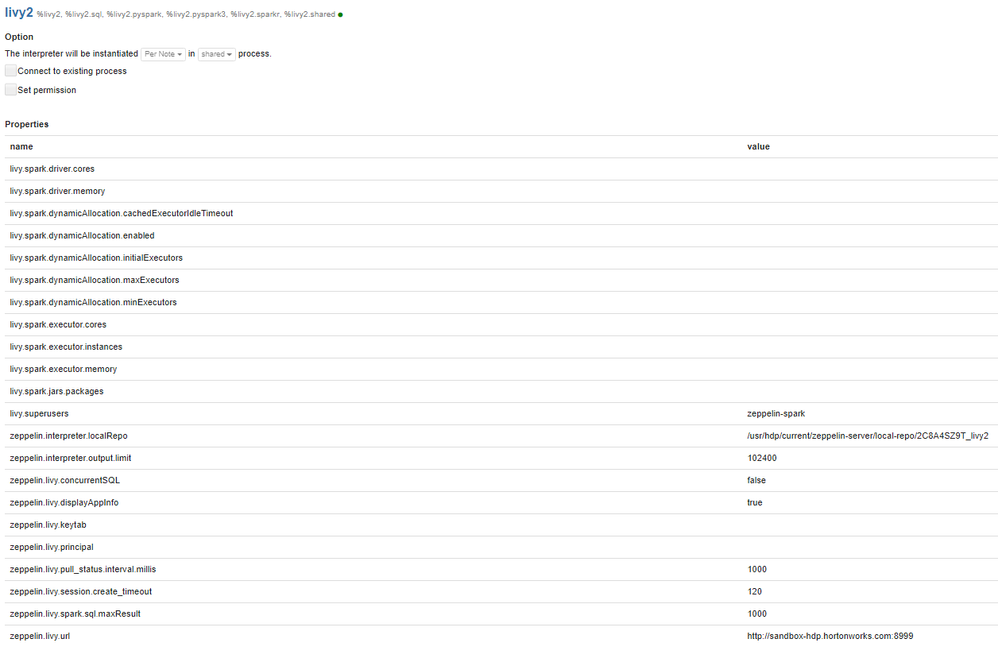

I tried to connect to livy2.spark through a Zeppelin notebook on HDP 2.6.4.

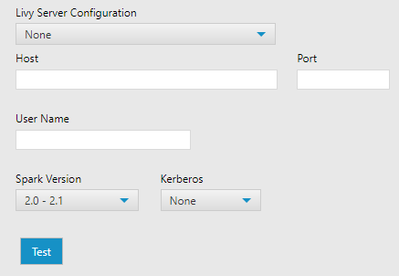

I don't know what the interpreter configuration in Zeppelin should be (please check the attached image).

I couldn't connect to livy2.spark. I got this error:

org.apache.zeppelin.livy.LivyException: The creation of session 4 is timeout within 120 seconds, appId: null, log: [stdout: , stderr: , Warning: Master yarn-cluster is deprecated since 2.0. Please use master "yarn" with specified deploy mode instead., 18/08/15 07:47:28 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable, 18/08/15 07:47:55 WARN DomainSocketFactory: The short-circuit local reads feature cannot be used because libhadoop cannot be loaded., 18/08/15 07:47:56 INFO RMProxy: Connecting to ResourceManager at sandbox-hdp.hortonworks.com/172.18.0.2:8032, YARN Diagnostics: ]
at org.apache.zeppelin.livy.BaseLivyInterpreter.createSession(BaseLivyInterpreter.java:291)
at org.apache.zeppelin.livy.BaseLivyInterpreter.initLivySession(BaseLivyInterpreter.java:184)
at org.apache.zeppelin.livy.LivySharedInterpreter.open(LivySharedInterpreter.java:57)
at org.apache.zeppelin.interpreter.LazyOpenInterpreter.open(LazyOpenInterpreter.java:69)
at org.apache.zeppelin.livy.BaseLivyInterpreter.getLivySharedInterpreter(BaseLivyInterpreter.java:165)
at org.apache.zeppelin.livy.BaseLivyInterpreter.open(BaseLivyInterpreter.java:139)
at org.apache.zeppelin.interpreter.LazyOpenInterpreter.open(LazyOpenInterpreter.java:69)
at org.apache.zeppelin.interpreter.remote.RemoteInterpreterServer$InterpretJob.jobRun(RemoteInterpreterServer.java:493)
at org.apache.zeppelin.scheduler.Job.run(Job.java:175)
at org.apache.zeppelin.scheduler.FIFOScheduler$1.run(FIFOScheduler.java:139)
at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)
at java.util.concurrent.FutureTask.run(FutureTask.java:266)
at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.access$201(ScheduledThreadPoolExecutor.java:180)
at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.run(ScheduledThreadPoolExecutor.java:293)
at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
at java.lang.Thread.run(Thread.java:748)

Created 08-15-2018 10:44 PM

Hello @Maksym Shved!

Looking at your screenshot, I'm assuming you're running these tests in a non-production environment. If so, change the following parameters:

#Ambari > Spark2 > Advanced livy2-conf

livy.spark.master=yarn

livy.impersonation.enabled=false

#Ambari > Spark2 > Advanced livy2-env

export SPARK_HOME=/usr/hdp/current/spark2-client

export SPARK_CONF_DIR=/etc/spark2/conf

export JAVA_HOME={{java_home}}

export HADOOP_CONF_DIR=/etc/hadoop/conf

export LIVY_LOG_DIR={{livy2_log_dir}}

export LIVY_PID_DIR={{livy2_pid_dir}}

export LIVY_SERVER_JAVA_OPTS="-Xmx2g"
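On the `livy.spark.master=yarn` change: the error log in the question warns that `yarn-cluster` is deprecated since Spark 2.0 and asks for master `yarn` with a separate deploy mode. As a rough illustration only, the sketch below maps a legacy master string to that pair. The helper names `normalize_master` and `livy_conf_lines` are made up for this example, and the `livy.spark.deploy-mode` property name is an assumption taken from Livy's configuration template, so check it against your Livy version. Whether you actually want cluster mode is a separate decision; the settings above only set the master.

```python
# Sketch: translate a legacy Spark master string into the
# master / deploy-mode pair that Spark 2.x asks for.
LEGACY_MASTERS = {
    "yarn-cluster": ("yarn", "cluster"),
    "yarn-client": ("yarn", "client"),
}


def normalize_master(master):
    """Return (master, deploy_mode); deploy_mode is None if none is implied."""
    master = master.strip()
    return LEGACY_MASTERS.get(master, (master, None))


def livy_conf_lines(master):
    """Render livy2-conf style lines for the given master string."""
    new_master, deploy_mode = normalize_master(master)
    lines = ["livy.spark.master=" + new_master]
    if deploy_mode is not None:
        # Assumed property name; verify it for your Livy release.
        lines.append("livy.spark.deploy-mode=" + deploy_mode)
    return lines


# Example call with the value from the warning in the question:
# livy_conf_lines("yarn-cluster")
```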

Restart the SPARK2 service and run the test again. If you're still facing issues, go back to Ambari and set the following:

#Ambari > HDFS > Custom Core-Site

hadoop.proxyuser.root.groups=*

hadoop.proxyuser.root.hosts=*
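If it helps to rule Zeppelin out, you could also try creating a Livy session directly through Livy's REST API after the restart. This is only a minimal sketch under some assumptions: the host name is the one from the log in the question, port 8999 is my guess for Livy2 on the sandbox (check `livy.server.port` in livy2-conf), and the function names are made up for this example. The `X-Requested-By` header is only needed when Livy's CSRF protection is enabled, but sending it does no harm.

```python
import json
import time
import urllib.request

# Assumption: Livy2 on the sandbox listens on port 8999.
LIVY_URL = "http://sandbox-hdp.hortonworks.com:8999"

HEADERS = {"Content-Type": "application/json", "X-Requested-By": "livy-check"}

# States at which polling stops: "idle" means the session is ready,
# the others mean it will not become ready.
STOP_POLLING_STATES = {"idle", "error", "dead", "killed", "shutting_down", "success"}


def build_session_request(kind="spark", proxy_user=None):
    """Build the JSON body for POST /sessions."""
    body = {"kind": kind}
    if proxy_user:
        body["proxyUser"] = proxy_user
    return body


def call_livy(method, path, body=None, base_url=LIVY_URL):
    """Send one request to Livy and return the decoded JSON response."""
    data = json.dumps(body).encode("utf-8") if body is not None else None
    req = urllib.request.Request(base_url + path, data=data,
                                 headers=HEADERS, method=method)
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.loads(resp.read().decode("utf-8"))


def wait_for_session(session_id, timeout_s=120, poll_s=5, fetch=call_livy):
    """Poll GET /sessions/{id} until a stop state or the timeout is reached."""
    deadline = time.time() + timeout_s
    state = "unknown"
    while time.time() < deadline:
        state = fetch("GET", "/sessions/%d" % session_id)["state"]
        if state in STOP_POLLING_STATES:
            break
        time.sleep(poll_s)
    return state


# Usage (needs a reachable Livy server):
# session = call_livy("POST", "/sessions", build_session_request())
# print(wait_for_session(session["id"]))
```

If the session stays in `starting` here as well, the problem is most likely on the Livy or YARN side rather than in the Zeppelin interpreter settings.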

Hope this helps.

Created on 08-29-2018 11:56 AM - edited 08-17-2019 07:19 PM

Thanks a lot!

@Vinicius Higa Murakami, I have one small question: how can I connect to the livy2 server?

I need to know some parameters to connect (look at the screenshot), but I don't know where to find them.

I'm working on Sandbox 2.6.5 (the latest version).

Please help me if you can.

Thanks!