What could be the reason that YARN memory usage is very high?

Any suggestions on how to verify this?

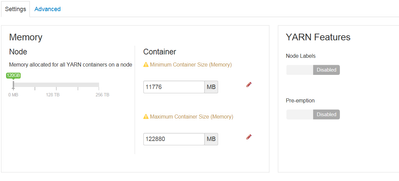

We have these values from Ambari:

- `yarn.scheduler.capacity.root.default.user-limit-factor=1`
- `yarn.scheduler.minimum-allocation-mb=11776`
- `yarn.scheduler.maximum-allocation-mb=122880`
- `yarn.nodemanager.resource.memory-mb=120G`
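One way to check whether `minimum-allocation-mb=11776` inflates usage is to see what a container request turns into. The sketch below assumes that the CapacityScheduler rounds each request up to a multiple of `yarn.scheduler.minimum-allocation-mb`; the helper `normalize_mb` and the request sizes are hypothetical, not taken from the cluster.

```shell
#!/usr/bin/env bash
# Sketch: how a container request may be rounded up by the scheduler.
# Assumption: requests are normalized to a multiple of
# yarn.scheduler.minimum-allocation-mb (value taken from Ambari).
MIN_ALLOC_MB=11776

# Round a requested container size (MB) up to the next multiple of
# MIN_ALLOC_MB, never going below MIN_ALLOC_MB itself.
normalize_mb() {
  local req=$1
  if (( req < MIN_ALLOC_MB )); then
    req=$MIN_ALLOC_MB
  fi
  echo $(( (req + MIN_ALLOC_MB - 1) / MIN_ALLOC_MB * MIN_ALLOC_MB ))
}

# Hypothetical request sizes, for illustration only.
for req in 1024 4096 12288; do
  echo "requested ${req} MB -> allocated $(normalize_mb "$req") MB"
done
```

Under that assumption, a 1024 MB or 4096 MB request would reserve 11776 MB, and a 12288 MB request would jump to 23552 MB. With `yarn.nodemanager.resource.memory-mb` at 120G (120 × 1024 = 122880 MB), YARN would fit at most 10 minimum-size containers per node (122880 / 11776 ≈ 10.4).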

Running applications (`/usr/bin/yarn application -list -appStates RUNNING | grep RUNNING`):

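| Application-Name | Application-Type | User | Queue | State | Final-State | Progress |
|---|---|---|---|---|---|---|
| Thrift JDBC/ODBC Server | SPARK | hive | default | RUNNING | UNDEFINED | 10% |
| Thrift JDBC/ODBC Server | SPARK | hive | default | RUNNING | UNDEFINED | 10% |
| mcMFM | SPARK | hdfs | default | RUNNING | UNDEFINED | 10% |
| mcMassRepo | SPARK | hdfs | default | RUNNING | UNDEFINED | 10% |
| mcMassProfiling | SPARK | hdfs | default | RUNNING | UNDEFINED | |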
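A rough lower bound on what the running applications hold can be computed from the list of running applications. This sketch assumes every YARN application keeps at least one ApplicationMaster container and that no container is smaller than `minimum-allocation-mb`; the helper names `count_apps` and `am_floor_mb` are hypothetical, and application rows are assumed to start with the application ID (`application_...`).

```shell
#!/usr/bin/env bash
# Sketch: lower bound on memory held by running applications.
# Assumptions: each application holds >= 1 ApplicationMaster container,
# and no container is smaller than minimum-allocation-mb.
MIN_ALLOC_MB=11776

# Count application rows in `yarn application -list` output read from stdin.
# Rows are assumed to start with the application ID; header lines do not.
count_apps() {
  grep -c '^[[:space:]]*application_'
}

# Minimum memory (MB) reserved by the ApplicationMaster containers alone.
am_floor_mb() {
  echo $(( $1 * MIN_ALLOC_MB ))
}

running=$(/usr/bin/yarn application -list -appStates RUNNING 2>/dev/null | count_apps)
echo "running apps: ${running}, AM containers alone: >= $(am_floor_mb "$running") MB"
```

For the five applications listed, that would be at least 5 × 11776 = 58880 MB (about 57.5 GB) before any Spark executor is counted, which is worth comparing with the 31 GB that `free -g` shows for this host.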

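Memory on the host (`free -g`, values in GiB):

| | total | used | free | shared | buff/cache | available |
|---|---|---|---|---|---|---|
| Mem: | 31 | 24 | 0 | 1 | 6 | 5 |
| Swap: | 7 | 0 | 7 | | | |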
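The `free -g` total of roughly 31 GB can be compared directly with `yarn.nodemanager.resource.memory-mb`. The usual expectation is that this setting stays below the node's physical RAM, leaving headroom for the OS and other daemons. The sketch below assumes a Linux host (it reads `/proc/meminfo`) and that "120G" in Ambari means 122880 MB; `check_nm_memory` and `NM_MEMORY_MB` are hypothetical names.

```shell
#!/usr/bin/env bash
# Sketch: compare what the NodeManager advertises to YARN with the host's RAM.
# Assumption: 120G in Ambari corresponds to 120 * 1024 = 122880 MB.
NM_MEMORY_MB=${NM_MEMORY_MB:-122880}

# MemTotal in /proc/meminfo is in kB; print it in MB (Linux only).
physical_mb() {
  awk '/^MemTotal:/ { printf "%d\n", $2 / 1024 }' /proc/meminfo
}

# Warn when YARN may schedule more memory than physically exists on the node.
check_nm_memory() {
  local nm_mb=$1 phys_mb=${2:-0}
  if (( nm_mb > phys_mb )); then
    echo "WARNING: NodeManager advertises ${nm_mb} MB, host has ${phys_mb} MB"
    return 1
  fi
  echo "OK: NodeManager advertises ${nm_mb} MB of ${phys_mb} MB"
}

check_nm_memory "$NM_MEMORY_MB" "$(physical_mb)"
```

On a host with about 31 GB of RAM, 122880 MB is roughly four times what is physically present, so the warning branch would fire.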

Michael-Bronson