Cloudera Community: Support Questions


All Nodes are disconnected from NiFi Cluster

Labels: Apache NiFi

Created 07-05-2018 08:12 AM


Hi,

I have a 3-node NiFi cluster in the production environment. We have observed that all the nodes are disconnected from the NiFi cluster, even though every node is still running.

The following error is logged:

2018-07-05 00:30:19,650 ERROR [Site-to-Site Worker Thread-90476] o.a.nifi.remote.SocketRemoteSiteListener Unable to communicate with remote instance Peer[url=<>] (SocketFlowFileServerProtocol[CommsID=9f090b3e-f5cd-4dae-b893-ef3ba983afef]) due to org.apache.nifi.cluster.exception.NoClusterCoordinatorException: No node has yet been elected Cluster Coordinator. Cannot establish connection to cluster yet.; closing connection

Thanks

Dilip

Created 08-13-2019 12:44 PM


Did you manage to learn more about what might cause this? We're seeing the same thing.

Created 08-13-2019 03:29 PM


The above question and the associated comments were originally posted in the Community Help track. On Tue Aug 13 15:27 UTC 2019, a member of the HCC moderation staff moved it to the Data Ingestion & Streaming track. The Community Help Track is intended for questions about using the HCC site itself.


Created on 08-14-2019 09:04 AM - edited 08-18-2019 01:02 AM


The NiFi Cluster Coordinator relies on ZooKeeper for its election. NiFi employs a Zero-Master Clustering paradigm: each node in the cluster performs the same tasks on the data, but each operates on a different set of data. One of the nodes is automatically elected (via Apache ZooKeeper) as the Cluster Coordinator.
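To make the election part a bit more concrete, here is a rough in-memory simulation of the generic ZooKeeper leader-election recipe, not NiFi's actual code (NiFi's real implementation differs in detail). Each candidate creates an ephemeral sequential znode, the lowest sequence number wins, and when the leader's session dies its znode disappears so the next-lowest candidate takes over. `ElectionZnodes` and the node names are hypothetical.

```python
import itertools
from typing import Dict, Optional


class ElectionZnodes:
    """In-memory stand-in for a ZooKeeper election path (hypothetical, illustration only)."""

    def __init__(self) -> None:
        self._counter = itertools.count()
        self._znodes: Dict[str, str] = {}  # znode name -> node that created it

    def join(self, node: str) -> str:
        # Mimics creating an EPHEMERAL_SEQUENTIAL znode: ZooKeeper appends a
        # zero-padded, 10-digit, ever-increasing counter to the requested name.
        name = f"candidate-{next(self._counter):010d}"
        self._znodes[name] = node
        return name

    def session_expired(self, node: str) -> None:
        # Ephemeral znodes are deleted when their owner's session ends.
        self._znodes = {z: n for z, n in self._znodes.items() if n != node}

    def leader(self) -> Optional[str]:
        # The candidate holding the lowest sequence number is the leader.
        if not self._znodes:
            return None
        return self._znodes[min(self._znodes)]


zk = ElectionZnodes()
for node in ("nifi-1", "nifi-2", "nifi-3"):
    zk.join(node)
print(zk.leader())  # nifi-1
zk.session_expired("nifi-1")
print(zk.leader())  # nifi-2
```

The takeaway for this thread: if ZooKeeper itself is unreachable, no candidate can register at all, so nobody is ever elected, which matches the "No node has yet been elected Cluster Coordinator" error above.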

All nodes in the cluster will then send heartbeat/status information to this node, and this node is responsible for disconnecting nodes that do not report any heartbeat status for some amount of time. Additionally, when a new node elects to join the cluster, the new node must first connect to the currently-elected Cluster Coordinator in order to obtain the most up-to-date flow. If the Cluster Coordinator determines that the node is allowed to join (based on its configured Firewall file), the current flow is provided to that node, and that node is able to join the cluster, assuming that the node’s copy of the flow matches the copy provided by the Cluster Coordinator. If the node’s version of the flow configuration differs from that of the Cluster Coordinator’s, the node will not join the cluster.
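The heartbeat bookkeeping described above can be pictured with a toy model like the one below. It is only a sketch: `HeartbeatMonitor` and the 40-second timeout are made up for illustration and are not NiFi's classes or defaults. The coordinator records when each node last reported and, on each sweep, disconnects nodes that have been silent longer than the timeout; a disconnected node stays disconnected here, since in a real cluster it has to go through the join handshake again.

```python
from typing import Dict, List


class HeartbeatMonitor:
    """Toy model of a coordinator that disconnects silent nodes (hypothetical)."""

    def __init__(self, timeout_s: float) -> None:
        self.timeout_s = timeout_s
        self.last_seen: Dict[str, float] = {}
        self.connected: Dict[str, bool] = {}

    def heartbeat(self, node: str, now: float) -> None:
        # Record the time of the latest heartbeat. New nodes start connected;
        # a node that was already disconnected is NOT reconnected by a heartbeat.
        self.last_seen[node] = now
        self.connected.setdefault(node, True)

    def sweep(self, now: float) -> List[str]:
        """Disconnect every connected node whose last heartbeat is older than the timeout."""
        dropped = []
        for node, seen in self.last_seen.items():
            if self.connected[node] and now - seen > self.timeout_s:
                self.connected[node] = False
                dropped.append(node)
        return dropped


mon = HeartbeatMonitor(timeout_s=40.0)
mon.heartbeat("nifi-1", now=0.0)
mon.heartbeat("nifi-2", now=0.0)
mon.heartbeat("nifi-1", now=30.0)
print(mon.sweep(now=50.0))  # ['nifi-2']
```

Timestamps are passed in explicitly to keep the example deterministic. Note that this whole mechanism only exists once a coordinator has been elected; without one there is nothing receiving heartbeats or admitting nodes.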

Checklist

Ensure you have a 3-node ZooKeeper ensemble managing your NiFi cluster, and that all three ZooKeeper servers are up and running.
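A quick way to check the ensemble is ZooKeeper's `ruok` four-letter command, which a healthy server answers with `imok`. The script below is a minimal sketch that assumes a plain `host:port,host:port` connect string, like the value of `nifi.zookeeper.connect.string` in `nifi.properties`; the function names are my own. It also checks for a quorum: an ensemble of n servers needs a strict majority (n // 2 + 1) up, so a 3-server ensemble survives one failure. On newer ZooKeeper releases, `ruok` only answers if it is listed in `4lw.commands.whitelist`.

```python
import socket
from typing import List, Tuple


def parse_connect_string(connect: str) -> List[Tuple[str, int]]:
    """Split 'host1:2181,host2:2181' into (host, port) pairs (every entry must have a port)."""
    servers = []
    for entry in connect.split(","):
        host, _, port = entry.strip().rpartition(":")
        servers.append((host, int(port)))
    return servers


def zk_is_ok(host: str, port: int, timeout_s: float = 3.0) -> bool:
    """Send the 'ruok' four-letter word; a healthy server replies 'imok'."""
    try:
        with socket.create_connection((host, port), timeout=timeout_s) as sock:
            sock.sendall(b"ruok")
            return sock.recv(16) == b"imok"
    except OSError:
        # Refused, timed out, or unresolvable host: treat as not OK.
        return False


def has_quorum(connect: str) -> bool:
    """True if a strict majority of the servers in the connect string answer 'imok'."""
    servers = parse_connect_string(connect)
    up = sum(zk_is_ok(host, port) for host, port in servers)
    return up >= len(servers) // 2 + 1
```

Usage would be something like `has_quorum("zk1:2181,zk2:2181,zk3:2181")`, where the host names are placeholders for your own servers. Run it from each NiFi node, since what matters is whether the NiFi hosts themselves can reach ZooKeeper.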

Walkthrough

- Stop all the NiFi instances

- Ensure the 3 ZooKeeper servers are up and running

- Start the NiFi nodes one at a time

- Validate that one NiFi node has been elected Cluster Coordinator and is the Primary Node
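Besides the UI, the last step can be checked from a script. The sketch below assumes an unsecured cluster and calls the NiFi REST endpoint `/nifi-api/controller/cluster`; the response fields it reads (`cluster.nodes[].address`, `status`, `roles`, with role names "Cluster Coordinator" and "Primary Node") are as I recall them, so please verify against the REST API docs for your NiFi version. A secured cluster needs authentication, and the endpoint may well return an error while no coordinator is elected.

```python
import json
import urllib.request
from typing import Dict, List, Optional


def fetch_cluster(base_url: str) -> dict:
    """GET /nifi-api/controller/cluster from any node (unsecured HTTP assumed)."""
    url = f"{base_url}/nifi-api/controller/cluster"
    with urllib.request.urlopen(url, timeout=10) as resp:
        return json.load(resp)


def summarize(cluster_json: dict) -> Dict[str, object]:
    """Report which node holds each role and which nodes are not CONNECTED."""
    nodes: List[dict] = cluster_json["cluster"]["nodes"]

    def holder(role: str) -> Optional[str]:
        for node in nodes:
            if role in node.get("roles", []):
                return node["address"]
        return None

    return {
        "coordinator": holder("Cluster Coordinator"),
        "primary": holder("Primary Node"),
        "disconnected": [n["address"] for n in nodes if n.get("status") != "CONNECTED"],
    }
```

For example, `summarize(fetch_cluster("http://nifi-1:8080"))` with your own host and port. A healthy result names a coordinator and a primary node and has an empty `disconnected` list.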

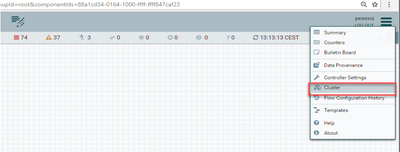

See screenshots

Coordinator elected

HTH

Created 08-14-2019 12:26 PM


I should have mentioned that this cluster was using the embedded ZooKeeper option for the coordinator election. I found the solution in an old mailing-list thread from 2016 [1], which suggested starting all the nodes at the same time rather than one after another. The reason is that when you start a single node, it goes into a connection frenzy while failing to contact the other nodes, quickly hits the connection limit, and leaves lots of dangling connections in the CLOSE_WAIT state.
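If you want to confirm you are in the same situation, you can count the CLOSE_WAIT sockets on the ZooKeeper client port. This is a Linux-only sketch that parses `/proc/net/tcp` (IPv4 only; IPv6 sockets are in `/proc/net/tcp6`), where the kernel reports ports in hex and CLOSE_WAIT as state code `08`. The function name is my own, and I am assuming the limit being hit is ZooKeeper's per-client-IP `maxClientCnxns` setting (default 60); the thread above does not say which limit it was. `ss -tan state close-wait` gives the same information interactively.

```python
from typing import Iterable

CLOSE_WAIT = "08"  # TCP state code the Linux kernel uses in /proc/net/tcp


def count_close_wait(lines: Iterable[str], port: int) -> int:
    """Count CLOSE_WAIT sockets whose local or remote port equals `port`."""
    count = 0
    for line in lines:
        fields = line.split()
        # Skip the header row ("sl local_address rem_address st ...") and blank lines.
        if len(fields) < 4 or not fields[0].endswith(":"):
            continue
        # Addresses look like "0100007F:0885": hex IP, then hex port.
        local_port = int(fields[1].rsplit(":", 1)[1], 16)
        remote_port = int(fields[2].rsplit(":", 1)[1], 16)
        if fields[3] == CLOSE_WAIT and port in (local_port, remote_port):
            count += 1
    return count
```

On a NiFi host you would run something like `count_close_wait(open("/proc/net/tcp"), 2181)`, assuming the embedded ZooKeeper listens on the conventional client port 2181; a count that keeps growing while a lone node is starting up is the symptom described above.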

[1] http://apache-nifi.1125220.n5.nabble.com/Zookeeper-error-td13355.html