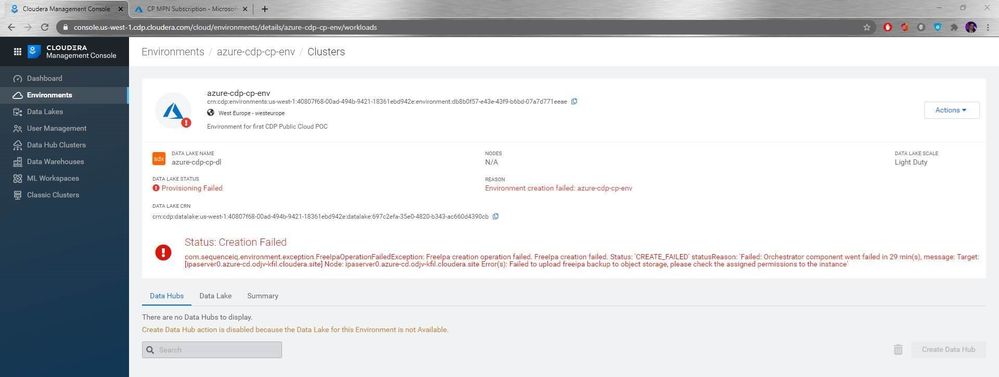

CDP on Azure: Creation failed (FreeIPA creation operation failed)

- Labels: Cloudera Data Platform (CDP)

Created 09-29-2020 12:28 AM

Hi,

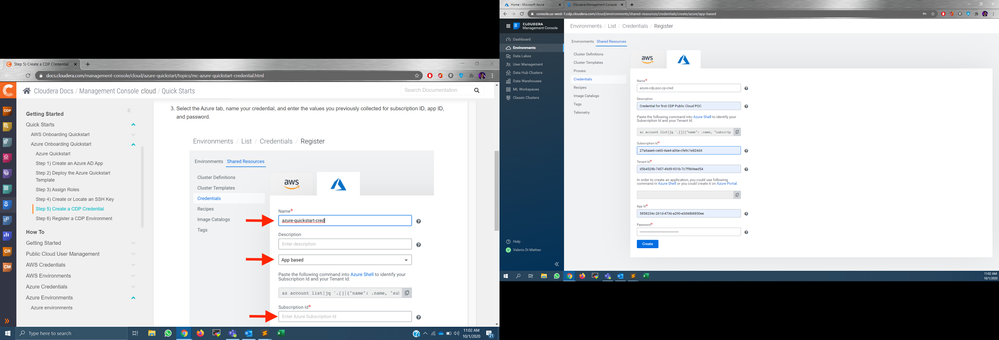

I'm trying to register an environment in CDP on Azure, following this guide: https://docs.cloudera.com/management-console/cloud/azure-quickstart/topics/mc-azure-quickstart.html

All the steps work well, except the registration itself, which gives a 'Creation failed' error.

Even worse, the Environment and Data Lake cannot be deleted. The deletion itself fails because of some sort of deadlock between the two, as they were not provisioned correctly.

Any advice?

PS: It's worth noting that the guide comes with a video too (https://www.cloudera.com/campaign/videos/cdp-setup.html?videoId=6150076931001), in which a role is created in Azure by copy-pasting a JSON provided by the CDP UI. This JSON is no longer in the guide or in the UI itself, so I guess it's OK?

Thanks and best regards,

Valerio

Created 10-06-2020 06:44 AM

Absolutely, we have a partner team that can work with you.

More info here: https://www.cloudera.com/partners/cloudera-connect-partner-program.html

Created 10-13-2020 05:39 AM

Dear Paul,

after checking more documentation and guides online, I can't help but notice that the only thing that seems to be missing is the creation of a 'custom role' to be assigned to the app. Is this the 'mapping' you were referring to?

At this link (https://www.cloudera.com/campaign/videos/cdp-setup.html?videoId=6150076931001), in the Azure video, you can see a JSON at minute 5:00, and at minute 6:55 this JSON is used to create and assign the custom role.

However, the guide online does not show this step, even though the JSON is visible in a screenshot (https://docs.cloudera.com/management-console/cloud/azure-quickstart/topics/mc-azure-quickstart-crede...).

As you can see in my own screenshot, then, the JSON in the current UI is not even visible.

I can't seem to find any other missing step.

I had to delete the cluster in the meantime, as it was costing around 80 dollars of resources every day, so I was hoping to get more 'leads' on the error before I try again...

Thanks and best regards,

Valerio

Created 10-13-2020 02:25 PM

Hi Valerio,

First, regarding the app role, I think the quick start doc page is out of date (I reported this to our doc team).

You do not need to create a custom role, as long as you create your credential app like this (replace subscriptionId with your ID):

az ad sp create-for-rbac \
--name http://your-cloudbreak-app \
--role Contributor \
--scopes /subscriptions/{subscriptionId}

Secondly, did you run step 3 completely?

Specifically, once the quickstart deployment has finished, make sure to run this in an Azure Bash shell (replace YOUR_SUBSCRIPTION_ID and YOUR_RG with the values used in the quickstart):

export SUBSCRIPTIONID="YOUR_SUBSCRIPTION_ID"

export RESOURCEGROUPNAME="YOUR_RG"

export STORAGEACCOUNTNAME=$(az storage account list -g $RESOURCEGROUPNAME|jq '.[]|.name'| tr -d '"')

export ASSUMER_OBJECTID=$(az identity list -g $RESOURCEGROUPNAME|jq '.[]|{"name":.name,"principalId":.principalId}|select(.name | test("AssumerIdentity"))|.principalId'| tr -d '"')

export DATAACCESS_OBJECTID=$(az identity list -g $RESOURCEGROUPNAME|jq '.[]|{"name":.name,"principalId":.principalId}|select(.name | test("DataAccessIdentity"))|.principalId'| tr -d '"')

export LOGGER_OBJECTID=$(az identity list -g $RESOURCEGROUPNAME|jq '.[]|{"name":.name,"principalId":.principalId}|select(.name | test("LoggerIdentity"))|.principalId'| tr -d '"')

export RANGER_OBJECTID=$(az identity list -g $RESOURCEGROUPNAME|jq '.[]|{"name":.name,"principalId":.principalId}|select(.name | test("RangerIdentity"))|.principalId'| tr -d '"')

# Assign Managed Identity Operator role to the assumerIdentity principal at subscription scope

az role assignment create --assignee $ASSUMER_OBJECTID --role 'f1a07417-d97a-45cb-824c-7a7467783830' --scope "/subscriptions/$SUBSCRIPTIONID"

# Assign Virtual Machine Contributor role to the assumerIdentity principal at subscription scope

az role assignment create --assignee $ASSUMER_OBJECTID --role '9980e02c-c2be-4d73-94e8-173b1dc7cf3c' --scope "/subscriptions/$SUBSCRIPTIONID"

# Assign Storage Blob Data Contributor role to the loggerIdentity principal at logs filesystem scope

az role assignment create --assignee $LOGGER_OBJECTID --role 'ba92f5b4-2d11-453d-a403-e96b0029c9fe' --scope "/subscriptions/$SUBSCRIPTIONID/resourceGroups/$RESOURCEGROUPNAME/providers/Microsoft.Storage/storageAccounts/$STORAGEACCOUNTNAME/blobServices/default/containers/logs"

# Assign Storage Blob Data Owner role to the dataAccessIdentity principal at logs/data filesystem scope

az role assignment create --assignee $DATAACCESS_OBJECTID --role 'b7e6dc6d-f1e8-4753-8033-0f276bb0955b' --scope "/subscriptions/$SUBSCRIPTIONID/resourceGroups/$RESOURCEGROUPNAME/providers/Microsoft.Storage/storageAccounts/$STORAGEACCOUNTNAME/blobServices/default/containers/data"

az role assignment create --assignee $DATAACCESS_OBJECTID --role 'b7e6dc6d-f1e8-4753-8033-0f276bb0955b' --scope "/subscriptions/$SUBSCRIPTIONID/resourceGroups/$RESOURCEGROUPNAME/providers/Microsoft.Storage/storageAccounts/$STORAGEACCOUNTNAME/blobServices/default/containers/logs"

# Assign Storage Blob Data Contributor role to the rangerIdentity principal at data filesystem scope

az role assignment create --assignee $RANGER_OBJECTID --role 'ba92f5b4-2d11-453d-a403-e96b0029c9fe' --scope "/subscriptions/$SUBSCRIPTIONID/resourceGroups/$RESOURCEGROUPNAME/providers/Microsoft.Storage/storageAccounts/$STORAGEACCOUNTNAME/blobServices/default/containers/data"

Let me know if that works out for you.
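
One thing that can go wrong silently with the script above is a jq filter that matches no identity, which leaves the corresponding variable empty. The following is a minimal sketch of a pre-flight check, not part of the quickstart script: `require_value` and `container_scope` are hypothetical helper names introduced here for illustration, and the scope layout is copied from the role assignment commands above.

```shell
# Hypothetical helpers for a pre-flight check; not part of the quickstart script.

# Fail if a value is empty, for example because a jq filter matched no identity.
# $1 = variable name (used only in the message), $2 = the value to check
require_value() {
  if [ -z "$2" ]; then
    echo "missing value for $1" >&2
    return 1
  fi
}

# Build the scope string of a blob container, in the same layout as the
# --scope arguments of the role assignment commands.
# $1 = subscription ID, $2 = resource group, $3 = storage account, $4 = container
container_scope() {
  printf '/subscriptions/%s/resourceGroups/%s/providers/Microsoft.Storage/storageAccounts/%s/blobServices/default/containers/%s\n' \
    "$1" "$2" "$3" "$4"
}
```

A possible use is to call `require_value ASSUMER_OBJECTID "$ASSUMER_OBJECTID" || exit 1` (and likewise for the other variables) before the first `az role assignment create`, so that an empty object ID stops the run instead of producing a failed or partial assignment.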

Created 10-14-2020 01:24 AM

Dear Paul,

thanks for the answer.

Yes, I did this step as you can see in screenshot 59 of the previous attachment.

HOWEVER, now that you gave me your version, I notice the following:

In the video (https://www.cloudera.com/campaign/videos/cdp-setup.html?videoId=6150076931001) as well as in the file that the guide makes me download (https://raw.githubusercontent.com/odeshmane/cdp-azure-tools/master/azure_msi_role_assign.sh), the variable values are wrapped in double quotes, and the object IDs are just the strings copied from the Azure UI. So, I used this format:

export SUBSCRIPTIONID="27a6aae6-ce60-4ae4-a06e-cfe9c1e824d4"

export RESOURCEGROUPNAME="cdp-poc"

export STORAGEACCOUNTNAME="cdppoccp"

export ASSUMER_OBJECTID="45fef2ef-c25b-45d9-baab-049249d252b1"

export DATAACCESS_OBJECTID="1df6f883-dde7-4c28-8961-cd37a2125273"

export LOGGER_OBJECTID="b86d8a21-fed0-4cf4-a723-936baa3736f4"

export RANGER_OBJECTID="edbe4750-e2a5-4932-9edd-6f9477429838"

Looking at your version, you build these programmatically, so I went to look again, and the example in the very same guide actually differs from both the video and the template itself. It has this format, with no quotes, and the object IDs have a prefix made of Storage Account name + Identity ID.

export SUBSCRIPTIONID=jfs85ls8-sik8-8329-fq0m-jqo7v06dk5sy

export RESOURCEGROUPNAME=azure-quickstart-test1

export STORAGEACCOUNTNAME=cdpazureqs

export ASSUMER_OBJECTID=cdpazureqs-Assumer-jd85mvh9-u86n-8j2d-54dg-jd72j5ki1sd2

export DATAACCESS_OBJECTID=cdpazureqs-DataAccess-peyc86sk346c-yj12-ys89-ye5m-zt6wlv95fi23

export LOGGER_OBJECTID=cdpazureqs-Logger-f63ucn04-hf52-rq87-b6gd-v86fds9ptk3g

export RANGER_OBJECTID=cdpazureqs-Ranger-gc86d0uq-l6o4-vx67-qh87-1jf74l0cbeq7

Might this difference be the cause of the issue? Which one is the right version?
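
For comparing the two formats, it may help to note that in the shell `export X="abc"` and `export X=abc` set the same value when the value has no spaces, so the quotes alone should not matter. The `principalId` values that `az identity list` returns are GUIDs, which is the shape of the values copied from the Azure UI. Whether the prefixed values in the guide are just obfuscated placeholders is an assumption, not something confirmed in this thread. The sketch below uses a hypothetical helper, `is_guid`, to check that a value has the 8-4-4-4-12 hexadecimal shape before it is passed to `--assignee`.

```shell
# Hypothetical helper: succeed only if $1 has the 8-4-4-4-12 hexadecimal GUID shape.
is_guid() {
  printf '%s\n' "$1" |
    grep -Eq '^[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{12}$'
}

# Example use (the variable comes from the exports above):
#   is_guid "$ASSUMER_OBJECTID" || echo "ASSUMER_OBJECTID does not look like a GUID" >&2
```

This only checks the shape of the string. It does not confirm that the ID belongs to the intended managed identity.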

PS: As a matter of fact, if I go to my cloudbreak app Manifest right now I see this...

"appRoles": []

Valerio

Created 10-14-2020 05:36 AM

Again, you have two issues here:

1. Making sure that your app has contributor role

2. Making sure that the identities you created with the quick start template have the right permissions

If you follow the instructions I gave you (create the proper app + run the script), it should work.
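
Both points can be checked from the Azure CLI before starting another deployment. Note that the `appRoles` array in an app manifest lists roles the application itself defines. It is separate from Azure RBAC role assignments, so an empty `appRoles` would not by itself indicate a missing Contributor role. The following is a minimal sketch under stated assumptions: `has_role` and `list_roles` are hypothetical helper names, and `APP_ID` stands for the `appId` printed by `az ad sp create-for-rbac`.

```shell
# Hypothetical helper: read role names on stdin (one per line) and succeed
# if one of them is exactly $1.
has_role() {
  grep -Fxq -- "$1"
}

# Sketch: print the role names assigned to a principal at subscription scope.
# $1 = appId or object ID, $2 = subscription ID. Requires a logged-in az CLI.
list_roles() {
  az role assignment list \
    --assignee "$1" \
    --scope "/subscriptions/$2" \
    --query '[].roleDefinitionName' \
    --output tsv
}

# Example use (APP_ID is an assumed variable holding the app's appId):
#   list_roles "$APP_ID" "$SUBSCRIPTIONID" | has_role Contributor && echo "Contributor is assigned"
```

For the managed identities, whose storage roles are assigned at container scope, running `az role assignment list --assignee <objectId> --all` instead should list assignments at every scope in the subscription.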

Created 10-14-2020 05:52 AM

Hi Paul,

I've used the same commands that you shared.

Is there any way I can check whether the Contributor role is properly assigned before I go on and start the cluster yet again? That empty 'appRoles' property in the manifest JSON makes me doubt it, and it is empty even after creating a brand new app and running your script again.

Best regards,

Valerio

Created 10-14-2020 10:21 AM

of creation.

Did you manage to get involved with our partner program?

We would like to help you out by sharing screen rather than finding the needle in the haystack here 🙂

Created 10-14-2020 09:53 PM

Hi Paul,

I agree, a call with a shared screen would be better.

So yes, I'm already in the Partner Program and I also got the Partner Development License in order to do this very same exercise with CDP.

However I can't access the cases in the Support Portal (I get a 'restricted access' message).

If I look at the Partner Development Subscription I see that Support is included with Gold and Platinum, but we are Silver... Maybe it depends on this?

I contacted our Partner Sales Manager to see if he can help out.

Best regards,

Valerio
