Support Questions

- Cloudera Community

- Support

- Support Questions

- Convert json to multiple insert sql format

- Subscribe to RSS Feed

- Mark Question as New

- Mark Question as Read

- Float this Question for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Subscribe to RSS Feed

- Mark Question as New

- Mark Question as Read

- Float this Question for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

Convert json to multiple insert sql format

- Labels:

-

Apache NiFi

Created 03-19-2023 04:22 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi All,

Greetings!

Assume that I have a data flow, and the final result is inserting to the SQL database (Microsoft), but the step before! I have the following JSON format for each flow file content as following:

{

"sbrActivityType" : {

"1" : "ACT+A",

"3" : "ACT+A",

"2" : "ACT+X",

"5" : "ACT+X",

"4" : "ACT+X"

},

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

}

Then, my desired output format look like the following:

INSERT INTO sbrTestNifiData (sbrActivityType, sbrDateTime) VALUES (?, ?)

So, it does not insert correctly to my database table which is looks like the following:

CREATE TABLE [dbo].[sbrTestNifiData](

[sbrId] [int] IDENTITY(1,1) NOT NULL primary key,

[sbrActivityType] [varchar](50) NULL,

[sbrDateTime] [varchar](50) NULL

);

So, it should inserted to my database table as a five records:

| sbrId | sbrActivityType | sbrDateTime |

| 1 | ACT+A | Sun Mar 19 11:56:40 AST 2023 |

| 2 | ACT+A | Sun Mar 19 11:56:40 AST 2023 |

| 3 | ACT+X | Sun Mar 19 11:56:40 AST 2023 |

| 4 | ACT+X | Sun Mar 19 11:56:40 AST 2023 |

| 5 | ACT+X | Sun Mar 19 11:56:40 AST 2023 |

Created on 03-19-2023 08:13 AM - edited 03-19-2023 08:14 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi ,

My guess is that you are trying to use ConvertJsonToSQL & PutSQL to transpose the json into sql table. For this to happen you cant use the input json in the given format , you need to transform it using JoltTransformJSON processor to create an array of records where each record consist of "sbrActivityType" & "sbrDateTime" columns. The json keys have to match the table column names for this to work. The jolt spec to use can look like this:

[

{

"operation": "shift",

"spec": {

"sbrActivityType": {

// convert every activity into an array element

"*": "data[#1].sbrActivityType"

},

"sbrDateTime": "sbrDateTime"

}

},

{

"operation": "modify-default-beta",

"spec": {

"data": {

"*": {

// create new sbrDateTime key under each array element from

// above and set its value to the initial sbrDateTime

"sbrDateTime": "@(3,sbrDateTime)"

}

}

}

}

]

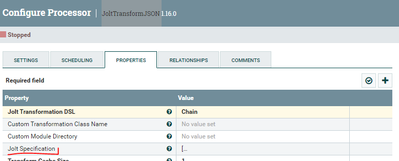

you set the Jolt Specification property with the spec above in the JoltTransformJSON

This should give you new json flowfile as follows:

{

"data" : [ {

"sbrActivityType" : "ACT+A",

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

}, {

"sbrActivityType" : "ACT+X",

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

}, {

"sbrActivityType" : "ACT+A",

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

}, {

"sbrActivityType" : "ACT+X",

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

}, {

"sbrActivityType" : "ACT+X",

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

} ],

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

}

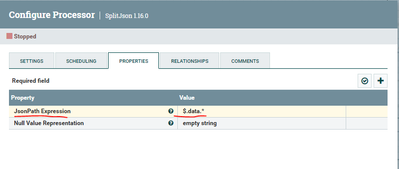

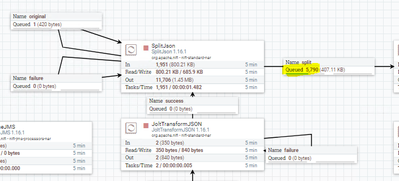

After that, use SplitJosn to split each record into its own json flowfile. The split JsonPath Expression is set to $.data.*:

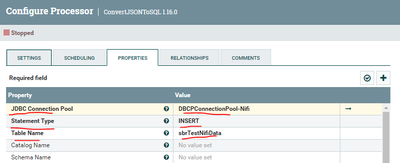

Then you use ConvertJSONToSQL as follows:

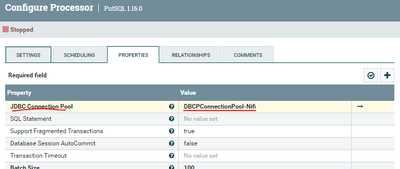

And Finally you use the PUTSQL processor as follows:

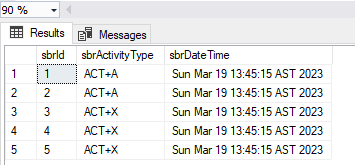

This should populate the table accordingly:

If this helps please accept solution.

Thanks

Created on 03-26-2023 06:10 AM - edited 03-26-2023 06:12 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

I noticed that you have an "Original" relationship going from SplitJson back to itself. This where your duplicates are coming from. The "Original" relationship means the original input flowfile that is passed to the splitJson from JoltTransformation processor. You need to terminate this relationship and not pass it to anything unless you are using Wait-Notify processors which I dont think in your case. You can terminate relationship by going to splitJson Configuration then go to the Relationship tab and check the terminate box under the Original relationship.

Created on 03-19-2023 08:13 AM - edited 03-19-2023 08:14 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi ,

My guess is that you are trying to use ConvertJsonToSQL & PutSQL to transpose the json into sql table. For this to happen you cant use the input json in the given format , you need to transform it using JoltTransformJSON processor to create an array of records where each record consist of "sbrActivityType" & "sbrDateTime" columns. The json keys have to match the table column names for this to work. The jolt spec to use can look like this:

[

{

"operation": "shift",

"spec": {

"sbrActivityType": {

// convert every activity into an array element

"*": "data[#1].sbrActivityType"

},

"sbrDateTime": "sbrDateTime"

}

},

{

"operation": "modify-default-beta",

"spec": {

"data": {

"*": {

// create new sbrDateTime key under each array element from

// above and set its value to the initial sbrDateTime

"sbrDateTime": "@(3,sbrDateTime)"

}

}

}

}

]

you set the Jolt Specification property with the spec above in the JoltTransformJSON

This should give you new json flowfile as follows:

{

"data" : [ {

"sbrActivityType" : "ACT+A",

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

}, {

"sbrActivityType" : "ACT+X",

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

}, {

"sbrActivityType" : "ACT+A",

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

}, {

"sbrActivityType" : "ACT+X",

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

}, {

"sbrActivityType" : "ACT+X",

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

} ],

"sbrDateTime" : "Sun Mar 19 13:45:15 AST 2023"

}

After that, use SplitJosn to split each record into its own json flowfile. The split JsonPath Expression is set to $.data.*:

Then you use ConvertJSONToSQL as follows:

And Finally you use the PUTSQL processor as follows:

This should populate the table accordingly:

If this helps please accept solution.

Thanks

Created 03-23-2023 01:26 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

I think the jolt spec is incorrect because one of parentheses are more or less at 5th line.

If you can help me on this I appreciate it.

Created 03-23-2023 04:15 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Ignore my statement, it is ok now, but I did not finish the pipeline yet, I will let you know back again once I done

Created 03-23-2023 04:38 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Once I d SplitJson processor, it give so many flow files, how to limit to the number of input flow file. For example, in my example here: we have 5 ACTs (means we have 5 records in the target database table)

Created 03-23-2023 05:58 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

What do you mean by it gave you "so many flowfiles". If you follow the same jolt spec and split processors configuration as I provided it should give you the exact amount flowfiles similar to the number of ACT in the original input. Can you provide more details?

Created 03-26-2023 12:47 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @SAMSAL , it gives the following number of flow files (forever), although the source flow file as provided in the question around 3 ACT.As and 2 ACT.Xs.

I hope my explanation was fine and ok.

Created on 03-26-2023 06:10 AM - edited 03-26-2023 06:12 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

I noticed that you have an "Original" relationship going from SplitJson back to itself. This where your duplicates are coming from. The "Original" relationship means the original input flowfile that is passed to the splitJson from JoltTransformation processor. You need to terminate this relationship and not pass it to anything unless you are using Wait-Notify processors which I dont think in your case. You can terminate relationship by going to splitJson Configuration then go to the Relationship tab and check the terminate box under the Original relationship.

Created 03-27-2023 03:13 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks @SAMSAL , everything it works fine....