DBCP connection pool issue in NIFI

Labels: Apache NiFi

Created on 03-02-2020 02:48 AM - last edited on 03-02-2020 06:09 AM by cjervis

Hi Team,

We are using a DBCP connection pool to connect to PostgreSQL over a period of time. After some time, the NiFi processor (which gets data from Postgres) goes idle and appears to be dead.

Disabling and re-enabling the DBCP connection pool fixes the hang. Kindly help us address this issue.

Created 03-02-2020 07:03 AM

@saivenkatg55

My first suggestion is that you always make sure you have configured the "validation query" property when using the DBCPConnectionPool controller service.

When you enable the DBCPConnectionPool, it does not immediately establish the configured number of connections for the pool. Upon receiving the first request from a processor for a connection, the DBCPConnectionPool creates the entire pool (with the default configuration, the pool consists of 8 connections).

Subsequent requests by a processor for a connection result in one of the previously established connections being handed to the requesting processor. If and only if the validation query is configured, the connection is first validated to make sure it is still good before being passed to the requesting processor. So we often see this issue when the validation query is not set and the connections are no longer good. Connections can go bad for many reasons (network issues, sitting idle too long, etc.).

You should keep your validation query very simple so it can execute very fast. "select 1" is often used to validate a connection.
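As a rough illustration of the advice above, a DBCPConnectionPool configured for PostgreSQL might look something like the fragment below. The host, port, and database name are placeholders, not values from this thread, and the property names are given as they appear in recent NiFi 1.x releases, so check them against your own version. Note that the validation query is its own property, separate from the connection URL.

```text
# Hypothetical DBCPConnectionPool settings (placeholders in angle brackets)
Database Connection URL     : jdbc:postgresql://<host>:<port>/<database>
Database Driver Class Name  : org.postgresql.Driver
Validation query            : select 1
Max Total Connections       : 8
```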

Also make sure you are using the latest version of NiFi. There have been numerous bug fixes around the processors and the DBCPConnectionPool between Apache NiFi 1.0 and 1.11.

Hope this helps,

Matt

Created 03-03-2020 05:02 AM

@MattWho thanks for the solution. However, even after adding the select query in the connection string, we are still facing the same hang. Do we need to change any parameter on the NiFi server?

Created 03-03-2020 01:20 PM

By "hung", do you mean the processor continuously shows active thread(s) in its upper right corner and never produces any ERROR or WARN log output? Active threads show as a small number in parentheses, such as (1).

If that is the case, you would need to get a series of thread dumps from the NiFi node where the active thread is executing. Five dumps taken with 5 minutes between each is usually pretty good. Then you will want to inspect those thread dumps for the active thread associated with your hung processor type. Look to see if the thread changes between any of the thread dumps. If the thread output in the dumps is changing, then the thread is not hung, but rather just taking a long time to execute. If the thread is identical across all thread dumps, you'll want to look at the thread to see if it identifies what the thread is waiting on.
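The comparison described above can be partly automated. The following is a minimal sketch, not an official tool: the function names `thread_block` and `compare_thread` are made up for illustration, and it assumes each thread's entry in the dump starts with a line beginning with the quoted thread name and ends at the next blank line. Adjust it if your dump output looks different.

```shell
#!/bin/sh
# Hypothetical helper: print one thread's block from a dump file.
# Assumption: the block starts with a line beginning with "<thread name>"
# (in double quotes) and ends at the next blank line.
thread_block() {
    # $1 = thread name, $2 = dump file
    awk -v name="\"$1\"" '
        index($0, name) == 1 { printing = 1 }   # header line of the thread
        printing && NF == 0  { exit }           # blank line ends the block
        printing             { print }
    ' "$2"
}

# Hypothetical helper: compare the named thread across several dump files.
# Prints "identical" if the block is the same in every dump, "changing" if
# it differs in at least one, or "not found" if the first dump lacks it.
compare_thread() {
    name=$1
    shift
    first=$(thread_block "$name" "$1")
    if [ -z "$first" ]; then
        echo "not found"
        return 1
    fi
    for dump in "$@"; do
        if [ "$(thread_block "$name" "$dump")" != "$first" ]; then
            echo "changing"
            return 0
        fi
    done
    echo "identical"
}

# Example (thread name and file names are placeholders):
# compare_thread "Timer-Driven Process Thread-5" dump-1.txt dump-2.txt dump-3.txt
```

A result of "identical" only suggests the thread may be hung; you would still need to read the stack itself to see what it is waiting on.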

Hope this helps,

Matt

Created 03-04-2020 06:25 AM

> By "hung", you mean the processor shows active thread(s) in the upper right corner continuously and never produce any ERROR or WARN log output? Active threads show as a small number in parenthesis (1).

@MattWho Exactly, that is what we are seeing. We do not know which process we need to take a thread dump of and inspect. Could you please guide us in fixing this issue?

Created 03-04-2020 09:42 AM

Analyzing thread dump output is a cumbersome and time-consuming process that I cannot do for you here. If you have a support contract with Cloudera, I recommend opening a support case with them so they can help you get to the root cause of your issue.

You can obtain a thread dump by executing the nifi.sh command as follows:

./nifi.sh dump /<path to write dump output>/nifinode<num>-dump-<num>.txt

First, you want to identify which node in your NiFi cluster has the long-running or hung thread.

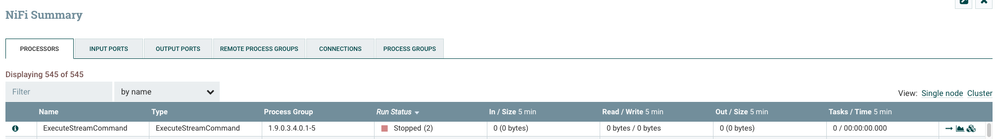

To do this, you can use the NiFi Summary UI found under the global menu.

Here is an example:

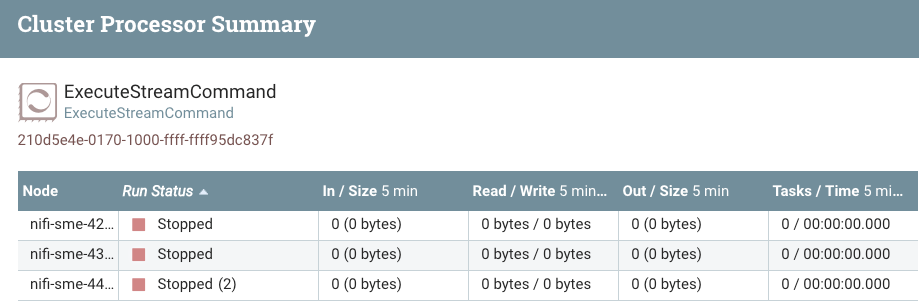

In the example above, I can see I have an ExecuteStreamCommand processor that is in a stopped state but shows (2) active threads. If I click on the three stacked boxes icon at the far right side of that row, I will get a per-node breakdown for the processor.

From that breakdown, I can see both active threads are on my cluster node 44. This is the node where I would take my 5 thread dumps for inspection.
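Taking the series of dumps on that node can be scripted. Below is a minimal sketch built around the `nifi.sh dump` command shown earlier. The function name `collect_dumps`, the output file naming, and the example paths are all assumptions for illustration. The defaults of 5 dumps spaced 300 seconds apart follow the suggestion made earlier in this thread.

```shell
#!/bin/sh
# Hypothetical helper: take a series of NiFi thread dumps.
# Usage: collect_dumps NIFI_SH OUT_DIR [COUNT] [INTERVAL_SECONDS]
collect_dumps() {
    nifi_sh=$1            # path to nifi.sh on the affected node
    out_dir=$2            # directory to write the dump files into
    count=${3:-5}         # number of dumps (default 5)
    interval=${4:-300}    # seconds between dumps (default 5 minutes)

    mkdir -p "$out_dir" || return 1
    i=1
    while [ "$i" -le "$count" ]; do
        # Same invocation as in the post: nifi.sh dump <output file>
        "$nifi_sh" dump "$out_dir/nifi-dump-$i.txt" || return 1
        # Wait between dumps, but not after the last one.
        if [ "$i" -lt "$count" ]; then
            sleep "$interval"
        fi
        i=$((i + 1))
    done
}

# Example (paths are placeholders for your installation):
# collect_dumps /opt/nifi/bin/nifi.sh /tmp/nifi-dumps
```

Run it on the node that the per-node breakdown identified, as a user that is allowed to run nifi.sh.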

Hope this helps you identify where your issue is.

Matt

Created on 05-24-2022 08:11 AM - edited 05-24-2022 08:11 AM

Hi! Were you able to solve it?

I'm facing the same problem in the same scenario.