Error on Tutorial "How to Process Data with Apache Hive" - Cannot load data into Hive tables

Labels: Apache Hive

Created 01-05-2016 02:21 PM

I have a problem loading data into a table in this tutorial:

http://hortonworks.com/hadoop-tutorial/how-to-process-data-with-apache-hive/

This command:

```sql
LOAD DATA INPATH '/user/admin/Batting.csv' OVERWRITE INTO TABLE temp_batting;
```

produces this error:

```
H110 Unable to submit statement. Error while compiling statement: FAILED: HiveAccessControlException Permission denied: user [admin] does not have [READ] privilege on [hdfs://sandbox.hortonworks.com:8020/user/admin/elecMonthly_Orc] [ERROR_STATUS]
```

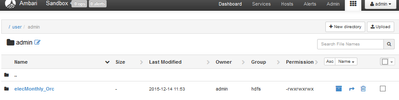

I created both the user/admin and temp/admin folders. Using the hdfs superuser, I made admin the owner of the file, the folder, and even the parent folder, and I granted full permissions in HDFS. Ambari clearly shows this, yet the error persists.

Can anyone help? Thanks

Created 02-03-2016 04:17 PM

It turned out that when I thought I was creating an elecMonthly_Orc file, I had actually created an elecMonthly_Orc folder containing three files: _SUCCESS, part-r-r-00000-1a0c14e3-0dd0-42db-abc7-7f655a02f634.orc, and another similar ORC file. The files inside the elecMonthly_Orc directory were owned by Hive, which is what caused the permissions error.

I resolved it from the command line, running as the hdfs superuser:

```shell
hadoop fs -chown admin:admin /user/admin/elecMonthly_Orc/*.*
```
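One caveat, offered as a hedged note rather than a confirmed diagnosis: in ordinary glob syntax the pattern `*.*` only matches names that contain a dot, so `_SUCCESS` would keep its old owner, and the elecMonthly_Orc directory itself is not changed either. A recursive form such as `hadoop fs -chown -R admin:admin /user/admin/elecMonthly_Orc` covers the directory and everything in it, and quoting any glob stops the local shell from trying to expand it against local files before Hadoop sees it. The sketch below uses the POSIX shell's own `case` patterns (assuming they behave like Hadoop's glob for simple names such as these) to show which of the file names quoted in this thread `*.*` would select; `classify` is a hypothetical helper.

```shell
#!/bin/sh
# Hypothetical helper: report whether a file name matches the glob '*.*'
# (any name containing at least one dot), using the shell's own pattern rules.
classify() {
  case "$1" in
    *.*) echo "matched: $1" ;;
    *)   echo "skipped: $1" ;;
  esac
}

# The two file names quoted in this thread.
for name in _SUCCESS \
    part-r-r-00000-1a0c14e3-0dd0-42db-abc7-7f655a02f634.orc; do
  classify "$name"
done
# Output:
# skipped: _SUCCESS
# matched: part-r-r-00000-1a0c14e3-0dd0-42db-abc7-7f655a02f634.orc
```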

Now I just have to figure out how to recombine the ORC files in Hive!

Created 01-05-2016 02:26 PM

Can you please take a screenshot of the output of:

```shell
hdfs dfs -ls /user/admin/elecMonthly_Orc
```

Created 01-05-2016 02:29 PM

@Aidan Condron Which user are you running the Hive statement as?

Created 02-03-2016 11:08 AM

Thanks, Andrew. I think it was a mismatch between the Hive account running the statement and the admin account owning the file. Thanks for this, and sorry for the delay in replying.

Created 02-03-2016 11:10 AM

@Aidan Condron Is it resolved?

Created 02-03-2016 11:48 AM

@Aidan Condron Please accept the best answer below.

Created 01-05-2016 02:33 PM

Are you using the latest sandbox? Try the Ambari Hive view; it has an Upload File action specifically for CSV files.

Created on 01-05-2016 02:35 PM - edited 08-19-2019 05:20 AM

Thanks for answering so quickly!

Created 01-05-2016 02:38 PM

@Aidan Condron What about the directory above it? What's the output of:

```shell
hdfs dfs -ls /user/admin
```

It looks like the files are owned by the user 'Spark'. Which user is running the Hive statement?
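Since the thread keeps coming back to which account owns which file, here is a small sketch for spotting the mismatch from a text listing rather than a screenshot. It assumes the usual `hdfs dfs -ls` layout (a `Found N items` header, then permissions, replication, owner, group, size, date, time, path) and prints the owner and path of every entry not owned by the given user. `not_owned_by` is a hypothetical helper, and the sample listing is made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: read `hdfs dfs -ls` output on stdin and print
# "owner path" for every entry whose owner (3rd column) is not $1.
not_owned_by() {
  awk -v want="$1" '
    /^Found [0-9]+ items/ { next }          # skip the listing header
    NF >= 8 && $3 != want { print $3, $NF } # owner column vs. expected user
  '
}

# On a cluster you would pipe the real listing in, e.g.:
#   hdfs dfs -ls /user/admin/elecMonthly_Orc | not_owned_by admin
# Made-up sample listing (owners and sizes are illustrative only):
printf '%s\n' \
  'Found 2 items' \
  '-rw-r--r--   1 admin hdfs   0 2016-01-05 14:00 /user/admin/elecMonthly_Orc/_SUCCESS' \
  '-rw-r--r--   1 hive  hdfs 512 2016-01-05 14:00 /user/admin/elecMonthly_Orc/part-r-r-00000-1a0c14e3-0dd0-42db-abc7-7f655a02f634.orc' \
  | not_owned_by admin
```

An empty result means every listed entry is owned by the expected user; any line printed names the owner that would need a `chown`.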

Created on 01-05-2016 02:43 PM - edited 08-19-2019 05:20 AM

admin is running the statement, as per the tutorial.

I thought I had run hdfs chown on the files; Ambari shows them as owned by admin.