Cloudera Community - Support Questions

HDFS resiliency - DR - rack aware

Labels: Apache Hadoop

Created 07-13-2017 09:06 AM

Hello,

I am in the process of improving the resilience of our Hadoop clusters.

We use a twin-datacenter architecture: the Hadoop cluster nodes are located in two buildings 10 km apart, with NameNode HA enabled.

We use a replication factor of 4 with rack awareness configured for 2 racks (one rack per site).

A replication factor of 4 is probably a bit of a luxury, but it should protect against the loss of an entire rack (i.e. a whole site) plus the loss of some nodes on the remaining site.

If we lose an entire rack, will HDFS try to re-replicate the data onto the remaining rack, so that we end up with 4 replicas on the same rack and consume extra space there? Or will it "disable" the replica that is supposed to be located on the failed rack?

Does it make sense to create 4 racks (one for each replica) to ensure that the data is replicated across both sites in a balanced way (2x2)?
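A four-rack layout like the one proposed here would be declared to HDFS through a topology script, referenced by the `net.topology.script.file.name` property in `core-site.xml`. Hadoop calls the script with one or more host names or IP addresses and reads back one rack ID per argument. The sketch below assumes a hypothetical inventory of eight DataNodes addressed by IP, split into two racks per site; every address and rack name is a placeholder, not something taken from this thread.

```python
#!/usr/bin/env python3
"""Sketch of an HDFS topology script mapping DataNodes to four racks, two per site.

All host addresses and rack names below are hypothetical placeholders.
"""
import sys

# Hypothetical inventory: each site is split into two racks.
RACK_OF_HOST = {
    "10.1.1.11": "/site-a-rack1",
    "10.1.1.12": "/site-a-rack1",
    "10.1.2.11": "/site-a-rack2",
    "10.1.2.12": "/site-a-rack2",
    "10.2.1.11": "/site-b-rack1",
    "10.2.1.12": "/site-b-rack1",
    "10.2.2.11": "/site-b-rack2",
    "10.2.2.12": "/site-b-rack2",
}

# Rack reported for any host missing from the inventory.
DEFAULT_RACK = "/default-rack"


def resolve(hosts):
    """Return one rack ID per host, in the same order as the input."""
    return [RACK_OF_HOST.get(host, DEFAULT_RACK) for host in hosts]


if __name__ == "__main__":
    # The NameNode passes host names/IPs as arguments and expects one rack ID
    # per argument, separated by whitespace.
    print(" ".join(resolve(sys.argv[1:])))
```

Labelling the racks this way only tells HDFS where each node sits; whether replicas then end up split 2x2 across the sites is decided by the block placement policy, not by the script.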

Many thanks in advance for your feedback.

Regards

Laurent

Created 07-14-2017 12:49 AM

Let's take it one by one:

- One big advantage of 4 replicas may actually be faster jobs when several big jobs are launched simultaneously, since more nodes hold a local copy of each block.

- With or without rack awareness, if replicas are lost and a block's replication falls below the configured factor, HDFS re-replicates it to restore the original replication factor.

- All replicas of a block are never placed on the same rack unless that is the only rack alive.

- For best performance and availability, my suggestion is to use a minimum of 3 racks per data center.
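The last two points can be made concrete with the per-rack cap that, as we read HDFS's default placement policy (`BlockPlacementPolicyDefault`), limits how many replicas of a block one rack may hold: roughly (replicas - 1) / racks + 2, reduced by one if it would otherwise allow every replica on a single rack, and lifted entirely when only one rack is alive. The sketch below is a simplified model of that rule (it ignores, for example, the additional cap by number of DataNodes), not the NameNode code itself.

```python
def max_replicas_per_rack(total_replicas: int, num_racks: int) -> int:
    """Simplified per-rack replica cap modelled on HDFS's default placement policy."""
    if num_racks == 1 or total_replicas <= 1:
        # With a single live rack, that rack may hold every replica.
        return total_replicas
    cap = (total_replicas - 1) // num_racks + 2
    if cap == total_replicas:
        # Never allow all replicas on one rack while several racks are alive.
        cap -= 1
    return cap


def worst_case_survivors(total_replicas: int, num_racks: int) -> int:
    """Fewest replicas of a block left after losing its most-loaded rack."""
    return total_replicas - max_replicas_per_rack(total_replicas, num_racks)


RF = 4
for racks in (2, 1):
    print(f"{racks} live rack(s): at most {max_replicas_per_rack(RF, racks)} "
          f"of {RF} replicas per rack")
print(f"Replicas left after losing the fuller of 2 racks: at least "
      f"{worst_case_survivors(RF, 2)}")
```

With two racks and a replication factor of 4 the cap is 3, so a 3+1 split is permitted and losing the fuller site can leave a single replica; once only one rack is alive the cap rises to 4 and re-replication can place every replica on that rack.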

I wrote an article on rack awareness some time ago; it may help clarify some of your doubts:

https://community.hortonworks.com/content/kbentry/43057/rack-awareness-1.html

Thanks

Created 07-17-2017 02:54 PM

You can adjust the HDFS rebalance speed to suit your needs. Refer to this documentation:

If you are worried about filling disk space during a rack maintenance operation, you can configure the balancer to be very slow, so that over a 48-hour window virtually nothing gets replicated. Alternatively, if the situation permits, you can put the NameNode into safe mode, which allows read operations but no writes.

You are correct that if the replication factor is equal to the number of racks, there is no guarantee that each rack will hold a replica. The policy only ensures that not all of the replicas are on the same rack.
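Whether a throttle is slow enough depends on the length of the window: at a sustained rate r per DataNode, a node can copy at most r × t in a window of length t. The sketch below computes that bound. The 1 MiB/s rate, the 48-hour window and the ten DataNodes are hypothetical figures, and which HDFS setting governs a given kind of copy traffic (for the balancer, `dfs.datanode.balance.bandwidthPerSec`) should be checked against the documentation for your Hadoop version.

```python
SECONDS_PER_HOUR = 3600
MIB_PER_GIB = 1024


def max_copied_gib(throttle_mib_per_s: float, hours: float, datanodes: int = 1) -> float:
    """Upper bound on data copied if each DataNode runs at the throttle for the whole window."""
    return throttle_mib_per_s * hours * SECONDS_PER_HOUR * datanodes / MIB_PER_GIB


# Hypothetical figures: 1 MiB/s per DataNode, 48-hour window, 10 receiving DataNodes.
per_node = max_copied_gib(1, 48)
whole_rack = max_copied_gib(1, 48, datanodes=10)
print(f"per DataNode: {per_node:.2f} GiB, 10 DataNodes: {whole_rack:.1f} GiB")
```

Because the bound grows linearly with the window, the throttle has to be chosen with the expected maintenance duration in mind.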

Created 07-19-2017 05:18 PM

Do you have any more questions on this? Otherwise, you can accept the answer to close the thread.

Thanks

Created on 07-20-2017 08:37 AM - edited 08-18-2019 01:21 AM

Hello @rbiswas,

Sorry, I'm a bit confused by your last statement.

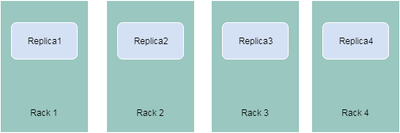

Could you please confirm that if I define a replication factor of 4, and 4 racks, I will get the following distribution of replicas ? (see diagram below)

regards

Laurent

Created 07-21-2017 04:34 PM

@Laurent lau That equal distribution of replicas is not guaranteed.

At a high level, enforcing such a constraint would compromise the speed of writes as well as reads, so it is not recommended even if you plan to do it programmatically.
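The "not guaranteed" answer can be checked against the per-rack cap that the HDFS Architecture guide gives for replicas beyond the third, roughly (replicas - 1) / racks + 2. The sketch below enumerates every per-rack replica count for a replication factor of 4 that respects this cap on a hypothetical four-rack layout (two racks per site, placeholder names), then counts the splits that are not 2x2 across sites. It models only the cap, not the rest of the placement policy (such as putting the third replica on the second replica's rack), so it shows which splits the cap fails to exclude rather than how likely each one is.

```python
from itertools import product


def max_replicas_per_rack(total_replicas, num_racks):
    """Per-rack cap, (replicas - 1) // racks + 2, never allowing all replicas on one rack."""
    if num_racks == 1 or total_replicas <= 1:
        return total_replicas
    cap = (total_replicas - 1) // num_racks + 2
    return cap - 1 if cap == total_replicas else cap


# Hypothetical layout: four racks, two per site.
SITE_OF_RACK = {"a1": "A", "a2": "A", "b1": "B", "b2": "B"}


def layouts_within_cap(replication, racks):
    """Yield every {rack: replica count} split of `replication` that respects the cap."""
    cap = max_replicas_per_rack(replication, len(racks))
    for counts in product(range(cap + 1), repeat=len(racks)):
        if sum(counts) == replication:
            yield dict(zip(racks, counts))


def replicas_per_site(layout):
    """Sum the per-rack counts of a layout into per-site totals."""
    totals = {}
    for rack, count in layout.items():
        site = SITE_OF_RACK[rack]
        totals[site] = totals.get(site, 0) + count
    return totals


layouts = list(layouts_within_cap(4, list(SITE_OF_RACK)))
unbalanced = [layout for layout in layouts if replicas_per_site(layout) != {"A": 2, "B": 2}]
single_site = [layout for layout in layouts if 4 in replicas_per_site(layout).values()]
print(f"{len(layouts)} splits within the cap, {len(unbalanced)} not 2x2, "
      f"{len(single_site)} entirely on one site")
```

In particular, two replicas on each of site A's racks stays within the cap, so with four racks a site loss can still remove every replica of such a block.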

Created 08-02-2017 02:39 PM

Hello @rbiswas,

Sorry for the delay in getting back to you (I was on holiday).

Thanks for your answers. Yes, we can close the thread.

Regards

Laurent

Created 07-17-2017 08:19 AM

Thanks @rbiswas for your answer.

My concern is the speed of re-replication: if, say, one rack is unavailable for 24 to 48 hours for maintenance, HDFS will in the meantime try to re-replicate all the data onto the remaining rack, which might saturate the disk space on that rack!

I can't find any documentation mentioning this "HDFS rebalance speed".

Also, it looks to me that if the replication factor is equal to the number of racks, there is no guarantee that each rack will hold a replica.

Can you confirm this?
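The disk-pressure concern can be put in numbers: once the other rack's DataNodes are declared dead and re-replication completes, the surviving rack holds all RF replicas of every block, so its raw usage grows from (replicas it already held) × logical size to RF × logical size. The sketch below uses hypothetical figures (100 TB of logical data, a 500 TB rack that held 2 replicas of each block beforehand) and ignores non-HDFS usage and reserved space.

```python
def rack_fill(logical_tb: float, replicas_on_rack: float, capacity_tb: float) -> float:
    """Fraction of a rack's raw capacity used by `replicas_on_rack` copies of the data."""
    return logical_tb * replicas_on_rack / capacity_tb


# Hypothetical cluster: 100 TB logical data, replication factor 4,
# surviving rack of 500 TB raw capacity holding 2 replicas per block beforehand.
LOGICAL_TB, RF, CAPACITY_TB = 100, 4, 500
before = rack_fill(LOGICAL_TB, 2, CAPACITY_TB)
after = rack_fill(LOGICAL_TB, RF, CAPACITY_TB)  # every replica now lives on this rack
print(f"fill before: {before:.0%}, after full re-replication: {after:.0%}")
```

A result above 1.0 means the surviving rack cannot absorb full re-replication at that replication factor.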

Thanks in advance.

rgds

Laurent