Cloudera Community: Support Questions

HELP PLEASE!!!, Failed to write at least 1000 rows to Kudu; Sample errors: Timed out: cannot complete before timeout:

Created on 01-24-2023 03:58 AM - edited 01-24-2023 04:27 AM

An exception is thrown when I try to write a 524 GB DataFrame to Kudu.

After running this write:

```python
# df, schema and table are defined earlier in the job;
# the master addresses are placeholders.
(
    df.write.format("org.apache.kudu.spark.kudu")
    .option("kudu.master", "master1:port,master2:port,master3:port")
    .option("kudu.table", f"impala::{schema}.{table}")
    .mode("append")
    .save()
)
```

The following exception is thrown:

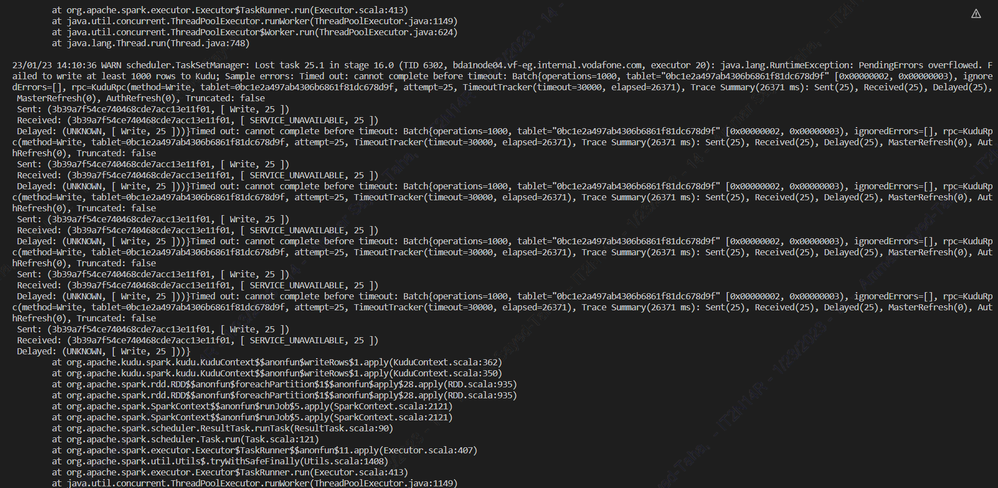

```
java.lang.RuntimeException: PendingErrors overflowed. Failed to write at least 1000 rows to Kudu; Sample errors: Timed out: cannot complete before timeout: Batch{operations=1000, tablet="0bc1e2a497ab4306b6861f81dc678d9f" [0x00000002, 0x00000003), ignoredErrors=[], rpc=KuduRpc(method=Write, tablet=0bc1e2a497ab4306b6861f81dc678d9f, attempt=26, TimeoutTracker(timeout=30000, elapsed=29585), Trace Summary(29585 ms): Sent(26), Received(26), Delayed(26), MasterRefresh(0), AuthRefresh(0), Truncated: false
```

Here is the yarn.log for the Spark job that throws the error.

Here is the error that is thrown when inserting data into Kudu.

I really appreciate any help you can provide.

Thanks in advance!

Created 02-13-2023 11:57 PM

Created 05-07-2023 01:08 PM

The DataFrame was huge, and when it was partitioned by the 'date' column, some partitions held very large amounts of data while others held very little.

Writing those very large partitions into Kudu somehow triggered this error.

Try rebalancing the partitions so they hold similar numbers of records, or split the data into smaller pieces and write them to Kudu in several passes.
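A minimal sketch of writing in several smaller, evenly spread passes, assuming `df` is a `pyspark.sql.DataFrame`. It uses `DataFrame.randomSplit` to cut the data into roughly equal chunks (independent of how skewed `date` is) and `DataFrame.repartition(n)` to spread each chunk across Spark tasks. The helper name `write_in_chunks`, the `write_chunk` callback, and the default counts are hypothetical; tune them for your data and cluster.

```python
from typing import Any, Callable


def write_in_chunks(
    df: Any,
    n_chunks: int,
    write_chunk: Callable[[Any], None],
    partitions_per_chunk: int = 200,
    seed: int = 42,
) -> int:
    """Write a large DataFrame in several smaller, evenly spread passes.

    Hypothetical helper: `df` is assumed to be a pyspark.sql.DataFrame and
    `write_chunk` performs the actual Kudu write for one chunk.
    Returns the number of chunks written.
    """
    if n_chunks < 1 or partitions_per_chunk < 1:
        raise ValueError("n_chunks and partitions_per_chunk must be >= 1")
    # Equal weights (randomSplit normalizes them): each chunk gets roughly
    # 1/n_chunks of the rows, regardless of the 'date' distribution.
    chunks = df.randomSplit([1.0] * n_chunks, seed)
    for chunk in chunks:
        # repartition(n) with no columns shuffles rows evenly across n
        # partitions, so no single task gets one huge 'date' slice.
        write_chunk(chunk.repartition(partitions_per_chunk))
    return len(chunks)
```

As `write_chunk`, pass a function that runs the `df.write.format("org.apache.kudu.spark.kudu")...save()` call from the question on the chunk it receives. Writing chunk by chunk trades total runtime for smaller, more predictable write bursts.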

Created 04-19-2023 10:14 AM

It's a Kudu version issue: the Kudu client fails to demote the leader, as described here: [KUDU-3349] Kudu java client failed to demote leader and caused a lot of deleting rows timeout - ASF...

It has been fixed since Kudu version 1.16: [java] KUDU-3349 Fix the failure to demote a leader · apache/kudu@90895ce · GitHub

Try upgrading Kudu and see whether the problem persists.
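A tiny, hypothetical helper for checking whether a Kudu version string is at least 1.16, the version this reply names as containing the fix. It assumes version strings start with `major.minor[.patch]` and ignores any vendor suffix; the function names are illustrative only.

```python
import re
from typing import Tuple

# Version containing the KUDU-3349 fix, per the reply in this thread.
FIXED_IN: Tuple[int, int, int] = (1, 16, 0)


def parse_kudu_version(version: str) -> Tuple[int, int, int]:
    """Extract (major, minor, patch) from strings like '1.11.0-cdh6.3.2'.

    Anything after the first three numeric components is ignored;
    a missing patch component is treated as 0.
    """
    match = re.match(r"\s*(\d+)\.(\d+)(?:\.(\d+))?", version)
    if match is None:
        raise ValueError(f"unrecognized Kudu version string: {version!r}")
    major, minor, patch = match.group(1), match.group(2), match.group(3) or "0"
    return int(major), int(minor), int(patch)


def has_kudu_3349_fix(version: str) -> bool:
    """True if the given Kudu version is at least FIXED_IN."""
    return parse_kudu_version(version) >= FIXED_IN


if __name__ == "__main__":
    for v in ["1.11.0", "1.15.0", "1.16.0", "1.17.0"]:
        print(v, has_kudu_3349_fix(v))
```

Because the linked commit is tagged `[java]`, the version worth checking is most likely that of the Kudu Java client used by the Spark job (the kudu-spark connector), not only the server version.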

Created 04-20-2023 03:36 AM

Hi @as30

This could be a data-related issue. Can you please share the DDL for the table?

Also, if you are writing into a partitioned table, were the partitions created beforehand?

Created on 05-07-2023 01:56 PM - edited 05-07-2023 01:57 PM

Hi @AsimShaikh

I'm facing the same issue with Kudu version 1.11.

Kindly find the DDL below:

```sql
create table Test (
  my_mid bigint,
  my_timest String,
  my_uuid String,
  my__PROC_DATE timestamp,
  my_PROC_HOUR String,
  my_MONITORING_POINT String,
  my_SUBSCRIBER_TYPE String,
  my_EVENT_TYPE String,
  my_TRAFFIC_TYPE String,
  my_LOCATION_IDENTIFIER String,
  my_DESTINATION String,
  my_FILENAME String,
  my_APN String,
  my_ROAMING_OPERATOR String,
  my_START_DATE timestamp,
  my_SERVICE_FLAG String,
  my_START_HOUR String,
  my_CGI String,
  my_COUNTRY_FLAG String,
  my_SWITCH_NAME String,
  my_FLAG_2G_3G String,
  my_MINIMUM_TIME bigint,
  my_MAXIMUM_TIME bigint,
  my_CDR_COUNT bigint,
  my_EVENT_COUNT bigint,
  my_EVENT_DURATION bigint,
  my_DATA_VOLUME double,
  my_UPLINK_VOLUME double,
  my_DOWNLINK_VOLUME double,
  my_NODE_NAME String,
  my_A_NUMBER String,
  my_B_NUMBER String,
  my_IMSI String,
  my_IMEI String,
  my_ACTUAL_VOLUME double,
  my_SESSION_ID String,
  my_IPC_SERVICE_FLAG String,
  my_RATING_GROUP String,
  my_CHARGING_CHARACT String,
  my_CAUSE_FOR_CLOSING String,
  PRIMARY KEY (my_mid, my_timest, my_uuid)
)
PARTITION BY HASH(my_timest) PARTITIONS 4
STORED AS KUDU
TBLPROPERTIES ('kudu.master_addresses' = "XXXXXX");
```

Created 05-07-2023 02:27 PM

As the OP's response explains, if the data isn't well distributed across the partitioning column, you will end up with some very large partitions while others are very small.

Writing into a single large partition can cause the write to Kudu to fail.

If your partitioning column is skewed, consider redesigning your table's partitioning.

Final note: as per Kudu's documentation (Apache Kudu - Apache Kudu Schema Design):

> Typically the primary key columns are used as the columns to hash, but as with range partitioning, any subset of the primary key columns can be used.
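To illustrate why hashing on a single, possibly low-cardinality column can produce the uneven tablets described in this thread, here is a small pure-Python simulation. It is only a sketch: `bucket_of` and `bucket_skew` are hypothetical helpers, BLAKE2b stands in for Kudu's own hash function (real bucket assignments will differ), and the sample rows are synthetic, assuming for illustration that `my_timest` from the DDL in this thread takes very few distinct values.

```python
import hashlib
from collections import Counter
from typing import Iterable, Tuple


def bucket_of(key: Tuple, n_buckets: int) -> int:
    """Map a key tuple to one of n_buckets.

    Stand-in hash (BLAKE2b of the tuple's repr); Kudu uses its own hash
    function, so real bucket assignments will differ.
    """
    digest = hashlib.blake2b(repr(key).encode("utf-8"), digest_size=8).digest()
    return int.from_bytes(digest, "big") % n_buckets


def bucket_skew(keys: Iterable[Tuple], n_buckets: int) -> float:
    """Largest bucket size divided by the mean bucket size.

    1.0 means rows are spread perfectly evenly; n_buckets means every row
    landed in a single bucket (one hot tablet).
    """
    if n_buckets < 1:
        raise ValueError("n_buckets must be >= 1")
    counts = Counter(bucket_of(key, n_buckets) for key in keys)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no keys given")
    return max(counts.values()) / (total / n_buckets)


if __name__ == "__main__":
    # Synthetic rows shaped like the primary key (my_mid, my_timest, my_uuid),
    # with my_timest fixed to one value: a deliberately extreme skew.
    rows = [(mid, "2023-05-07 00:00:00", f"uuid-{mid}") for mid in range(1000)]
    # Like HASH(my_timest) PARTITIONS 4: every row lands in the same bucket.
    print("hash(my_timest):", bucket_skew(((r[1],) for r in rows), 4))  # -> 4.0
    # Hashing all three primary key columns spreads the distinct keys out.
    print("hash(my_mid, my_timest, my_uuid):", bucket_skew(rows, 4))
```

On real data, a `df.groupBy(...).count()` over the candidate hash columns gives the actual value distribution to feed into a check like this; if the current column is heavily skewed, hashing on more (or more selective) primary key columns, as the documentation quote allows, is one option to evaluate.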