Support Questions


Hive server service is not ready. Service endpoint may not be reachable!

Created on 02-23-2022 08:47 PM, last edited on 02-23-2022 10:42 PM by VidyaSargur


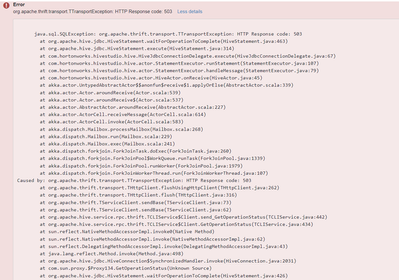

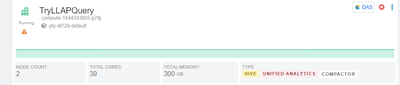

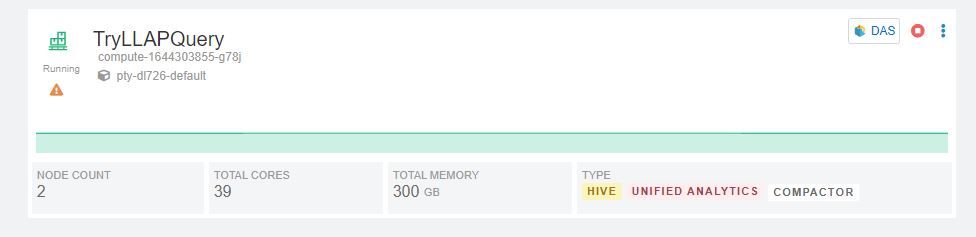

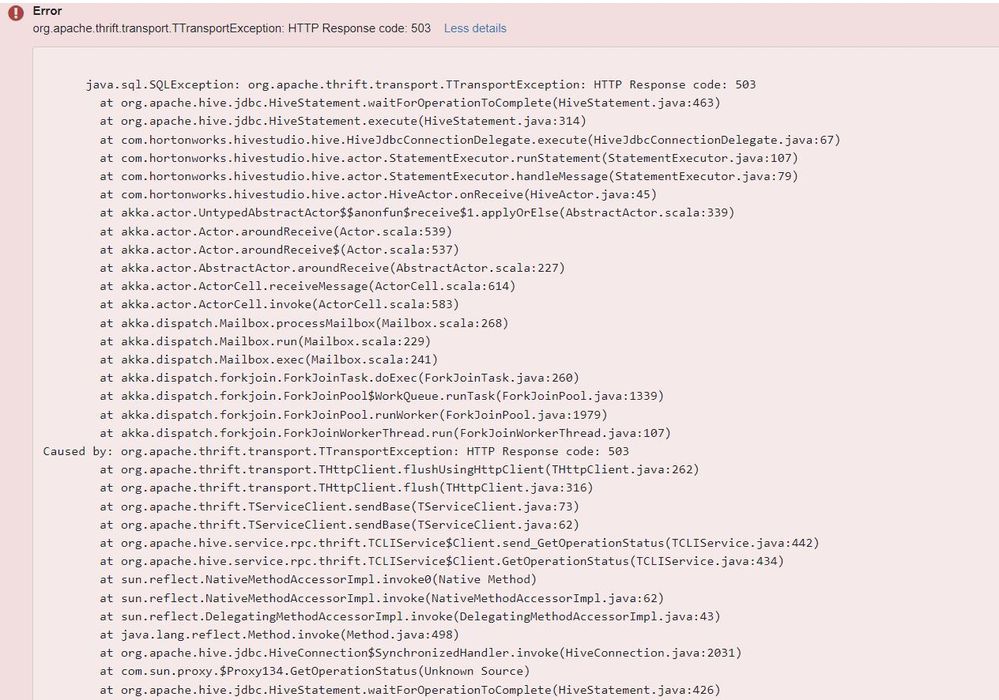

I have a default Database Catalog in Cloudera Data Warehouse (CDW) for an environment, and I have created a Virtual Warehouse that uses that Database Catalog. I am trying to use a newly created UDF with this Virtual Warehouse, but whenever I execute the UDF I get the error shown in the screenshots above.

When I look at the Virtual Warehouse, an alert icon appears; hovering over it shows the message "Hive server service is not ready. Service endpoint may not be reachable!"

I collected the diagnostic bundle and searched it for the issue. In the HiveServer log I found that the JVM had crashed and written a core dump:

    # A fatal error has been detected by the Java Runtime Environment:
    #
    # SIGSEGV (0xb) at pc=0x0000000000093c9e, pid=1, tid=0x00007f7bf5bc2700
    #
    # JRE version: OpenJDK Runtime Environment (8.0_312-b07) (build 1.8.0_312-b07)
    # Java VM: OpenJDK 64-Bit Server VM (25.312-b07 mixed mode linux-amd64 compressed oops)
    # Problematic frame:
    # C 0x0000000000093c9e
    #
    # Core dump written. Default location: /usr/lib/core or core.1
    #
    # An error report file with more information is saved as:
    # /tmp/hs_err_pid1.log
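A minimal sketch for pulling the summary fields out of a crash header like the one above, assuming the `#`-prefixed layout HotSpot uses at the top of `hs_err_pid<N>.log` files. The frame-type letters follow the key in Oracle's HotSpot troubleshooting guide; `parse_hs_err_header` and `CrashHeader` are hypothetical names used only for illustration. For this crash it reports a `SIGSEGV` in a native (`C`) frame identified only by a raw address with no shared-library name, so the rest of the report (native stack, loaded libraries) would likely be needed to say more.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Frame-type codes as described in the HotSpot troubleshooting guide.
FRAME_TYPES = {
    "j": "interpreted Java frame",
    "J": "compiled Java frame",
    "C": "native C frame",
    "V": "VM frame",
    "v": "VM-generated stub frame",
}

# "# SIGSEGV (0xb) at pc=0x..., pid=1, tid=0x..."
SIGNAL_RE = re.compile(
    r"^#\s+(SIG\w+)\s+\((0x[0-9a-fA-F]+)\)\s+at pc=(0x[0-9a-fA-F]+),"
    r"\s+pid=(\d+),\s+tid=(0x[0-9a-fA-F]+)",
    re.M,
)
# "# JRE version: ..."
JRE_RE = re.compile(r"^#\s+JRE version:\s*(.+?)\s*$", re.M)
# "# Problematic frame:" followed on the next line by "# <type> <location>"
FRAME_RE = re.compile(r"^#\s*Problematic frame:\s*\n#\s*(\S)\s+(.+?)\s*$", re.M)


@dataclass
class CrashHeader:
    signal: str
    signal_code: str
    pc: str
    pid: int
    tid: str
    jre_version: Optional[str]
    frame_type: Optional[str]
    frame: Optional[str]


def parse_hs_err_header(text: str) -> Optional[CrashHeader]:
    """Extract summary fields from the '#'-prefixed header of a JVM crash report."""
    sig = SIGNAL_RE.search(text)
    if sig is None:
        return None  # no signal-style crash header found
    jre = JRE_RE.search(text)
    frame = FRAME_RE.search(text)
    code = frame.group(1) if frame else None
    return CrashHeader(
        signal=sig.group(1),
        signal_code=sig.group(2),
        pc=sig.group(3),
        pid=int(sig.group(4)),
        tid=sig.group(5),
        jre_version=jre.group(1) if jre else None,
        frame_type=FRAME_TYPES.get(code, "unknown") if code else None,
        frame=frame.group(2) if frame else None,
    )


SAMPLE = """\
# A fatal error has been detected by the Java Runtime Environment:
#
# SIGSEGV (0xb) at pc=0x0000000000093c9e, pid=1, tid=0x00007f7bf5bc2700
#
# JRE version: OpenJDK Runtime Environment (8.0_312-b07) (build 1.8.0_312-b07)
# Java VM: OpenJDK 64-Bit Server VM (25.312-b07 mixed mode linux-amd64 compressed oops)
# Problematic frame:
# C 0x0000000000093c9e
#
"""

if __name__ == "__main__":
    print(parse_hs_err_header(SAMPLE))
```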

So my question is: what could be causing this crash, or could there be a different reason HiveServer is not starting? And is there a way to retrieve the error report saved at /tmp/hs_err_pid1.log so I can analyze it?

Created 02-25-2022 03:43 AM


@sandipkumar, the cause should be visible in hs_err_pid1.log.

Created 02-27-2022 08:55 PM


Do you know how I can access hs_err_pid1.log? I looked for it in /tmp on the compute nodes and on some service nodes but did not find it. If it is inside a container, how do I find out which container to look in?
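One possible way to look for the file, sketched under several assumptions: that you have `kubectl` access to the Kubernetes cluster backing the CDW environment, and that HiveServer2 runs in a pod you can identify with `kubectl get pods`. The namespace, pod, and container names below (`compute-example`, `hiveserver2-0`, `hiveserver2`) are hypothetical placeholders, not names CDW is known to use. The `pid=1` in the crash header suggests the JVM was the container's main process, so the crash would typically have restarted the container; a file written to `/tmp` survives that only if `/tmp` is backed by a pod volume, while `kubectl logs --previous` can still show the output of the crashed instance. The script only builds and prints the commands (a dry run) rather than executing them.

```python
import shlex
from typing import List

# Hypothetical placeholders: substitute the names shown by `kubectl get pods`.
NAMESPACE = "compute-example"
POD = "hiveserver2-0"
CONTAINER = "hiveserver2"
REMOTE_PATH = "/tmp/hs_err_pid1.log"


def list_pods_cmd(namespace: str) -> List[str]:
    """List pods in the namespace to locate the HiveServer2 pod."""
    return ["kubectl", "get", "pods", "-n", namespace]


def find_reports_cmd(namespace: str, pod: str, container: str) -> List[str]:
    """List any JVM crash reports in /tmp of the currently running container."""
    return ["kubectl", "exec", "-n", namespace, pod, "-c", container,
            "--", "sh", "-c", "ls -l /tmp/hs_err_pid*.log"]


def copy_report_cmd(namespace: str, pod: str, container: str,
                    remote_path: str, local_path: str) -> List[str]:
    """Copy a file out of the container (kubectl cp needs `tar` in the image)."""
    return ["kubectl", "cp", "-c", container,
            f"{namespace}/{pod}:{remote_path}", local_path]


def previous_logs_cmd(namespace: str, pod: str, container: str) -> List[str]:
    """Fetch stdout/stderr of the previous (crashed) container instance."""
    return ["kubectl", "logs", "-n", namespace, pod, "-c", container, "--previous"]


def plan(namespace: str, pod: str, container: str, remote_path: str) -> List[List[str]]:
    """Return the commands in the order you would run them."""
    local_path = "./" + remote_path.rsplit("/", 1)[-1]
    return [
        list_pods_cmd(namespace),
        find_reports_cmd(namespace, pod, container),
        copy_report_cmd(namespace, pod, container, remote_path, local_path),
        previous_logs_cmd(namespace, pod, container),
    ]


if __name__ == "__main__":
    # Dry run: print each command, shell-quoted, instead of executing it.
    for cmd in plan(NAMESPACE, POD, CONTAINER, REMOTE_PATH):
        print(shlex.join(cmd))
```

Building argument lists rather than shell strings keeps the commands easy to inspect and to pass to `subprocess.run` later if you decide to execute them.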

Created 03-14-2022 01:56 AM


@sandipkumar, the crash output states the location: /tmp/hs_err_pid1.log.

Created 03-23-2022 11:37 PM


@sandipkumar, Has the reply helped resolve your issue? If so, please mark the appropriate reply as the solution, as it will make it easier for others to find the answer in the future.

Regards,

Vidya Sargur, Community Manager
