How to access HDFS and Hive database.

Labels: Apache Hadoop, Apache Hive

Created on 01-22-2018 10:15 AM - edited 08-17-2019 10:16 PM

Hello community,

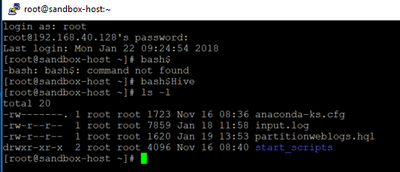

I have logged into the Sandbox for the first time using Putty.exe.

I'm currently at the location shown in the image above.

Can someone please show me how to access the HDFS drive and the Hive database?

Created 01-22-2018 10:24 AM

Once you can log in to the Sandbox over SSH on port 2222, you should be able to run the commands.

# ssh root@localhost -p 2222

Enter password: hadoop

Now you can run hdfs commands inside the Sandbox terminal, for example:

# su - hdfs

# hdfs dfs -ls /
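As a follow-on to the listing command above, here is a minimal sketch of copying a local file into HDFS from the same terminal. The paths `/root/music/movies.csv` and `/tmp/music` are only example values, and `run` is a hypothetical helper (not part of Hadoop) that prints each command and executes it only when the program is on the PATH, so the script can be dry-run on a machine without Hadoop.

```shell
#!/usr/bin/env bash
# Sketch: copy a local file into HDFS.
# LOCAL_FILE and HDFS_DIR are hypothetical example values; override them as needed.
LOCAL_FILE="${LOCAL_FILE:-/root/music/movies.csv}"
HDFS_DIR="${HDFS_DIR:-/tmp/music}"

# Hypothetical helper: print the command, then run it only if its
# first word is an installed program (allows a dry run without Hadoop).
run() {
  echo "+ $*"
  if command -v "$1" >/dev/null 2>&1; then
    "$@"
  fi
}

# Run as a user who can read LOCAL_FILE and write to HDFS_DIR.
run hdfs dfs -mkdir -p "$HDFS_DIR"              # create the target directory in HDFS
run hdfs dfs -put -f "$LOCAL_FILE" "$HDFS_DIR/" # upload, overwriting an existing copy
run hdfs dfs -ls "$HDFS_DIR"                    # confirm the file is now in HDFS
```

Set `LOCAL_FILE` and `HDFS_DIR` in the environment to try other paths; the defaults are placeholders, not Sandbox conventions.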

If you would rather browse the HDFS filesystem with the Ambari File View, log in to the Ambari UI with the credentials admin / admin:

http://localhost:8080

Then open the "File View" from the drop-down menu in the top right corner.

Similarly, to access Hive:

# su - hive

# hive --hiveconf hive.execution.engine=tez

Or use the "Hive View" from the Ambari UI.

Created 01-22-2018 10:28 AM

How to access Hive View: https://hortonworks.com/tutorial/hadoop-tutorial-getting-started-with-hdp/section/3/

How to Access File View: https://hortonworks.com/tutorial/hadoop-tutorial-getting-started-with-hdp/section/2/

Sandbox Learning Ropes: https://hortonworks.com/tutorial/hadoop-tutorial-getting-started-with-hdp/

Created 02-01-2018 10:11 PM

Hi Jay, can you please let me know why I'm suddenly not able to access the Sandbox on port 2222? I was able before, but now I can't.

Created 01-22-2018 11:34 AM

Hi Jay,

This is a great answer - I had no idea about port 2222.

I'm going to try to explain what I'm attempting to do.

I have created the following .hql code to create a table. When I execute the code, Ambari successfully creates the table.

CREATE EXTERNAL TABLE mysample

(

code STRING,

description STRING,

total_emp INT,

salary INT

)

ROW FORMAT DELIMITED

FIELDS TERMINATED BY ','

STORED AS TEXTFILE

LOCATION '/root/music'

TBLPROPERTIES ("skip.header.line.count" = "1");

However, whenever I run the following code from Zeppelin, I'm unable to see any data:

%jdbc(hive) select * from newmoves.nexflix2 limit 14

I have uploaded a dataset called 'movies.csv' into the /root/music folder on the Sandbox. However, it was mentioned to me that the problem is that I pointed the table at LOCATION '/root/music'. Apparently, the directory needs to be in HDFS, not on the local filesystem. Can someone let me know what I need to do to get the 'movies.csv' file into HDFS?

As you will probably have guessed, I'm very new to the Sandbox, so I hope I have explained myself well enough for someone to help me.

Cheers
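On the LOCATION point raised in this post, the following is a rough sketch of recreating the table so that it points at an HDFS directory rather than a local one. It assumes the CSV has already been copied into HDFS; `/tmp/music` and the file name `mysample.hql` are hypothetical values chosen for illustration, and the table definition is the one from the post with only the LOCATION changed.

```shell
#!/usr/bin/env bash
# Sketch: recreate the external table with a LOCATION that is an HDFS directory.
# HDFS_DIR and HQL_FILE are hypothetical; the CSV is assumed to be in HDFS_DIR already.
HDFS_DIR="${HDFS_DIR:-/tmp/music}"
HQL_FILE="${HQL_FILE:-mysample.hql}"

# Write the DDL from the post, changing only the LOCATION.
# Dropping an EXTERNAL table removes its metadata, not the files it points at.
cat > "$HQL_FILE" <<EOF
DROP TABLE IF EXISTS mysample;
CREATE EXTERNAL TABLE mysample
(
  code STRING,
  description STRING,
  total_emp INT,
  salary INT
)
ROW FORMAT DELIMITED
FIELDS TERMINATED BY ','
STORED AS TEXTFILE
LOCATION '${HDFS_DIR}'
TBLPROPERTIES ("skip.header.line.count" = "1");
EOF

# Execute the file only where the Hive CLI is installed.
if command -v hive >/dev/null 2>&1; then
  hive -f "$HQL_FILE"
else
  echo "hive not found; wrote $HQL_FILE without running it"
fi
```

The column list would still need to match the columns of the uploaded CSV for the query results to be meaningful.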

Created 01-22-2018 12:52 PM

Hello, I made an error.

I meant to say that when I try to run the following, I don't see any data:

%jdbc(hive) select * from mysample limit 14

Created 01-22-2018 02:01 PM

OK, so I'm getting there. I have uploaded a file, movies3.csv, into the Sandbox from the GUI and called the resulting table movies4.

I have also uploaded the same file from PuTTY.

When I run this command, I get data:

%jdbc(hive) select * from movies4 limit 14

But when I run the following command, I don't get any data:

%jdbc(hive) select * from mymovies limit 14

To generate the mymovies table, I used the following .hql:

CREATE EXTERNAL TABLE mymovies

(

movieId INT,

title STRING,

genre STRING

)

ROW FORMAT DELIMITED

FIELDS TERMINATED BY ','

STORED AS TEXTFILE

LOCATION '/home/hive'

TBLPROPERTIES ("skip.header.line.count" = "1");

Can someone please let me know why I don't get any data with the .hql?

Created 02-01-2018 10:10 PM

Hi Jay,

Can you please help me figure out why the Sandbox suddenly won't let me log in on port 2222? I was able to before, as you suggested above, but now I can't.