Cloudera Community: Support Questions

- Subscribe to RSS Feed

- Mark Question as New

- Mark Question as Read

- Float this Question for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Subscribe to RSS Feed

- Mark Question as New

- Mark Question as Read

- Float this Question for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

How do I make YARN deploy resources to newly added nodes?

- Labels: Apache Spark, Apache YARN

Created 07-24-2023 08:58 PM

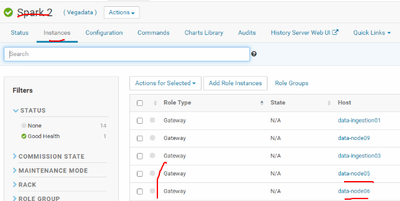

I added some new nodes to my cluster, and they work fine. Then I added Spark Gateway roles to all of the new nodes. We use YARN to manage and distribute Spark work.

Is adding Spark Gateway roles to the new nodes enough to make YARN think, "Hey, there are some new nodes here; let's distribute some containers and work to them"? Or do I have to add YARN Gateway roles to these new nodes too?

How can I make sure that YARN uses these new nodes when executing jobs, so that the load on the rest of my cluster is reduced?

Created 10-19-2023 04:36 PM

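To take part in the processing, the node must have a NodeManager role (the NodeManager is the YARN service that actually runs containers on a host), as well as the Spark Gateway and YARN Gateway roles.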
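
A minimal sketch for checking this, assuming the ResourceManager web address is a hypothetical `http://rm-host:8088`: the ResourceManager REST API endpoint `/ws/v1/cluster/nodes` lists the NodeManagers registered with YARN, so a new host that is missing from that list, or not in the `RUNNING` state, will not receive containers regardless of its Gateway roles. The RM address and host names are placeholders.

```python
import json
import urllib.request

# Hypothetical ResourceManager web address; replace with your own.
RM_URL = "http://rm-host:8088"


def fetch_nodes(rm_url: str = RM_URL) -> dict:
    """Fetch the NodeManager list from the ResourceManager REST API."""
    with urllib.request.urlopen(f"{rm_url}/ws/v1/cluster/nodes", timeout=10) as resp:
        return json.load(resp)


def missing_nodemanagers(nodes_json: dict, new_hosts: list[str]) -> list[str]:
    """Return the new hosts that are not registered as RUNNING NodeManagers."""
    # The API wraps the list as {"nodes": {"node": [...]}}; guard against an
    # empty or null "nodes" value when no NodeManager is registered.
    node_list = (nodes_json.get("nodes") or {}).get("node", [])
    running = {n["nodeHostName"] for n in node_list if n.get("state") == "RUNNING"}
    return [host for host in new_hosts if host not in running]
```

For example, `missing_nodemanagers(fetch_nodes(), ["new-node-1.example.com"])` returns the hosts that still need a working NodeManager role; an empty list means every new host is registered with YARN.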

Created 10-05-2023 02:09 AM

When we submit a Spark application through YARN, the application runs on the resources that YARN manages. In your case, you need to add YARN Gateway roles to the new nodes to process with more resources. We can't process the data by only adding new nodes and expecting YARN to distribute the processing across all of them.
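
One way to see whether YARN is actually spreading work across the cluster is to look at per-node container counts while a Spark job runs. A minimal sketch, assuming the node JSON has already been fetched from the ResourceManager's `/ws/v1/cluster/nodes` REST endpoint (fetching is not shown): it summarizes how many containers each `RUNNING` NodeManager hosts and how much memory and how many vcores it still has available, using the `numContainers`, `availMemoryMB`, and `availableVirtualCores` fields of that API's node objects. `NodeLoad`, `node_loads`, and `idle_nodes` are hypothetical helper names.

```python
from typing import NamedTuple


class NodeLoad(NamedTuple):
    host: str
    containers: int
    avail_memory_mb: int
    avail_vcores: int


def node_loads(nodes_json: dict) -> list[NodeLoad]:
    """Summarize container placement across RUNNING NodeManagers.

    nodes_json is the parsed response of GET /ws/v1/cluster/nodes.
    """
    node_list = (nodes_json.get("nodes") or {}).get("node", [])
    loads = [
        NodeLoad(
            host=n["nodeHostName"],
            containers=n.get("numContainers", 0),
            avail_memory_mb=n.get("availMemoryMB", 0),
            avail_vcores=n.get("availableVirtualCores", 0),
        )
        for n in node_list
        if n.get("state") == "RUNNING"
    ]
    # Busiest nodes first, so idle nodes end up at the bottom of the list.
    return sorted(loads, key=lambda load: load.containers, reverse=True)


def idle_nodes(loads: list[NodeLoad]) -> list[str]:
    """Hosts that are registered and RUNNING but currently run no containers."""
    return [load.host for load in loads if load.containers == 0]
```

A new host that stays at zero containers while jobs are running is worth a closer look, although an idle node can also simply mean the scheduler found enough room on other nodes.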