Support Questions

- Cloudera Community

Kerberos and LDAP integration

Labels: Apache Ambari

Created on 12-06-2018 10:18 AM - edited 08-17-2019 03:57 PM

I am creating a kerberised HDP cluster on AWS. For managing users and groups I am using openLDAP (on a RHEL 7 machine), and I want to configure it to work with Kerberos.

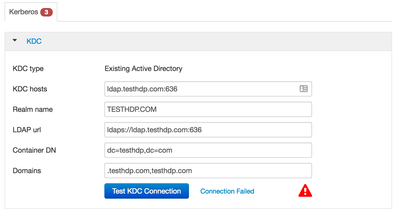

I am following the official tutorial for setting up Kerberos with an existing AD, but the connection test during setup keeps failing (see screenshot).

I have LDAPS set up and working fine: I am able to sync users with the ambari-server sync-ldap command over LDAPS, and I can log in to Ambari Server with the users created in openLDAP. Telnet to ldap.testhdp.com:636 (my LDAP server) from my edge node (where ambari-server is installed) also works fine.
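One way to separate a network problem from a configuration problem is to probe, from the Ambari host, both the LDAPS port (636) and the Kerberos KDC port (88) on the directory server. The helper below, `port_open`, is hypothetical; it assumes bash (for its `/dev/tcp` pseudo-device) and coreutils `timeout` are available, and it only tests TCP, while a KDC normally also answers on UDP 88.

```bash
# Hypothetical helper: return 0 if a TCP connection to HOST:PORT succeeds
# within SECONDS (default 3), non-zero otherwise.
# Usage: port_open <host> <port> [seconds]
port_open() {
  local host=$1 port=$2 secs=${3:-3}
  # The inner bash opens fd 3 on /dev/tcp/HOST/PORT; a failed connect makes
  # that exec fail, and timeout kills the attempt if it hangs.
  timeout "$secs" bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$host" "$port" 2>/dev/null
}
```

For example, `port_open ldap.testhdp.com 88 && echo reachable` would show whether anything is listening where the KDC is expected, independently of whether LDAPS on 636 works.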

It is only while setting up kerberos that the connection fails.

Just for testing, I installed krb5-server on the edge node and tried installing Kerberos with an existing MIT KDC, which works fine. I hope to make it work with openLDAP (via the existing AD option).

Created 12-06-2018 02:01 PM

The KDC is usually on port 88. Port 636 is the LDAPS port. So you need to change the KDC Hosts line to read "ldap.testhdp.com" or "ldap.testhdp.com:88", or if the KDC is not listening on port 88, change 88 to the correct port.

When using an Active Directory, the KDC interface in the Active Directory is on port 88 (by default). I assume this can be changed, but I haven't seen anyone do it... so your best bet is probably to change the KDC Hosts value to "ldap.testhdp.com".
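The rule of thumb above can be expressed as a small check. This is a hypothetical helper, not part of Ambari: it treats an entry without a port as using the default Kerberos port 88 and refuses the standard LDAP ports (389 and 636). It assumes a plain `host` or `host:port` value and does not handle IPv6 literals.

```bash
# Hypothetical helper: normalize a "KDC Hosts" entry to host:port.
# - no port given        -> default Kerberos port 88
# - port 389 or 636      -> rejected (LDAP/LDAPS, not a KDC port)
kdc_host_entry() {
  local entry=$1 host port
  if [[ $entry == *:* ]]; then
    host=${entry%:*}
    port=${entry##*:}
  else
    host=$entry
    port=88
  fi
  if ! [[ $port =~ ^[0-9]+$ ]]; then
    echo "error: invalid port: $port" >&2
    return 1
  fi
  case $port in
    389|636)
      echo "error: port $port is an LDAP port, not a Kerberos KDC port" >&2
      return 1 ;;
  esac
  printf '%s:%s\n' "$host" "$port"
}
```

With this check, "ldap.testhdp.com" becomes "ldap.testhdp.com:88", while "ldap.testhdp.com:636" is rejected.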

Created on 12-07-2018 01:14 PM - edited 08-17-2019 03:57 PM

@Robert Levas thank you for the quick response.

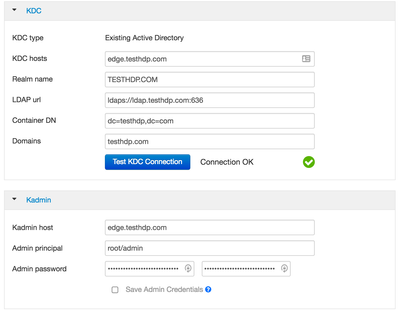

I tried your suggestion and got past the initial step (see pic1).

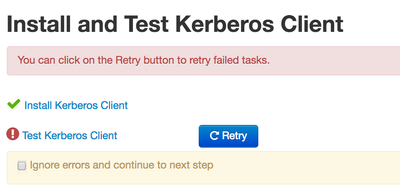

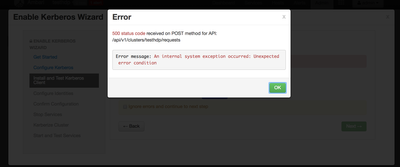

However, on proceeding further, I get an error while testing the Kerberos client (see pic2 and pic3).

On checking the ambari-server logs, I notice this error:

Caused by: org.apache.ambari.server.AmbariException: Unexpected error condition at org.apache.ambari.server.controller.KerberosHelperImpl.validateKDCCredentials(KerberosHelperImpl.java:1935) at org.apache.ambari.server.controller.KerberosHelperImpl.handleTestIdentity(KerberosHelperImpl.java:2230) at org.apache.ambari.server.controller.KerberosHelperImpl.createTestIdentity(KerberosHelperImpl.java:1029) at org.apache.ambari.server.controller.AmbariManagementControllerImpl.createAction(AmbariManagementControllerImpl.java:4216) at org.apache.ambari.server.controller.internal.RequestResourceProvider$1.invoke(RequestResourceProvider.java:263) at org.apache.ambari.server.controller.internal.RequestResourceProvider$1.invoke(RequestResourceProvider.java:192) at org.apache.ambari.server.controller.internal.AbstractResourceProvider.invokeWithRetry(AbstractResourceProvider.java:455) at org.apache.ambari.server.controller.internal.AbstractResourceProvider.createResources(AbstractResourceProvider.java:278)

Caused by: javax.naming.InvalidNameException: [LDAP: error code 34 - invalid DN] at com.sun.jndi.ldap.LdapCtx.processReturnCode(LdapCtx.java:3077) at com.sun.jndi.ldap.LdapCtx.processReturnCode(LdapCtx.java:2883) at com.sun.jndi.ldap.LdapCtx.connect(LdapCtx.java:2797) at com.sun.jndi.ldap.LdapCtx.<init>(LdapCtx.java:319) at com.sun.jndi.ldap.LdapCtxFactory.getUsingURL(LdapCtxFactory.java:192) at com.sun.jndi.ldap.LdapCtxFactory.getUsingURLs(LdapCtxFactory.java:210) at com.sun.jndi.ldap.LdapCtxFactory.getLdapCtxInstance(LdapCtxFactory.java:153) at com.sun.jndi.ldap.LdapCtxFactory.getInitialContext(LdapCtxFactory.java:83) at javax.naming.spi.NamingManager.getInitialContext(NamingManager.java:684) at javax.naming.InitialContext.getDefaultInitCtx(InitialContext.java:313) at javax.naming.InitialContext.init(InitialContext.java:244) at javax.naming.ldap.InitialLdapContext.<init>(InitialLdapContext.java:154) at org.apache.ambari.server.serveraction.kerberos.ADKerberosOperationHandler.createInitialLdapContext(ADKerberosOperationHandler.java:514) at org.apache.ambari.server.serveraction.kerberos.ADKerberosOperationHandler.createLdapContext(ADKerberosOperationHandler.java:465) ... 102 more

I am not sure what is causing this error and couldn't find any support online either.

My base domain for LDAP is dc=testhdp,dc=com, which works fine when authenticating with (open)LDAP alone (see pic4).

But here I get the error "Caused by: javax.naming.InvalidNameException: [LDAP: error code 34 - invalid DN]".

Can you please help me figure out what I am doing wrong in setting up LDAP to work with Kerberos? I have attached the relevant ambari-server logs: logs.txt

Thanks

Created 12-07-2018 02:27 PM

The container, 'dc=testhdp,dc=com', seems to be rather high up in the LDAP tree. Maybe your admin user credential does not have privileges to write there. Usually a container is created for the Hadoop principals, like 'ou=hadoop,dc=testhdp,dc=com', and a user in the AD is delegated administrative access to manage user accounts in that container (sometimes referred to as an "OU"; I prefer "container" though).

Try to create a container and ensure the AD account that Ambari is using has privileges to create users in that container.
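For illustration, here is a small sketch that derives the base DN from the DNS domain and prints an LDIF entry for a container such as the ou=hadoop example above. Both function names are hypothetical. Loading the entry into the directory and delegating write access to the account Ambari uses are not shown; how that is done depends on the directory (OpenLDAP ACLs vs. AD delegation).

```bash
# Hypothetical helper: convert a DNS domain (testhdp.com) to a base DN
# (dc=testhdp,dc=com).
domain_to_dn() {
  local domain=$1 dn="" part
  local IFS=.
  for part in $domain; do
    dn+="${dn:+,}dc=${part}"   # prepend a comma for every component after the first
  done
  printf '%s\n' "$dn"
}

# Hypothetical helper: print an LDIF entry for an organizational unit
# (container) named OU directly under the domain's base DN.
container_ldif() {
  local ou=$1 domain=$2 base
  base=$(domain_to_dn "$domain")
  printf 'dn: ou=%s,%s\nobjectClass: organizationalUnit\nou: %s\n' "$ou" "$base" "$ou"
}
```

On OpenLDAP, the output of `container_ldif hadoop testhdp.com > hadoop-ou.ldif` could then be loaded with something like `ldapadd -x -D <bind DN> -W -f hadoop-ou.ldif`.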

Created 12-07-2018 07:05 PM

@Robert Levas thank you so very much. Your answer makes a lot of sense, and I think this is exactly what is causing the problem. I'll create a new OU, 'ou=hadoop,dc=testhdp,dc=com', in openLDAP, right? My admin user in Ambari is admin and for Kerberos it is root/admin; how do I give it access to create users there? Can you please help me with that? Sorry to bug you again.

Created 12-07-2018 08:29 PM

OpenLDAP? I thought this was Active Directory. If you are not using an Active Directory, you will need to choose a different KDC type when enabling Kerberos.

Ambari does not generally create users in an LDAP directory. However, when enabling Kerberos, it needs to create accounts in the KDC to store the principal names and passwords. This is done differently depending on the type of KDC you are using. For Active Directory, Ambari connects to its LDAP interface and creates user accounts with the needed attributes. For MIT KDC, it uses the MIT kadmin utility to request the creation of new principals and export keytab files. And for IPA, it uses the IPA client utilities to request the creation of new principals and export keytab files. In each case, you tell Ambari the administrator credentials in the Enable Kerberos wizard, as in the screenshots above.
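For the MIT KDC case, the two operations described here (create a principal, export its keytab) correspond to standard kadmin queries. The sketch below only builds the query strings for a hypothetical service principal and keytab path; it illustrates the idea and is not the exact command line Ambari runs.

```bash
# Hypothetical helper: print the kadmin queries that create PRINCIPAL with a
# random key and then export its keys to KEYTAB.
kadmin_queries() {
  local principal=$1 keytab=$2
  printf 'addprinc -randkey %s\n' "$principal"
  printf 'ktadd -k %s %s\n' "$keytab" "$principal"
}
```

Each printed line can be passed to `kadmin -p <admin principal> -q "<query>"`. Note that `ktadd` re-randomizes the principal's keys, so exporting a keytab this way invalidates any earlier keytab for the same principal.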

Created 12-08-2018 06:20 PM

@Robert Levas thank you for the clarification; I understand things better now. So, since I am using openLDAP, which KDC type should I use? Ambari setup only gives me two types for automated Kerberos setup: AD and MIT KDC.

Created 12-08-2018 08:09 PM

Active Directory is a Microsoft product that runs on a Microsoft Windows server. It provides a lot of services for a (Windows) network. In this case, it can also provide LDAP and KDC services for your Hadoop cluster. If you do not already have an Active Directory set up, or if you do not wish to use your Active Directory as a KDC for Kerberos authentication, then you probably want to install an MIT KDC. However, if you are using Ambari 2.7.0 or above, you might consider IPA (or FreeIPA), since it is somewhat similar to an Active Directory. An IPA server provides several services for a network, such as DNS, LDAP, and a KDC; however, it will take some learning to get it all installed and working.

That said, if you are already set on using OpenLDAP, you should use the MIT KDC option. See https://web.mit.edu/kerberos/krb5-1.12/doc/admin/install.html for information on installing this KDC. You can even configure it to use OpenLDAP as its backend; see https://web.mit.edu/kerberos/krb5-1.12/doc/admin/conf_ldap.html.

I have a script that will install an MIT KDC using the simplest options (I use it for testing): install-kdc-centossh.txt (I needed to add the .txt extension to the .sh file to upload it here). If you do not edit the script, it will create a KDC with the following properties:

- realm: EXAMPLE.COM
- administrator principal: admin/admin@EXAMPLE.COM
- administrator password: hadoop
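This is not the attached script. It is a rough sketch of what a minimal MIT KDC setup involves on RHEL/CentOS 7, using the same defaults (realm EXAMPLE.COM, admin/admin@EXAMPLE.COM, password hadoop). It assumes root privileges and that /etc/krb5.conf and /var/kerberos/krb5kdc/kdc.conf already name the realm (editing them is not shown). The `run` wrapper and the DRY_RUN switch are hypothetical conveniences for previewing the commands.

```bash
# Print the command instead of running it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Sketch of a minimal MIT KDC bootstrap (test defaults, not for production).
install_mit_kdc() {
  local realm=${REALM:-EXAMPLE.COM}
  local admin=${ADMIN_PRINCIPAL:-admin/admin@$realm}
  local password=${ADMIN_PASSWORD:-hadoop}

  run yum install -y krb5-server krb5-libs krb5-workstation
  # Create the KDC database and stash the master key (-s). Reusing the admin
  # password as the master password only mirrors the test defaults.
  run kdb5_util -r "$realm" -P "$password" create -s
  # Create the administrator principal locally on the KDC host.
  run kadmin.local -r "$realm" -q "addprinc -pw $password $admin"
  # Give every */admin principal full rights in kadmind's ACL file.
  run sh -c "echo '*/admin@$realm *' > /var/kerberos/krb5kdc/kadm5.acl"
  run systemctl enable krb5kdc kadmin
  run systemctl start krb5kdc kadmin
}
```

`DRY_RUN=1 install_mit_kdc` prints the command sequence without changing the system; running it without DRY_RUN performs the installation.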

I hope this helps.

Created 12-10-2018 04:57 PM

If you are on a newer version of Ambari, I recommend taking advantage of the FreeIPA option (basically AD for Red Hat).

Created 12-10-2018 07:29 PM

Thank you @Robert Levas @dvillarreal

Yes, I am using a newer version of Ambari and also tried FreeIPA, since openLDAP didn't seem to work at all with Kerberos.

I followed the exact steps in https://community.hortonworks.com/articles/59645/ambari-24-kerberos-with-freeipa.html. Everything seems to work fine, but it fails when kerberizing the cluster. I get the following error:

Also, it is important to note that I get the following error:

DNS query for data2.testhdp.com. A failed: The DNS operation timed out after 30.0005660057 seconds DNS resolution for hostname data2.testhdp.com failed: The DNS operation timed out after 30.0005660057 seconds Failed to update DNS records. Missing A/AAAA record(s) for host data2.testhdp.com: 172.31.6.79. Missing reverse record(s) for address(es): 172.31.6.79.

I installed the server as:

ipa-server-install --domain=testhdp.com --realm=TESTHDP.COM --hostname=ldap2.testhdp.com --setup-dns --forwarder=8.8.8.8 --reverse-zone=3.2.1.in-addr.arpa.

and the clients on each node as:

ipa-client-install --domain=testhdp.com --server=ldap2.testhdp.com --realm=TESTHDP.COM --principal=hadoopadmin@TESTHDP.COM --enable-dns-updates

Also, after doing the following step from that post:

echo "nameserver ldap2.testhdp.com" > /etc/resolv.conf

my yum is broken, and I need to revert the change to make it work again.
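A possible explanation for the yum breakage: per resolv.conf(5), the `nameserver` keyword takes the IP address of a name server, not a hostname, so a line such as `nameserver ldap2.testhdp.com` is likely unusable by the resolver. Below is a minimal sketch of a hypothetical helper that generates resolv.conf content pointing at the IPA server by address. The server's IP is not given in this thread, so any address passed to it is a placeholder.

```bash
# Hypothetical helper: print resolv.conf content using an IPv4 name server.
# Usage: resolv_conf_for <server-ipv4> <search-domain>
resolv_conf_for() {
  local ip=$1 domain=$2
  # Reject hostnames: resolv.conf expects an address on nameserver lines.
  if ! [[ $ip =~ ^([0-9]{1,3}\.){3}[0-9]{1,3}$ ]]; then
    echo "nameserver must be an IP address, got: $ip" >&2
    return 1
  fi
  printf 'search %s\nnameserver %s\n' "$domain" "$ip"
}
```

For example, `resolv_conf_for <IPA server IP> testhdp.com > /etc/resolv.conf`. On EC2, the DHCP client may later rewrite this file, so the change might need to be made persistent through the network configuration instead.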

Do you guys have any idea about this? I thought DNS was not needed, since I have resolution of *.testhdp.com in the hosts file on all nodes.
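On the reverse-DNS messages: the reverse zone given to ipa-server-install in this post, 3.2.1.in-addr.arpa., covers addresses 1.2.3.0/24. The host in the error message has address 172.31.6.79, whose PTR record would belong in 6.31.172.in-addr.arpa. (or a wider zone such as 31.172.in-addr.arpa.). That mismatch may be why the reverse records are reported missing. A small hypothetical helper that computes the /24 reverse zone for an IPv4 address:

```bash
# Hypothetical helper: print the /24 reverse-DNS zone containing an IPv4 address.
reverse_zone_24() {
  local a b c d extra o
  IFS=. read -r a b c d extra <<< "$1"
  # Each of the four octets must be 0-255; 10# avoids octal parsing of "079".
  for o in "$a" "$b" "$c" "$d"; do
    if ! [[ $o =~ ^[0-9]{1,3}$ ]] || (( 10#$o > 255 )); then
      echo "invalid IPv4 address: $1" >&2
      return 1
    fi
  done
  if [[ -n $extra ]]; then
    echo "invalid IPv4 address: $1" >&2
    return 1
  fi
  printf '%s.%s.%s.in-addr.arpa.\n' "$c" "$b" "$a"
}
```

`reverse_zone_24 172.31.6.79` prints 6.31.172.in-addr.arpa., while `reverse_zone_24 1.2.3.4` prints 3.2.1.in-addr.arpa., the zone that was actually created.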