Support Questions

- Cloudera Community

ListFile on a huge directory

Labels: Apache NiFi

Created 05-27-2020 12:25 PM

Hi, I'm trying to do a recursive list file on a massive directory. We're talking close to 100 GB. It seems like ListFile is walking through every single solitary file before it will spit out the first flow file. I was assuming it would spit out files one at a time as it finds them.

It seems as though there's simply too much data. It just kind of sits there and spins. I left it running over the weekend and it never got through everything or spit anything out. I can't tell if it's still working, if it's overloaded, or what is going on.

How would I go about walking through a directory like this returning one file at a time without duplicates?

Created 05-29-2020 08:50 AM

Hi,

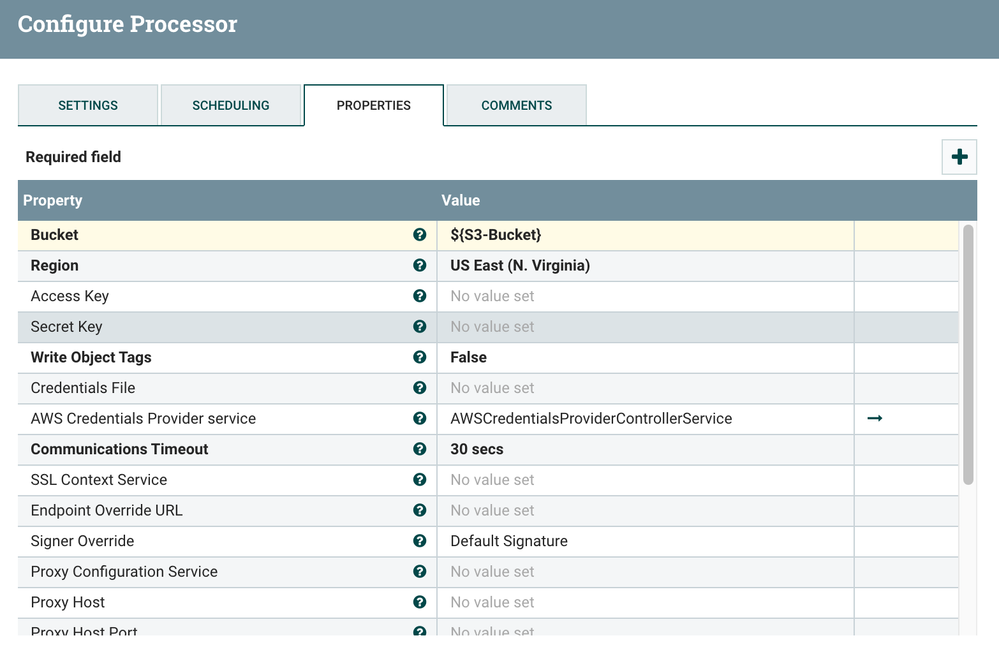

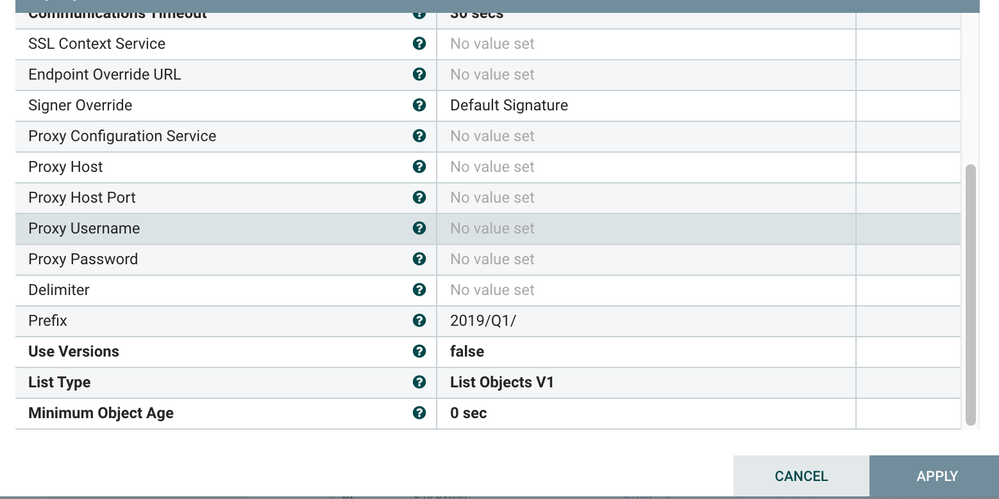

I feel ListS3 should work well even with massive buckets.

It collects only metadata and creates a flow file.

Here are a few things you can try:

1) If the bucket has multiple subdirectories, set a filter for one specific directory in the ListS3 processor. If this helps, you can run multiple processors in parallel, each pointing to the same bucket but a different subdirectory.

In the attached screenshot, "2019/Q1" is one specific directory inside the bucket, and ListS3 will list only the files in that directory.

2) Duplicate (copy and paste) the ListS3 processor you are trying to run, and run the newly created copy.

ListS3 internally tracks the files it has already listed, so it will not re-list them even if you stop and start it. The easy way around this is to duplicate the processor and run the new copy.
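Suggestion 1 amounts to splitting one big listing into several smaller ones, one per subdirectory. The reply is about S3 prefixes; as a rough sketch of the same idea on a local file system (an assumption, not something ListS3 or ListFile does for you), the hypothetical helper `top_level_partitions` below returns the immediate subdirectories of a root so that each one could be given to its own listing processor or script:

```python
import os
from typing import List


def top_level_partitions(root: str) -> List[str]:
    """Return the immediate subdirectories of root, sorted by path.

    Hypothetical helper: each returned path is meant to be listed
    independently, for example by a separate processor instance.
    """
    with os.scandir(root) as entries:
        # Only real directories; symlinked directories are ignored here.
        return sorted(e.path for e in entries if e.is_dir(follow_symlinks=False))
```

Files that sit directly in the root are not covered by any partition, so they would still need one non-recursive listing of their own.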

Thanks

Mahendra

Created 06-01-2020 09:08 AM

Hi heg,

This appears to require some kind of AWS account. I'm not sure that is going to work for what I'm trying to do.

Thanks

Created 05-30-2020 08:57 AM

@ischuldt24 ListFile reads the entire directory and creates a 0 byte flowfile for each file to send to FetchFile. If the run schedule is not controlled for your large folder, it is possible to create endless loops which never complete. What you describe sounds like the run schedule is very low (0 sec is the default), so there is not enough time to finish reading the directory before it tries again.

The length of time that ListFile takes to read the directory depends on the number of files, network connectivity to the file location, and the NiFi environment's configuration and tuning (how many nodes, spec tuning, and flow tuning).

Additionally, it would help community members to know the details of your environment: NiFi version, screenshots of the processor configuration (including the run schedule mentioned above), and details of the NiFi environment nodes (RAM, CPU, cores, etc.).

As for your question about alternatives to ListFile: you can always execute a custom script, but I suspect this may result in some of the same issues you have now if the environment is not capable of handling large numbers of files.
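For reference, here is a minimal sketch of what such a custom script might look like. It is only an illustration, not how ListFile works internally: the function name `iter_files` and the in-memory `seen` set are assumptions made for the example. It walks the tree lazily with `os.scandir` and yields each file as soon as it is found, skipping any path it has already emitted:

```python
import os
from typing import Iterator, Set


def iter_files(root: str, seen: Set[str]) -> Iterator[str]:
    """Yield each regular file under root once, as soon as it is found.

    `seen` holds paths emitted earlier; the caller owns it, so it can be
    reused across calls to avoid duplicates.
    """
    stack = [root]
    while stack:
        current = stack.pop()
        with os.scandir(current) as entries:
            for entry in entries:
                if entry.is_dir(follow_symlinks=False):
                    # Defer subdirectories instead of recursing.
                    stack.append(entry.path)
                elif entry.is_file(follow_symlinks=False):
                    if entry.path not in seen:
                        seen.add(entry.path)
                        yield entry.path
```

Because this is a generator, the first path is available before the rest of the tree has been read. The `seen` set lives in memory only, so a real script would need to persist it (or timestamps) somewhere to avoid duplicates across restarts, and it does nothing about a slow network drive.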

If this answer resolves your issue or allows you to move forward, please choose to ACCEPT this solution and close this topic. If you have further dialogue on this topic, please comment here or feel free to private message me. If you have new questions related to your use case, please create a separate topic and feel free to tag me in your post.

Thanks,

Steven @ DFHZ

Created 06-01-2020 09:06 AM

Hi Steven,

Thanks for the reply. I tried increasing the run schedule, but I haven't seen any success yet. For the record, I'm using NiFi 1.9.2. You were correct that the run schedule was set to zero. Another thing I've noticed is that I tend to get at least one file that errors out somewhere due to bad permissions or a bad filename. Could that cause the entire process to fail? If so, how would I avoid that?

I'm pulling the data off a removable hard drive that I have mapped as a network drive. The exact same setup works just fine on relatively small folders with 20 or so files in them.

It's hard for me to post screenshots, but if you're interested in the properties: I'm tracking timestamps and recursing subdirectories, and I've tried the input directory as both local and remote. The file filter is wide open. The path filter is unchanged (although I tried it with the same regex as the file filter a couple of times). I'm not including file attributes, and I tried bumping the max directory listing time up to a couple of hours.

Created 06-15-2020 12:48 PM

I still haven't had any luck with this at all. Do you know if an error on one specific file could cause the entire process to crash and not complete, or does it attempt to skip over bad files?
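This does not say what ListFile itself does with a bad file, but if the listing were done in a custom script, skipping unreadable entries could look roughly like the sketch below. The function name `list_skipping_errors` and the `errors` list are made up for the example. It relies on the `onerror` callback of `os.walk` for directories that cannot be read and on a `try`/`except` around `os.stat` for individual files:

```python
import os
from typing import Iterator, List, Tuple


def list_skipping_errors(root: str, errors: List[Tuple[str, str]]) -> Iterator[str]:
    """Yield readable file paths under root; record failures in `errors`."""

    def on_error(exc: OSError) -> None:
        # Called by os.walk when a directory cannot be scanned.
        errors.append((exc.filename or root, str(exc)))

    for dirpath, _dirnames, filenames in os.walk(root, onerror=on_error):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                os.stat(path)  # fails on e.g. bad permissions or broken links
            except OSError as exc:
                errors.append((path, str(exc)))
                continue  # skip this file and keep walking
            yield path
```

After the walk, `errors` holds one `(path, message)` pair per entry that was skipped, which at least shows which files are causing trouble.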