Machine Learning in Apache Nifi

Labels: Apache NiFi, Apache Pig, Apache Spark

Created on 04-30-2016 02:53 AM - edited 09-16-2022 03:16 AM


Hello guys,

I want to build a project with Apache NiFi, but I have run into a few problems.

1. Can I do machine learning calculations in Apache NiFi?

I know that I need to use Mahout and Spark, but I can't find Mahout or Spark processors in Apache NiFi. What should I do?

Can you suggest another approach?

2. I want to build a web service with Apache NiFi.

At first I used the ListenHTTP processor, but the text I send becomes a FlowFile. How can I convert it back to text so I can pass it to the GetTwitter processor?

Thanks for your replies 🙂

Created on 04-30-2016 03:10 PM - edited 08-19-2019 03:14 AM


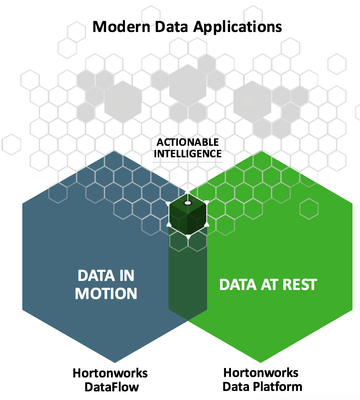

What you are trying to build is what we at Hortonworks call a Connected Data Platform. You have two types of workloads, so you need to use HDF and HDP together.

- ML model construction: the first step towards your goal is to build your machine learning model. This requires processing a lot of historical data (data at rest) to detect patterns related to what you are trying to predict. This is called the "training phase". The best tool for this is HDP, and more specifically Spark.

- Applying the ML model: once step 1 is complete, you will have a model that you can apply to new data to make predictions. As I understand it, you want to apply it to real-time data coming from Twitter (data in motion). To get the data in real time and transform it into what the ML model needs, you can use NiFi. NiFi then sends the data to Storm or Spark Streaming, which applies the model and produces the prediction.

So you will have to use HDP to build the model, HDF to get and transform the data, and finally a combination of HDF and HDP to apply the model and make the prediction.
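The split between the two phases can be sketched in a few lines of plain Python. This is only a toy stand-in, not Spark or NiFi code: the token-weight "model", the function names `train`, `export_model`, `import_model`, and `score`, and the sample data are all assumptions made for illustration. The point is that training runs once over historical data and produces a small artifact, while scoring is a cheap per-record function that a streaming job can call.

```python
import json
from collections import Counter


def train(history):
    """Batch phase: learn token weights from labelled historical text.

    history is an iterable of (text, label) pairs, label 1 or 0.
    In the architecture above this step would run in Spark on HDP.
    """
    pos, neg = Counter(), Counter()
    for text, label in history:
        (pos if label == 1 else neg).update(text.lower().split())
    # Weight = how much more often a token appears in positive examples.
    return {tok: pos[tok] - neg[tok] for tok in set(pos) | set(neg)}


def export_model(weights):
    """Serialize the trained model so the streaming side can load it."""
    return json.dumps(weights)


def import_model(serialized):
    """Load a model produced by export_model."""
    return json.loads(serialized)


def score(weights, text):
    """Streaming phase: apply the model to one incoming record."""
    return sum(weights.get(tok, 0) for tok in text.lower().split())


if __name__ == "__main__":
    # Hypothetical historical data (data at rest).
    history = [("great product", 1), ("bad product", 0), ("great service", 1)]
    model = import_model(export_model(train(history)))
    # Hypothetical incoming record (data in motion).
    print(score(model, "great service"))
```

In a real deployment the model would be a Spark ML model and the scoring function would run inside Storm or Spark Streaming, with NiFi delivering each record to it.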

To build a web service with NiFi you need several processors: one to listen for incoming requests, one or more to implement your logic (transformation, extraction, etc.), and one to publish the result. The page below contains several example data flows; the "Hello_NiFi_Web_Service.xml" template shows how to do it.

https://cwiki.apache.org/confluence/display/NIFI/Example+Dataflow+Templates
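To try out such a flow from the client side, a small script can post text to the listening processor. The sketch below uses only the Python standard library; the host, the port 8081, and the helper names `build_request` and `send_text` are assumptions, and `contentListener` is, as far as I know, the default Base Path of ListenHTTP, so adjust the URL to match your processor configuration. The request body arrives in NiFi as the content of a FlowFile, which is why the text "becomes a FlowFile": the text is still there as the FlowFile's content.

```python
import urllib.request


def build_request(url, text):
    """Build an HTTP POST whose body is the given text, encoded as UTF-8."""
    return urllib.request.Request(
        url,
        data=text.encode("utf-8"),
        headers={"Content-Type": "text/plain; charset=utf-8"},
        method="POST",
    )


def send_text(url, text, timeout=10):
    """Send the text and return (HTTP status code, response body)."""
    with urllib.request.urlopen(build_request(url, text), timeout=timeout) as resp:
        return resp.status, resp.read().decode("utf-8", errors="replace")


if __name__ == "__main__":
    # Assumed endpoint: a listening processor on localhost, port 8081.
    status, body = send_text("http://localhost:8081/contentListener", "hello nifi")
    print(status, body)
```

The same client should work against a request/response style flow such as the web service template, as long as the URL points at the port and path that flow listens on.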

Created 06-30-2016 11:09 AM


Hi,

I have tried building a web service with NiFi and am able to receive the incoming requests and pass them to Spark/Storm. Assuming that I compute the prediction inside Spark, how can I send the score/result back to NiFi as the response?

If that is not currently possible, how feasible would it be to create a custom processor in R that predicts the scores and passes them on as the response?

Thanks.

Created 07-21-2017 08:13 AM


Can we automate an R script in NiFi?

Created 10-08-2017 10:33 PM


Call Spark: https://community.hortonworks.com/articles/73828/submitting-spark-jobs-from-apache-nifi-using-livy.h...

Or call machine learning from NiFi, or write machine learning or deep learning components in NiFi:

https://community.hortonworks.com/articles/80418/open-nlp-example-apache-nifi-processor.html
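For the Livy route, a minimal sketch of submitting a Spark batch job over Livy's REST API might look like the following. It may differ from what the linked article does. The Livy host, the port 8998, the application path, and the helper name `build_batch_request` are assumptions; the `/batches` endpoint and the `file`, `className`, and `args` fields come from the Livy REST API. From NiFi, an equivalent HTTP request could be sent by a processor such as InvokeHTTP instead of a script.

```python
import json
import urllib.request


def build_batch_request(livy_url, app_file, class_name=None, args=None):
    """Build a POST to Livy's /batches endpoint that submits a Spark job."""
    payload = {"file": app_file}  # path to the jar or .py file to run
    if class_name:
        payload["className"] = class_name  # main class, for jar applications
    if args:
        payload["args"] = list(args)  # command-line arguments for the job
    return urllib.request.Request(
        livy_url.rstrip("/") + "/batches",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical Livy server and application path.
    req = build_batch_request(
        "http://livy-host:8998", "hdfs:///apps/score_tweets.py", args=["input"]
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        # Livy replies with a JSON description of the submitted batch.
        print(json.loads(resp.read().decode("utf-8")))
```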

Created 10-08-2017 10:34 PM


It is easy to integrate NiFi -> Kafka -> Spark, Storm, Flink, or Apex.

Also NiFi -> S2S (site-to-site) -> Spark / Flink / ...
