MergeRecord Defragment confusing record count and fragment.count

- Labels: Apache NiFi

Created on 12-17-2018 09:38 PM - edited 09-16-2022 06:59 AM

Hello, I have a pagination scenario, where an API returns 50 records at a time. Total count is 368, so multiple pages are involved. My goal is to combine all into a single FlowFile. NiFi version is 1.8.0.

I have set up a Notify/Wait scenario to wait until all 8 pages are gathered. Then I send all of them to a MergeRecord processor with a Defragment merge strategy. Before the MergeRecord processor, the defragment attributes of fragment.identifier, fragment.count, and fragment.index are all properly set.
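To make the setup concrete, here is a minimal Python sketch of the attributes each page would carry for 368 records at 50 per page. The helper name `page_attributes`, the `records` key, and the 0-based `fragment.index` are assumptions for illustration only; the actual values come from the flow shown in the screenshots.

```python
import math
import uuid


def page_attributes(total_records, page_size):
    """Build one attribute dict per page (hypothetical helper, not a NiFi API)."""
    page_count = math.ceil(total_records / page_size)
    fragment_id = str(uuid.uuid4())  # shared by every page of the same result set
    pages = []
    for index in range(page_count):
        # The last page holds whatever is left over after the full pages.
        records = min(page_size, total_records - index * page_size)
        pages.append({
            "fragment.identifier": fragment_id,
            "fragment.index": str(index),        # assumed 0-based here
            "fragment.count": str(page_count),   # number of FlowFiles, not records
            "records": records,                  # illustrative only, not a NiFi attribute
        })
    return pages


pages = page_attributes(368, 50)
print(len(pages), [p["records"] for p in pages])
```

With these numbers, `fragment.count` is 8 on every page, while the record count per page is 50 (18 on the last page).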

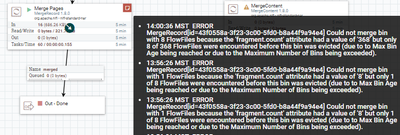

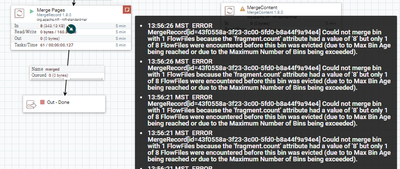

I expect the MergeRecord processor to join the 8 FlowFiles (368 records in total) into a single FlowFile containing all 368 records. However, I receive the error "fragment.count had a value of 8 but only 1 of 8 FlowFiles were encountered before this bin was evicted."

As part of troubleshooting, I set the fragment.count to 368 for all the flowfiles. Similar error message, but interestingly it found all 8 of the flowfiles this time.

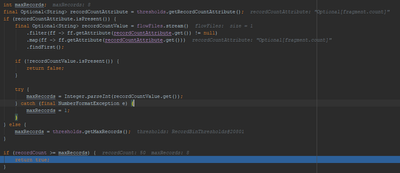

I ended up debugging from source to understand what was going on. The code compares the record count of the first FlowFile (50) to maxRecords (8), which is populated from the fragment.count attribute. That is not an apples-to-apples comparison: one value is the number of records in an individual FlowFile, the other is the number of expected FlowFiles.
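The behaviour described above can be modelled with a short Python sketch. This is a simplified model of what I observed, not NiFi's actual code: the function name `simulate_defragment` and the exact order of the checks are assumptions. It treats fragment.count as a limit on the number of records in the bin, then checks it against the number of FlowFiles when the bin is closed.

```python
def simulate_defragment(record_counts, fragment_count):
    """Simplified model of the observed binning; not taken from NiFi source.

    record_counts: records in each incoming FlowFile, in arrival order.
    fragment_count: value of the fragment.count attribute.
    Returns (flowfiles_binned, merged_ok).
    """
    binned_flowfiles = 0
    binned_records = 0
    for records in record_counts:
        # Assumption: the bin is treated as full once the number of
        # *records* reaches fragment.count.
        if binned_records >= fragment_count:
            break
        binned_flowfiles += 1
        binned_records += records
    # Assumption: the merge only succeeds if the number of *FlowFiles*
    # in the bin equals fragment.count.
    merged_ok = binned_flowfiles == fragment_count
    return binned_flowfiles, merged_ok


pages = [50] * 7 + [18]  # 8 pages, 368 records

print(simulate_defragment(pages, 8))    # fragment.count = number of pages
print(simulate_defragment(pages, 368))  # fragment.count = number of records
```

Under this model, fragment.count = 8 bins only the first FlowFile, and fragment.count = 368 bins all 8 but still fails the FlowFile-count check, which matches the two error messages I saw.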

What am I missing here?

Created on 12-17-2018 11:37 PM - edited 08-17-2019 03:44 PM

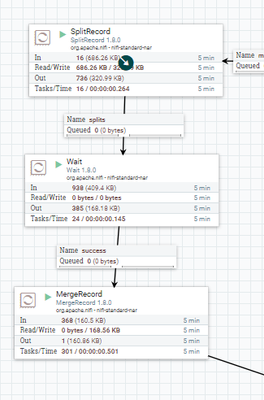

I can get it to work if I use a SplitRecord to split all the paged files into flowfiles with a single record each. In this case, fragment.count is equal to the count of flow files, which are both equal to the total number of records.
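The condition that makes this workaround succeed can be written as a small Python check. This only restates the observation above; `counts_line_up` is a hypothetical helper, not something NiFi evaluates in this form.

```python
def counts_line_up(record_counts, fragment_count):
    """True when FlowFile count, total record count and fragment.count all agree."""
    return len(record_counts) == fragment_count == sum(record_counts)


paged = [50] * 7 + [18]   # 8 FlowFiles, 368 records
split = [1] * 368         # 368 FlowFiles with a single record each

print(counts_line_up(paged, 8))    # pages with fragment.count = 8
print(counts_line_up(split, 368))  # single-record FlowFiles with fragment.count = 368
```

The three numbers can only agree when every FlowFile holds exactly one record, which is why splitting to single records happens to work.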

I do not believe this is the intended design of the MergeRecord processor. Has anyone been successful configuring the MergeRecord processor in Defragment mode?

Created 02-05-2019 05:21 AM

Hi, I have the exact same issue. Was this issue resolved? @Adam Roderick, were you able to use the MergeRecord processor in Defragment mode?

Created 02-05-2019 09:47 PM

No, @Rohit K, unfortunately the issue persists.