Minimal executable jar based on Scala code packed with Maven

- Labels: Apache Spark

Created on 03-21-2017 02:59 PM - edited 08-18-2019 04:06 AM

Hi folks,

I would like to build a minimal Scala example (something like a hello world), package it with Maven, and run it on an HDP 2.5 sandbox. What do I need to specify in my pom.xml? So far I have this:

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">
  <modelVersion>4.0.0</modelVersion>
  <groupId>com.test.spark</groupId>
  <artifactId>Test</artifactId>
  <version>0.0.1</version>
  <name>${project.artifactId}</name>
  <description>Simple test app</description>
  <inceptionYear>2017</inceptionYear>
  <!-- change from 1.6 to 1.7 depending on Java version -->
  <properties>
    <maven.compiler.source>1.6</maven.compiler.source>
    <maven.compiler.target>1.6</maven.compiler.target>
    <encoding>UTF-8</encoding>
    <scala.version>2.11.5</scala.version>
    <scala.compat.version>2.11</scala.compat.version>
    <spark.version>1.6.1</spark.version>
  </properties>
  <dependencies>
    <dependency>
      <groupId>org.scala-lang</groupId>
      <artifactId>scala-library</artifactId>
      <version>${scala.version}</version>
    </dependency>
    <!-- Spark dependency -->
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-core_${scala.compat.version}</artifactId>
      <version>${spark.version}</version>
    </dependency>
    <!-- Spark sql dependency -->
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-sql_${scala.compat.version}</artifactId>
      <version>${spark.version}</version>
    </dependency>
    <!-- Spark hive dependency -->
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-hive_${scala.compat.version}</artifactId>
      <version>${spark.version}</version>
    </dependency>
  </dependencies>
  <build>
    <sourceDirectory>src/main/scala</sourceDirectory>
    <!-- Create JAR with all dependencies -->
    <plugins>
      <plugin>
        <artifactId>maven-assembly-plugin</artifactId>
        <version>3.0.0</version>
        <executions>
          <execution>
            <phase>package</phase>
            <goals>
              <goal>single</goal>
            </goals>
          </execution>
        </executions>
        <configuration>
          <descriptorRefs>
            <descriptorRef>jar-with-dependencies</descriptorRef>
          </descriptorRefs>
        </configuration>
      </plugin>
      <plugin>
        <!-- see http://davidb.github.com/scala-maven-plugin -->
        <groupId>net.alchim31.maven</groupId>
        <artifactId>scala-maven-plugin</artifactId>
        <version>3.2.2</version>
        <configuration>
          <scalaVersion>${scala.version}</scalaVersion>
          <scalaCompatVersion>${scala.compat.version}</scalaCompatVersion>
        </configuration>
        <executions>
          <execution>
            <phase>compile</phase>
            <goals>
              <goal>compile</goal>
            </goals>
          </execution>
        </executions>
      </plugin>
    </plugins>
  </build>
</project>

And my Scala code is this:

package com.test.spark

import org.apache.spark.SparkContext
import org.apache.spark.SparkConf
import org.apache.spark.sql
import org.apache.commons.lang
import org.apache.spark.sql.SQLContext
import org.apache.spark.sql.hive.HiveContext
import org.apache.spark.rdd.RDD

object Test {
  def main(args: Array[String]) {
    val conf = new SparkConf().setAppName("Test").setMaster("local[2]")
    val spark = new SparkContext(conf)
    println("Hello World!")
  }
}

I run the code with

spark-submit --class com.test.spark.Test --master yarn --deploy-mode cluster hdfs://HDP25/test.jar

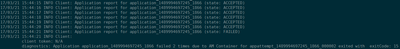

Unfortunately it does not run. 😞 See the image attached.

Am I missing something? Can you please help me to get a minimal example running?

Thanks and kind regards

Created 03-22-2017 04:45 PM

Thanks @Ken Jiiii

Looking at your error, the application master failed twice with exit code 15. Did you check whether hive-site.xml is in place in your /spark/conf? Also, since you are running on YARN, try removing .setMaster("local[2]") from your code.

Then try running it with:

spark-submit --class com.test.spark.Test --master yarn-cluster hdfs://HDP25/test.jar

Created 03-23-2017 06:39 AM

Hi @Vinod Bonthu,

your answer really helped, but unfortunately it wasn't the complete solution. As stated in my error log, I had to add some jars via the

spark-submit --jars

option, and I also added my hive-site.xml with

spark-submit --files /usr/hdp/current/spark-client/conf/hive-site.xml

With those two changes and removing

.setMaster("local[2]")

it worked!

Thanks for your help!
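For anyone landing here later, the pieces described in this reply can be combined into a single command. The sketch below is not the exact command that was used: the jar list is a placeholder (the thread never names the jars, so take them from your own error log), and `build_submit_cmd`, `EXTRA_JARS`, `HIVE_SITE` and `APP_JAR` are names made up for illustration. It only prints the command, so it can be checked before it is run.

```shell
#!/bin/sh
# Sketch of the combined submit command described in this reply.
# ASSUMPTION: EXTRA_JARS is a placeholder. The thread does not say which jars
# were needed; use the ones your own error log complains about.
EXTRA_JARS="/path/to/first.jar,/path/to/second.jar"   # hypothetical paths
HIVE_SITE="/usr/hdp/current/spark-client/conf/hive-site.xml"
APP_JAR="hdfs://HDP25/test.jar"

build_submit_cmd() {
  # Print the command instead of executing it, so it can be reviewed first.
  echo spark-submit \
    --class com.test.spark.Test \
    --master yarn \
    --deploy-mode cluster \
    --jars "$EXTRA_JARS" \
    --files "$HIVE_SITE" \
    "$APP_JAR"
}

build_submit_cmd
```

Once the printed command looks right, paste it into the shell or remove the `echo`. On Spark 1.6, `--master yarn-cluster` from the earlier reply selects the same mode as `--master yarn --deploy-mode cluster`. Remember that `.setMaster("local[2]")` also has to be removed from the Scala code, otherwise it overrides the master given on the command line.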

Created 03-23-2017 04:44 PM

Awesome @Ken Jiiii

hive-site.xml should be available across the cluster in /etc/spark/conf (which /usr/hdp/current/spark-client/conf is a symlink to). The Spark client also needs to be installed on all worker nodes for yarn-cluster mode to work, because your Spark driver can run on any worker node, and that node needs the client installed along with spark/conf. If you are using Ambari, it takes care of making hive-site.xml available in /spark-client/conf/.
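A quick way to verify this on a node is to test for the file directly. This is a minimal sketch, assuming a POSIX shell on the node; `check_hive_site` is a hypothetical helper name, and its default path is simply the one mentioned in this thread.

```shell
#!/bin/sh
# Sketch: report whether a Spark conf directory contains hive-site.xml.
# check_hive_site is a made-up helper; pass a directory as the first
# argument, or rely on the default path taken from this thread.
check_hive_site() {
  conf_dir="${1:-/usr/hdp/current/spark-client/conf}"
  if [ -f "$conf_dir/hive-site.xml" ]; then
    echo "found: $conf_dir/hive-site.xml"
  else
    echo "missing: $conf_dir/hive-site.xml"
    return 1
  fi
}

# Check the directory that the symlink is said to point to.
check_hive_site /etc/spark/conf || echo "hive-site.xml needs to be deployed on this node"
```

Since the driver can land on any worker in yarn-cluster mode, the check is only meaningful if it is run on every worker node, not just the one you submit from.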
