Support Questions

NiFi - how to efficiently remove a line from a big flow file?

Labels: Apache NiFi

Created on 02-24-2017 03:21 PM - edited 08-19-2019 03:13 AM

The task may seem easy, but in fact it isn't...

I have a big flow file (>1 GB) from which I need to remove, let's say, the first line (the header) before further processing.

So far I have made 3 attempts, but none of them works as expected:

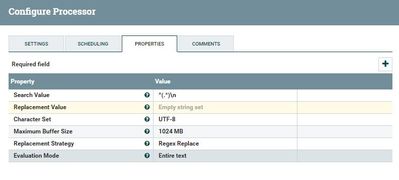

1) ReplaceText

Works for small files, but the problematic file is too big to load into memory (I get a memory out-of-bounds exception).

2) SplitText

I tried to use SplitText, but due to this issue I currently cannot skip the header line in this processor.

In other words, this processor fails whenever Header Line Count > 0.

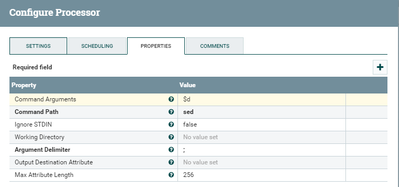

3) ExecuteProcess

I can imagine running a Linux command (e.g. tail or sed) to do this job, but it requires saving the flow file to disk, which might also be costly.
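Both the memory problem with ReplaceText and the cost concern come down to whether the content is buffered whole or streamed. For comparison, here is a minimal Python sketch (not NiFi code, and not a claim about what any processor does internally) of removing the first line while streaming: the input is read in fixed-size chunks, bytes are discarded up to the first newline, and everything after it is copied through, so peak memory stays around one chunk regardless of file size. The function names and the 64 KiB default chunk size are illustrative assumptions.

```python
import io
from typing import BinaryIO


def drop_first_line(src: BinaryIO, dst: BinaryIO, chunk_size: int = 64 * 1024) -> int:
    """Copy src to dst, skipping everything up to and including the first newline.

    At most one chunk of chunk_size bytes is held in memory at a time, so peak
    memory does not grow with the size of the input. Returns the number of
    bytes written to dst.
    """
    written = 0
    skipping = True  # True while we are still inside the first (header) line
    while True:
        chunk = src.read(chunk_size)
        if not chunk:  # end of input
            break
        if skipping:
            newline = chunk.find(b"\n")
            if newline == -1:
                continue  # the header line continues into the next chunk
            chunk = chunk[newline + 1:]  # keep only what follows the header
            skipping = False
        dst.write(chunk)
        written += len(chunk)
    return written


def drop_first_line_bytes(data: bytes, chunk_size: int = 64 * 1024) -> bytes:
    """Convenience wrapper for small in-memory inputs (e.g. quick checks)."""
    out = io.BytesIO()
    drop_first_line(io.BytesIO(data), out, chunk_size)
    return out.getvalue()
```

For example, `drop_first_line_bytes(b"id,name\n1,a\n2,b\n", chunk_size=4)` returns `b"1,a\n2,b\n"`. On a real file you would pass `open(path, "rb")` and `open(out_path, "wb")`; scanning chunks rather than calling `readline()` also copes with a header line longer than one chunk.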

Do you have any ideas if this can be done more efficiently?

Thanks, Michal

Created 02-24-2017 03:28 PM

I suggest using SplitText several times so you avoid loading all of the resulting flow files into memory at once. Go from 1 million -> 100,000 -> 10,000 -> 1,000 -> 1. You can also use fewer stages, e.g. 1 million -> 10,000 -> 1,000 -> 1. From there, use RouteText to route the header line to one destination and the rest of the lines to another.
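The staged splitting suggested here can be modelled in a few lines of Python. This is a toy illustration, not NiFi code: `split_lines` plays the role of one SplitText stage, `cascade_split` chains stages such as 10,000 -> 1,000 -> 1, and `drop_header_lines` stands in for the routing step (RouteText) that sends the header one way and the data lines another. All three names are hypothetical. Only one first-stage batch is held in memory at a time, which is the point of splitting in several steps rather than going straight to one-line pieces.

```python
from itertools import islice
from typing import Callable, Iterable, Iterator, List, Sequence


def split_lines(lines: Iterable[str], n: int) -> Iterator[List[str]]:
    """Lazily yield consecutive batches of at most n lines (one split stage)."""
    it = iter(lines)
    while True:
        batch = list(islice(it, n))
        if not batch:
            return
        yield batch


def cascade_split(lines: Iterable[str], sizes: Sequence[int]) -> Iterator[List[str]]:
    """Split in stages, e.g. sizes=(10_000, 1_000, 1).

    Only one first-stage batch (at most sizes[0] lines) is held in memory at a
    time; later stages cut that batch into smaller pieces.
    """
    if not sizes:
        yield list(lines)
        return
    for batch in split_lines(lines, sizes[0]):
        yield from cascade_split(batch, sizes[1:])


def drop_header_lines(
    lines: Iterable[str],
    sizes: Sequence[int],
    is_header: Callable[[str], bool],
) -> Iterator[str]:
    """Route each piece: header lines are discarded, all other lines pass on."""
    for piece in cascade_split(lines, sizes):
        for line in piece:
            if not is_header(line):
                yield line
```

Because the header is recognized by its content, any data line identical to the header would also be dropped; with real data, choose a match that only the header line satisfies.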

Created 02-24-2017 03:29 PM

Can you please give us some context? Where are you getting this file from? SFTP?

Whether you explicitly do this or not, a flow file received in NiFi is always saved to disk. If this can be done easily with ExecuteProcess, it is a good option, and it really will not impact your flow's performance. NiFi is very efficient at file I/O.

Created 02-24-2017 03:45 PM

@Karthik Narayanan The file is local on the NiFi box. I know that ExecuteProcess would be an option, but I'd like to avoid saving the file to disk. How about ExecuteStreamCommand with something like `sed '1d' simple.tsv > noHeader.tsv`, but in the form `sed '1d' myFlow > myFlow`? How can I reference myFlow in this command?
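ExecuteStreamCommand writes the flow file content to the command's standard input and, by default, uses the command's standard output as the new content, so there is no file name to reference: `sed '1d'` with no file operand reads from stdin. Setting Command Path to `sed` and Command Arguments to `1d` should therefore be enough (no shell quoting is needed, since the processor does not run the command through a shell). If sed is not an option, a small Python filter could serve as the command instead. The sketch below is hypothetical (the function names are made up) and assumes the header is a short line.

```python
import io
import shutil
import sys
from typing import BinaryIO


def strip_header(src: BinaryIO, dst: BinaryIO) -> None:
    """Discard the first line of src and stream the remainder to dst."""
    src.readline()                # consume the header (assumed to be a short line)
    shutil.copyfileobj(src, dst)  # copy the rest in fixed-size chunks


def strip_header_bytes(data: bytes) -> bytes:
    """Convenience wrapper for small in-memory inputs (e.g. quick checks)."""
    out = io.BytesIO()
    strip_header(io.BytesIO(data), out)
    return out.getvalue()


def main() -> None:
    """Entry point when used as an ExecuteStreamCommand command: stdin -> stdout."""
    strip_header(sys.stdin.buffer, sys.stdout.buffer)
    sys.stdout.buffer.flush()
```

Saved as a script (say, a hypothetical `strip_header.py`) ending with the usual `if __name__ == "__main__": main()` guard, it would be run with Command Path set to the Python interpreter and Command Arguments set to the script path.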

Created 02-24-2017 04:02 PM

FWIW, the https://issues.apache.org/jira/browse/NIFI-3255 issue has been resolved and is available in the current master.

Created on 03-15-2019 03:04 PM - edited 08-19-2019 03:13 AM

Use the ExecuteStreamCommand processor with a sed command, something like this. This worked for me.