NiFi wait processor huge number of events IN compared to OUT.

Labels: Apache NiFi

Created on 03-26-2020 05:40 AM, last edited on 03-26-2020 05:54 AM by cjervis

I need help configuring the Wait processor.

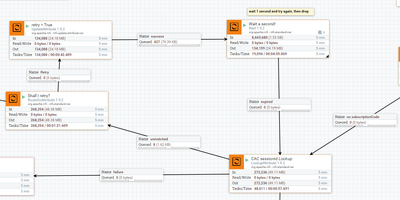

I am trying to execute a lookup. If it does not match, the FlowFile enters a retry loop with a 1-second wait. On the second loop, the message is dropped.

The mechanism works, but I am worried about the numbers reported by the Wait processor.

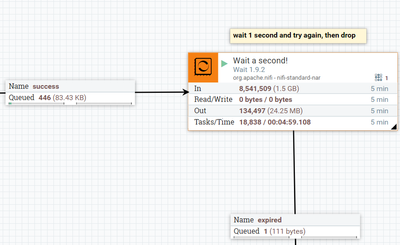

It shows 8 million files IN and 134k files OUT.

Is this a correct setup?

Why are there 8 million IN, and where are they coming from?

How to avoid this?

I worry it will crash when more traffic comes in.

Thank you

Created 03-27-2020 01:39 PM

No matter which processor you are looking at, the stats presented always mean the same thing:

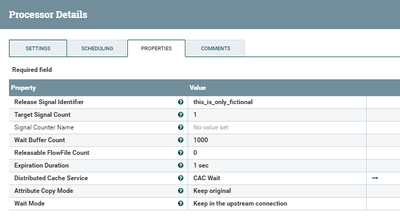

In <-- Tells you how many FlowFiles were processed from one or more inbound connections over the last rolling 5 minute window. You have this processor's "wait mode" configured to leave the FlowFile on the inbound connection, so the processor looks at the same FlowFile over and over again until the configured expiration time has elapsed.

Read/Write <-- Tells you how much FlowFile content was read from or written to the NiFi content repository (helps the user identify processors that may be disk I/O heavy).

Out <-- Tells you how many FlowFiles have been released to an outbound connection over the last rolling 5 minute window. Here you see a number that reflects only those FlowFiles that expired and were sent to your outbound expired connection.

Tasks/Time <-- Tells you how many threads this processor completed over the last rolling 5 minutes and the total cumulative CPU time those threads consumed (helps the user identify which processors consume lots of CPU time).

So the stats you are seeing are not surprising.
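To make the In versus Out gap concrete, here is a toy model in Python. It is not NiFi code: the function name `simulate_wait`, the run interval, and the assumption that each scheduled run counts every still-queued FlowFile once toward In are simplifications invented for this sketch.

```python
def simulate_wait(num_flowfiles, expiration_ms, run_interval_ms):
    """Toy model of a Wait processor that leaves FlowFiles on the inbound queue.

    Assumption (not NiFi internals): every scheduled run examines each
    waiting FlowFile once, and each examination counts toward "In".
    A FlowFile counts toward "Out" only once, when it expires.
    """
    in_count = 0
    out_count = 0
    waiting = num_flowfiles
    elapsed_ms = 0
    while waiting > 0:
        in_count += waiting            # every look at a queued FlowFile is an "In"
        if elapsed_ms >= expiration_ms:
            out_count += waiting       # expired FlowFiles leave exactly once
            waiting = 0
        elapsed_ms += run_interval_ms
    return in_count, out_count


in_count, out_count = simulate_wait(
    num_flowfiles=100, expiration_ms=1000, run_interval_ms=10
)
print(in_count, out_count)  # prints "10100 100"
```

In this model, 100 FlowFiles that each wait 1 second and are re-examined every 10 ms produce 10,100 In events but only 100 Out events, which is the same shape as the numbers in the question.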

While this processor works for your use case, it carries the overhead of connecting to a distributed map cache on every execution against an inbound FlowFile. If your intent is only to delay a FlowFile for 1 second before it proceeds down the flow path, a better solution may be to use an UpdateAttribute processor that creates an attribute holding the current time, followed by a RouteOnAttribute processor that checks whether that recorded time plus 1000 ms is less than the current time. Loop the FlowFile back through that check until the condition is true.
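The timestamp check described above can be sketched in Python as follows. This only models the logic; it is not how you configure NiFi. The attribute name `delay.start` and the function names are hypothetical, and the NiFi Expression Language shown in the comments is my assumption of what the two processors would use, so verify it against your NiFi version.

```python
def stamp(attributes, now_ms):
    """Model of UpdateAttribute: record the current time on the FlowFile.

    Assumed NiFi equivalent: delay.start = ${now():toNumber()}
    """
    updated = dict(attributes)
    updated["delay.start"] = str(now_ms)   # NiFi attributes are strings
    return updated


def route(attributes, now_ms, delay_ms=1000):
    """Model of RouteOnAttribute: release once start + delay is in the past.

    Assumed NiFi equivalent:
        ${delay.start:plus(1000):lt(${now():toNumber()})}
    Anything that does not match loops back to this check.
    """
    start_ms = int(attributes["delay.start"])
    return "released" if start_ms + delay_ms < now_ms else "waiting"


# Usage with fixed timestamps so the result is deterministic.
flowfile = stamp({"filename": "example.txt"}, now_ms=5000)
print(route(flowfile, now_ms=5500))  # prints "waiting"
print(route(flowfile, now_ms=6001))  # prints "released"
```

Because the check reads only FlowFile attributes, it needs no cache lookup. A looping FlowFile will still be counted each time it passes through RouteOnAttribute, so that processor's In stat will also grow with every pass.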

Hope this helps,

Matt
