Support Questions

NiFi & Hive are not running map jobs in parallel. How can I better utilize my Big Data?

Created on 10-19-2016 12:51 PM - edited 08-19-2019 01:32 AM

Hi all,

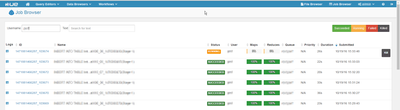

I'm in the middle of a massive ingest process in NiFi using PutHiveQL, which is pretty much choking.

Using: NiFi 1 (Beta - until the queues get empty) and CDH 5.4.3 (Hive 1.1)

I have done everything I could think of to enable parallel processing, but I still see only one or two jobs running in parallel.

Do you think I'm missing something?

1. Hive configuration: enabled hive.exec.parallel and increased hive.exec.parallel.thread.number

    <property>
      <name>hive.exec.parallel</name>
      <value>true</value>
      <description>Whether to execute jobs in parallel</description>
    </property>
    <property>
      <name>hive.exec.parallel.thread.number</name>
      <value>35</value>
      <description>Whether to execute jobs in parallel</description>
    </property>

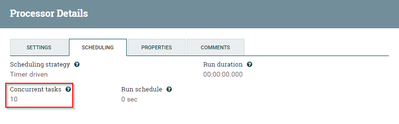

2. Set Concurrent Tasks on the Connect2HiveAndExec processor to 10

3. Increased the maximum thread count in the NiFi settings

Created 10-19-2016 12:57 PM

PutHiveQL is not really intended for "massive ingest" purposes, since it has to go through the Hive JDBC driver, which has a lot of overhead for a single insert. PutHiveStreaming is probably what you want to use, or you can just write the data to a directory in HDFS (using PutHDFS) and create a Hive external table on top of it.
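As a rough illustration of the PutHDFS plus external table route, the sketch below builds the DDL you would run once in Hive. The table name, columns, delimiter, and HDFS path are all hypothetical placeholders, and it assumes the files landed by PutHDFS are plain delimited text.

```python
def external_table_ddl(table, columns, location, delimiter=","):
    """Build a CREATE EXTERNAL TABLE statement for delimited text files.

    All arguments are illustrative; adjust them to the real schema and
    to the directory that PutHDFS writes into.
    """
    cols = ",\n  ".join(f"`{name}` {hive_type}" for name, hive_type in columns)
    return (
        f"CREATE EXTERNAL TABLE IF NOT EXISTS {table} (\n  {cols}\n)\n"
        f"ROW FORMAT DELIMITED FIELDS TERMINATED BY '{delimiter}'\n"
        f"STORED AS TEXTFILE\n"
        f"LOCATION '{location}'"
    )


# Hypothetical table and landing directory, for illustration only.
ddl = external_table_ddl(
    "events_raw",
    [("event_time", "STRING"), ("payload", "STRING")],
    "/data/landing/events",
)
print(ddl)
```

Once the table exists, every file dropped into that directory becomes queryable without issuing any INSERT statement.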

Created 10-19-2016 01:13 PM

Thanks for the fastest response ever 🙂

You are generally right, but this flow should stabilize soon, once it finishes loading the history files and only has to handle two files per minute. The current "backlog" is about 2500 insert commands waiting in the queue. Maybe I exaggerated by using "massive ingest" to describe the problem...

Is there another way to temporarily boost this process?

Created 10-19-2016 01:24 PM

I suspect it is something more on the Hive side of things, which is out of my domain.

Increasing the concurrent tasks on the PutHiveQL processor is the appropriate approach on the NiFi side; somewhere between 1 and 5 concurrent tasks is usually enough. But the concurrent tasks can only work as fast as whatever they are calling. If all 10 of your threads make a call to the Hive JDBC driver, and 2 of them are doing work while 8 are blocked by something in Hive, then there isn't much NiFi can do.
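A toy simulation of that situation, not NiFi or Hive code: ten client threads call a stand-in "backend" that, by assumption, only lets two calls proceed at a time. The names `BACKEND_SLOTS` and `fake_insert` and the sleep duration are made up for the sketch.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor

BACKEND_SLOTS = 2     # assumption: the backend runs only 2 statements at once
CLIENT_THREADS = 10   # mirrors 10 concurrent tasks on the processor

slots = threading.Semaphore(BACKEND_SLOTS)
lock = threading.Lock()
stats = {"in_flight": 0, "peak": 0}


def fake_insert(_):
    """Stand-in for one JDBC call; waits until the backend has a free slot."""
    with slots:
        with lock:
            stats["in_flight"] += 1
            stats["peak"] = max(stats["peak"], stats["in_flight"])
        time.sleep(0.01)  # pretend the backend is doing work
        with lock:
            stats["in_flight"] -= 1


with ThreadPoolExecutor(max_workers=CLIENT_THREADS) as pool:
    list(pool.map(fake_insert, range(40)))

print("peak statements running at once:", stats["peak"])
```

However many client threads are configured, the peak never exceeds the backend limit, which matches seeing only one or two jobs running.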

Created 10-26-2016 04:02 PM

Hi, I found this about insert operations and parallelism:

Note: The INSERT ... VALUES technique is not suitable for loading large quantities of data into HDFS-based tables, because the insert operations cannot be parallelized, and each one produces a separate data file. Use it for setting up small dimension tables or tiny amounts of data for experimenting with SQL syntax, or with HBase tables. Do not use it for large ETL jobs or benchmark tests for load operations. Do not run scripts with thousands of INSERT ... VALUES statements that insert a single row each time. If you do run INSERT ... VALUES operations to load data into a staging table as one stage in an ETL pipeline, include multiple row values if possible within each VALUES clause, and use a separate database to make cleanup easier if the operation does produce many tiny files.

http://www.cloudera.com/documentation/enterprise/5-5-x/topics/impala_insert.html
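The advice to "include multiple row values if possible within each VALUES clause" could be applied to a queue of single-row inserts roughly as follows. The quoted note is from the Impala documentation; Hive 0.14 and later also accepts multi-row `INSERT ... VALUES`. This is only a sketch: the table name and rows are hypothetical, the batch size is arbitrary, and the literal quoting is deliberately simplistic.

```python
def sql_literal(value):
    """Render a Python value as a SQL literal (simplistic, for illustration)."""
    if value is None:
        return "NULL"
    if isinstance(value, (int, float)):
        return str(value)
    escaped = str(value).replace("\\", "\\\\").replace("'", "\\'")
    return "'" + escaped + "'"


def batched_inserts(table, rows, batch_size=500):
    """Yield one multi-row INSERT per batch instead of one INSERT per row."""
    for start in range(0, len(rows), batch_size):
        chunk = rows[start:start + batch_size]
        values = ", ".join(
            "(" + ", ".join(sql_literal(v) for v in row) + ")" for row in chunk
        )
        yield f"INSERT INTO TABLE {table} VALUES {values}"


# Hypothetical table and rows; three rows collapse into two statements.
rows = [(1, "a"), (2, "b"), (3, "it's")]
statements = list(batched_inserts("events", rows, batch_size=2))
for stmt in statements:
    print(stmt)
```

With a backlog of about 2500 single-row inserts, a batch size of 500 would reduce that to five statements, and so to far fewer jobs and small files.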