Cloudera Community: Support Questions


Not able to parse text file to json format

Labels: Apache NiFi

Created on 01-23-2020 06:51 AM, last edited on 01-25-2020 02:52 PM by ask_bill_brooks


Hi folks, I have a problem parsing a JSON text file.

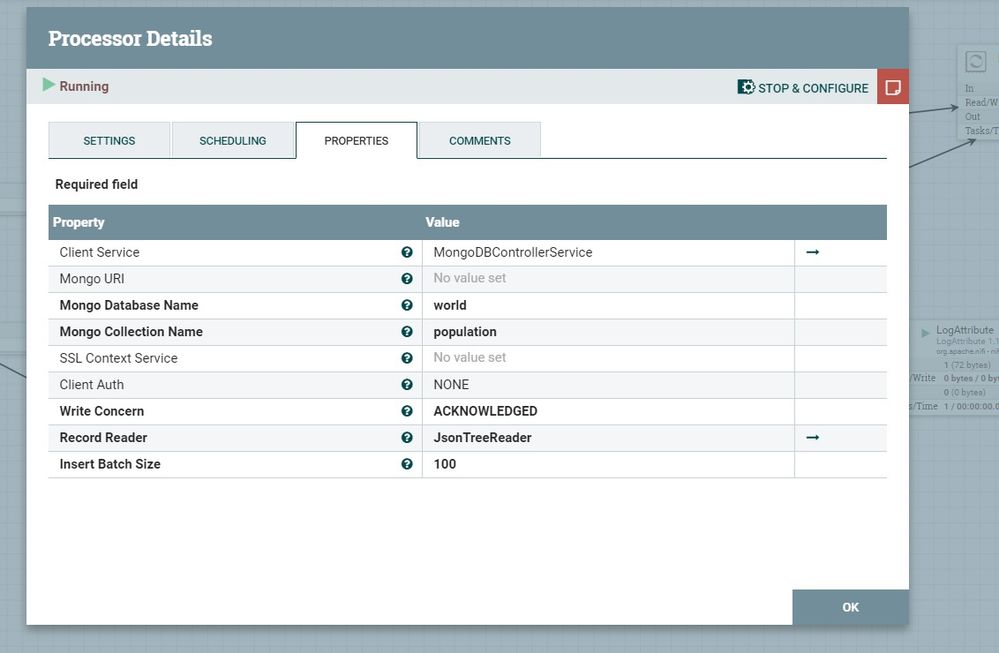

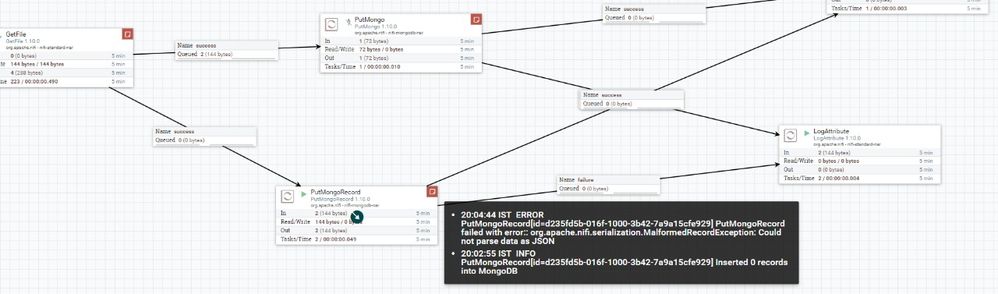

What I have done: I sent a text file with a JSON-like structure to PutMongoRecord to parse it into JSON, but it is not getting parsed.

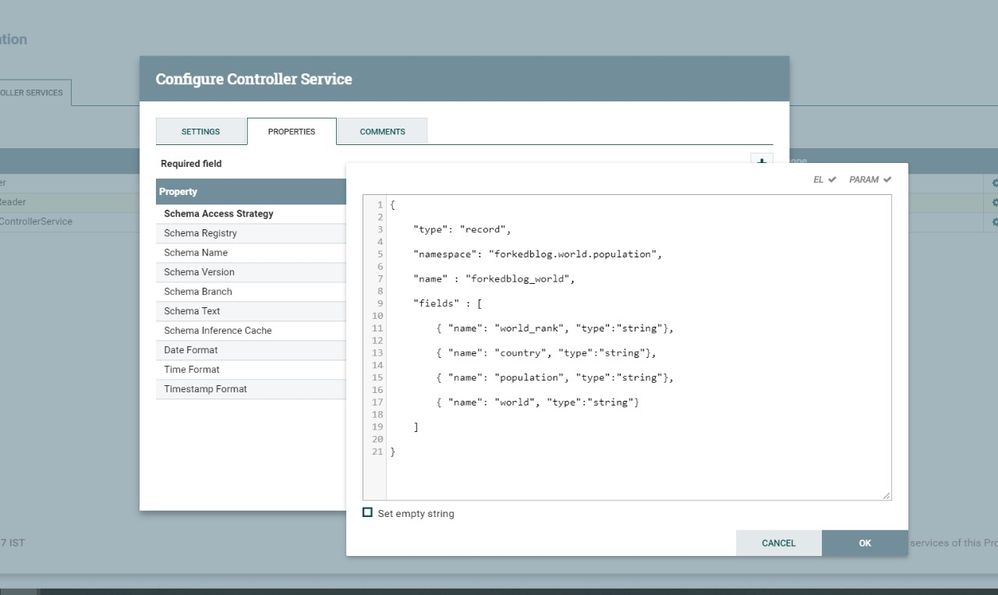

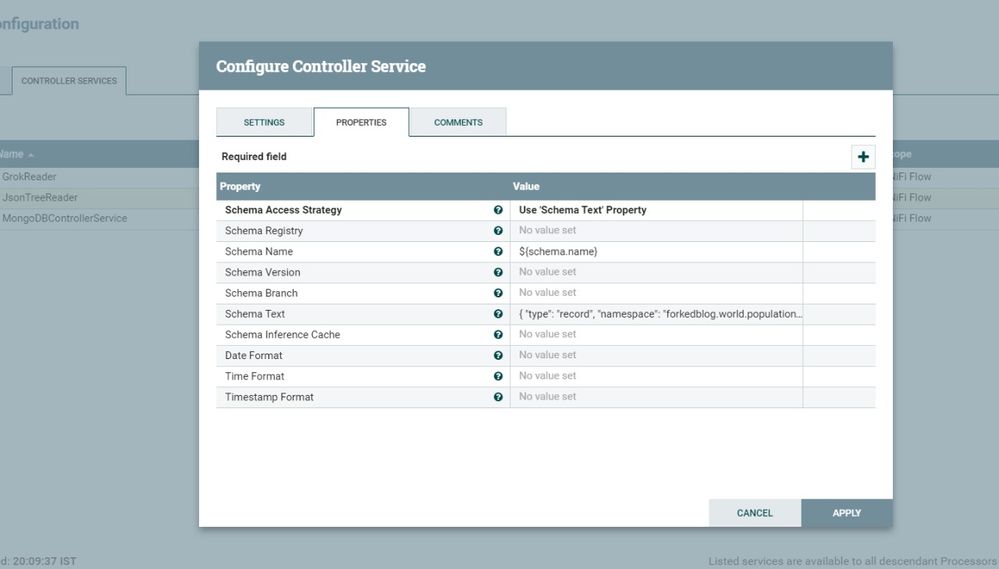

I have attached my configuration.

Please help me with this problem.

Created on 01-24-2020 04:11 AM - edited 01-24-2020 04:19 AM


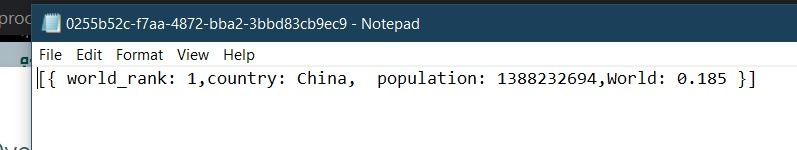

The FlowFile is not valid JSON.

Try something like a basic JSON example found on the internet:

`{"results":[{"term":"term1"},{"term":"term2"}..]}`

Notice that `results` holds the array of values, and that every key and string value is "quoted".

Something like this based on your sample image:

{ "world_rank": "1", "country": "China", "population": "1388232694", "World": "0.185" }
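A quick way to check whether content is valid JSON before it reaches the record reader is to run it through a strict JSON parser. The Python sketch below uses only the standard `json` module; `raw_line` is a hypothetical stand-in for the screenshot text, which is not reproduced in this thread.

```python
import json

# Valid JSON: every key and string value is double-quoted.
valid = '{ "world_rank": "1", "country": "China", "population": "1388232694", "World": "0.185" }'

# Hypothetical "key: value" text resembling the question's sample; this is NOT valid JSON.
raw_line = "world_rank: 1 country: China population: 1388232694 world: 0.185"


def is_valid_json(text: str) -> bool:
    """Return True if text parses as JSON, False otherwise."""
    try:
        json.loads(text)
    except json.JSONDecodeError:
        return False
    return True


print(is_valid_json(valid))     # True
print(is_valid_json(raw_line))  # False
```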

If you have to use what you have in the sample image, then you will need to use ExtractText (link here for examples of regexes that match values) to get the values for world_rank, country, population, and world.

Add one attribute per value, each with a regex like this:

`.*world_rank: (.*?) .*`

`.*country: (.*?) .*`

`.*population: (.*?) .*`

`.*world: (.*?) .*`

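Then use AttributesToJSON to build a JSON object from the attributes defined above: list them in Attributes List and set Destination to flowfile-content so the JSON becomes the FlowFile content. You can then send it to the record reader and ultimately to MongoDB.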
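Before configuring the processors, the ExtractText-then-AttributesToJSON idea can be tried in plain Python. This is a rough approximation, not NiFi itself: NiFi evaluates Java regexes, and the input `line` is hypothetical. It also swaps the `(.*?) ` capture group for `(\S+)`, assuming values contain no spaces; with a pattern that requires a trailing space, a value at the very end of the line would not match.

```python
import json
import re

# Hypothetical input resembling the screenshot in the question.
line = "world_rank: 1 country: China population: 1388232694 world: 0.185"

# One pattern per attribute, like one dynamic property per value in ExtractText.
# (\S+) is an assumption: it captures a value only if the value has no spaces.
patterns = {
    "world_rank": r"world_rank: (\S+)",
    "country": r"country: (\S+)",
    "population": r"population: (\S+)",
    "world": r"world: (\S+)",  # "world_rank:" does not match, because "_" follows "world"
}

attributes = {}
for name, pattern in patterns.items():
    match = re.search(pattern, line)
    if match:
        attributes[name] = match.group(1)

# AttributesToJSON-style step: emit the collected attributes as one flat JSON object.
print(json.dumps(attributes))
# {"world_rank": "1", "country": "China", "population": "1388232694", "world": "0.185"}
```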

If my reply helps you solve your use case, please accept it as a solution to close the topic.

Created 01-23-2020 07:28 AM


You can find the column names and their positions in the header line with `re.finditer` from the `re` module, then use those positions to slice the remaining lines:

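```python
import json
import re

records = []
with open("file.txt") as thefile:
    # The first line is the header: record each column name with its start/end offsets.
    header = thefile.readline()
    columns = [(m.group(0).strip(), m.start(0), m.end(0))
               for m in re.finditer(r"\w+\s+", header)]
    # Let the last column run to the end of each data line so long values are not cut off.
    if columns:
        last_name, last_start, _ = columns[-1]
        columns[-1] = (last_name, last_start, None)

    # Slice every remaining line at the header's column offsets.
    for line in thefile:
        if not line.strip():
            continue  # skip blank lines, including a trailing one at end of file
        record = {name: line[start:end].strip() for name, start, end in columns}
        records.append(record)

print(json.dumps(records))
```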


Created 01-24-2020 11:35 PM


Hello,

I need to send

`{"value" : [{"id1":"10"}]}`

What schema should I use for this data so that it is stored in the database as

id1=10

Thanks in advance!

Created 01-24-2020 11:45 PM


Created 01-25-2020 06:08 AM


In the schema, remove the second row in fields, so it’s just id1.
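NiFi record readers typically describe records with an Avro-format schema. For the JSON in the question, a schema whose inner record keeps only `id1` might look like the one built below. This is a sketch, not the schema from the screenshots: the record names `Root` and `Item` are hypothetical placeholders, and typing `id1` as a string is an assumption based on the quoted `"10"`.

```python
import json

# Avro-style schema for {"value" : [{"id1":"10"}]}.
# "Root" and "Item" are hypothetical record names; id1 is assumed to be a string.
schema = {
    "type": "record",
    "name": "Root",
    "fields": [
        {
            "name": "value",
            "type": {
                "type": "array",
                "items": {
                    "type": "record",
                    "name": "Item",
                    "fields": [{"name": "id1", "type": "string"}],
                },
            },
        }
    ],
}

# Print the schema as JSON text.
print(json.dumps(schema, indent=2))

# Quick shape check (not a full Avro validator): each inner object in the sample
# should carry exactly the field names listed in the inner record.
sample = json.loads('{"value" : [{"id1":"10"}]}')
inner_fields = {f["name"] for f in schema["fields"][0]["type"]["items"]["fields"]}
assert all(set(item) == inner_fields for item in sample["value"])
```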

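Now do a test:

1. Use GenerateFlowFile with the JSON from your question as its content.
2. Connect it to EvaluateJsonPath. Set Destination to flowfile-attribute, click + to add a property named id1, and set its value to `$.value[0].id1`. This is the JSONPath expression that gets the real value; because `value` is an array, `[0]` selects its first element.
3. Route EvaluateJsonPath's matched relationship to an output port.
4. Run the flow, then inspect the FlowFile in the queue and confirm you see the attribute id1.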

This simple example helps you understand how JSON works, how to get data out of the value object, how to confirm the schema is correct, and how to unit test by inspecting the FlowFile in the queue.
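The JSON in the question nests id1 inside an array, which matters when writing the path. What a JSONPath such as `$.value[0].id1` selects can be checked in plain Python; this is a hand translation of the path into dictionary and list indexing, not a JSONPath library.

```python
import json

content = '{"value" : [{"id1":"10"}]}'
data = json.loads(content)

# $.value         -> the array [{"id1": "10"}]
# $.value[0]      -> its first object {"id1": "10"}
# $.value[0].id1  -> "10"
id1 = data["value"][0]["id1"]
print(f"id1={id1}")  # id1=10

# Reading id1 straight off the array fails, which is why the index is needed.
try:
    data["value"]["id1"]
except TypeError:
    print("value is a list; index it first")
```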