Only one HBase Regionserver has no region & no request

Labels: Apache HBase

Created 03-05-2021 02:30 AM

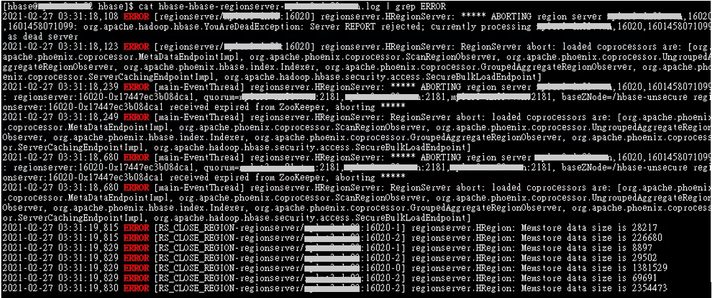

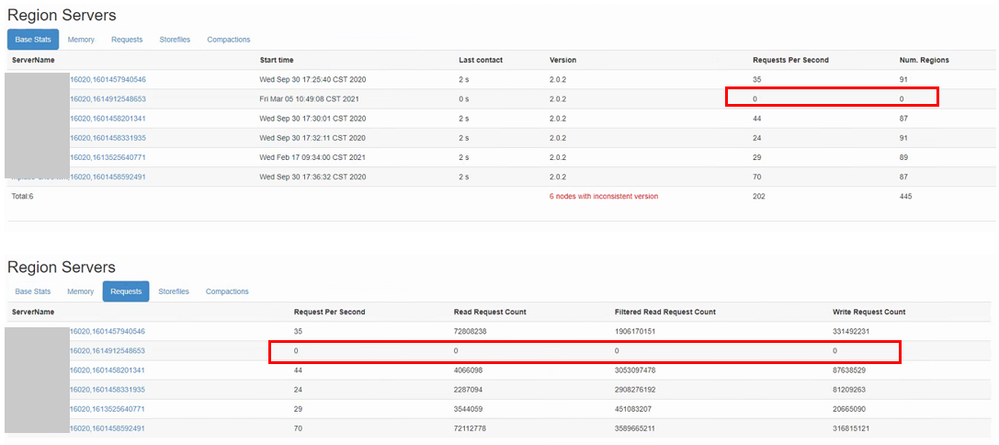

There is a Regionserver that is not in a dead state, but it doen not have any regions and has not received any Request.

This situation occurred after a memstore ERROR, and then the status of RS on the HBase Master UI was always 0. Then this RS does not show any ERROR Log anymore.

Also tried to delete this RS and reinstall it, but it still shows 0 on the HBase Master UI.

And still No ERROR Log...

memstore error:

HBase Master UI:

Hadoop Release: HDP 3.1.0

Created 03-13-2021 11:47 PM

Hello @Kenzan

Thanks for using Cloudera Community. Based on the synopsis, your team has 1 RegionServer that is being allocated no Regions. Deleting the RegionServer and adding it afresh doesn't help either.

The 1st screenshot, showing the log, indicates that the RegionServer received a ZooKeeper session expiry. It's likely the RegionServer experienced a ZooKeeper timeout, or the Master didn't receive any heartbeat from it. As only 1 RegionServer is impacted, review host-level concerns (CPU/memory) if the RegionServer is being aborted. This likely has no relationship with the zero-Region assignment.

Coming to the zero-Region assignment: enable TRACE logging for the HMaster Balancer thread, or briefly enable complete HMaster TRACE logging (HMaster UI > LogLevel > "org.apache.hadoop.hbase" & TRACE for "Set Log Level"). This enables TRACE logging for the HMaster service and captures any Balancer-associated tracing, which would confirm why the AssignmentManager is skipping the RegionServer for Region assignment. Once a Balancer run has been captured at TRACE level and the reason for the skipped balancing is confirmed, set the logging back to INFO.

- Smarak
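The LogLevel step described above can also be expressed as a URL, which may help when scripting the change. The following is a minimal sketch only: it assumes the Master web UI exposes the usual `/logLevel` servlet with `log` and `level` query parameters, that the info port is the stock default 16010, and that `master.example.com` stands in for the real Master host (a hypothetical name). The narrower logger `org.apache.hadoop.hbase.master.balancer` is the package that contains SimpleLoadBalancer; the broader `org.apache.hadoop.hbase` from the post works the same way. The code only builds the URL and makes no network call.

```python
from urllib.parse import urlencode

# Assumption: 16010 is the stock HBase Master info port; a cluster may override it.
DEFAULT_MASTER_INFO_PORT = 16010

# Package that holds the balancer implementations (e.g. SimpleLoadBalancer).
BALANCER_LOGGER = "org.apache.hadoop.hbase.master.balancer"


def log_level_url(host, logger, level, port=DEFAULT_MASTER_INFO_PORT):
    """Build the Master UI URL that sets `logger` to `level`.

    The path and parameter names mirror the "Set Log Level" form on the
    LogLevel page; treat them as an assumption and verify on your cluster.
    """
    query = urlencode({"log": logger, "level": level})
    return f"http://{host}:{port}/logLevel?{query}"


if __name__ == "__main__":
    # "master.example.com" is a placeholder host, not taken from the thread.
    print(log_level_url("master.example.com", BALANCER_LOGGER, "TRACE"))
    # After a Balancer run has been captured, switch back to INFO.
    print(log_level_url("master.example.com", BALANCER_LOGGER, "INFO"))
```

A level set this way is normally a runtime change only, so it should not outlive a Master restart.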

Created 03-16-2021 01:31 AM

Thanks @smdas

We found that the Balancer problem was caused by the strategy preset in hbase.master.loadbalancer.class, which prevented the abnormal RegionServer from being included in balancing again. The final solution was to temporarily set hbase.master.loadbalancer.class=org.apache.hadoop.hbase.master.balancer.SimpleLoadBalancer.

After restarting HBase, the abnormal RegionServer successfully returned to balance.

Finally, we reverted the parameter to its original value, and the abnormal situation did not appear again.
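For readers who want to see what the temporary override looks like in hbase-site.xml, here is a minimal sketch that renders the property in the standard Hadoop `<property>` layout. The helper name `hbase_site_property` is hypothetical and exists only for illustration. The key and the class name are the ones quoted in the post. On HDP this file is usually managed through Ambari, so the rendered block is meant as a reference for the value to enter rather than a file to hand-edit.

```python
from xml.sax.saxutils import escape

# Key and value exactly as quoted in the post above.
BALANCER_KEY = "hbase.master.loadbalancer.class"
SIMPLE_BALANCER = "org.apache.hadoop.hbase.master.balancer.SimpleLoadBalancer"


def hbase_site_property(name, value):
    """Render one <property> element in the layout used by hbase-site.xml.

    Hypothetical helper for illustration; it only formats text and does
    not touch any cluster configuration.
    """
    return (
        "<property>\n"
        f"  <name>{escape(name)}</name>\n"
        f"  <value>{escape(value)}</value>\n"
        "</property>"
    )


if __name__ == "__main__":
    # Temporary override; revert it once the RegionServer is balanced again.
    print(hbase_site_property(BALANCER_KEY, SIMPLE_BALANCER))
```

As the post describes, the change took effect after an HBase restart and was reverted afterwards.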

Created 03-16-2021 01:39 AM

Hello @Kenzan

Thanks for the update. Typically, such issues lie with balancing, and the TRACE logging prints the finer details of it. Ideally, "StochasticLoadBalancer" should be the default, while "SimpleLoadBalancer" (set by your team) extends BaseLoadBalancer. I have shared 2 links documenting the 2 balancers and their running configurations.

As your issue has been resolved, kindly mark the post as resolved so that we can close it as well.

Thanks again for being a Cloudera Community member and for contributing.

- Smarak

[1] https://hbase.apache.org/devapidocs/org/apache/hadoop/hbase/master/balancer/SimpleLoadBalancer.html