Scripted start / stop of HDP services

Created 03-08-2022 07:41 AM


Hi,

I am trying to script the start / stop of HDP services and their components. I need to do this service by service, because a few components, such as Hive Interactive, Spark Thrift Server and Kafka broker, do not start / stop in a timely manner. Most of the time, even while HSInteractive is still starting (visible in the background operations panel in Ambari), the script moves on to start / stop the next service, and HSI ultimately fails. Hence, I also want to ensure that the previous service has completely stopped / started before attempting to stop / start the next service in the list.

To achieve this, I have written the script below (this one stops services; I have a similar one for start). However, when it reaches the Hive, Spark or Kafka services, it moves on to the next service even though internal components such as Hive Interactive, Spark Thrift Server or Kafka broker have not yet started / stopped. Most of the time, the start of Spark Thrift Server fails via this script. However, if the same API call is sent for Spark or Hive individually, it works as expected.

Shell script for reference:

USER='admin'

PASS='admin'

CLUSTER='xxxxxx'

HOST='xxxxxx:8080'

function stop(){

curl -s -u $USER:$PASS -H 'X-Requested-By: ambari' -X PUT -d '{"RequestInfo": {"context" :"Stop '"$1"' via REST"}, "Body": {"ServiceInfo": {"state": "INSTALLED"}}}' http://$HOST/api/v1/clusters/$CLUSTER/services/$1

echo -e "\nWaiting for $1 to stop...\n"

wait $1 "INSTALLED"

maintOn $1

}

function wait(){

finished=0

check=0

while [[ $finished -ne 1 ]]

do

str=$(curl -s -u $USER:$PASS http://$HOST/api/v1/clusters/$CLUSTER/services/$1)

if [[ $str == *"INSTALLED"* ]]

then

finished=1

echo -e "\n$1 Stopped...\n"

fi

check=$((check+1))

sleep 3

done

if [[ $check -eq 3 ]]

then

echo -e "\n${1} failed to stop after 3 attempts. Exiting...\n"

exit $?

fi

}

function maintOn(){

curl -u $USER:$PASS -i -H 'X-Requested-By: ambari' -X PUT -d '{"RequestInfo":{"context":"Turn ON Maintenance Mode for '"$1"' via Rest"},"Body":{"ServiceInfo":{"maintenance_state":"ON"}}}' http://$HOST/api/v1/clusters/$CLUSTER/services/$1

}

stop AMBARI_INFRA_SOLR

stop AMBARI_METRICS

stop HDFS

stop HIVE

stop KAFKA

stop MAPREDUCE2

stop SPARK2

stop YARN

stop ZOOKEEPER

Any help / guidance would be great.

Thanks

Snm1523
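One detail in the script above: the attempt counter in `wait` is only examined after the `while` loop has already ended, so it cannot act as a timeout. A minimal sketch of a bounded polling helper follows, assuming the intent was to give up after a fixed number of checks. `poll_until` and the commented `service_is_stopped` wrapper are hypothetical names, not part of Ambari or of the original script; the wrapper reuses the script's `$USER`, `$PASS`, `$HOST` and `$CLUSTER` variables.

```shell
#!/usr/bin/env bash
# poll_until MAX_ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND repeatedly until it succeeds or MAX_ATTEMPTS is reached.
# Returns 0 as soon as COMMAND succeeds, 1 if every attempt failed.
poll_until() {
  local max_attempts="$1" delay="$2" attempt=1
  shift 2
  while [ "$attempt" -le "$max_attempts" ]; do
    if "$@"; then
      return 0
    fi
    # No point sleeping after the final failed attempt.
    if [ "$attempt" -lt "$max_attempts" ]; then
      sleep "$delay"
    fi
    attempt=$((attempt + 1))
  done
  return 1
}

# Hypothetical usage with the service-level check from the script above:
#
# service_is_stopped() {
#   curl -s -u "$USER:$PASS" \
#     "http://$HOST/api/v1/clusters/$CLUSTER/services/$1?fields=ServiceInfo/state" \
#     | grep -Eq '"state"[[:space:]]*:[[:space:]]*"INSTALLED"'
# }
# poll_until 60 5 service_is_stopped HIVE \
#   || { echo "HIVE did not stop in time" >&2; exit 1; }
```

This only bounds the wait. It still relies on whatever check is passed in, so a service-level check keeps its limitations.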

Created 03-09-2022 06:12 AM


@snm1523 This may not be the direction you want to take, but I cannot help wondering what the environment spec is. Starting / restarting services should work nicely. Instead of trying to build logic around start / finish race conditions, maybe focus on why certain services are not restarting quickly. That may reveal that one or more nodes are doing too much, which creates the race condition.

Created 03-09-2022 07:01 AM


Thank you for the response @steven-matison.

I have 3 different scenarios so far:

1. Kafka: at times one or another Kafka broker doesn't come up quickly.

2. Spark: Spark Thrift Server never starts on the first attempt when bundled in an all-services script. However, if called individually (only SPARK), it works as expected.

3. Hive: we have around 6 Hive Interactive servers. HSI by nature takes a while to start (they do start eventually, though).

In all the scenarios above, the moment an API call is made to start or stop a service, the state in the ServiceInfo field of the API output changes to the requested one (i.e. INSTALLED for stop and STARTED for start), while the underlying components are still working to start / stop. Since I am checking the status at the service level (reference below), the condition passes and the script moves ahead. Ultimately, one service is still starting / stopping while the next has already been triggered.

str=$(curl -s -u $USER:$PASS http://$HOST/api/v1/clusters/$CLUSTER/services/$1)

if [[ $str == *"INSTALLED"* ]]

then

finished=1

echo -e "\n$1 Stopped...\n"

fi

So far I have noticed this only for those 3 services. Hence, I am seeking suggestions on how to overcome this, or whether there is a way to check the status of each component of each service.

Hope I was able to explain this better.

Thanks

snm1523
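On checking each component: a rough sketch of a component-level check for the stop case is below. It assumes Ambari exposes per-host component states under `/api/v1/clusters/$CLUSTER/host_components` and that this resource can be filtered with `HostRoles/service_name`; both should be verified against the Ambari version in use. `count_not_in_state` and `service_components_stopped` are hypothetical helper names, and the JSON is parsed with `grep` only to avoid extra dependencies.

```shell
#!/usr/bin/env bash
# count_not_in_state EXPECTED
# Reads an Ambari JSON response on stdin and prints how many "state" values
# differ from EXPECTED. Keys such as "desired_state" are not matched because
# the pattern requires a quote directly before the word state.
count_not_in_state() {
  local expected="$1"
  grep -Eo '"state"[[:space:]]*:[[:space:]]*"[A-Z_]+"' \
    | grep -Evc "\"${expected}\"\$" || true
}

# service_components_stopped SERVICE
# Succeeds only when every host component of SERVICE reports INSTALLED.
# Assumption: the host_components endpoint and the service_name filter exist
# in this form; USER, PASS, HOST and CLUSTER come from the original script.
service_components_stopped() {
  local service="$1" response
  response=$(curl -sf -u "$USER:$PASS" \
    "http://$HOST/api/v1/clusters/$CLUSTER/host_components?HostRoles/service_name=$service&fields=HostRoles/state") \
    || return 1
  # An answer without any state is treated as "not confirmed", not "stopped".
  printf '%s\n' "$response" | grep -q '"state"' || return 1
  [ "$(printf '%s\n' "$response" | count_not_in_state INSTALLED)" -eq 0 ]
}
```

The same idea does not carry over directly to the start case, because client components normally remain in the INSTALLED state and would have to be excluded from the check.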

Created 03-09-2022 07:08 AM


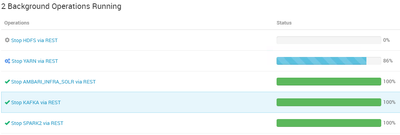

Something like this. This time Spark, Kafka and Hive got stopped as expected, but since YARN took a longer to Stop (internal components were still getting stopped), moment script got service status of YARN as INSTALLED, it triggered HDFS and HDFS was waiting. Please refer to screenshot:

What I want is to ensure previous service is completely started / stopped before the next one is triggered.

Not sure if that is even possible.

Thanks

snm1523
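One way to wait for full completion, sketched here under assumptions: when a state change is accepted, Ambari answers the PUT with a request resource (an `href` ending in `/requests/<id>` and a `Requests` block with an `id`), and that request can be polled until its `request_status` is final. This is the same operation shown in the background operations panel. The function names below are hypothetical, the list of failure statuses may not be exhaustive, and the empty-body behaviour for a service already in the target state is an assumption to verify.

```shell
#!/usr/bin/env bash
# Extract the numeric request id from the JSON Ambari returns for a PUT.
extract_request_id() {
  sed -n 's/.*"id"[[:space:]]*:[[:space:]]*\([0-9][0-9]*\).*/\1/p' | head -n 1
}

# Extract the request_status value from a /requests/<id> response.
extract_request_status() {
  sed -n 's/.*"request_status"[[:space:]]*:[[:space:]]*"\([A-Z_]*\)".*/\1/p' | head -n 1
}

# wait_for_request ID [MAX_ATTEMPTS] [DELAY_SECONDS]
# Polls the request until it completes, fails, or the attempts run out.
wait_for_request() {
  local id="$1" max_attempts="${2:-120}" delay="${3:-5}" attempt=1 status
  while [ "$attempt" -le "$max_attempts" ]; do
    status=$(curl -s -u "$USER:$PASS" \
      "http://$HOST/api/v1/clusters/$CLUSTER/requests/$id?fields=Requests/request_status" \
      | extract_request_status)
    case "$status" in
      COMPLETED) return 0 ;;
      FAILED|ABORTED|TIMEDOUT)
        echo "Request $id ended as $status" >&2
        return 1 ;;
    esac
    sleep "$delay"
    attempt=$((attempt + 1))
  done
  echo "Request $id not finished after $max_attempts checks" >&2
  return 1
}

# stop_and_wait SERVICE: same PUT as the original stop(), then wait on the request.
stop_and_wait() {
  local service="$1" id
  id=$(curl -s -u "$USER:$PASS" -H 'X-Requested-By: ambari' -X PUT \
    -d '{"RequestInfo": {"context": "Stop '"$service"' via REST"}, "Body": {"ServiceInfo": {"state": "INSTALLED"}}}' \
    "http://$HOST/api/v1/clusters/$CLUSTER/services/$service" | extract_request_id)
  # Assumption: no request id means there was nothing to do.
  if [ -z "$id" ]; then
    return 0
  fi
  wait_for_request "$id"
}
```

With this, the list at the end of the script would become `stop_and_wait YARN && stop_and_wait HDFS` and so on, so that each call returns only once Ambari reports the whole operation as finished.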

Created on 03-09-2022 07:29 AM - edited 03-09-2022 07:30 AM


Additional info @steven-matison,

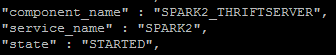

I looked further into the API output for the SPARK2 service and found a discrepancy: Spark Thrift Server is reported as STARTED in the API output, but it is shown as stopped in the Ambari UI. See the screenshots.

Ambari UI:

API Output:

Any thoughts / suggestions?

Thanks

snm1523

Created 04-08-2022 08:10 AM


Hi All,

I was able to test my script on my 10-node DEV cluster. Below are the results:

1. All HDP core services started / stopped okay.

2. None of the Hive Server Interactive instances started, and hence the Hive service was not marked as STARTED, though HMS and HS2 started okay.

3. None of the Spark2_THRIFTSERVER instances started.

Can anyone share some thoughts on points 2 and 3?

Thanks

snm1523