Spark java.lang.StackOverflowError

- Labels: Apache Spark

Created 08-26-2018 12:31 PM

I am trying to build a recommendation model for the MovieLens rating data with Spark ALS. I use Spark 2.3.1 on a Windows host. The data has only about 100,000 rows and three columns: userId, movieId, and rating. My machine has an Intel i7 and 32 GB of memory, and I have increased executor memory to 10 GB. I get a java.lang.StackOverflowError. My code is below:

    object ErkansALS {
      def main(args: Array[String]): Unit = {
        val sparkConf = new SparkConf()
          .setMaster("local[4]")
          .setAppName("SparkALS")
          .setExecutorEnv("spark.driver.memory","8g")
          .setExecutorEnv("spark.executor.memory","10g")
          .setExecutorEnv("spark.sql.broadcastTimeout","1200")

        val spark = SparkSession.builder()
          .config(sparkConf)
          .getOrCreate()

        val movieRatings = spark.read.format("csv")
          .option("header","true")
          .option("inferSchema","true")
          .load("ratings.csv")
          .drop("timestamp")

        val Array(training, test) = movieRatings.randomSplit(Array(0.8, 0.2),seed = 142)
        training.cache()

        val alsObject = new ALS()
          .setUserCol("userId")
          .setItemCol("movieId")
          .setRatingCol("rating")
          .setColdStartStrategy("drop")
          .setNonnegative(true)

        val paramGridObject = new ParamGridBuilder()
          .addGrid(alsObject.rank, Array(12,14))
          .addGrid(alsObject.maxIter, Array(18,20))
          .addGrid(alsObject.regParam, Array(.17,.19))
          .build()

        val evaluator = new RegressionEvaluator()
          .setMetricName("rmse")
          .setLabelCol("rating")
          .setPredictionCol("prediction")

        val tvs = new TrainValidationSplit()
          .setEstimator(alsObject)
          .setEstimatorParamMaps(paramGridObject)
          .setEvaluator(evaluator)

        val model = tvs.fit(training)
        val bestModel = model.bestModel
        val predictions = bestModel.transform(test)
        val rmse = evaluator.evaluate(predictions)

        predictions.show()
        println("RMSE = ", rmse)
        println("Best Model")
      }
    }

Errors are attached.

But when I try without TrainValidationSplit it works:

    package spark.ml.recommendation.als

    import org.apache.spark.ml.evaluation.RegressionEvaluator
    import org.apache.spark.ml.recommendation.ALS
    import org.apache.spark.ml.tuning.{TrainValidationSplit, ParamGridBuilder}
    import org.apache.spark.sql.{SparkSession}
    import org.apache.spark.{SparkConf, SparkContext}

    object ErkansALS {
      def main(args: Array[String]): Unit = {

        /*
        val sparkConf = new SparkConf()
          .setExecutorEnv("spark.driver.memory","4g")
          .setExecutorEnv("spark.executor.memory","8g")
          .setExecutorEnv("spark.sql.broadcastTimeout","1200")
          .setExecutorEnv("spark.eventLog.enabled","false")
        */
        val spark = SparkSession.builder()
          .master("local[*]")
          .appName("SparkALS")
          .getOrCreate()

        val movieRatings = spark.read.format("csv")
          .option("header","true")
          .option("inferSchema","true")
          .load("C:\\Users\\toshiba\\SkyDrive\\veribilimi.co\\Datasets\\ml-latest-small\\ratings.csv")
          .drop("timestamp")
        // .sample(0.1,142)

        movieRatings.show()
        println(movieRatings.count())
        // There are 100,004 ratings.

        // Create training and test set
        val Array(training, test) = movieRatings.randomSplit(Array(0.8, 0.2),seed = 142)
        training.cache()

        // Create ALS model
        val alsObject = new ALS()
          .setUserCol("userId")
          .setItemCol("movieId")
          .setRatingCol("rating")
          .setColdStartStrategy("drop")
          .setNonnegative(true)

        /*
        // Tune model using ParamGridBuilder
        val paramGridObject = new ParamGridBuilder()
          .addGrid(alsObject.rank, Array(14))
          .addGrid(alsObject.maxIter, Array(20))
          .addGrid(alsObject.regParam, Array(.19))
          .build()
        */

        // Define evaluator as RMSE
        val evaluator = new RegressionEvaluator()
          .setMetricName("rmse")
          .setLabelCol("rating")
          .setPredictionCol("prediction")

        /*
        // Build cross validation using TrainValidationSplit
        val tvs = new TrainValidationSplit()
          .setEstimator(alsObject)
          .setEstimatorParamMaps(paramGridObject)
          .setEvaluator(evaluator)
        */

        // Fit ALS model to training set
        val model = alsObject.fit(training)

        /*
        // Take best model
        val bestModel = model.bestModel
        */

        // Generate predictions and evaluate RMSE
        val predictions = model.transform(test)
        val rmse = evaluator.evaluate(predictions)
        predictions.show()

        // Print evaluation metrics and model parameters
        println("RMSE = ", rmse)
      }
    }

Created 08-27-2018 12:22 PM

Hi @Erkan ŞİRİN, have you tried increasing the thread stack size with the -Xss flag? If not, you may want to try something like this:

    --conf "spark.executor.extraJavaOptions='-Xss512m'"
    --driver-java-options "-Xss512m"
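As background on what the -Xss flag changes: it sets the stack size of each JVM thread, and a StackOverflowError means that one thread's chain of nested calls outgrew that stack. The following is a minimal sketch in plain Scala, without Spark, that assumes a HotSpot-style JVM which honours the stack-size hint of the `Thread` constructor (the Java documentation allows platforms to ignore it). `StackSizeDemo`, `depth`, and `runWithStack` are hypothetical names used only for illustration.

```scala
object StackSizeDemo {
  // Deliberately not tail-recursive, so every call keeps a frame on the stack.
  def depth(n: Int): Int = if (n == 0) 0 else 1 + depth(n - 1)

  /** Runs `body` on a new thread created with the requested stack size (in bytes).
    * Returns Right(result), or Left(error) if the thread ran out of stack.
    * Assumption: the JVM honours the stackSize hint, which is platform dependent.
    */
  def runWithStack[A](stackBytes: Long)(body: => A): Either[StackOverflowError, A] = {
    // A plain var is enough here: Thread.join() makes the write visible to the caller.
    var result: Either[StackOverflowError, A] = Left(new StackOverflowError("body did not run"))
    val worker = new Thread(null, new Runnable {
      def run(): Unit =
        result = try Right(body) catch { case e: StackOverflowError => Left(e) }
    }, "stack-size-demo", stackBytes)
    worker.start()
    worker.join()
    result
  }

  def main(args: Array[String]): Unit = {
    val n = 200000
    // Same recursion depth, two different stack sizes.
    println(runWithStack(256L * 1024)(depth(n)).isRight)        // small stack
    println(runWithStack(256L * 1024 * 1024)(depth(n)).isRight) // large stack
  }
}
```

On a JVM that honours the hint, the same recursion depth fails with the small stack and completes with the large one. This only illustrates what the stack size controls; it does not show that a larger stack removes whatever causes the deep call chain in the ALS job.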

HTH

*** If you found this answer addressed your question, please take a moment to login and click the "accept" link on the answer.

Created 08-27-2018 01:26 PM

Hi @Felix Albani, I applied your suggestion before compiling, but I don't think it makes any difference:

    val spark = SparkSession.builder()
      .master("local[4]")
      .appName("SparkALS")
      .config("spark.executor.extraJavaOptions","-Xss4g")
      .config("driver-java-options","-Xss4g")
      .getOrCreate()

Unfortunately, when I use TrainValidationSplit, even with only a single value for each grid parameter, I get the same error. It works fine without TrainValidationSplit.

Created 08-27-2018 01:31 PM

This is not a compile-time option. It is a runtime option and should be set on the command line, not in code through SparkSession options. If you are running this code from Eclipse, you should pass -Xss directly as an argument to the java command. Otherwise, if you are running it with spark-submit, add the options as I indicated before. With these settings it should at least take longer to error out, since you will be making the memory area for the stack bigger to fill.
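One way to check whether a flag such as -Xss actually reached the JVM is to ask the running JVM for its startup options. The sketch below uses the standard `java.lang.management` API; `JvmFlagCheck` and its two methods are hypothetical helper names, and it only looks for the literal `-Xss` spelling. Values entered as program arguments arrive in `args` of `main` and are not interpreted by the JVM, so they will not appear in this list.

```scala
import java.lang.management.ManagementFactory

object JvmFlagCheck {
  /** Returns the value of the last -Xss flag in `jvmArgs` (for example Some("512m")),
    * or None when no such flag is present.
    */
  def stackSizeFlag(jvmArgs: Seq[String]): Option[String] =
    jvmArgs.filter(_.startsWith("-Xss")).lastOption.map(_.stripPrefix("-Xss"))

  /** Options the running JVM was started with; program arguments are not included. */
  def currentJvmArgs(): Seq[String] =
    ManagementFactory.getRuntimeMXBean.getInputArguments
      .toArray(new Array[String](0))
      .toSeq

  def main(args: Array[String]): Unit =
    stackSizeFlag(currentJvmArgs()) match {
      case Some(size) => println(s"JVM started with -Xss$size")
      case None       => println("No -Xss flag found among the JVM options")
    }
}
```

Because the code in this thread uses a `local[N]` master, where tasks run as threads inside the driver JVM, calling `JvmFlagCheck.stackSizeFlag(JvmFlagCheck.currentJvmArgs())` at the start of the application's `main` reports the options of the JVM that does the work.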

HTH


Created on 08-27-2018 06:36 PM - edited 08-17-2019 06:23 PM

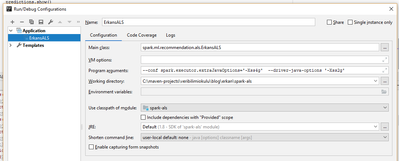

Hi again @Felix Albani I use Intellij IDEA. I put the arguments into run -> edit configurations -> program arguments as below.

But it didn't work.