Support Questions

- Cloudera Community

SparkSQL returns empty result when accessing Hive Tables

Created on 08-01-2018 06:32 PM - edited 08-17-2019 09:20 PM

Hi Community!

I recently upgraded HDP from version 2.6.4 to 3.0.0.

There were some problems, and most of them have been resolved, except for one important one.

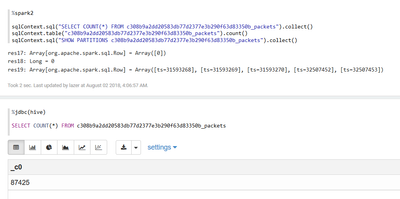

Strangely, when I run a 'SELECT' query against Hive tables with Spark SQL, it returns no results, as if the tables were empty,

but when I run the exact same 'SELECT' query in the beeline shell, it works perfectly.

Even more strangely, if I run 'SHOW TABLES' or 'SHOW PARTITIONS table_name' with Spark SQL, it works exactly as I expected.

I have already spent a few days on this problem, but I couldn't find a solution.

I also checked all the logs, but I did not see any errors or warnings.

Did I misconfigure something in Spark or Hive?

Has anyone experienced a problem like this?

Created 08-02-2018 03:51 AM

Hi @Jeongmin Ryu,

Apparently Spark cannot see the underlying file system the way the metastore sees it.

Could you please run

ALTER TABLE table_name RECOVER PARTITIONS; or MSCK REPAIR TABLE table_name;

This will ensure that the metastore is updated with the relevant partitions.

At the same time, please make sure that your spark-conf (the Zeppelin version) has the latest hive-site.xml, core-site.xml and hdfs-site.xml files, as these are important for Spark to determine the underlying file system.
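One way to check the configuration files is to diff the copy of hive-site.xml that Spark reads against the one Hive itself uses. Below is a minimal Python sketch, assuming both files follow the standard Hadoop `<configuration>`/`<property>` layout. The function names `parse_hadoop_conf` and `diff_conf` are made up for illustration, and where the two files live depends on your installation.

```python
import xml.etree.ElementTree as ET


def parse_hadoop_conf(xml_data):
    """Return {name: value} from a Hadoop-style <configuration> document.

    xml_data is the file content (str or bytes), not a path.
    """
    root = ET.fromstring(xml_data)
    props = {}
    for prop in root.iter("property"):
        name = prop.findtext("name")
        if name is not None:
            # A missing or empty <value> is stored as an empty string.
            props[name.strip()] = (prop.findtext("value") or "").strip()
    return props


def diff_conf(spark_side, hive_side):
    """Return {name: (spark_value, hive_value)} for properties that differ.

    None on one side means the property is absent from that file.
    """
    diffs = {}
    for name in sorted(set(spark_side) | set(hive_side)):
        spark_value = spark_side.get(name)
        hive_value = hive_side.get(name)
        if spark_value != hive_value:
            diffs[name] = (spark_value, hive_value)
    return diffs
```

To use it, read both files in binary mode, pass their contents through `parse_hadoop_conf`, and print the result of `diff_conf`. An empty dictionary means the two copies agree. The same check can be repeated for core-site.xml and hdfs-site.xml.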

Created 08-02-2018 04:59 AM

Thank you for your reply, @bkosaraju,

but I had no luck with the suggested queries. I don't see any difference after running both of them.

I just found this question: "Hive Transactional Tables are not readable by Spark".

According to the JIRA tickets, my situation seems to be caused by the exact same problem, which still exists in the latest Spark version.

Is there any workaround for using Hive 3.0 tables with Spark (with 'transactional = true', of course, which is mandatory for Hive 3.0 as far as I know)?

If not, maybe I should roll back to HDP 2.6...
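To confirm that a given table really is transactional, its table properties can be inspected (for example, the output of SHOW TBLPROPERTIES table_name in beeline). The following is a rough Python sketch rather than any official API: `acid_kind` is a hypothetical helper that takes (key, value) pairs and relies on the Hive table properties `transactional` and `transactional_properties`.

```python
def acid_kind(tblproperties):
    """Classify a table from its (key, value) table-property pairs.

    Returns "non-transactional", "insert-only" or "full ACID".
    Keys and values are compared case-insensitively.
    """
    props = {
        str(key).strip().lower(): str(value).strip().lower()
        for key, value in tblproperties
    }
    if props.get("transactional") != "true":
        return "non-transactional"
    if props.get("transactional_properties") == "insert_only":
        return "insert-only"
    return "full ACID"
```

If this reports anything other than "non-transactional" for a table that looks empty from Spark, the table matches the situation described in the linked question.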