Support Questions (Cloudera Community)


Unable to start the node manager

Labels: Apache YARN

Created on 12-19-2019 05:16 AM - last edited on 12-19-2019 05:23 AM by VidyaSargur


    Traceback (most recent call last):
      File "/var/lib/ambari-agent/cache/stacks/HDP/3.0/services/YARN/package/scripts/nodemanager.py", line 102, in <module>
        Nodemanager().execute()
      File "/usr/lib/ambari-agent/lib/resource_management/libraries/script/script.py", line 351, in execute
        method(env)
      File "/var/lib/ambari-agent/cache/stacks/HDP/3.0/services/YARN/package/scripts/nodemanager.py", line 53, in start
        service('nodemanager',action='start')
      File "/usr/lib/ambari-agent/lib/ambari_commons/os_family_impl.py", line 89, in thunk
        return fn(*args, **kwargs)
      File "/var/lib/ambari-agent/cache/stacks/HDP/3.0/services/YARN/package/scripts/service.py", line 93, in service
        Execute(daemon_cmd, user = usr, not_if = check_process)
      File "/usr/lib/ambari-agent/lib/resource_management/core/base.py", line 166, in __init__
        self.env.run()
      File "/usr/lib/ambari-agent/lib/resource_management/core/environment.py", line 160, in run
        self.run_action(resource, action)
      File "/usr/lib/ambari-agent/lib/resource_management/core/environment.py", line 124, in run_action
        provider_action()
      File "/usr/lib/ambari-agent/lib/resource_management/core/providers/system.py", line 263, in action_run
        returns=self.resource.returns)
      File "/usr/lib/ambari-agent/lib/resource_management/core/shell.py", line 72, in inner
        result = function(command, **kwargs)
      File "/usr/lib/ambari-agent/lib/resource_management/core/shell.py", line 102, in checked_call
        tries=tries, try_sleep=try_sleep, timeout_kill_strategy=timeout_kill_strategy, returns=returns)
      File "/usr/lib/ambari-agent/lib/resource_management/core/shell.py", line 150, in _call_wrapper
        result = _call(command, **kwargs_copy)
      File "/usr/lib/ambari-agent/lib/resource_management/core/shell.py", line 314, in _call
        raise ExecutionFailed(err_msg, code, out, err)
    resource_management.core.exceptions.ExecutionFailed: Execution of 'ulimit -c unlimited; export HADOOP_LIBEXEC_DIR=/usr/hdp/3.0.1.0-187/hadoop/libexec && /usr/hdp/3.0.1.0-187/hadoop-yarn/bin/yarn --config /usr/hdp/3.0.1.0-187/hadoop/conf --daemon start nodemanager' returned 1. -bash: line 0: ulimit: core file size: cannot modify limit: Operation not permitted
    /usr/hdp/3.0.1.0-187/hadoop/libexec/hadoop-functions.sh: line 1847: /var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid: Permission denied
    ERROR: Cannot write nodemanager pid /var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid.
    /usr/hdp/3.0.1.0-187/hadoop/libexec/hadoop-functions.sh: line 1866: /var/log/hadoop-yarn/yarn/hadoop-yarn-nodemanager

Created 12-19-2019 05:17 AM


@jsensharma Please look into this issue

Created 12-19-2019 06:26 AM


Please check whether the file /var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid exists. If it does not, create the directory and the file and give them to the yarn user:

    mkdir -p /var/run/hadoop-yarn/yarn/
    chown -R yarn:hadoop /var/run/hadoop-yarn/yarn/
    touch /var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid
    chown yarn:hadoop /var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid

This should work.
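The same steps can be collected into one idempotent helper so they are safe to re-run. This is a sketch, not an Ambari or Hadoop tool: the function name `prepare_pid_dir` is hypothetical, the defaults (directory, `yarn`, `hadoop`) are assumed from the error messages in this thread, the directory mode 755 is an assumption, and it chowns only the directory and the pid file instead of using `chown -R`.

```shell
# Sketch: create the NodeManager pid directory and pid file with the
# ownership used in this thread. prepare_pid_dir is a hypothetical helper;
# the defaults below are assumptions taken from the error messages.
prepare_pid_dir() {
  local dir="${1:-/var/run/hadoop-yarn/yarn}"
  local owner="${2:-yarn}"
  local group="${3:-hadoop}"
  local pidfile="$dir/hadoop-yarn-nodemanager.pid"

  # -p creates missing parents and does nothing if the directory exists.
  mkdir -p -- "$dir" || return 1
  chown "$owner:$group" -- "$dir" || return 1
  chmod 755 -- "$dir" || return 1

  # touch creates the file if it is missing and never truncates an existing one.
  touch -- "$pidfile" || return 1
  chown "$owner:$group" -- "$pidfile" || return 1
  # Owner read/write, read-only for everyone else (the 644 recommended later in this thread).
  chmod 644 -- "$pidfile" || return 1
}
```

On the NodeManager host it would be run as root with no arguments. Passing a scratch directory plus your own user and group, for example `prepare_pid_dir /tmp/pidtest/yarn "$(id -un)" "$(id -gn)"`, lets you try it without root.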

Created 12-19-2019 01:55 PM


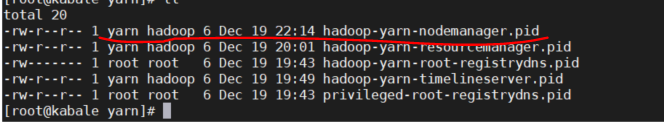

I think there is a permission issue with the pid file.

Can you check its ownership and permissions? If for any reason they are not as shown in the screenshot, run chown as root to correct them:

    # chown yarn:hadoop /var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid

Do the same for every file in that directory whose ownership is not correct.

HTH
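Rather than checking each `ls -l` line by hand, `find` can select the entries whose owner or group differs and pass them to `chown`. A sketch under stated assumptions: `fix_ownership` is a hypothetical helper name, the `yarn:hadoop` defaults are assumed from this thread, and it needs to run as root to change ownership.

```shell
# Sketch: chown every entry below a directory whose owner or group differs
# from the expected account. fix_ownership is a hypothetical helper;
# the yarn:hadoop defaults are assumed from this thread. Run as root.
fix_ownership() {
  local dir="${1:-/var/run/hadoop-yarn/yarn}"
  local owner="${2:-yarn}"
  local group="${3:-hadoop}"

  # -mindepth 1 skips the directory itself; -print lists each mismatched
  # entry, and -exec ... {} + hands them to chown in as few calls as possible.
  find "$dir" -mindepth 1 \( ! -user "$owner" -o ! -group "$group" \) \
    -print -exec chown "$owner:$group" {} +
}
```

It prints every path it is about to chown, so empty output means nothing needed fixing.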

Created 12-20-2019 02:07 AM


@Shelton I tried the suggested solution, but after multiple restarts the pid file is still created with 444 permissions:

    -r--r--r-- 1 yarn hadoop 6 Dec 20 05:00 hadoop-yarn-nodemanager.pid

The issue above still persists:

    resource_management.core.exceptions.ExecutionFailed: Execution of 'ulimit -c unlimited; export HADOOP_LIBEXEC_DIR=/usr/hdp/3.0.1.0-187/hadoop/libexec && /usr/hdp/3.0.1.0-187/hadoop-yarn/bin/yarn --config /usr/hdp/3.0.1.0-187/hadoop/conf --daemon start nodemanager' returned 1. -bash: line 0: ulimit: core file size: cannot modify limit: Operation not permitted
    /usr/hdp/3.0.1.0-187/hadoop/libexec/hadoop-functions.sh: line 1847: /var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid: Permission denied
    ERROR: Cannot write nodemanager pid /var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid.
    /usr/hdp/3.0.1.0-187/hadoop/libexec/hadoop-functions.sh: line 1866: /var/log/hadoop-yarn/yarn/hadoop-yarn-nodemanager-Hostname.org.out: Permission denied

Created 12-20-2019 03:13 AM


The file permission should be 644, not 444:

    # chmod 644 /var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid

Please do that and report back.
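To apply the mode and confirm it actually took effect, the value can be read back with GNU `stat`. This is a sketch: `set_pid_mode` is a hypothetical helper name, and it expects the mode as three octal digits because that is the form `stat -c %a` prints.

```shell
# Sketch: set the pid file mode and read it back to confirm it was applied.
# set_pid_mode is a hypothetical helper; pass the mode as three octal digits
# (e.g. 644) because that is how GNU `stat -c %a` prints it.
set_pid_mode() {
  local file="${1:-/var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid}"
  local mode="${2:-644}"
  local actual

  chmod "$mode" -- "$file" || return 1
  actual=$(stat -c %a -- "$file") || return 1
  if [ "$actual" != "$mode" ]; then
    echo "expected mode $mode but found $actual on $file" >&2
    return 1
  fi
  # Print mode, owner:group and path in one compact line.
  stat -c '%a %U:%G %n' -- "$file"
}
```

Running `stat -c '%a %U:%G %n'` on its own after a NodeManager restart shows whether something has reset the file to 444.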

Created on 12-20-2019 03:53 AM - edited 12-20-2019 03:54 AM


@Shelton I changed it to 644, but after starting the NodeManager it is back to 444.

Before:

    -rw-r--r-- 1 yarn hadoop 6 Dec 20 05:00 hadoop-yarn-nodemanager.pid

After:

    -r--r--r-- 1 yarn hadoop 6 Dec 20 05:00 hadoop-yarn-nodemanager.pid

I cannot find out why it changes back to 444 even though I set the permission manually.

Created 12-23-2019 02:12 AM


@Shelton Any update on this?

Created 12-24-2019 01:06 AM


I have tried to analyze your situation, but without access to the Linux box it is rather difficult. I think there is a workaround, though.

The Linux chattr command can make important files immutable (unchangeable).

The immutable bit (+i) can only be set by the superuser (root) or by a user with sudo privileges. Once it is set, the file cannot be deleted (even forcefully), renamed, or have its permissions changed; any such attempt fails with "Operation not permitted".

    # ls -al /var/run/hadoop-yarn/yarn/
    total 8
    .
    ..
    -rw-r--r-- 1 yarn hadoop 0 Dec 24 09:34 hadoop-yarn-nodemanager.pid

Set the immutable bit:

    # chattr +i hadoop-yarn-nodemanager.pid

Verify the attribute with the command below:

    # lsattr
    ----i--------e-- ./hadoop-yarn-nodemanager.pid

The normal ls command shows no difference:

    # ls -al /var/run/hadoop-yarn/yarn/
    total 8
    drwxr-xr-x 2 root root 4096 Dec 24 09:34 .
    drwxr-xr-x 3 root root 4096 Dec 24 09:34 ..
    -rw-r--r-- 1 yarn hadoop 0 Dec 24 09:34 hadoop-yarn-nodemanager.pid

Deletion is blocked:

    # rm -rf /var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid
    rm: cannot remove ‘/var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid’: Operation not permitted

Permission changes are blocked:

    # chmod 755 /var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid
    chmod: changing permissions of ‘/var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid’: Operation not permitted

To unset the attribute:

    # chattr -i /var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid

After removing it, verify the immutable status of the file with lsattr:

    # lsattr
    ---------------- ./var/run/hadoop-yarn/yarn/hadoop-yarn-nodemanager.pid

Please do that and report back.
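The walkthrough above checks the flag by eye with `lsattr`. For a scripted check, a small helper can parse the flags field instead. This is a sketch: `is_immutable` is a hypothetical name, and its return codes (0 immutable, 1 not immutable, 2 flags unreadable) are an illustrative convention, not something `lsattr` itself defines.

```shell
# Sketch: report whether a file carries the immutable (i) attribute.
# is_immutable is a hypothetical helper. Return codes are an illustrative
# convention: 0 = immutable, 1 = not immutable, 2 = flags could not be read
# (e.g. "Inappropriate ioctl for device" on a filesystem without support).
is_immutable() {
  local f="$1" out flags
  out=$(lsattr -- "$f" 2>/dev/null) || return 2
  # Some lsattr versions exit 0 even after printing an error, so treat
  # empty output as "could not read" as well.
  [ -n "$out" ] || return 2
  # lsattr prints "<flags> <name>"; keep only the flags field.
  flags=${out%% *}
  # Attribute letters are case-sensitive: lowercase i means immutable.
  case "$flags" in
    *i*) return 0 ;;
    *) return 1 ;;
  esac
}
```

Because it distinguishes "not set" from "cannot be read", it also surfaces the unsupported-filesystem case that comes up in the reply below.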

Created 12-24-2019 03:15 AM


I tried to set the attribute on hadoop-yarn-nodemanager.pid.

However, /var/run appears to be on an XFS file system, and according to Red Hat the chattr command does not work on XFS. Please suggest an alternative solution for this issue.

    [root@w0lxdhdp05 yarn]# lsattr
    lsattr: Inappropriate ioctl for device While reading flags on ./hadoop-yarn-nodemanager.pid
    chattr: Inappropriate ioctl for device while reading flags on hadoop-yarn-nodemanager.pid

Please refer to https://access.redhat.com/solutions/184693
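Before looking for an XFS-specific workaround, it may be worth confirming which filesystem actually backs the directory. On RHEL 7 and other systemd-based distributions, /var/run is usually a symlink to /run, which is normally mounted as tmpfs rather than XFS, and "Inappropriate ioctl for device" is what lsattr reports on a filesystem that does not implement the attribute ioctl. If it turns out to be tmpfs, anything created there by hand is also lost at reboot. The helper below is a diagnostic sketch: `fs_of` is a hypothetical name, and it relies on GNU `readlink -f` and GNU `stat -f -c %T`.

```shell
# Sketch: show what a path really resolves to and which filesystem backs it.
# fs_of is a hypothetical helper; it relies on GNU readlink and GNU stat.
fs_of() {
  local p="${1:-/var/run/hadoop-yarn/yarn}" real fstype
  # /var/run is often a symlink to /run, so resolve symlinks first.
  real=$(readlink -f -- "$p") || return 1
  # stat -f describes the filesystem; %T prints its type in human-readable form.
  fstype=$(stat -f -c %T -- "$real") || return 1
  printf '%s %s\n' "$real" "$fstype"
}
```

Running `fs_of` with no argument on the NodeManager host prints the resolved directory followed by a type name such as `xfs` or `tmpfs`.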