What does fsck -delete do?

Labels: HDFS

Created 10-13-2020 08:45 AM

Hi all,

We currently have corrupted files (missing blocks) on our cluster. The active namenode is finding 2 out of 3 replicas on most of those files.

It seems the hdfs service is not replicating on its own, so we went through existing commands to attempt to fix things manually, and we found the hdfs "fsck" command here: https://hadoop.apache.org/docs/r2.8.4/hadoop-project-dist/hadoop-hdfs/HDFSCommands.html#fsck

From the documentation alone, I don't understand what the "-delete" option does. Does it delete all blocks of the file? Or does it delete only the missing blocks (their metadata)? I'm a bit confused.

Does anyone have experience with this command and could help me clarify what it does?

cbfr

Created 10-15-2020 04:47 AM

Hi

fsck -delete deletes the corrupted files themselves, together with all the blocks that belong to them. It removes the whole file, not just the missing blocks.
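Since -delete removes whole files, it can help to look before deleting. Here is a minimal sketch of that order of operations, using only fsck options from the documentation linked in the question. The HDFS_CMD variable and the function names are my own illustration (not part of Hadoop); setting HDFS_CMD=echo gives a dry run that only prints the arguments that would be passed.

```shell
# Command used to talk to HDFS. HDFS_CMD is a hypothetical helper variable:
# set HDFS_CMD=echo to print the arguments instead of running them.
HDFS_CMD="${HDFS_CMD:-hdfs}"

# Step 1: list the files that have corrupt or missing blocks under a path.
list_corrupt() {
    "$HDFS_CMD" fsck "$1" -list-corruptfileblocks
}

# Step 2: show block-level detail (blocks and their locations) for one file.
inspect_file() {
    "$HDFS_CMD" fsck "$1" -files -blocks -locations
}

# Step 3: delete the corrupt files under a path.
# This removes each affected file entirely, not only its bad blocks.
delete_corrupt() {
    "$HDFS_CMD" fsck "$1" -delete
}
```

For example, you could run list_corrupt / first, then inspect_file on each reported path, and call delete_corrupt only once you are sure the files cannot be recovered or restored from elsewhere.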

Created 10-18-2020 03:26 AM

Before you decide whether the cluster has corrupt files, can you check the replication factor? If it's set to 2, then finding 2 replicas is normal.
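One way to check the configured default is hdfs getconf, sketched below. The HDFS_CMD variable and the function name are only illustrative helpers of mine; setting HDFS_CMD=echo prints the arguments instead of contacting the cluster. Note that this reads the default from the client configuration, and individual files can still carry a different replication factor.

```shell
# Command used to talk to HDFS. HDFS_CMD is a hypothetical helper variable:
# set HDFS_CMD=echo to print the arguments instead of running them.
HDFS_CMD="${HDFS_CMD:-hdfs}"

# Print the default replication factor (dfs.replication) from the configuration.
default_replication() {
    "$HDFS_CMD" getconf -confKey dfs.replication
}
```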

The help option will give you a full list of sub commands

$ hdfs fsck / ?

fsck: can only operate on one path at a time '?'

The list of sub command options

Usage: hdfs fsck <path> [-list-corruptfileblocks | [-move | -delete | -openforwrite] [-files [-blocks [-locations | -racks | -replicaDetails | -upgradedomains]]]] [-includeSnapshots] [-showprogress] [-storagepolicies] [-maintenance] [-blockId <blk_Id>]

<path>                           start checking from this path
-move                            move corrupted files to /lost+found
-delete                          delete corrupted files
-files                           print out files being checked
-openforwrite                    print out files opened for write
-includeSnapshots                include snapshot data of a snapshottable directory
-list-corruptfileblocks          print out list of missing blocks and files they belong to
-files -blocks                   print out block report
-files -blocks -locations        print out locations for every block
-files -blocks -racks            print out network topology for data-node locations
-files -blocks -replicaDetails   print out each replica details
-files -blocks -upgradedomains   print out upgrade domains for every block
-storagepolicies                 print out storage policy summary for the blocks
-maintenance                     print out maintenance state node details
-showprogress                    show progress in output. Default is OFF (no progress)
-blockId                         print out which file this blockId belongs to, locations (nodes, racks)

It would be good to first check for corrupt files, and only then run the delete:

$ hdfs fsck / -list-corruptfileblocks

Connecting to namenode via http://mackenzie.test.com:50070/fsck?ugi=hdfs&listcorruptfileblocks=1&path=%2F

---output--

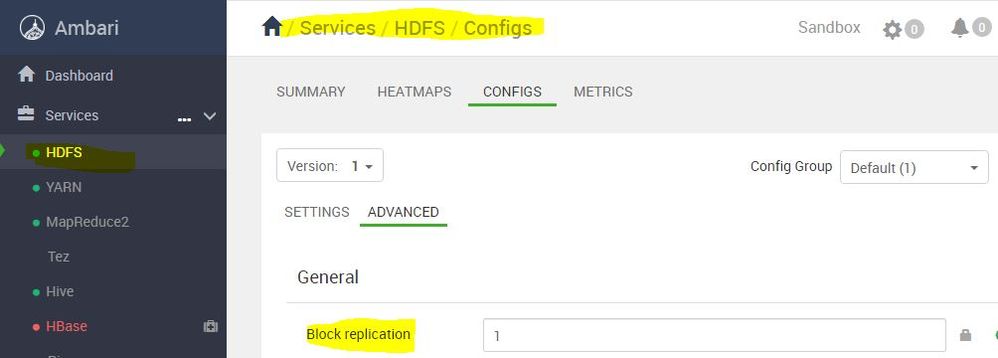

The filesystem under path '/' has 0 CORRUPT files

A simple demo: my replication factor is 1 (see the screenshot above), so when I create a new file in HDFS, its replication factor defaults to 1.

$ hdfs dfs -touch /user/tester.txt

Now, to check the replication factor, look at the number 1 just before the user and group (hdfs hdfs) in the listing:

$ hdfs dfs -ls /user/tester.txt

-rw-r--r-- 1 hdfs hdfs 0 2020-10-18 10:21 /user/tester.txt
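If you only want the number rather than the full listing, hdfs dfs -stat with the %r format prints the replication factor of a file, and hdfs dfs -setrep asks HDFS to change it. A small sketch follows; the HDFS_CMD variable and the function names are my own illustrative helpers, and whether re-setting the replication helps in your case depends on at least one healthy replica of each block still being available.

```shell
# Command used to talk to HDFS. HDFS_CMD is a hypothetical helper variable:
# set HDFS_CMD=echo to print the arguments instead of running them.
HDFS_CMD="${HDFS_CMD:-hdfs}"

# Print the replication factor recorded for a file (%r = replication).
show_replication() {
    "$HDFS_CMD" dfs -stat %r "$1"
}

# Set the replication factor of a file: first argument is the factor,
# second is the path. -w waits until the target replication is reached.
set_replication() {
    "$HDFS_CMD" dfs -setrep -w "$1" "$2"
}
```

For example, show_replication /user/tester.txt should print the same number you see in the second column of the -ls output.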

Hope that helps