Support Questions

Why does the number of reducers determined by Hadoop MapReduce differ so much from Tez?

- Labels: Apache Hive, Apache Tez

Created 12-10-2015 06:59 AM

With Hive on Tez, the number of reducers is sometimes very small: the query gets 2000 reducers in Hadoop MapReduce but only 10 in Tez, which makes it take a long time to complete.

hive.exec.reducers.bytes.per.reducer is the same in both cases. Is Tez misjudging the map output size?

How can I solve this problem?
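
Hive's basic reducer estimate is, roughly, an estimated data size divided by hive.exec.reducers.bytes.per.reducer, with at least one reducer and at most hive.exec.reducers.max. The sketch below is a simplified model of that rule; the helper name and all sizes are hypothetical. It illustrates that, with the same bytes-per-reducer value, getting 2000 reducers in one case and 10 in the other implies the size estimate fed into the formula differed by about 200x (assuming the cap was not hit).

```python
import math

MB = 1024 ** 2
GB = 1024 ** 3


def estimate_reducers(estimated_bytes: int, bytes_per_reducer: int, max_reducers: int) -> int:
    """Simplified model of Hive's reducer estimate (hypothetical helper, not Hive's code)."""
    # One reducer per bytes_per_reducer of estimated data, but at least one ...
    reducers = max(1, math.ceil(estimated_bytes / bytes_per_reducer))
    # ... and never more than the hive.exec.reducers.max-style cap.
    return min(reducers, max_reducers)


# Same bytes-per-reducer setting (hypothetical 256 MB), different size estimates:
print(estimate_reducers(500 * GB, 256 * MB, 5000))   # 2000
print(estimate_reducers(2560 * MB, 256 * MB, 5000))  # 10
```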

Created on 12-10-2015 12:12 PM - edited 08-19-2019 05:40 AM

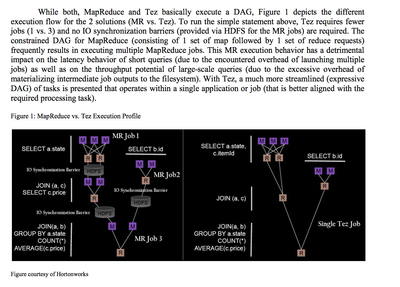

Tez's architecture is different from MapReduce's.

http://hortonworks.com/hadoop/tez/

Tez requires fewer jobs (1 vs. 3), and it needs none of the I/O synchronization barriers that MR jobs get by writing intermediate results to HDFS.

Created 12-10-2015 09:25 PM

If you're experiencing performance issues on Tez, start by checking hive.tez.container.size. We have worked a lot on Hive/Tez performance optimization, and very often you need to check each job individually. Sometimes we lowered hive.tez.container.size to 1024 MB (less memory per container means more containers); other times we needed to set it to 8192 MB. It really depends on your workload.

Hive/Tez optimization can take a long time, but you can achieve good performance by tuning hive.tez.container.size, using ORC (with a suitable compression codec), and pre-warming Tez containers.
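
To see why a smaller hive.tez.container.size can mean more containers, here is a back-of-the-envelope sketch. It assumes each NodeManager offers a fixed amount of memory to YARN and that the scheduler rounds every request up to a multiple of a minimum allocation (1024 MB here); vcores, the Tez AM container and other limits are ignored, and the node size is hypothetical.

```python
import math


def containers_per_node(node_memory_mb: int, container_mb: int, min_allocation_mb: int = 1024) -> int:
    """Rough number of Tez containers one NodeManager can host, by memory only."""
    # Assumption: the scheduler rounds each request up to a multiple of the minimum allocation.
    granted_mb = math.ceil(container_mb / min_allocation_mb) * min_allocation_mb
    # Integer division: how many granted-size containers fit in the node's YARN memory.
    return node_memory_mb // granted_mb


node_mb = 65536  # hypothetical: 64 GB of memory offered to YARN per node
for size_mb in (1024, 4096, 8192):
    print(size_mb, containers_per_node(node_mb, size_mb))
# 1024 64
# 4096 16
# 8192 8
```

More containers let more tasks run at once, but each task gets less memory, so the better setting depends on whether the workload is limited by parallelism or by per-task memory.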

Created 12-11-2015 02:20 AM

@Jun Chen, check whether you have the parameter below turned on:

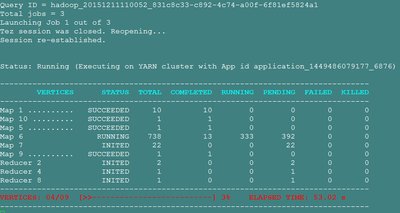

hive.tez.auto.reducer.parallelism: when it's on, Tez automatically decreases the number of reducer tasks based on the map output. You can disable it if you need to.
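
As I understand it, with hive.tez.auto.reducer.parallelism on, Hive starts the reduce vertex with an inflated task count (the estimate times hive.tez.max.partition.factor, capped by hive.exec.reducers.max) and lets Tez shrink it at runtime, but not below the estimate times hive.tez.min.partition.factor. The sketch below is a simplified model of that range, not Hive's or Tez's actual code: the defaults of 2.0, 0.25 and 1009 are what I recall and may differ in your version, and the runtime step is approximated as "observed bytes / bytes per reducer" clamped into the range.

```python
import math

MB = 1024 ** 2
GB = 1024 ** 3


def auto_reducer_range(estimated_reducers: int, max_factor: float = 2.0,
                       min_factor: float = 0.25, max_reducers: int = 1009) -> tuple[int, int]:
    """Initial (upper) and minimum reducer counts under auto parallelism (simplified model)."""
    # Start higher than the estimate, but respect the global cap.
    initial = min(max_reducers, math.ceil(estimated_reducers * max_factor))
    # Lowest count the runtime may shrink to (never above the initial count).
    minimum = min(initial, max(1, math.ceil(estimated_reducers * min_factor)))
    return initial, minimum


def runtime_reducers(observed_bytes: int, bytes_per_reducer: int, initial: int, minimum: int) -> int:
    """Approximate runtime choice: size to the observed output, but only ever shrink."""
    wanted = math.ceil(observed_bytes / bytes_per_reducer)
    return max(minimum, min(initial, wanted))


# Hypothetical case: compile-time estimate of 10 reducers, but 500 GB of real map output.
initial, minimum = auto_reducer_range(10)
print(initial, minimum)                                        # 20 3
print(runtime_reducers(500 * GB, 256 * MB, initial, minimum))  # 20
```

In this model, auto parallelism can only reduce the count from its starting point, so it cannot compensate for an initial estimate that is far too low.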

Created on 12-11-2015 03:09 AM - edited 08-19-2019 05:40 AM

Tez sets very few reducers initially, even before the automatic decrease. The following picture shows the details:

Created 12-11-2015 03:15 AM

I see... I know Tez has a new way to determine the number of mapper tasks, described in the link below, but I'm not sure about the number of reducers. Usually, we set a high number of reducers by default (in Ambari) and enable the auto reducer parallelism parameter; that works well.

https://cwiki.apache.org/confluence/display/TEZ/How+initial+task+parallelism+works
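
For the mapper side, the linked page describes split grouping: Tez aims for a number of tasks equal to the currently available container capacity times a "waves" factor, then keeps each grouped split between a minimum and a maximum size. The sketch below is a simplified model based on that description; the real grouper also considers data locality, and the defaults used here (1.7 for tez.grouping.split-waves, 50 MB for tez.grouping.min-size, 1 GB for tez.grouping.max-size) are what I recall and should be checked for your Tez version.

```python
MB = 1024 ** 2
GB = 1024 ** 3


def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division for non-negative a and positive b."""
    return -(-a // b)


def initial_map_tasks(total_input_bytes: int, available_slots: int, waves: float = 1.7,
                      min_group: int = 50 * MB, max_group: int = 1 * GB) -> int:
    """Simplified model of Tez split grouping for the initial number of map tasks."""
    # Target a few "waves" of tasks relative to the containers currently available.
    desired = max(1, int(available_slots * waves))
    # Groups would be larger than max_group: cap the group size instead.
    if total_input_bytes > desired * max_group:
        return ceil_div(total_input_bytes, max_group)
    # Groups would be smaller than min_group: enforce the minimum group size.
    if total_input_bytes < desired * min_group:
        return max(1, ceil_div(total_input_bytes, min_group))
    return desired


print(initial_map_tasks(100 * GB, 100))   # 170
print(initial_map_tasks(1 * GB, 1000))    # 21
print(initial_map_tasks(1000 * GB, 10))   # 1000
```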

Created 02-03-2016 03:46 PM

@Jun Chen, are you still having issues with this? Can you accept the best answer or provide your own solution?