YARN resource manager: how many node managers can one resource manager support?

Labels: Apache Ambari

Created 06-28-2021 06:12 AM

We have an HDP cluster with 2 resource manager services and 190 node manager services.

HDP version - 2.6.5

YARN version - 2.7.3

Ambari version - 2.6.2.1

Each node manager runs on a Linux VM.

We now want to extend the cluster to 220 node manager machines.

My questions:

Can the resource manager support 220 node manager services (with one node manager service installed per Linux machine)?

What is the maximum number of node manager services that one resource manager can support?

Created on 06-29-2021 07:57 AM - edited 06-29-2021 07:58 AM

Going from 190 to 220 nodes adds 30 DataNodes and node managers. In theory, the resource manager is responsible for resource management and consists of two components, the scheduler and the application manager:

The scheduler allocates resources:

It has extensive information about an application's resource needs, which allows it to make scheduling decisions across all applications in the cluster. Essentially, an application can ask for specific resource requests, via the YARN application master, to satisfy its resource needs. The scheduler responds to a resource request by granting a container, which satisfies the requirements laid out by the application master in the initial resource request.

The application manager:

Accepts job submissions, negotiates the first container for running the application-specific application master, and restarts the application master container on failure.

Node managers

A node manager is a per-machine (or per-VM) framework agent responsible for managing the resources available on a single node. It monitors resource usage for containers and reports to the scheduler within the resource manager. You can have many node managers; just make sure each host keeps enough memory reserved for the host OS to function.
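To make the "reserve memory for the host OS" point concrete, here is a rough sketch of the per-node sizing heuristic commonly cited in the HDP memory configuration guidance: containers = min(2 x cores, 1.8 x disks, available RAM / minimum container size). The function name, its parameters, and the example hardware figures are assumptions for illustration only, not values from this cluster; check the reserved-memory and minimum-container-size recommendations for your own hosts.

```python
def yarn_node_sizing(total_ram_gb, reserved_gb, cores, disks, min_container_mb):
    """Estimate per-node YARN memory settings (illustrative helper, not an official tool).

    reserved_gb is the memory kept back for the host OS (and any other
    services on the node); it is an input here, not something this code derives.
    """
    available_mb = int((total_ram_gb - reserved_gb) * 1024)
    if available_mb <= 0:
        raise ValueError("reserved memory must be smaller than total RAM")

    # Heuristic: the container count is bounded by CPU, disks, and memory.
    containers = int(min(2 * cores, 1.8 * disks, available_mb / min_container_mb))
    containers = max(containers, 1)

    # Each container gets at least the minimum container size.
    ram_per_container_mb = max(min_container_mb, available_mb // containers)

    return {
        "containers": containers,
        "ram_per_container_mb": ram_per_container_mb,
        "yarn.nodemanager.resource.memory-mb": containers * ram_per_container_mb,
    }


if __name__ == "__main__":
    # Hypothetical node: 64 GB RAM, 8 GB reserved, 16 cores, 8 disks, 2 GB minimum container.
    print(yarn_node_sizing(64, 8, 16, 8, 2048))
```

With those hypothetical inputs the disk bound wins (1.8 x 8 = 14.4, so 14 containers of 4096 MB each). This sizes a single node manager host; it says nothing by itself about how many node managers a resource manager can track.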

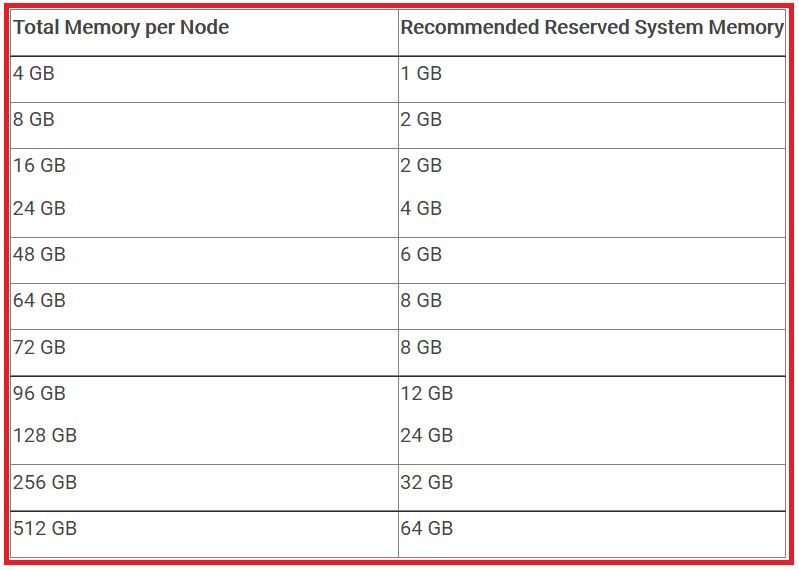

Just like NameNodes, resource managers need to be high-spec servers. If you stick to some basics, like those in the table shown in this post, your 2 RMs can handle 200 nodes with ease. Take the NameNode, ZooKeeper, and HBase memory configs into consideration as well.

I remember running 300 DataNodes/node managers on a project 2 years ago with exactly the same setup as yours.

Hope that helps

Created 06-30-2021 05:10 AM

Can you please share the link or doc that describes the table above?

Created 06-30-2021 08:48 AM

Here you go: how to determine YARN and MapReduce Memory Configuration Settings

Happy hadooping