ambari-agent connection refused after host reboot

Labels: Apache Ambari

Created on 05-24-2019 02:18 PM - edited 08-17-2019 03:18 PM

I have a single-node Ambari-managed cluster that was working correctly: the server, host and components all started automatically when the system was restarted.

I changed the machine to a public IP and a different hostname. After solving the FQDN problems, I now have a problem with auto start on reboot. The Ambari server and agent both start automatically, but the heartbeat is lost and the ambari-agent logs show connection refused. If I manually restart ambari-agent, the connection works and I can start services.

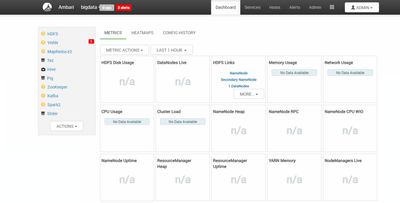

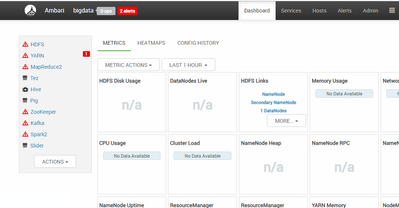

This is the ambari-server UI just after rebooting.

The tail of the ambari-agent log shows the following.

ERROR 2019-05-24 12:28:47,235 script_alert.py:119 - [Alert][hive_metastore_process] Failed with result CRITICAL: ['Metastore on bigdata.es failed (Traceback (most recent call last):\n File "/var/lib/ambari-agent/cache/common-services/HIVE/0.12.0.2.0/package/alerts/alert_hive_metastore.py", line 200, in execute\n timeout_kill_strategy=TerminateStrategy.KILL_PROCESS_TREE,\n File "/usr/lib/python2.6/site-packages/resource_management/core/base.py", line 155, in __init__\n self.env.run()\n File "/usr/lib/python2.6/site-packages/resource_management/core/environment.py", line 160, in run\n self.run_action(resource, action)\n File "/usr/lib/python2.6/site-packages/resource_management/core/environment.py", line 124, in run_action\n provider_action()\n File "/usr/lib/python2.6/site-packages/resource_management/core/providers/system.py", line 262, in action_run\n tries=self.resource.tries, try_sleep=self.resource.try_sleep)\n File "/usr/lib/python2.6/site-packages/resource_management/core/shell.py", line 72, in inner\n result = function(command, **kwargs)\n File "/usr/lib/python2.6/site-packages/resource_management/core/shell.py", line 102, in checked_call\n tries=tries, try_sleep=try_sleep, timeout_kill_strategy=timeout_kill_strategy)\n File "/usr/lib/python2.6/site-packages/resource_management/core/shell.py", line 150, in _call_wrapper\n result = _call(command, **kwargs_copy)\n File "/usr/lib/python2.6/site-packages/resource_management/core/shell.py", line 303, in _call\n raise ExecutionFailed(err_msg, code, out, err)\nExecutionFailed: Execution of \'export HIVE_CONF_DIR=\'/usr/hdp/current/hive-metastore/conf/conf.server\' ; hive --hiveconf hive.metastore.uris=thrift://bigdata.es:9083 --hiveconf hive.metastore.client.connect.retry.delay=1 --hiveconf hive.metastore.failure.retries=1 --hiveconf hive.metastore.connect.retries=1 --hiveconf hive.metastore.client.socket.timeout=14 --hiveconf hive.execution.engine=mr -e \'show databases;\'\' returned 1. 
Logging initialized using configuration in file:/etc/hive/2.6.3.0-71/0/conf.server/hive-log4j.properties\nException in thread "main" java.lang.RuntimeException: java.lang.RuntimeException: Unable to instantiate org.apache.hadoop.hive.ql.metadata.SessionHiveMetaStoreClient\n\tat org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:547)\n\tat org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:681)\n\tat org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:625)\n\tat sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)\n\tat sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)\n\tat sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)\n\tat java.lang.reflect.Method.invoke(Method.java:498)\n\tat org.apache.hadoop.util.RunJar.run(RunJar.java:233)\n\tat org.apache.hadoop.util.RunJar.main(RunJar.java:148)\nCaused by: java.lang.RuntimeException: Unable to instantiate org.apache.hadoop.hive.ql.metadata.SessionHiveMetaStoreClient\n\tat org.apache.hadoop.hive.metastore.MetaStoreUtils.newInstance(MetaStoreUtils.java:1566)\n\tat org.apache.hadoop.hive.metastore.RetryingMetaStoreClient.<init>(RetryingMetaStoreClient.java:92)\n\tat org.apache.hadoop.hive.metastore.RetryingMetaStoreClient.getProxy(RetryingMetaStoreClient.java:138)\n\tat org.apache.hadoop.hive.metastore.RetryingMetaStoreClient.getProxy(RetryingMetaStoreClient.java:110)\n\tat org.apache.hadoop.hive.ql.metadata.Hive.createMetaStoreClient(Hive.java:3510)\n\tat org.apache.hadoop.hive.ql.metadata.Hive.getMSC(Hive.java:3542)\n\tat org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:528)\n\t... 
8 more\nCaused by: java.lang.reflect.InvocationTargetException\n\tat sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)\n\tat sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)\n\tat sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)\n\tat java.lang.reflect.Constructor.newInstance(Constructor.java:423)\n\tat org.apache.hadoop.hive.metastore.MetaStoreUtils.newInstance(MetaStoreUtils.java:1564)\n\t... 14 more\nCaused by: MetaException(message:Could not connect to meta store using any of the URIs provided. Most recent failure: org.apache.thrift.transport.TTransportException: java.net.ConnectException: Conexi\xc3\xb3n rehusada (Connection refused)\n\tat org.apache.thrift.transport.TSocket.open(TSocket.java:226)\n\tat org.apache.hadoop.hive.metastore.HiveMetaStoreClient.open(HiveMetaStoreClient.java:487)\n\tat org.apache.hadoop.hive.metastore.HiveMetaStoreClient.<init>(HiveMetaStoreClient.java:282)\n\tat org.apache.hadoop.hive.ql.metadata.SessionHiveMetaStoreClient.<init>(SessionHiveMetaStoreClient.java:76)\n\tat sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)\n\tat sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)\n\tat sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)\n\tat java.lang.reflect.Constructor.newInstance(Constructor.java:423)\n\tat org.apache.hadoop.hive.metastore.MetaStoreUtils.newInstance(MetaStoreUtils.java:1564)\n\tat org.apache.hadoop.hive.metastore.RetryingMetaStoreClient.<init>(RetryingMetaStoreClient.java:92)\n\tat org.apache.hadoop.hive.metastore.RetryingMetaStoreClient.getProxy(RetryingMetaStoreClient.java:138)\n\tat org.apache.hadoop.hive.metastore.RetryingMetaStoreClient.getProxy(RetryingMetaStoreClient.java:110)\n\tat org.apache.hadoop.hive.ql.metadata.Hive.createMetaStoreClient(Hive.java:3510)\n\tat 
org.apache.hadoop.hive.ql.metadata.Hive.getMSC(Hive.java:3542)\n\tat org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:528)\n\tat org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:681)\n\tat org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:625)\n\tat sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)\n\tat sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)\n\tat sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)\n\tat java.lang.reflect.Method.invoke(Method.java:498)\n\tat org.apache.hadoop.util.RunJar.run(RunJar.java:233)\n\tat org.apache.hadoop.util.RunJar.main(RunJar.java:148)\nCaused by: java.net.ConnectException: Conexi\xc3\xb3n rehusada (Connection refused)\n\tat java.net.PlainSocketImpl.socketConnect(Native Method)\n\tat java.net.AbstractPlainSocketImpl.doConnect(AbstractPlainSocketImpl.java:350)\n\tat java.net.AbstractPlainSocketImpl.connectToAddress(AbstractPlainSocketImpl.java:206)\n\tat java.net.AbstractPlainSocketImpl.connect(AbstractPlainSocketImpl.java:188)\n\tat java.net.SocksSocketImpl.connect(SocksSocketImpl.java:392)\n\tat java.net.Socket.connect(Socket.java:589)\n\tat org.apache.thrift.transport.TSocket.open(TSocket.java:221)\n\t... 22 more\n)\n\tat org.apache.hadoop.hive.metastore.HiveMetaStoreClient.open(HiveMetaStoreClient.java:534)\n\tat org.apache.hadoop.hive.metastore.HiveMetaStoreClient.<init>(HiveMetaStoreClient.java:282)\n\tat org.apache.hadoop.hive.ql.metadata.SessionHiveMetaStoreClient.<init>(SessionHiveMetaStoreClient.java:76)\n\t... 19 more\n)']

INFO 2019-05-24 12:28:58,095 logger.py:71 - call[['test', '-w', '/dev']] {'sudo': True, 'quiet': False, 'timeout': 5}

INFO 2019-05-24 12:28:58,108 logger.py:71 - call returned (0, '')

INFO 2019-05-24 12:28:58,119 logger.py:71 - call[['test', '-w', '/']] {'sudo': True, 'quiet': False, 'timeout': 5}

INFO 2019-05-24 12:28:58,131 logger.py:71 - call returned (0, '')

INFO 2019-05-24 12:28:58,143 logger.py:71 - call[['test', '-w', '/Datos']] {'sudo': True, 'quiet': False, 'timeout': 5}

INFO 2019-05-24 12:28:58,154 logger.py:71 - call returned (0, '')

ERROR 2019-05-24 12:29:39,995 script_alert.py:119 - [Alert][hive_webhcat_server_status] Failed with result CRITICAL: ['Connection failed to http://bigdata.es:50111/templeton/v1/status?user.name=ambari-qa + \nTraceback (most recent call last):\n File "/var/lib/ambari-agent/cache/common-services/HIVE/0.12.0.2.0/package/alerts/alert_webhcat_server.py", line 190, in execute\n url_response = urllib2.urlopen(query_url, timeout=connection_timeout)\n File "/usr/lib/python2.7/urllib2.py", line 154, in urlopen\n return opener.open(url, data, timeout)\n File "/usr/lib/python2.7/urllib2.py", line 429, in open\n response = self._open(req, data)\n File "/usr/lib/python2.7/urllib2.py", line 447, in _open\n \'_open\', req)\n File "/usr/lib/python2.7/urllib2.py", line 407, in _call_chain\n result = func(*args)\n File "/usr/lib/python2.7/urllib2.py", line 1228, in http_open\n return self.do_open(httplib.HTTPConnection, req)\n File "/usr/lib/python2.7/urllib2.py", line 1198, in do_open\n raise URLError(err)\nURLError: <urlopen error [Errno 111] Conexi\xc3\xb3n rehusada>\n']

ERROR 2019-05-24 12:29:40,000 script_alert.py:119 - [Alert][yarn_nodemanager_health] Failed with result CRITICAL: ['Connection failed to http://bigdata.es:8042/ws/v1/node/info (Traceback (most recent call last):\n File "/var/lib/ambari-agent/cache/common-services/YARN/2.1.0.2.0/package/alerts/alert_nodemanager_health.py", line 171, in execute\n url_response = urllib2.urlopen(query, timeout=connection_timeout)\n File "/usr/lib/python2.7/urllib2.py", line 154, in urlopen\n return opener.open(url, data, timeout)\n File "/usr/lib/python2.7/urllib2.py", line 429, in open\n response = self._open(req, data)\n File "/usr/lib/python2.7/urllib2.py", line 447, in _open\n \'_open\', req)\n File "/usr/lib/python2.7/urllib2.py", line 407, in _call_chain\n result = func(*args)\n File "/usr/lib/python2.7/urllib2.py", line 1228, in http_open\n return self.do_open(httplib.HTTPConnection, req)\n File "/usr/lib/python2.7/urllib2.py", line 1198, in do_open\n raise URLError(err)\nURLError: <urlopen error [Errno 111] Conexi\xc3\xb3n rehusada>\n)']

INFO 2019-05-24 13:30:00,155 main.py:96 - loglevel=logging.INFO

INFO 2019-05-24 13:30:00,157 main.py:96 - loglevel=logging.INFO

INFO 2019-05-24 13:30:00,157 main.py:96 - loglevel=logging.INFO

INFO 2019-05-24 13:30:00,159 DataCleaner.py:39 - Data cleanup thread started

INFO 2019-05-24 13:30:00,164 DataCleaner.py:120 - Data cleanup started

INFO 2019-05-24 13:30:00,295 DataCleaner.py:122 - Data cleanup finished

INFO 2019-05-24 13:30:00,314 PingPortListener.py:50 - Ping port listener started on port: 8670

INFO 2019-05-24 13:30:00,314 main.py:132 - Newloglevel=logging.DEBUG

INFO 2019-05-24 13:30:00,314 main.py:405 - Connecting to Ambari server at https://bigdata.es:8440 (10.61.2.10)

DEBUG 2019-05-24 13:30:00,314 NetUtil.py:110 - Trying to connect to https://bigdata.es:8440

INFO 2019-05-24 13:30:00,315 NetUtil.py:67 - Connecting to https://bigdata.es:8440/ca

WARNING 2019-05-24 13:30:00,317 NetUtil.py:98 - Failed to connect to https://bigdata.es:8440/ca due to [Errno 111] Conexión rehusada

WARNING 2019-05-24 13:30:00,317 NetUtil.py:121 - Server at https://bigdata.es:8440 is not reachable, sleeping for 10 seconds...

And the ambari-agent.ini config is the following:

[server]

hostname = bigdata.es

url_port = 8440

secured_url_port = 8441

connect_retry_delay = 10

max_reconnect_retry_delay = 30

[agent]

logdir = /var/log/ambari-agent

piddir = /var/run/ambari-agent

prefix = /var/lib/ambari-agent/data

loglevel = DEBUG

data_cleanup_interval = 86400

data_cleanup_max_age = 2592000

data_cleanup_max_size_mb = 100

ping_port = 8670

cache_dir = /var/lib/ambari-agent/cache

tolerate_download_failures = true

run_as_user = root

parallel_execution = 0

alert_grace_period = 5

status_command_timeout = 5

alert_kinit_timeout = 14400000

system_resource_overrides = /etc/resource_overrides

[security]

keysdir = /var/lib/ambari-agent/keys

server_crt = ca.crt

passphrase_env_var_name = AMBARI_PASSPHRASE

ssl_verify_cert = 0

credential_lib_dir = /var/lib/ambari-agent/cred/lib

credential_conf_dir = /var/lib/ambari-agent/cred/conf

credential_shell_cmd = org.apache.hadoop.security.alias.CredentialShell

force_https_protocol = PROTOCOL_TLSv1_2

[services]

pidlookuppath = /var/run/

[heartbeat]

state_interval_seconds = 60

dirs = /etc/hadoop,/etc/hadoop/conf,/etc/hbase,/etc/hcatalog,/etc/hive,/etc/oozie, /etc/sqoop, /var/run/hadoop,/var/run/zookeeper,/var/run/hbase,/var/run/templeton,/var/run/oozie, /var/log/hadoop,/var/log/zookeeper,/var/log/hbase,/var/run/templeton,/var/log/hive

log_lines_count = 300

idle_interval_min = 1

idle_interval_max = 10

[logging]

syslog_enabled = 0

If I run sudo ambari-agent restart, the agent connects and I can start services in the ambari-server UI.
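Since the agent log shows the connection to port 8440 being refused right after boot, one way to narrow this down is to check when the server port actually becomes reachable. The following is a minimal Python sketch, not part of Ambari: `server_endpoint` and `wait_for_port` are hypothetical helper names, and it assumes the `[server]` section contains the `hostname` and `url_port` keys shown in the ini above.

```python
import configparser
import socket
import time


def server_endpoint(ini_text):
    """Return (hostname, url_port) from the [server] section of ini text."""
    # interpolation=None so that a literal '%' in any value is left alone
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(ini_text)
    return parser.get("server", "hostname"), parser.getint("server", "url_port")


def wait_for_port(host, port, attempts=30, delay=10.0, timeout=3.0):
    """Try a plain TCP connect up to `attempts` times; True once one succeeds."""
    for attempt in range(attempts):
        try:
            with socket.create_connection((host, port), timeout=timeout):
                return True
        except OSError:
            # Connection refused, timeout, or name resolution failure
            if attempt < attempts - 1:
                time.sleep(delay)
    return False


# Inline sample mirroring the [server] section posted above
sample_ini = """[server]
hostname = bigdata.es
url_port = 8440
"""
host, port = server_endpoint(sample_ini)
print(host, port)
```

On the real host, the text of /etc/ambari-agent/conf/ambari-agent.ini could be passed to `server_endpoint` and the result to `wait_for_port(host, port)`. If the port only becomes reachable well after the agent has started, that would point to a start-order issue at boot rather than a hostname problem, though this is only a diagnostic hint.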

Created 05-28-2019 03:47 PM

I have already made the /etc/hosts modifications, with this result:

bigdata@bigdata:~$ cat /etc/hosts

IP FQDN ALIAS

------------------------------------------------

10.61.2.10 bigdatapruebas.es bigdata.es

#10.61.2.10 bigdatapruebas.es #bigdata.es bigdata bigdata.pruebasenergia.es

::1 localhost ip6-localhost ip6-loopback

ff02::1 ip6-allnodes

ff02::02 ip6-allrouters

bigdata@bigdata:~$ hotname -f

Command «hotname» not found, did you mean:

Command «hostname» from package «hostname» (main)

hotname: command not found

bigdata@bigdata:~$ hostname -f

bigdatapruebas.es

bigdata@bigdata:~$

But the same thing happens. It works right after applying the changes, but after a reboot it stops working until I manually restart the agent 😞
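To double-check which name a hosts file treats as canonical for an address, the file can be parsed directly. This is a small illustrative sketch: `parse_hosts` and `canonical_name` are hypothetical helpers, and the sample text is a reduced copy of the file shown above, not the full original.

```python
def parse_hosts(text):
    """Map each IP address to the list of names on its hosts-file line(s)."""
    entries = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        fields = line.split()
        if len(fields) < 2:
            continue  # blank, comment-only, or malformed line
        entries.setdefault(fields[0], []).extend(fields[1:])
    return entries


def canonical_name(text, ip):
    """Return the first name listed for `ip`, or None if the IP is absent."""
    names = parse_hosts(text).get(ip)
    return names[0] if names else None


sample_hosts = """\
10.61.2.10 bigdatapruebas.es bigdata.es
#10.61.2.10 bigdatapruebas.es
::1 localhost ip6-localhost ip6-loopback
"""
print(canonical_name(sample_hosts, "10.61.2.10"))
```

The first name on the line is normally the one that should match the output of hostname -f and the hostname registered in Ambari; any extra aliases are worth comparing against what the agent and server are configured to use.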

Created 01-27-2021 11:17 AM

Updating the Ambari DB directly is not recommended, but in this case the command 'ambari-server update-host-names host_names_changes.json' was not much help, so we can perform the actions below after taking a backup of the Ambari DB. I tested this and it worked.

Note: Please take a DB backup first, and only do this at your own risk.

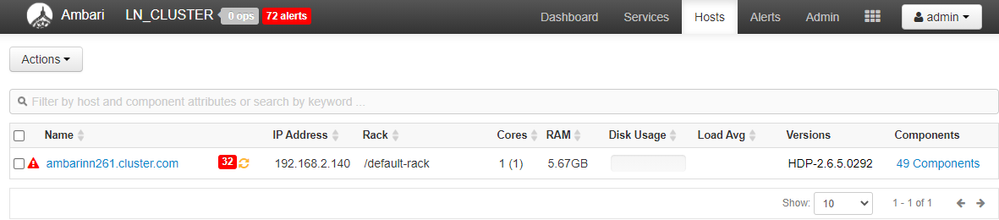

In this case, the old host_name is ambarinn.cluster.com and the new name is ambarinn261.cluster.com.

We need to concentrate on the host whose health_status says "healthStatus":"UNKNOWN".

Steps to resolve:

With ambari-agent and ambari-server successfully stopped:

[root@ambarinn261 ~]# su - postgres

Last login: Wed Jan 27 13:14:40 EST 2021 on pts/0

-bash-4.2$ psql

psql (9.2.24)

Type "help" for help.

postgres=# \c ambari

You are now connected to database "ambari" as user "postgres".

ambari=# select host_id,host_name,discovery_status,last_registration_time,public_host_name from ambari.hosts;

host_id | host_name | discovery_status | last_registration_time | public_host_name

---------+-------------------------+------------------+------------------------+-------------------------

201 | ambarinn261.cluster.com | | 1611772466200 | ambarinn261.cluster.com

1 | ambarinn.cluster.com | | 1611768543457 | ambarinn.cluster.com

(2 rows)

ambari=# select * from ambari.hoststate;

agent_version | available_mem | current_state | health_status | host_id | time_in_state | maintenance_state

-----------------------+---------------+---------------+----------------------------------------------+---------+---------------+-------------------

{"version":"2.6.1.5"} | 279948 | INIT | {"healthStatus":"HEALTHY","healthReport":""} | 201 | 1611772466200 |

{"version":"2.6.1.5"} | 2120176 | INIT | {"healthStatus":"UNKNOWN","healthReport":""} | 1 | 1611768543457 |

(2 rows)

ambari=# UPDATE ambari.hosts SET host_name='ambarinn261.cluster.com' WHERE host_id=1;

ERROR: duplicate key value violates unique constraint "uq_hosts_host_name"

DETAIL: Key (host_name)=(ambarinn261.cluster.com) already exists.

ambari=# UPDATE ambari.hosts SET public_host_name='ambarinn261.cluster.com' WHERE host_id=1;

UPDATE 1

ambari=# UPDATE ambari.hosts SET public_host_name='ambarinn261a.cluster.com' WHERE host_id=201;

UPDATE 1

ambari=# UPDATE ambari.hosts SET host_name='ambarinn261a.cluster.com' WHERE host_id=201;

UPDATE 1

ambari=# UPDATE ambari.hosts SET host_name='ambarinn261.cluster.com' WHERE host_id=1;

UPDATE 1

ambari=# \q

-bash-4.2$ exit

logout

[root@ambarinn261 ~]# ambari-server start
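The first UPDATE in the session above fails because host_name carries a unique constraint, which is why the duplicate row is renamed out of the way before the stale row takes the new name. Below is a minimal sketch of that ordering, using an in-memory SQLite table purely as a stand-in. The real Ambari database is PostgreSQL with a much larger schema; the three-column table and the `rename_host` helper here are hypothetical simplifications.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE hosts ("
    "host_id INTEGER PRIMARY KEY, "
    "host_name TEXT UNIQUE, "       # mirrors the uq_hosts_host_name constraint
    "public_host_name TEXT)"
)
conn.executemany(
    "INSERT INTO hosts VALUES (?, ?, ?)",
    [
        (201, "ambarinn261.cluster.com", "ambarinn261.cluster.com"),
        (1, "ambarinn.cluster.com", "ambarinn.cluster.com"),
    ],
)


def rename_host(connection, host_id, new_name):
    """Set both name columns of one row to `new_name`."""
    connection.execute(
        "UPDATE hosts SET host_name = ?, public_host_name = ? WHERE host_id = ?",
        (new_name, new_name, host_id),
    )


# Renaming host 1 directly collides with the row that already holds the name.
try:
    rename_host(conn, 1, "ambarinn261.cluster.com")
    collided = False
except sqlite3.IntegrityError:
    collided = True

# Move the duplicate aside first, then give host 1 the wanted name.
with conn:
    rename_host(conn, 201, "ambarinn261a.cluster.com")
    rename_host(conn, 1, "ambarinn261.cluster.com")

rows = conn.execute(
    "SELECT host_id, host_name FROM hosts ORDER BY host_id"
).fetchall()
print(collided, rows)
```

Running both renames inside one transaction means a failure halfway through leaves the table unchanged, which seems like a sensible precaution for the real database as well, in addition to the backup.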

Created 05-28-2019 05:00 PM

Let's discard the ALIAS. I suggest removing it completely so that your /etc/hosts looks like this:

10.61.2.10 bigdatapruebas.es

::1 localhost ip6-localhost ip6-loopback

ff02::1 ip6-allnodes

ff02::02 ip6-allrouters

Stop the ambari-server and agent

# ambari-server stop

# ambari-agent stop

Create a host_names_changes.json file with the hostname changes.

Contents of the host_names_changes.json

# Ambari host name change

{

"bigdata" : {

"old_name_here" : "bigdatapruebas.es"

}

}
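The template above maps a cluster name to pairs of old and new host names. As a hedged sketch, the file content could be generated and sanity-checked like this. `build_host_changes` is a hypothetical helper, and the old name bigdata.es is an assumption taken from the agent config earlier in the thread; the reply itself leaves the old name as a placeholder.

```python
import json


def build_host_changes(cluster_name, renames):
    """Return JSON text mapping old host names to new ones for one cluster."""
    for old, new in renames.items():
        if not old or not new:
            raise ValueError("host names must be non-empty")
        if old == new:
            raise ValueError(f"old and new names are identical: {old}")
    return json.dumps({cluster_name: dict(renames)}, indent=2)


# Assumed values: cluster "bigdata", old name "bigdata.es"
changes_text = build_host_changes("bigdata", {"bigdata.es": "bigdatapruebas.es"})
print(changes_text)
```

The printed text can be saved as host_names_changes.json. Generating it this way mainly guards against invalid JSON or a forgotten placeholder before the file is handed to ambari-server update-host-names.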

Update the host name for the various components

# Hive

grant all privileges on hive.* to 'hive'@'bigdatapruebas.es' identified by 'hive_password';

grant all privileges on hive.* to 'hive'@'bigdatapruebas.es' with grant option;

# Oozie

grant all privileges on oozie.* to 'hive'@'bigdatapruebas.es' identified by 'oozie_password';

grant all privileges on oozie.* to 'hive'@'bigdatapruebas.es' with grant option;

# Ranger

grant all privileges on ranger.* to 'hive'@'bigdatapruebas.es' identified by 'ranger_password';

grant all privileges on ranger.* to 'hive'@'bigdatapruebas.es' with grant option;

# Rangerkms

grant all privileges on rangerkms.* to 'hive'@'bigdatapruebas.es' identified by 'rangerkms_password';

grant all privileges on rangerkms.* to 'hive'@'bigdatapruebas.es' with grant option;

Change these 3 values in /etc/ambari-server/conf/ambari.properties to the new hostname

server.jdbc.rca.url=

server.jdbc.url=jdbc=

server.jdbc.hostname=

Edit ambari-agent.ini and, under [server], replace the hostname with the new Ambari hostname

[server]

hostname=bigdatapruebas.es

url_port=8440

secured_url_port=8441

connect_retry_delay=10

max_reconnect_retry_delay=30

Start the ambari-agent

# ambari-agent start

Start the Ambari server

# ambari-server start

Open the web browser

http://bigdatapruebas.es:8080

Start all components

Please reply with the results.

Created 05-29-2019 12:52 PM

I did it and I think everything is set correctly; the terminal output is below. At first I thought it was working, but the heartbeat was lost again after rebooting.

bigdata@bigdata:~$ hostname -f

bigdata.pruebasenergia.es

bigdata@bigdata:~$ sudo cat /etc/hosts

10.61.2.10 bigdata.pruebasenergia.es

::1 localhost ip6-localhost ip6-loopback

ff02::1 ip6-allnodes

ff02::02 ip6-allrouters

bigdata@bigdata:~$ sudo ambari-server stop

Using python /usr/bin/python

Stopping ambari-server

Ambari Server is not running

bigdata@bigdata:~$

bigdata@bigdata:~$

bigdata@bigdata:~$ sudo ambari-agent stop

Verifying Python version compatibility...

Using python /usr/bin/python

Found ambari-agent PID: 1601

Stopping ambari-agent

Removing PID file at /run/ambari-agent/ambari-agent.pid

ambari-agent successfully stopped

bigdata@bigdata:~$ sudo vi host_names_changes.json

{

"bigdata" : {

"bigdatapruebas.es" : "bigdata.pruebasenergia.es"

}

}

bigdata@bigdata:~$

bigdata@bigdata:~$ sudo vi /etc/ambari-server/conf/ambari.properties

bigdata@bigdata:~$ cat /etc/ambari-server/conf/ambari.properties | grep server.jdbc

server.jdbc.connection-pool=internal

server.jdbc.database=postgres

server.jdbc.database_name=ambari

server.jdbc.driver=org.postgresql.Driver

server.jdbc.driver.path=/usr/ambari/postgresql.jar

server.jdbc.hostname=bigdata.pruebasenergia.es

server.jdbc.port=5432

server.jdbc.postgres.schema=ambari

server.jdbc.rca.driver=org.postgresql.Driver

server.jdbc.rca.url=jdbc:postgresql://bigdata.pruebasenergia.es:5432/ambari

server.jdbc.rca.user.name=ambari

server.jdbc.rca.user.passwd=/etc/ambari-server/conf/password.dat

server.jdbc.url=jdbc:postgresql://bigdata.pruebasenergia.es:5432/ambari

server.jdbc.user.name=ambari

server.jdbc.user.passwd=/etc/ambari-server/conf/password.dat

bigdata@bigdata:~$

bigdata@bigdata:~$

bigdata@bigdata:~$ sudo vi /etc/ambari-agent/conf/ambari-agent.ini

bigdata@bigdata:~$ cat /etc/ambari-agent/conf/ambari-agent.ini | grep hostname

hostname = bigdata.pruebasenergia.es

bigdata@bigdata:~$

bigdata@bigdata:~$

bigdata@bigdata:~$ sudo ambari-server update-host-names host_names_changes.json

Using python /usr/bin/python

Updating host names

Please, confirm Ambari services are stopped [y/n] (n)? y

Please, confirm there are no pending commands on cluster [y/n] (n)? y

Please, confirm you have made backup of the Ambari db [y/n] (n)? y

Ambari Server 'update-host-names' completed successfully.

bigdata@bigdata:~$

bigdata@bigdata:~$

bigdata@bigdata:~$ sudo ambari-agent start

Verifying Python version compatibility...

Using python /usr/bin/python

Checking for previously running Ambari Agent...

ERROR: ambari-agent already running

Check /run/ambari-agent/ambari-agent.pid for PID.

bigdata@bigdata:~$

bigdata@bigdata:~$ sudo ambari-server start

Using python /usr/bin/python

Starting ambari-server

Ambari Server running with administrator privileges.

Organizing resource files at /var/lib/ambari-server/resources...

Ambari database consistency check started...

Server PID at: /var/run/ambari-server/ambari-server.pid

Server out at: /var/log/ambari-server/ambari-server.out

Server log at: /var/log/ambari-server/ambari-server.log

Waiting for server start.........

............

Server started listening on 8080

DB configs consistency check found warnings. See /var/log/ambari-server/ambari-server-check-database.log for more details.

Ambari Server 'start' completed successfully.

bigdata@bigdata:~$

In the database I have granted all the privileges.
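The JDBC settings shown in the terminal output can also be cross-checked with a short script rather than by eye. This is a rough sketch under stated assumptions: `parse_properties` and `jdbc_mismatches` are hypothetical helpers, the three keys are the ones named earlier in the thread, and the check is a plain substring match on the expected hostname.

```python
JDBC_KEYS = ("server.jdbc.hostname", "server.jdbc.url", "server.jdbc.rca.url")


def parse_properties(text):
    """Parse simple key=value lines, skipping blanks and # comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        props[key.strip()] = value.strip()
    return props


def jdbc_mismatches(text, expected_host):
    """Return the JDBC keys whose value does not contain `expected_host`."""
    props = parse_properties(text)
    return [key for key in JDBC_KEYS if expected_host not in props.get(key, "")]


# Reduced copy of the grep output posted above
sample_props = """\
server.jdbc.hostname=bigdata.pruebasenergia.es
server.jdbc.port=5432
server.jdbc.rca.url=jdbc:postgresql://bigdata.pruebasenergia.es:5432/ambari
server.jdbc.url=jdbc:postgresql://bigdata.pruebasenergia.es:5432/ambari
"""
print(jdbc_mismatches(sample_props, "bigdata.pruebasenergia.es"))
```

An empty list means all three entries mention the expected hostname. With the values posted above that is the case, which suggests the remaining problem lies elsewhere, for example in the start order at boot.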

Created 05-29-2019 04:29 PM

Ping me on LinkedIn; I could help you remotely.

Created 06-10-2019 10:27 AM

Can you share the entries below from your ambari-agent.ini, along with your ambari.properties?

[agent] ... [security] ... [heartbeat] ... [logging]

Please reply.
