Ambari Metrics Collector does not display metrics after moving to distributed mode

Created on 02-20-2020 12:18 PM - last edited on 02-20-2020 01:29 PM by cjervis

Hi all,

We have HDP version 2.6.5 and Ambari 2.6.2.2.

We configured the following:

Metrics Service operation mode=distributed

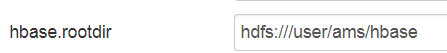

hbase.rootdir=hdfs://hdfsha:8020/user/ams/hbase

and everything else according to https://cwiki.apache.org/confluence/display/AMBARI/AMS+-+distributed+mode

So Ambari Metrics is configured to store its data on HDFS instead of the local disk.

After restarting the Metrics Collector service, we saw the following in the logs:

2020-02-20 20:09:15,770 INFO [timeline] timeline.HadoopTimelineMetricsSink: No live collector to send metrics to. Metrics to be sent will be discarded. This message will be skipped for the next 20 times.

2020-02-20 20:11:35,769 INFO [timeline] timeline.HadoopTimelineMetricsSink: No live collector to send metrics to. Metrics to be sent will be discarded. This message will be skipped for the next 20 times.

and

2020-02-20 20:30:10,306 INFO org.apache.hadoop.metrics2.impl.MetricsSystemImpl: phoenix metrics system started

2020-02-20 20:30:10,450 WARN org.apache.hadoop.hbase.io.util.HeapMemorySizeUtil: hbase.regionserver.global.memstore.upperLimit is deprecated by hbase.regionserver.global.memstore.size

2020-02-20 20:30:15,327 WARN org.apache.hadoop.hbase.io.util.HeapMemorySizeUtil: hbase.regionserver.global.memstore.upperLimit is deprecated by hbase.regionserver.global.memstore.size

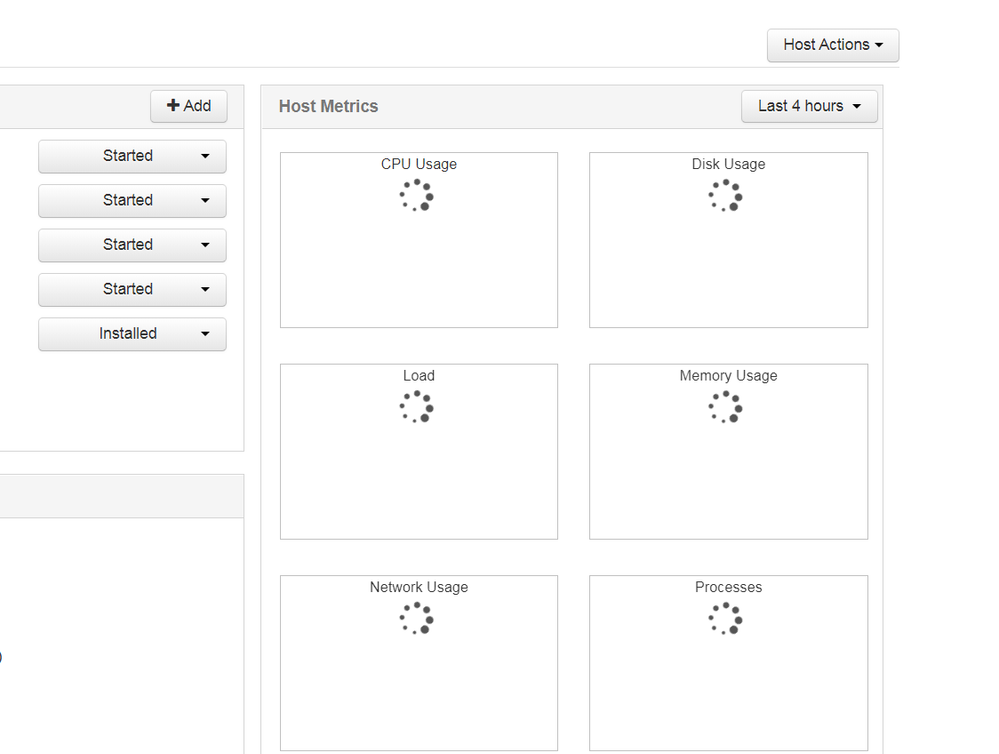

No metrics were displayed, and after some time the Metrics Collector service failed.

Why does this happen?

Note: when the metrics data was stored on the local disk, everything worked fine.

Is this because accessing data on HDFS is slower?

Created on 02-20-2020 02:47 PM - edited 02-20-2020 02:52 PM

This does not look right. With an HDFS HA nameservice we do not use a port number, because "hdfsha" is not a hostname but just a logical name.

hbase.rootdir=hdfs://hdfsha:8020/user/ams/hbase

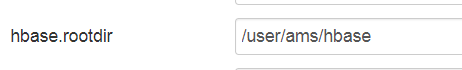

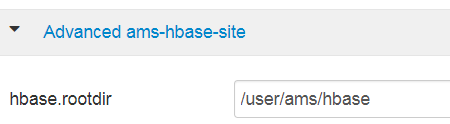

If your NameService name is "hdfsha" (defined in "Custom core-site" as "dfs.nameservices=hdfsha"), then ideally you should use the following in your AMS configuration, under "Advanced ams-hbase-site":

hbase.rootdir=/user/ams/hbase

Because your AMS mode is "distributed", AMS will automatically assume that the data is in HDFS and will work out the actual NameService name dynamically, so we do not even need to specify "hdfs://hdfsha" there. After fixing "hbase.rootdir" in the AMS configs, please kill and restart the AMS processes. Then check the AMS logs, especially the following ones, and if you notice any error please share the full stack trace.

/var/log/ambari-metrics-collector/hbase-ams-master-*.log

/var/log/ambari-metrics-collector/hbase-ams-regionserver-*.log

/var/log/ambari-metrics-collector/ambari-metrics-collector.log
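When checking these logs for errors, a small script can save some scrolling. The following is only a minimal sketch: it assumes the log4j-style line format seen in this thread (timestamp, then level), and the helper names `find_problems` and `scan_log_dir` are made up for illustration. It collects WARN/ERROR/FATAL entries, plus entries that mention an exception at any level, together with the stack-trace lines that follow them.

```python
import re
from pathlib import Path

# Matches the timestamp and level at the start of a log4j-style line,
# e.g. "2020-02-20 20:30:10,450 WARN ...".
LINE_RE = re.compile(
    r"^(\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2},\d{3})\s+(INFO|WARN|ERROR|FATAL)\b"
)


def find_problems(lines):
    """Return log entries that look like problems, with stack-trace lines attached."""
    problems = []
    current = None
    for raw in lines:
        line = raw.rstrip("\n")
        match = LINE_RE.match(line)
        if match:
            level = match.group(2)
            # Exceptions are sometimes logged at INFO level, so check the text too.
            if level in ("WARN", "ERROR", "FATAL") or "Exception" in line:
                current = [line]
                problems.append(current)
            else:
                current = None
        elif current is not None and line.strip():
            # Continuation line (for example "at org.apache...") of the last entry.
            current.append(line.strip())
    return ["\n".join(entry) for entry in problems]


def scan_log_dir(log_dir="/var/log/ambari-metrics-collector"):
    """Scan every *.log file in log_dir and map file name to problem entries."""
    results = {}
    for path in sorted(Path(log_dir).glob("*.log")):
        with path.open(errors="replace") as handle:
            found = find_problems(handle)
        if found:
            results[path.name] = found
    return results
```

Calling `scan_log_dir()` on the collector host returns a dictionary keyed by log file name; the entries it finds are the ones worth pasting into a reply in full.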


Created on 02-20-2020 06:53 PM - edited 02-20-2020 06:56 PM

Dear jay

do you mean to set it as

or

Created on 02-20-2020 07:28 PM - edited 02-20-2020 07:29 PM

Because the Metrics Service operation mode is already set to "distributed", Ambari will make AMS aware that it needs to find hbase.rootdir on HDFS.

The following should be fine:

hbase.rootdir=/user/ams/hbase
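The rule discussed in this thread (no port after a logical HA nameservice, and a bare path being acceptable in distributed mode) can be written down as a quick sanity check. This is a hedged sketch, not anything Ambari ships: `check_rootdir` is a hypothetical helper, and the set of nameservices has to be supplied by you from your own dfs.nameservices value.

```python
from urllib.parse import urlsplit


def check_rootdir(rootdir, nameservices):
    """Return a list of warnings for an hbase.rootdir value (distributed mode).

    nameservices: logical HDFS HA names, i.e. the dfs.nameservices value.
    """
    warnings = []
    parts = urlsplit(rootdir)
    if not parts.scheme:
        # A bare path such as /user/ams/hbase names no filesystem at all.
        if not rootdir.startswith("/"):
            warnings.append("expected an absolute path")
        return warnings
    if parts.scheme != "hdfs":
        warnings.append(f"unexpected scheme {parts.scheme!r}")
    if parts.hostname in nameservices and parts.port is not None:
        warnings.append(
            f"{parts.hostname!r} is a logical nameservice, so the port "
            f"{parts.port} should be dropped"
        )
    return warnings


# The value from the original question triggers the port warning.
print(check_rootdir("hdfs://hdfsha:8020/user/ams/hbase", {"hdfsha"}))
# The suggested value passes without warnings.
print(check_rootdir("/user/ams/hbase", {"hdfsha"}))
```

A host:port pair is still accepted when the host is a real NameNode hostname rather than a nameservice, which is why the nameservice names are passed in explicitly.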

Created on 02-20-2020 07:39 PM - edited 02-20-2020 07:44 PM

we configured the following

These are the logs (metrics are still not shown in Ambari, and we have the same alert on the Metrics service):

tail -15 ambari-metrics-collector.log

at org.apache.hadoop.hbase.ipc.CallRunner.run(CallRunner.java:112)

at org.apache.hadoop.hbase.ipc.RpcExecutor$Handler.run(RpcExecutor.java:187)

at org.apache.hadoop.hbase.ipc.RpcExecutor$Handler.run(RpcExecutor.java:167)

2020-02-21 03:33:49,284 INFO org.apache.hadoop.hbase.client.RpcRetryingCaller: Call exception, tries=15, retries=35, started=629725 ms ago, cancelled=false, msg=java.io.IOException: Table Namespace Manager not fully initialized, try again later

at org.apache.hadoop.hbase.master.HMaster.checkNamespaceManagerReady(HMaster.java:2693)

at org.apache.hadoop.hbase.master.HMaster.ensureNamespaceExists(HMaster.java:2915)

at org.apache.hadoop.hbase.master.HMaster.createTable(HMaster.java:1686)

at org.apache.hadoop.hbase.master.MasterRpcServices.createTable(MasterRpcServices.java:483)

at org.apache.hadoop.hbase.protobuf.generated.MasterProtos$MasterService$2.callBlockingMethod(MasterProtos.java:59846)

at org.apache.hadoop.hbase.ipc.RpcServer.call(RpcServer.java:2150)

at org.apache.hadoop.hbase.ipc.CallRunner.run(CallRunner.java:112)

at org.apache.hadoop.hbase.ipc.RpcExecutor$Handler.run(RpcExecutor.java:187)

at org.apache.hadoop.hbase.ipc.RpcExecutor$Handler.run(RpcExecutor.java:167)

tail -30 hbase-ams-regionserver-master.sys77.com.log

2020-02-21 03:23:23,912 INFO [HBase-Metrics2-1] timeline.HadoopTimelineMetricsSink: No suitable collector found.

2020-02-21 03:23:23,914 INFO [HBase-Metrics2-1] impl.MetricsSinkAdapter: Sink timeline started

2020-02-21 03:23:23,916 INFO [HBase-Metrics2-1] impl.MetricsSystemImpl: Scheduled snapshot period at 10 second(s).

2020-02-21 03:23:23,916 INFO [HBase-Metrics2-1] impl.MetricsSystemImpl: HBase metrics system started

2020-02-21 03:24:33,928 INFO [timeline] timeline.HadoopTimelineMetricsSink: No live collector to send metrics to. Metrics to be sent will be discarded. This message will be skipped for the next 20 times.

2020-02-21 03:28:12,357 INFO [LruBlockCacheStatsExecutor] hfile.LruBlockCache: totalSize=158.59 KB, freeSize=149.62 MB, max=149.78 MB, blockCount=0, accesses=0, hits=0, hitRatio=0, cachingAccesses=0, cachingHits=0, cachingHitsRatio=0,evictions=29, evicted=0, evictedPerRun=0.0

2020-02-21 03:28:13,923 INFO [timeline] timeline.HadoopTimelineMetricsSink: No live collector to send metrics to. Metrics to be sent will be discarded. This message will be skipped for the next 20 times.

2020-02-21 03:28:23,344 INFO [HBase-Metrics2-1] impl.MetricsSystemImpl: Stopping HBase metrics system...

2020-02-21 03:28:23,347 INFO [timeline] impl.MetricsSinkAdapter: timeline thread interrupted.

2020-02-21 03:28:23,349 INFO [HBase-Metrics2-1] impl.MetricsSystemImpl: HBase metrics system stopped.

2020-02-21 03:28:23,855 INFO [HBase-Metrics2-1] impl.MetricsConfig: loaded properties from hadoop-metrics2-hbase.properties

2020-02-21 03:28:23,906 INFO [HBase-Metrics2-1] timeline.HadoopTimelineMetricsSink: Initializing Timeline metrics sink.

2020-02-21 03:28:23,906 INFO [HBase-Metrics2-1] timeline.HadoopTimelineMetricsSink: Identified hostname = master.sys77.com, serviceName = ams-hbase

2020-02-21 03:28:23,909 INFO [HBase-Metrics2-1] timeline.HadoopTimelineMetricsSink: No suitable collector found.

2020-02-21 03:28:23,911 INFO [HBase-Metrics2-1] impl.MetricsSinkAdapter: Sink timeline started

2020-02-21 03:28:23,914 INFO [HBase-Metrics2-1] impl.MetricsSystemImpl: Scheduled snapshot period at 10 second(s).

2020-02-21 03:28:23,914 INFO [HBase-Metrics2-1] impl.MetricsSystemImpl: HBase metrics system started

2020-02-21 03:29:33,925 INFO [timeline] timeline.HadoopTimelineMetricsSink: No live collector to send metrics to. Metrics to be sent will be discarded. This message will be skipped for the next 20 times.

2020-02-21 03:33:12,356 INFO [LruBlockCacheStatsExecutor] hfile.LruBlockCache: totalSize=158.59 KB, freeSize=149.62 MB, max=149.78 MB, blockCount=0, accesses=0, hits=0, hitRatio=0, cachingAccesses=0, cachingHits=0, cachingHitsRatio=0,evictions=59, evicted=0, evictedPerRun=0.0

2020-02-21 03:33:23,344 INFO [HBase-Metrics2-1] impl.MetricsSystemImpl: Stopping HBase metrics system...

2020-02-21 03:33:23,346 INFO [timeline] impl.MetricsSinkAdapter: timeline thread interrupted.

2020-02-21 03:33:23,350 INFO [HBase-Metrics2-1] impl.MetricsSystemImpl: HBase metrics system stopped.

2020-02-21 03:33:23,853 INFO [HBase-Metrics2-1] impl.MetricsConfig: loaded properties from hadoop-metrics2-hbase.properties

2020-02-21 03:33:23,924 INFO [HBase-Metrics2-1] timeline.HadoopTimelineMetricsSink: Initializing Timeline metrics sink.

2020-02-21 03:33:23,925 INFO [HBase-Metrics2-1] timeline.HadoopTimelineMetricsSink: Identified hostname = master.sys77.com, serviceName = ams-hbase

2020-02-21 03:33:23,929 INFO [HBase-Metrics2-1] timeline.HadoopTimelineMetricsSink: No suitable collector found.

2020-02-21 03:33:23,932 INFO [HBase-Metrics2-1] impl.MetricsSinkAdapter: Sink timeline started

2020-02-21 03:33:23,936 INFO [HBase-Metrics2-1] impl.MetricsSystemImpl: Scheduled snapshot period at 10 second(s).

2020-02-21 03:33:23,936 INFO [HBase-Metrics2-1] impl.MetricsSystemImpl: HBase metrics system started

2020-02-21 03:34:33,948 INFO [timeline] timeline.HadoopTimelineMetricsSink: No live collector to send metrics to. Metrics to be sent will be discarded. This message will be skipped for the next 20 times.

[root@master ambari-metrics-collector]#

Created 02-20-2020 08:29 PM

The log shows the error "Table Namespace Manager not fully initialized":

2020-02-21 03:33:49,284 INFO org.apache.hadoop.hbase.client.RpcRetryingCaller: Call exception, tries=15, retries=35, started=629725 ms ago, cancelled=false, msg=java.io.IOException: Table Namespace Manager not fully initialized, try again later

at org.apache.hadoop.hbase.master.HMaster.checkNamespaceManagerReady(HMaster.java:2693)

at org.apache.hadoop.hbase.master.HMaster.ensureNamespaceExists(HMaster.java:2915)

at org.apache.hadoop.hbase.master.HMaster.createTable(HMaster.java:1686)

This indicates that the AMS HBase master might have an issue. When was AMS last running fine in distributed mode? Or has it failed to start ever since distributed mode was enabled?

Is this a Kerberos-enabled environment?

Can you please check the permissions on the HDFS directory (to verify that its ownership is set correctly to "ams:hdfs")?

# su - hdfs -c 'hdfs dfs -ls /user/ams'

# su - hdfs -c 'hdfs dfs -ls /user/ams/hbase'
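If the listing is long, the ownership check can be automated. The sketch below assumes the usual eight-column layout of `hdfs dfs -ls` output (permissions, replication, owner, group, size, date, time, path); `parse_ls_line` and `wrong_ownership` are hypothetical helper names, and the sample listing in the usage part is invented for illustration, not taken from this cluster.

```python
def parse_ls_line(line):
    """Split one `hdfs dfs -ls` entry into (owner, group, path), or None."""
    fields = line.split(None, 7)
    # Entry lines have 8 fields: permissions, replication, owner, group,
    # size, date, time, path. The "Found N items" header does not.
    if len(fields) != 8:
        return None
    return fields[2], fields[3], fields[7]


def wrong_ownership(ls_output, owner="ams", group="hdfs"):
    """Return the paths whose owner or group differs from the expected pair."""
    bad = []
    for line in ls_output.splitlines():
        entry = parse_ls_line(line)
        if entry and (entry[0], entry[1]) != (owner, group):
            bad.append(entry[2])
    return bad


# Invented sample output, for illustration only.
sample = """Found 2 items
drwxr-xr-x   - ams  hdfs          0 2020-02-20 20:09 /user/ams/hbase
drwxr-xr-x   - root hdfs          0 2020-02-20 20:09 /user/ams/tmp
"""
print(wrong_ownership(sample))
```

Any path returned by `wrong_ownership` is a candidate for a `chown` to ams:hdfs before restarting AMS.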

If you still face an issue, you can try changing the ZooKeeper znode for AMS and then restarting AMS afresh.

To change the "ZooKeeper Znode Parent" property of AMS, go to:

Ambari UI --> Ambari Metrics --> Configs --> "Advanced ams-hbase-site" --> "ZooKeeper Znode Parent"

Then change the znode value to something slightly different, for example from "/ams-hbase-unsecure" to "/ams-hbase-unsecure1", restart AMS, and let us know if you see any error.

Created on 02-20-2020 10:57 PM - edited 02-20-2020 10:58 PM

Dear Jay,

Yes, changing the znode to ams-hbase-unsecure1 is part of the solution. I also increased the heap size, since we also saw an error about the heap size.

I will build a new cluster with your correction, and I will let you know whether metrics work fine with

hbase.rootdir=/user/ams/hbase

Created on 02-21-2020 12:23 AM - edited 02-21-2020 12:29 AM

Dear Jay,

Regarding this Ambari cluster, I noticed that some of the metrics are shown and some are not, as in the following example.

From the logs we can see:

[root@master ambari-metrics-collector]# tail -10 hbase-ams-regionserver-master.sys77.com.log

2020-02-21 08:15:13,237 INFO [HBase-Metrics2-1] timeline.HadoopTimelineMetricsSink: Collector Uri: http://master.sys77.com:6188/ws/v1/timeline/metrics

2020-02-21 08:15:13,237 INFO [HBase-Metrics2-1] timeline.HadoopTimelineMetricsSink: Container Metrics Uri: http://master.sys77.com:6188/ws/v1/timeline/containermetrics

2020-02-21 08:15:13,240 INFO [HBase-Metrics2-1] impl.MetricsSinkAdapter: Sink timeline started

2020-02-21 08:15:13,243 INFO [HBase-Metrics2-1] impl.MetricsSystemImpl: Scheduled snapshot period at 10 second(s).

2020-02-21 08:15:13,243 INFO [HBase-Metrics2-1] impl.MetricsSystemImpl: HBase metrics system started

2020-02-21 08:15:48,203 INFO [MemStoreFlusher.1] regionserver.MemStoreFlusher: Flush of region METRIC_RECORD,kafka.server.BrokerTopicMetrics.BytesOutPerSec.15MinuteRate\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00,1582267405534.b665584cec3e6aa7a266b7fc94ef2e91. due to global heap pressure. Total Memstore size=227.6 M, Region memstore size=68.4 M

2020-02-21 08:15:48,205 INFO [MemStoreFlusher.1] regionserver.HRegion: Started memstore flush for METRIC_RECORD,kafka.server.BrokerTopicMetrics.BytesOutPerSec.15MinuteRate\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00,1582267405534.b665584cec3e6aa7a266b7fc94ef2e91., current region memstore size 68.37 MB, and 1/1 column families' memstores are being flushed.

2020-02-21 08:15:48,605 INFO [MemStoreFlusher.1] regionserver.DefaultStoreFlusher: Flushed, sequenceid=3159, memsize=68.4 M, hasBloomFilter=false, into tmp file hdfs://hdfsha/user/ams/hbase/data/default/METRIC_RECORD/b665584cec3e6aa7a266b7fc94ef2e91/.tmp/97bc3e982c0846e2864c2750526cca4f

2020-02-21 08:15:48,648 INFO [MemStoreFlusher.1] regionserver.HStore: Added hdfs://hdfsha/user/ams/hbase/data/default/METRIC_RECORD/b665584cec3e6aa7a266b7fc94ef2e91/0/97bc3e982c0846e2864c2750526cca4f, entries=267808, sequenceid=3159, filesize=3.9 M

2020-02-21 08:15:48,652 INFO [MemStoreFlusher.1] regionserver.HRegion: Finished memstore flush of ~68.37 MB/71693616, currentsize=0 B/0 for region METRIC_RECORD,kafka.server.BrokerTopicMetrics.BytesOutPerSec.15MinuteRate\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00,1582267405534.b665584cec3e6aa7a266b7fc94ef2e91. in 447ms, sequenceid=3159, compaction requested=false

[root@master ambari-metrics-collector]# tail -10 ambari-metrics-collector.log

2020-02-21 08:16:20,918 INFO TimelineClusterAggregatorMinute: End aggregation cycle @ Fri Feb 21 08:16:20 UTC 2020

2020-02-21 08:16:21,227 INFO TimelineClusterAggregatorSecond: Saving 8394 metric aggregates.

2020-02-21 08:16:21,372 INFO TimelineMetricHostAggregatorMinute: 5448 row(s) updated in aggregation.

2020-02-21 08:16:21,419 INFO TimelineMetricHostAggregatorMinute: End Downsampling cycle.

2020-02-21 08:16:21,440 INFO TimelineMetricHostAggregatorMinute: End Downsampling cycle.

2020-02-21 08:16:21,457 INFO TimelineMetricHostAggregatorMinute: End Downsampling cycle.

2020-02-21 08:16:21,479 INFO TimelineMetricHostAggregatorMinute: End Downsampling cycle.

2020-02-21 08:16:21,479 INFO TimelineMetricHostAggregatorMinute: Aggregated host metrics for METRIC_RECORD_MINUTE, with startTime = Fri Feb 21 08:10:00 UTC 2020, endTime = Fri Feb 21 08:15:00 UTC 2020

2020-02-21 08:16:21,479 INFO TimelineMetricHostAggregatorMinute: End aggregation cycle @ Fri Feb 21 08:16:21 UTC 2020

2020-02-21 08:16:21,752 INFO TimelineClusterAggregatorSecond: End aggregation cycle @ Fri Feb 21 08:16:21 UTC 2020

[root@master ambari-metrics-collector]# tail -10 hbase-ams-master-master.sys77.com.log

2020-02-21 07:45:19,565 INFO [RpcServer.FifoWFPBQ.default.handler=29,queue=2,port=61300] master.HMaster: Client=ams/null List Table Descriptor for the METRIC_AGGREGATE_DAILY table succeeds

2020-02-21 07:46:09,290 INFO [timeline] availability.MetricSinkWriteShardHostnameHashingStrategy: Calculated collector shard master.sys77.com based on hostname: master.sys77.com

2020-02-21 07:49:59,377 INFO [LruBlockCacheStatsExecutor] hfile.LruBlockCache: totalSize=159.41 KB, freeSize=150.39 MB, max=150.54 MB, blockCount=0, accesses=0, hits=0, hitRatio=0, cachingAccesses=0, cachingHits=0, cachingHitsRatio=0,evictions=29, evicted=0, evictedPerRun=0.0

2020-02-21 07:54:59,376 INFO [LruBlockCacheStatsExecutor] hfile.LruBlockCache: totalSize=159.41 KB, freeSize=150.39 MB, max=150.54 MB, blockCount=0, accesses=0, hits=0, hitRatio=0, cachingAccesses=0, cachingHits=0, cachingHitsRatio=0,evictions=59, evicted=0, evictedPerRun=0.0

2020-02-21 07:59:59,376 INFO [LruBlockCacheStatsExecutor] hfile.LruBlockCache: totalSize=159.41 KB, freeSize=150.39 MB, max=150.54 MB, blockCount=0, accesses=0, hits=0, hitRatio=0, cachingAccesses=0, cachingHits=0, cachingHitsRatio=0,evictions=89, evicted=0, evictedPerRun=0.0

2020-02-21 08:00:32,031 INFO [WALProcedureStoreSyncThread] wal.WALProcedureStore: Remove log: hdfs://hdfsha/user/ams/hbase/MasterProcWALs/state-00000000000000000055.log

2020-02-21 08:00:32,031 INFO [WALProcedureStoreSyncThread] wal.WALProcedureStore: Removed logs: [hdfs://hdfsha/user/ams/hbase/MasterProcWALs/state-00000000000000000056.log]

2020-02-21 08:04:59,376 INFO [LruBlockCacheStatsExecutor] hfile.LruBlockCache: totalSize=159.41 KB, freeSize=150.39 MB, max=150.54 MB, blockCount=0, accesses=0, hits=0, hitRatio=0, cachingAccesses=0, cachingHits=0, cachingHitsRatio=0,evictions=119, evicted=0, evictedPerRun=0.0

2020-02-21 08:09:59,376 INFO [LruBlockCacheStatsExecutor] hfile.LruBlockCache: totalSize=159.41 KB, freeSize=150.39 MB, max=150.54 MB, blockCount=0, accesses=0, hits=0, hitRatio=0, cachingAccesses=0, cachingHits=0, cachingHitsRatio=0,evictions=149, evicted=0, evictedPerRun=0.0

2020-02-21 08:14:59,376 INFO [LruBlockCacheStatsExecutor] hfile.LruBlockCache: totalSize=159.41 KB, freeSize=150.39 MB, max=150.54 MB, blockCount=0, accesses=0, hits=0, hitRatio=0, cachingAccesses=0, cachingHits=0, cachingHitsRatio=0,evictions=179, evicted=0, evictedPerRun=0.0

[root@master ambari-metrics-collector]#

We can see this in the log:

2020-02-21 08:15:48,648 INFO [MemStoreFlusher.1] regionserver.HStore: Added hdfs://hdfsha/user/ams/hbase/data/default/METRIC_RECORD/b665584cec3e6aa7a266b7fc94ef2e91/0/97bc3e982c0846e2864c2750526cca4f, entries=267808, sequenceid=3159, filesize=3.9 M

but we set hbase.rootdir=/user/ams/hbase
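A likely explanation, consistent with the earlier reply that AMS works out the NameService name dynamically: Hadoop qualifies a path that has no scheme against the cluster's default filesystem (fs.defaultFS), so the logs show the fully qualified form of the same directory. The sketch below only imitates that resolution for absolute paths. `qualify` is a hypothetical helper, and the fs.defaultFS value of `hdfs://hdfsha` is an assumption inferred from the log lines, since the thread never shows it.

```python
from urllib.parse import urlsplit


def qualify(path, default_fs):
    """Qualify an absolute path against a default filesystem URI."""
    if urlsplit(path).scheme:
        # Already fully qualified, e.g. hdfs://hdfsha/user/ams/hbase.
        return path
    return default_fs.rstrip("/") + "/" + path.lstrip("/")


# Assumed value of fs.defaultFS on this cluster; not shown in the thread.
print(qualify("/user/ams/hbase", "hdfs://hdfsha"))
# prints hdfs://hdfsha/user/ams/hbase
```

Under that assumption, `hbase.rootdir=/user/ams/hbase` and the `hdfs://hdfsha/user/ams/hbase/...` paths in the region server log refer to the same location, so the log output does not by itself indicate a misconfiguration.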