Fail to start all services except ZooKeeper

Labels: Apache Hadoop

Created on 02-23-2017 12:41 PM - edited 08-19-2019 03:27 AM


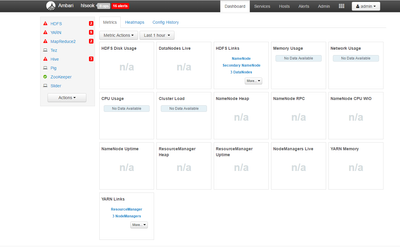

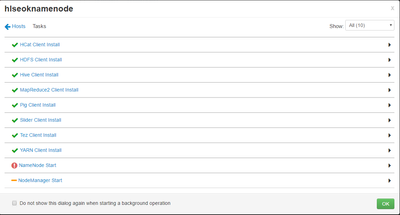

After installation, all services except ZooKeeper fail to start.

When I tried to start the NameNode, I received the following error message.

Traceback (most recent call last):

File "/var/lib/ambari-agent/cache/common-services/HDFS/2.1.0.2.0/package/scripts/namenode.py", line 420, in <module>

NameNode().execute()

File "/usr/lib/python2.6/site-packages/resource_management/libraries/script/script.py", line 280, in execute

method(env)

File "/var/lib/ambari-agent/cache/common-services/HDFS/2.1.0.2.0/package/scripts/namenode.py", line 101, in start

upgrade_suspended=params.upgrade_suspended, env=env)

File "/usr/lib/python2.6/site-packages/ambari_commons/os_family_impl.py", line 89, in thunk

return fn(*args, **kwargs)

File "/var/lib/ambari-agent/cache/common-services/HDFS/2.1.0.2.0/package/scripts/hdfs_namenode.py", line 156, in namenode

create_log_dir=True

File "/var/lib/ambari-agent/cache/common-services/HDFS/2.1.0.2.0/package/scripts/utils.py", line 269, in service

Execute(daemon_cmd, not_if=process_id_exists_command, environment=hadoop_env_exports)

File "/usr/lib/python2.6/site-packages/resource_management/core/base.py", line 155, in __init__

self.env.run()

File "/usr/lib/python2.6/site-packages/resource_management/core/environment.py", line 160, in run

self.run_action(resource, action)

File "/usr/lib/python2.6/site-packages/resource_management/core/environment.py", line 124, in run_action

provider_action()

File "/usr/lib/python2.6/site-packages/resource_management/core/providers/system.py", line 273, in action_run

tries=self.resource.tries, try_sleep=self.resource.try_sleep)

File "/usr/lib/python2.6/site-packages/resource_management/core/shell.py", line 70, in inner

result = function(command, **kwargs)

File "/usr/lib/python2.6/site-packages/resource_management/core/shell.py", line 92, in checked_call

tries=tries, try_sleep=try_sleep)

File "/usr/lib/python2.6/site-packages/resource_management/core/shell.py", line 140, in _call_wrapper

result = _call(command, **kwargs_copy)

File "/usr/lib/python2.6/site-packages/resource_management/core/shell.py", line 293, in _call

raise ExecutionFailed(err_msg, code, out, err)

resource_management.core.exceptions.ExecutionFailed: Execution of 'ambari-sudo.sh su hdfs -l -s /bin/bash -c 'ulimit -c unlimited ; /usr/hdp/current/hadoop-client/sbin/hadoop-daemon.sh --config /usr/hdp/current/hadoop-client/conf start namenode'' returned 1. starting namenode, logging to /var/log/hadoop/hdfs/hadoop-hdfs-namenode-HlseokNamenode.out

2017-02-23 07:50:02,497 - Using hadoop conf dir: /usr/hdp/current/hadoop-client/conf

2017-02-23 07:50:02,623 - Using hadoop conf dir: /usr/hdp/current/hadoop-client/conf

2017-02-23 07:50:02,624 - Group['hadoop'] {}

2017-02-23 07:50:02,625 - Group['users'] {}

2017-02-23 07:50:02,626 - User['hive'] {'gid': 'hadoop', 'fetch_nonlocal_groups': True, 'groups': [u'hadoop']}

2017-02-23 07:50:02,626 - User['zookeeper'] {'gid': 'hadoop', 'fetch_nonlocal_groups': True, 'groups': [u'hadoop']}

2017-02-23 07:50:02,627 - User['ambari-qa'] {'gid': 'hadoop', 'fetch_nonlocal_groups': True, 'groups': [u'users']}

2017-02-23 07:50:02,628 - User['tez'] {'gid': 'hadoop', 'fetch_nonlocal_groups': True, 'groups': [u'users']}

2017-02-23 07:50:02,628 - User['hdfs'] {'gid': 'hadoop', 'fetch_nonlocal_groups': True, 'groups': [u'hadoop']}

2017-02-23 07:50:02,629 - User['yarn'] {'gid': 'hadoop', 'fetch_nonlocal_groups': True, 'groups': [u'hadoop']}

2017-02-23 07:50:02,630 - User['hcat'] {'gid': 'hadoop', 'fetch_nonlocal_groups': True, 'groups': [u'hadoop']}

2017-02-23 07:50:02,630 - User['mapred'] {'gid': 'hadoop', 'fetch_nonlocal_groups': True, 'groups': [u'hadoop']}

2017-02-23 07:50:02,631 - File['/var/lib/ambari-agent/tmp/changeUid.sh'] {'content': StaticFile('changeToSecureUid.sh'), 'mode': 0555}

2017-02-23 07:50:02,633 - Execute['/var/lib/ambari-agent/tmp/changeUid.sh ambari-qa /tmp/hadoop-ambari-qa,/tmp/hsperfdata_ambari-qa,/home/ambari-qa,/tmp/ambari-qa,/tmp/sqoop-ambari-qa'] {'not_if': '(test $(id -u ambari-qa) -gt 1000) || (false)'}

2017-02-23 07:50:02,638 - Skipping Execute['/var/lib/ambari-agent/tmp/changeUid.sh ambari-qa /tmp/hadoop-ambari-qa,/tmp/hsperfdata_ambari-qa,/home/ambari-qa,/tmp/ambari-qa,/tmp/sqoop-ambari-qa'] due to not_if

2017-02-23 07:50:02,638 - Group['hdfs'] {}

2017-02-23 07:50:02,639 - User['hdfs'] {'fetch_nonlocal_groups': True, 'groups': [u'hadoop', u'hdfs']}

2017-02-23 07:50:02,639 - FS Type:

2017-02-23 07:50:02,639 - Directory['/etc/hadoop'] {'mode': 0755}

2017-02-23 07:50:02,653 - File['/usr/hdp/current/hadoop-client/conf/hadoop-env.sh'] {'content': InlineTemplate(...), 'owner': 'hdfs', 'group': 'hadoop'}

2017-02-23 07:50:02,654 - Directory['/var/lib/ambari-agent/tmp/hadoop_java_io_tmpdir'] {'owner': 'hdfs', 'group': 'hadoop', 'mode': 01777}

2017-02-23 07:50:02,667 - Execute[('setenforce', '0')] {'not_if': '(! which getenforce ) || (which getenforce && getenforce | grep -q Disabled)', 'sudo': True, 'only_if': 'test -f /selinux/enforce'}

2017-02-23 07:50:02,673 - Skipping Execute[('setenforce', '0')] due to not_if

2017-02-23 07:50:02,673 - Directory['/var/log/hadoop'] {'owner': 'root', 'create_parents': True, 'group': 'hadoop', 'mode': 0775, 'cd_access': 'a'}

2017-02-23 07:50:02,675 - Directory['/var/run/hadoop'] {'owner': 'root', 'create_parents': True, 'group': 'root', 'cd_access': 'a'}

2017-02-23 07:50:02,675 - Changing owner for /var/run/hadoop from 1018 to root

2017-02-23 07:50:02,675 - Changing group for /var/run/hadoop from 1004 to root

2017-02-23 07:50:02,676 - Directory['/tmp/hadoop-hdfs'] {'owner': 'hdfs', 'create_parents': True, 'cd_access': 'a'}

2017-02-23 07:50:02,680 - File['/usr/hdp/current/hadoop-client/conf/commons-logging.properties'] {'content': Template('commons-logging.properties.j2'), 'owner': 'hdfs'}

2017-02-23 07:50:02,684 - File['/usr/hdp/current/hadoop-client/conf/health_check'] {'content': Template('health_check.j2'), 'owner': 'hdfs'}

2017-02-23 07:50:02,685 - File['/usr/hdp/current/hadoop-client/conf/log4j.properties'] {'content': ..., 'owner': 'hdfs', 'group': 'hadoop', 'mode': 0644}

2017-02-23 07:50:02,696 - File['/usr/hdp/current/hadoop-client/conf/hadoop-metrics2.properties'] {'content': Template('hadoop-metrics2.properties.j2'), 'owner': 'hdfs', 'group': 'hadoop'}

2017-02-23 07:50:02,697 - File['/usr/hdp/current/hadoop-client/conf/task-log4j.properties'] {'content': StaticFile('task-log4j.properties'), 'mode': 0755}

2017-02-23 07:50:02,698 - File['/usr/hdp/current/hadoop-client/conf/configuration.xsl'] {'owner': 'hdfs', 'group': 'hadoop'}

2017-02-23 07:50:02,702 - File['/etc/hadoop/conf/topology_mappings.data'] {'owner': 'hdfs', 'content': Template('topology_mappings.data.j2'), 'only_if': 'test -d /etc/hadoop/conf', 'group': 'hadoop'}

2017-02-23 07:50:02,706 - File['/etc/hadoop/conf/topology_script.py'] {'content': StaticFile('topology_script.py'), 'only_if': 'test -d /etc/hadoop/conf', 'mode': 0755}

2017-02-23 07:50:02,868 - Using hadoop conf dir: /usr/hdp/current/hadoop-client/conf

2017-02-23 07:50:02,868 - Stack Feature Version Info: stack_version=2.5, version=None, current_cluster_version=None -> 2.5

2017-02-23 07:50:02,882 - Using hadoop conf dir: /usr/hdp/current/hadoop-client/conf

2017-02-23 07:50:02,891 - checked_call['rpm -q --queryformat '%{version}-%{release}' hdp-select | sed -e 's/\.el[0-9]//g''] {'stderr': -1}

2017-02-23 07:50:02,922 - checked_call returned (0, '2.5.3.0-37', '')

2017-02-23 07:50:02,925 - Directory['/etc/security/limits.d'] {'owner': 'root', 'create_parents': True, 'group': 'root'}

2017-02-23 07:50:02,931 - File['/etc/security/limits.d/hdfs.conf'] {'content': Template('hdfs.conf.j2'), 'owner': 'root', 'group': 'root', 'mode': 0644}

2017-02-23 07:50:02,931 - XmlConfig['hadoop-policy.xml'] {'owner': 'hdfs', 'group': 'hadoop', 'conf_dir': '/usr/hdp/current/hadoop-client/conf', 'configuration_attributes': {}, 'configurations': ...}

2017-02-23 07:50:02,940 - Generating config: /usr/hdp/current/hadoop-client/conf/hadoop-policy.xml

2017-02-23 07:50:02,940 - File['/usr/hdp/current/hadoop-client/conf/hadoop-policy.xml'] {'owner': 'hdfs', 'content': InlineTemplate(...), 'group': 'hadoop', 'mode': None, 'encoding': 'UTF-8'}

2017-02-23 07:50:02,949 - XmlConfig['ssl-client.xml'] {'owner': 'hdfs', 'group': 'hadoop', 'conf_dir': '/usr/hdp/current/hadoop-client/conf', 'configuration_attributes': {}, 'configurations': ...}

2017-02-23 07:50:02,956 - Generating config: /usr/hdp/current/hadoop-client/conf/ssl-client.xml

2017-02-23 07:50:02,957 - File['/usr/hdp/current/hadoop-client/conf/ssl-client.xml'] {'owner': 'hdfs', 'content': InlineTemplate(...), 'group': 'hadoop', 'mode': None, 'encoding': 'UTF-8'}

2017-02-23 07:50:02,962 - Directory['/usr/hdp/current/hadoop-client/conf/secure'] {'owner': 'root', 'create_parents': True, 'group': 'hadoop', 'cd_access': 'a'}

2017-02-23 07:50:02,963 - XmlConfig['ssl-client.xml'] {'owner': 'hdfs', 'group': 'hadoop', 'conf_dir': '/usr/hdp/current/hadoop-client/conf/secure', 'configuration_attributes': {}, 'configurations': ...}

2017-02-23 07:50:02,970 - Generating config: /usr/hdp/current/hadoop-client/conf/secure/ssl-client.xml

2017-02-23 07:50:02,971 - File['/usr/hdp/current/hadoop-client/conf/secure/ssl-client.xml'] {'owner': 'hdfs', 'content': InlineTemplate(...), 'group': 'hadoop', 'mode': None, 'encoding': 'UTF-8'}

2017-02-23 07:50:02,977 - XmlConfig['ssl-server.xml'] {'owner': 'hdfs', 'group': 'hadoop', 'conf_dir': '/usr/hdp/current/hadoop-client/conf', 'configuration_attributes': {}, 'configurations': ...}

2017-02-23 07:50:02,984 - Generating config: /usr/hdp/current/hadoop-client/conf/ssl-server.xml

2017-02-23 07:50:02,985 - File['/usr/hdp/current/hadoop-client/conf/ssl-server.xml'] {'owner': 'hdfs', 'content': InlineTemplate(...), 'group': 'hadoop', 'mode': None, 'encoding': 'UTF-8'}

2017-02-23 07:50:02,991 - XmlConfig['hdfs-site.xml'] {'owner': 'hdfs', 'group': 'hadoop', 'conf_dir': '/usr/hdp/current/hadoop-client/conf', 'configuration_attributes': {u'final': {u'dfs.support.append': u'true', u'dfs.datanode.data.dir': u'true', u'dfs.namenode.http-address': u'true', u'dfs.namenode.name.dir': u'true', u'dfs.webhdfs.enabled': u'true', u'dfs.datanode.failed.volumes.tolerated': u'true'}}, 'configurations': ...}

2017-02-23 07:50:02,998 - Generating config: /usr/hdp/current/hadoop-client/conf/hdfs-site.xml

2017-02-23 07:50:02,998 - File['/usr/hdp/current/hadoop-client/conf/hdfs-site.xml'] {'owner': 'hdfs', 'content': InlineTemplate(...), 'group': 'hadoop', 'mode': None, 'encoding': 'UTF-8'}

2017-02-23 07:50:03,038 - XmlConfig['core-site.xml'] {'group': 'hadoop', 'conf_dir': '/usr/hdp/current/hadoop-client/conf', 'mode': 0644, 'configuration_attributes': {u'final': {u'fs.defaultFS': u'true'}}, 'owner': 'hdfs', 'configurations': ...}

2017-02-23 07:50:03,045 - Generating config: /usr/hdp/current/hadoop-client/conf/core-site.xml

2017-02-23 07:50:03,045 - File['/usr/hdp/current/hadoop-client/conf/core-site.xml'] {'owner': 'hdfs', 'content': InlineTemplate(...), 'group': 'hadoop', 'mode': 0644, 'encoding': 'UTF-8'}

2017-02-23 07:50:03,063 - File['/usr/hdp/current/hadoop-client/conf/slaves'] {'content': Template('slaves.j2'), 'owner': 'hdfs'}

2017-02-23 07:50:03,066 - Directory['/hadoop/hdfs/namenode'] {'owner': 'hdfs', 'group': 'hadoop', 'create_parents': True, 'mode': 0755, 'cd_access': 'a'}

2017-02-23 07:50:03,068 - Called service start with upgrade_type: None

2017-02-23 07:50:03,068 - Ranger admin not installed

2017-02-23 07:50:03,068 - /hadoop/hdfs/namenode/namenode-formatted/ exists. Namenode DFS already formatted

2017-02-23 07:50:03,069 - Directory['/hadoop/hdfs/namenode/namenode-formatted/'] {'create_parents': True}

2017-02-23 07:50:03,070 - File['/etc/hadoop/conf/dfs.exclude'] {'owner': 'hdfs', 'content': Template('exclude_hosts_list.j2'), 'group': 'hadoop'}

2017-02-23 07:50:03,070 - Options for start command are:

2017-02-23 07:50:03,071 - Directory['/var/run/hadoop'] {'owner': 'hdfs', 'group': 'hadoop', 'mode': 0755}

2017-02-23 07:50:03,071 - Changing owner for /var/run/hadoop from 0 to hdfs

2017-02-23 07:50:03,071 - Changing group for /var/run/hadoop from 0 to hadoop

2017-02-23 07:50:03,071 - Directory['/var/run/hadoop/hdfs'] {'owner': 'hdfs', 'group': 'hadoop', 'create_parents': True}

2017-02-23 07:50:03,072 - Directory['/var/log/hadoop/hdfs'] {'owner': 'hdfs', 'group': 'hadoop', 'create_parents': True}

2017-02-23 07:50:03,072 - File['/var/run/hadoop/hdfs/hadoop-hdfs-namenode.pid'] {'action': ['delete'], 'not_if': 'ambari-sudo.sh -H -E test -f /var/run/hadoop/hdfs/hadoop-hdfs-namenode.pid && ambari-sudo.sh -H -E pgrep -F /var/run/hadoop/hdfs/hadoop-hdfs-namenode.pid'}

2017-02-23 07:50:03,089 - Deleting File['/var/run/hadoop/hdfs/hadoop-hdfs-namenode.pid']

2017-02-23 07:50:03,089 - Execute['ambari-sudo.sh su hdfs -l -s /bin/bash -c 'ulimit -c unlimited ; /usr/hdp/current/hadoop-client/sbin/hadoop-daemon.sh --config /usr/hdp/current/hadoop-client/conf start namenode''] {'environment': {'HADOOP_LIBEXEC_DIR': '/usr/hdp/current/hadoop-client/libexec'}, 'not_if': 'ambari-sudo.sh -H -E test -f /var/run/hadoop/hdfs/hadoop-hdfs-namenode.pid && ambari-sudo.sh -H -E pgrep -F /var/run/hadoop/hdfs/hadoop-hdfs-namenode.pid'}

2017-02-23 07:50:07,295 - Execute['find /var/log/hadoop/hdfs -maxdepth 1 -type f -name '*' -exec echo '==> {} <==' \; -exec tail -n 40 {} \;'] {'logoutput': True, 'ignore_failures': True, 'user': 'hdfs'}

==> /var/log/hadoop/hdfs/gc.log-201702160906 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(3700120k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-16T09:06:51.855+0000: 1.313: [GC (Allocation Failure) 2017-02-16T09:06:51.855+0000: 1.313: [ParNew: 104960K->11839K(118016K), 0.0679745 secs] 104960K->11839K(1035520K), 0.0680739 secs] [Times: user=0.13 sys=0.00, real=0.07 secs]

Heap

par new generation total 118016K, used 28662K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 16% used [0x00000000c0000000, 0x00000000c106de08, 0x00000000c6680000)

from space 13056K, 90% used [0x00000000c7340000, 0x00000000c7ecfce8, 0x00000000c8000000)

to space 13056K, 0% used [0x00000000c6680000, 0x00000000c6680000, 0x00000000c7340000)

concurrent mark-sweep generation total 917504K, used 0K [0x00000000c8000000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 16983K, capacity 17190K, committed 17536K, reserved 1064960K

class space used 2073K, capacity 2161K, committed 2176K, reserved 1048576K

==> /var/log/hadoop/hdfs/hdfs-audit.log <==

==> /var/log/hadoop/hdfs/hadoop-hdfs-namenode-HlseokNamenode.log <==

at org.apache.hadoop.hdfs.server.namenode.NameNode.<init>(NameNode.java:976)

at org.apache.hadoop.hdfs.server.namenode.NameNode.createNameNode(NameNode.java:1686)

at org.apache.hadoop.hdfs.server.namenode.NameNode.main(NameNode.java:1754)

Caused by: java.net.BindException: Cannot assign requested address

at sun.nio.ch.Net.bind0(Native Method)

at sun.nio.ch.Net.bind(Net.java:433)

at sun.nio.ch.Net.bind(Net.java:425)

at sun.nio.ch.ServerSocketChannelImpl.bind(ServerSocketChannelImpl.java:223)

at sun.nio.ch.ServerSocketAdaptor.bind(ServerSocketAdaptor.java:74)

at org.mortbay.jetty.nio.SelectChannelConnector.open(SelectChannelConnector.java:216)

at org.apache.hadoop.http.HttpServer2.openListeners(HttpServer2.java:958)

... 8 more

2017-02-23 07:50:04,652 INFO impl.MetricsSystemImpl (MetricsSystemImpl.java:stop(211)) - Stopping NameNode metrics system...

2017-02-23 07:50:04,653 INFO impl.MetricsSystemImpl (MetricsSystemImpl.java:stop(217)) - NameNode metrics system stopped.

2017-02-23 07:50:04,653 INFO impl.MetricsSystemImpl (MetricsSystemImpl.java:shutdown(606)) - NameNode metrics system shutdown complete.

2017-02-23 07:50:04,653 ERROR namenode.NameNode (NameNode.java:main(1759)) - Failed to start namenode.

java.net.BindException: Port in use: HlseokNamenode:50070

at org.apache.hadoop.http.HttpServer2.openListeners(HttpServer2.java:963)

at org.apache.hadoop.http.HttpServer2.start(HttpServer2.java:900)

at org.apache.hadoop.hdfs.server.namenode.NameNodeHttpServer.start(NameNodeHttpServer.java:170)

at org.apache.hadoop.hdfs.server.namenode.NameNode.startHttpServer(NameNode.java:933)

at org.apache.hadoop.hdfs.server.namenode.NameNode.initialize(NameNode.java:746)

at org.apache.hadoop.hdfs.server.namenode.NameNode.<init>(NameNode.java:992)

at org.apache.hadoop.hdfs.server.namenode.NameNode.<init>(NameNode.java:976)

at org.apache.hadoop.hdfs.server.namenode.NameNode.createNameNode(NameNode.java:1686)

at org.apache.hadoop.hdfs.server.namenode.NameNode.main(NameNode.java:1754)

Caused by: java.net.BindException: Cannot assign requested address

at sun.nio.ch.Net.bind0(Native Method)

at sun.nio.ch.Net.bind(Net.java:433)

at sun.nio.ch.Net.bind(Net.java:425)

at sun.nio.ch.ServerSocketChannelImpl.bind(ServerSocketChannelImpl.java:223)

at sun.nio.ch.ServerSocketAdaptor.bind(ServerSocketAdaptor.java:74)

at org.mortbay.jetty.nio.SelectChannelConnector.open(SelectChannelConnector.java:216)

at org.apache.hadoop.http.HttpServer2.openListeners(HttpServer2.java:958)

... 8 more

2017-02-23 07:50:04,654 INFO util.ExitUtil (ExitUtil.java:terminate(124)) - Exiting with status 1

2017-02-23 07:50:04,656 INFO namenode.NameNode (LogAdapter.java:info(47)) - SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at HlseokNamenode/40.115.250.160

************************************************************/
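
The `Cannot assign requested address` cause in the NameNode trace above generally means that the IP address the hostname resolves to is not configured on any local network interface, so port 50070 may not actually be occupied by another process. A minimal sketch for checking this on the NameNode host, assuming Python is available there; the function name `can_bind` is hypothetical, and the hostname and port in the usage note are the ones from the log:

```python
import errno
import socket


def can_bind(host, port=0):
    """Try to bind a TCP socket to host:port and report what happened.

    Returns a (ok, detail) tuple. Port 0 lets the OS pick a free port,
    which tests only whether the address itself is bindable.
    """
    try:
        addr = socket.gethostbyname(host)  # resolve the same way a bind by name would
    except socket.gaierror as exc:
        return False, "cannot resolve %s: %s" % (host, exc)

    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        sock.bind((addr, port))
    except socket.error as exc:
        if exc.errno == errno.EADDRNOTAVAIL:
            # Same condition the JVM reports as "Cannot assign requested address".
            return False, "%s is not assigned to a local interface" % addr
        return False, "bind to %s:%d failed: %s" % (addr, port, exc)
    finally:
        sock.close()
    return True, "%s is bindable" % addr


if __name__ == "__main__":
    print(can_bind("127.0.0.1"))
```

Running `can_bind("HlseokNamenode", 50070)` on the NameNode host would show whether the resolved address can be bound at all. If it reports that the address is not assigned to a local interface, comparing the resolved address with the output of `ip addr` and the entries in `/etc/hosts` is a reasonable next step.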

==> /var/log/hadoop/hdfs/SecurityAuth.audit <==

==> /var/log/hadoop/hdfs/gc.log-201702170121 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(3553896k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-17T01:21:37.155+0000: 1.422: [GC (Allocation Failure) 2017-02-17T01:21:37.155+0000: 1.422: [ParNew: 104960K->11843K(118016K), 0.0464303 secs] 104960K->11843K(1035520K), 0.0465126 secs] [Times: user=0.09 sys=0.01, real=0.05 secs]

Heap

par new generation total 118016K, used 27266K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 14% used [0x00000000c0000000, 0x00000000c0f0fbf0, 0x00000000c6680000)

from space 13056K, 90% used [0x00000000c7340000, 0x00000000c7ed0e98, 0x00000000c8000000)

to space 13056K, 0% used [0x00000000c6680000, 0x00000000c6680000, 0x00000000c7340000)

concurrent mark-sweep generation total 917504K, used 0K [0x00000000c8000000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 16774K, capacity 16998K, committed 17280K, reserved 1064960K

class space used 2048K, capacity 2161K, committed 2176K, reserved 1048576K

==> /var/log/hadoop/hdfs/gc.log-201702170138 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(3545636k free), swap 0k(0k free)

CommandLine flags: -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=209715200 -XX:MaxTenuringThreshold=6 -XX:NewSize=209715200 -XX:OldPLABSize=16 -XX:ParallelGCThreads=4 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-17T01:38:53.247+0000: 1.513: [GC (Allocation Failure) 2017-02-17T01:38:53.247+0000: 1.513: [ParNew: 163840K->13769K(184320K), 0.0340374 secs] 163840K->13769K(1028096K), 0.0341287 secs] [Times: user=0.02 sys=0.00, real=0.03 secs]

2017-02-17T01:38:55.304+0000: 3.570: [GC (CMS Initial Mark) [1 CMS-initial-mark: 0K(843776K)] 93066K(1028096K), 0.0114980 secs] [Times: user=0.02 sys=0.00, real=0.01 secs]

2017-02-17T01:38:55.316+0000: 3.581: [CMS-concurrent-mark-start]

2017-02-17T01:38:55.321+0000: 3.586: [CMS-concurrent-mark: 0.005/0.005 secs] [Times: user=0.01 sys=0.00, real=0.01 secs]

2017-02-17T01:38:55.321+0000: 3.586: [CMS-concurrent-preclean-start]

2017-02-17T01:38:55.322+0000: 3.588: [CMS-concurrent-preclean: 0.001/0.001 secs] [Times: user=0.00 sys=0.00, real=0.00 secs]

2017-02-17T01:38:55.322+0000: 3.588: [CMS-concurrent-abortable-preclean-start]

CMS: abort preclean due to time 2017-02-17T01:39:00.335+0000: 8.601: [CMS-concurrent-abortable-preclean: 1.472/5.013 secs] [Times: user=1.36 sys=0.00, real=5.01 secs]

2017-02-17T01:39:00.336+0000: 8.601: [GC (CMS Final Remark) [YG occupancy: 93066 K (184320 K)]2017-02-17T01:39:00.336+0000: 8.601: [Rescan (parallel) , 0.0588942 secs]2017-02-17T01:39:00.394+0000: 8.660: [weak refs processing, 0.0000218 secs]2017-02-17T01:39:00.394+0000: 8.660: [class unloading, 0.0027711 secs]2017-02-17T01:39:00.397+0000: 8.663: [scrub symbol table, 0.0023912 secs]2017-02-17T01:39:00.400+0000: 8.665: [scrub string table, 0.0004538 secs][1 CMS-remark: 0K(843776K)] 93066K(1028096K), 0.0650476 secs] [Times: user=0.05 sys=0.00, real=0.07 secs]

2017-02-17T01:39:00.401+0000: 8.666: [CMS-concurrent-sweep-start]

2017-02-17T01:39:00.401+0000: 8.666: [CMS-concurrent-sweep: 0.000/0.000 secs] [Times: user=0.00 sys=0.00, real=0.00 secs]

2017-02-17T01:39:00.401+0000: 8.666: [CMS-concurrent-reset-start]

2017-02-17T01:39:00.404+0000: 8.670: [CMS-concurrent-reset: 0.004/0.004 secs] [Times: user=0.00 sys=0.00, real=0.00 secs]

2017-02-17T02:09:24.118+0000: 1832.383: [GC (Allocation Failure) 2017-02-17T02:09:24.118+0000: 1832.383: [ParNew: 177609K->12432K(184320K), 0.0516793 secs] 177609K->17346K(1028096K), 0.0517730 secs] [Times: user=0.06 sys=0.00, real=0.05 secs]

2017-02-17T03:58:36.815+0000: 8385.080: [GC (Allocation Failure) 2017-02-17T03:58:36.815+0000: 8385.080: [ParNew: 176272K->6162K(184320K), 0.0165474 secs] 181186K->11076K(1028096K), 0.0166471 secs] [Times: user=0.03 sys=0.00, real=0.02 secs]

2017-02-17T06:02:42.557+0000: 15830.822: [GC (Allocation Failure) 2017-02-17T06:02:42.557+0000: 15830.822: [ParNew: 170002K->4971K(184320K), 0.0175281 secs] 174916K->9885K(1028096K), 0.0175974 secs] [Times: user=0.02 sys=0.00, real=0.02 secs]

2017-02-17T07:58:42.559+0000: 22790.825: [GC (Allocation Failure) 2017-02-17T07:58:42.559+0000: 22790.825: [ParNew: 168811K->5726K(184320K), 0.0196550 secs] 173725K->10641K(1028096K), 0.0197209 secs] [Times: user=0.02 sys=0.00, real=0.02 secs]

Heap

par new generation total 184320K, used 90993K [0x00000000c0000000, 0x00000000cc800000, 0x00000000cc800000)

eden space 163840K, 52% used [0x00000000c0000000, 0x00000000c53449e8, 0x00000000ca000000)

from space 20480K, 27% used [0x00000000cb400000, 0x00000000cb997b80, 0x00000000cc800000)

to space 20480K, 0% used [0x00000000ca000000, 0x00000000ca000000, 0x00000000cb400000)

concurrent mark-sweep generation total 843776K, used 4914K [0x00000000cc800000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 29266K, capacity 29960K, committed 30180K, reserved 1075200K

class space used 3310K, capacity 3481K, committed 3556K, reserved 1048576K

==> /var/log/hadoop/hdfs/hadoop-hdfs-datanode-HlseokNamenode.log <==

2017-02-23 07:49:44,179 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 32 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:45,180 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 33 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:46,181 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 34 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:47,184 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 35 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:48,186 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 36 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:49,188 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 37 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:50,190 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 38 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:51,194 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 39 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:52,205 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 40 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:53,206 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 41 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:54,228 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 42 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:55,238 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 43 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:56,240 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 44 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:57,241 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 45 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:58,242 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 46 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:49:59,243 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 47 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:50:00,244 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 48 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:50:01,245 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 49 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)

2017-02-23 07:50:01,246 WARN ipc.Client (Client.java:handleConnectionFailure(886)) - Failed to connect to server: HlseokNamenode/40.115.250.160:8020: retries get failed due to exceeded maximum allowed retries number: 50

java.net.ConnectException: Connection refused

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:495)

at org.apache.hadoop.ipc.Client$Connection.setupConnection(Client.java:650)

at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:745)

at org.apache.hadoop.ipc.Client$Connection.access$3200(Client.java:397)

at org.apache.hadoop.ipc.Client.getConnection(Client.java:1618)

at org.apache.hadoop.ipc.Client.call(Client.java:1449)

at org.apache.hadoop.ipc.Client.call(Client.java:1396)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:233)

at com.sun.proxy.$Proxy15.versionRequest(Unknown Source)

at org.apache.hadoop.hdfs.protocolPB.DatanodeProtocolClientSideTranslatorPB.versionRequest(DatanodeProtocolClientSideTranslatorPB.java:275)

at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.retrieveNamespaceInfo(BPServiceActor.java:216)

at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.connectToNNAndHandshake(BPServiceActor.java:262)

at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.run(BPServiceActor.java:740)

at java.lang.Thread.run(Thread.java:745)

2017-02-23 07:50:01,247 WARN datanode.DataNode (BPServiceActor.java:retrieveNamespaceInfo(222)) - Problem connecting to server: HlseokNamenode/40.115.250.160:8020

2017-02-23 07:50:07,248 INFO ipc.Client (Client.java:handleConnectionFailure(904)) - Retrying connect to server: HlseokNamenode/40.115.250.160:8020. Already tried 0 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=50, sleepTime=1000 MILLISECONDS)
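The DataNode log above shows `Connection refused` on every attempt to reach HlseokNamenode/40.115.250.160:8020. That error normally means the host was reachable but nothing accepted the connection on that port, which fits the NameNode process not running. A minimal sketch for confirming this from the DataNode host, assuming Python is available there; the helper name `can_connect` is made up for illustration, and the host name and port are copied from the log lines:

```python
import socket


def can_connect(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the name and attempts a TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts, and name-resolution failures.
        return False


if __name__ == "__main__":
    # Host name and port taken from the DataNode log above.
    print(can_connect("HlseokNamenode", 8020))
```

If this prints `False` while the NameNode is supposed to be up, the NameNode's own log is the next place to look. If it prints `True`, the port is accepting connections at that moment and the refusals in the log came from an earlier state.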

==> /var/log/hadoop/hdfs/hadoop-hdfs-datanode-HlseokNamenode.out.1 <==

ulimit -a for user hdfs

core file size (blocks, -c) unlimited

data seg size (kbytes, -d) unlimited

scheduling priority (-e) 0

file size (blocks, -f) unlimited

pending signals (-i) 56021

max locked memory (kbytes, -l) 64

max memory size (kbytes, -m) unlimited

open files (-n) 128000

pipe size (512 bytes, -p) 8

POSIX message queues (bytes, -q) 819200

real-time priority (-r) 0

stack size (kbytes, -s) 8192

cpu time (seconds, -t) unlimited

max user processes (-u) 65536

virtual memory (kbytes, -v) unlimited

file locks (-x) unlimited

==> /var/log/hadoop/hdfs/gc.log-201702170139 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(3289068k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-17T01:39:01.840+0000: 1.360: [GC (Allocation Failure) 2017-02-17T01:39:01.840+0000: 1.360: [ParNew: 104960K->11671K(118016K), 0.0355196 secs] 104960K->11671K(1035520K), 0.0356193 secs] [Times: user=0.05 sys=0.00, real=0.03 secs]

Heap

par new generation total 118016K, used 26913K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 14% used [0x00000000c0000000, 0x00000000c0ee2938, 0x00000000c6680000)

from space 13056K, 89% used [0x00000000c7340000, 0x00000000c7ea5d38, 0x00000000c8000000)

to space 13056K, 0% used [0x00000000c6680000, 0x00000000c6680000, 0x00000000c7340000)

concurrent mark-sweep generation total 917504K, used 0K [0x00000000c8000000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 16757K, capacity 16998K, committed 17280K, reserved 1064960K

class space used 2046K, capacity 2161K, committed 2176K, reserved 1048576K

==> /var/log/hadoop/hdfs/hadoop-hdfs-datanode-HlseokNamenode.out <==

ulimit -a for user hdfs

core file size (blocks, -c) unlimited

data seg size (kbytes, -d) unlimited

scheduling priority (-e) 0

file size (blocks, -f) unlimited

pending signals (-i) 56021

max locked memory (kbytes, -l) 64

max memory size (kbytes, -m) unlimited

open files (-n) 128000

pipe size (512 bytes, -p) 8

POSIX message queues (bytes, -q) 819200

real-time priority (-r) 0

stack size (kbytes, -s) 8192

cpu time (seconds, -t) unlimited

max user processes (-u) 65536

virtual memory (kbytes, -v) unlimited

file locks (-x) unlimited

==> /var/log/hadoop/hdfs/gc.log-201702230522 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(11905220k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-23T05:22:21.024+0000: 1.387: [GC (Allocation Failure) 2017-02-23T05:22:21.024+0000: 1.387: [ParNew: 104960K->11960K(118016K), 0.0148542 secs] 104960K->11960K(1035520K), 0.0149173 secs] [Times: user=0.02 sys=0.00, real=0.02 secs]

Heap

par new generation total 118016K, used 18705K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 6% used [0x00000000c0000000, 0x00000000c0696148, 0x00000000c6680000)

from space 13056K, 91% us2017-02-23T05:22:20.452+0000: 5.827: [CMS-concurrent-abortable-preclean-start]

CMS: abort preclean due to time 2017-02-23T05:22:25.465+0000: 10.841: [CMS-concurrent-abortable-preclean: 1.591/5.013 secs] [Times: user=1.54 sys=0.00, real=5.01 secs]

2017-02-23T05:22:25.465+0000: 10.841: [GC (CMS Final Remark) [YG occupancy: 92507 K (184320 K)]2017-02-23T05:22:25.465+0000: 10.841: [Rescan (parallel) , 0.0177390 secs]2017-02-23T05:22:25.483+0000: 10.859: [weak refs processing, 0.0000331 secs]2017-02-23T05:22:25.483+0000: 10.859: [class unloading, 0.0035134 secs]2017-02-23T05:22:25.487+0000: 10.862: [scrub symbol table, 0.0030139 secs]2017-02-23T05:22:25.490+0000: 10.865: [scrub string table, 0.0005994 secs][1 CMS-remark: 0K(843776K)] 92507K(1028096K), 0.0255382 secs] [Times: user=0.04 sys=0.00, real=0.03 secs]

2017-02-23T05:22:25.491+0000: 10.867: [CMS-concurrent-sweep-start]

2017-02-23T05:22:25.491+0000: 10.867: [CMS-concurrent-sweep: 0.000/0.000 secs] [Times: user=0.00 sys=0.00, real=0.00 secs]

2017-02-23T05:22:25.491+0000: 10.867: [CMS-concurrent-reset-start]

2017-02-23T05:22:25.496+0000: 10.872: [CMS-concurrent-reset: 0.005/0.005 secs] [Times: user=0.00 sys=0.00, real=0.01 secs]

2017-02-23T05:57:56.438+0000: 2141.814: [GC (Allocation Failure) 2017-02-23T05:57:56.438+0000: 2141.814: [ParNew: 177603K->11182K(184320K), 0.0653415 secs] 177603K->16311K(1028096K), 0.0654428 secs] [Times: user=0.07 sys=0.01, real=0.06 secs]

==> /var/log/hadoop/hdfs/hadoop-hdfs-namenode-HlseokNamenode.out.3 <==

ulimit -a for user hdfs

core file size (blocks, -c) unlimited

data seg size (kbytes, -d) unlimited

scheduling priority (-e) 0

file size (blocks, -f) unlimited

pending signals (-i) 56021

max locked memory (kbytes, -l) 64

max memory size (kbytes, -m) unlimited

open files (-n) 128000

pipe size (512 bytes, -p) 8

POSIX message queues (bytes, -q) 819200

real-time priority (-r) 0

stack size (kbytes, -s) 8192

cpu time (seconds, -t) unlimited

max user processes (-u) 65536

virtual memory (kbytes, -v) unlimited

file locks (-x) unlimited

==> /var/log/hadoop/hdfs/gc.log-201702170140 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(3283372k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-17T01:40:14.644+0000: 1.249: [GC (Allocation Failure) 2017-02-17T01:40:14.644+0000: 1.249: [ParNew: 104960K->11773K(118016K), 0.0375956 secs] 104960K->11773K(1035520K), 0.0376742 secs] [Times: user=0.04 sys=0.00, real=0.04 secs]

Heap

par new generation total 118016K, used 28171K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 15% used [0x00000000c0000000, 0x00000000c10037e8, 0x00000000c6680000)

from space 13056K, 90% used [0x00000000c7340000, 0x00000000c7ebf490, 0x00000000c8000000)

to space 13056K, 0% used [0x00000000c6680000, 0x00000000c6680000, 0x00000000c7340000)

concurrent mark-sweep generation total 917504K, used 0K [0x00000000c8000000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 16905K, capacity 17110K, committed 17280K, reserved 1064960K

class space used 2058K, capacity 2161K, committed 2176K, reserved 1048576K

==> /var/log/hadoop/hdfs/gc.log-201702220922 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(10780604k free), swap 0k(0k free)

CommandLine flags: -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=209715200 -XX:MaxTenuringThreshold=6 -XX:NewSize=209715200 -XX:OldPLABSize=16 -XX:ParallelGCThreads=4 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-22T09:22:46.119+0000: 1.671: [GC (Allocation Failure) 2017-02-22T09:22:46.119+0000: 1.671: [ParNew: 163840K->13785K(184320K), 0.0183364 secs] 163840K->13785K(1028096K), 0.0184286 secs] [Times: user=0.03 sys=0.00, real=0.01 secs]

2017-02-22T09:22:48.137+0000: 3.689: [GC (CMS Initial Mark) [1 CMS-initial-mark: 0K(843776K)] 94304K(1028096K), 0.0136848 secs] [Times: user=0.03 sys=0.00, real=0.02 secs]

2017-02-22T09:22:48.150+0000: 3.703: [CMS-concurrent-mark-start]

2017-02-22T09:22:48.157+0000: 3.709: [CMS-concurrent-mark: 0.006/0.006 secs] [Times: user=0.00 sys=0.00, real=0.00 secs]

2017-02-22T09:22:48.157+0000: 3.709: [CMS-concurrent-preclean-start]

2017-02-22T09:22:48.158+0000: 3.711: [CMS-concurrent-preclean: 0.002/0.002 secs] [Times: user=0.00 sys=0.00, real=0.01 secs]

2017-02-22T09:22:48.158+0000: 3.711: [CMS-concurrent-abortable-preclean-start]

CMS: abort preclean due to time 2017-02-22T09:22:53.197+0000: 8.749: [CMS-concurrent-abortable-preclean: 1.567/5.038 secs] [Times: user=1.57 sys=0.00, real=5.03 secs]

2017-02-22T09:22:53.197+0000: 8.749: [GC (CMS Final Remark) [YG occupancy: 94304 K (184320 K)]2017-02-22T09:22:53.197+0000: 8.749: [Rescan (parallel) , 0.0212470 secs]2017-02-22T09:22:53.218+0000: 8.770: [weak refs processing, 0.0000251 secs]2017-02-22T09:22:53.218+0000: 8.770: [class unloading, 0.0026857 secs]2017-02-22T09:22:53.221+0000: 8.773: [scrub symbol table, 0.0023905 secs]2017-02-22T09:22:53.223+0000: 8.775: [scrub string table, 0.0004366 secs][1 CMS-remark: 0K(843776K)] 94304K(1028096K), 0.0272617 secs] [Times: user=0.03 sys=0.00, real=0.03 secs]

2017-02-22T09:22:53.224+0000: 8.776: [CMS-concurrent-sweep-start]

2017-02-22T09:22:53.224+0000: 8.776: [CMS-concurrent-sweep: 0.000/0.000 secs] [Times: user=0.00 sys=0.00, real=0.00 secs]

2017-02-22T09:22:53.224+0000: 8.776: [CMS-concurrent-reset-start]

2017-02-22T09:22:53.228+0000: 8.780: [CMS-concurrent-reset: 0.004/0.004 secs] [Times: user=0.00 sys=0.01, real=0.00 secs]

2017-02-22T09:47:45.859+0000: 1501.412: [GC (Allocation Failure) 2017-02-22T09:47:45.859+0000: 1501.412: [ParNew: 177625K->11372K(184320K), 0.0646533 secs] 177625K->16280K(1028096K), 0.0647865 secs] [Times: user=0.09 sys=0.00, real=0.06 secs]

2017-02-22T11:19:05.689+0000: 6981.242: [GC (Allocation Failure) 2017-02-22T11:19:05.689+0000: 6981.242: [ParNew: 175212K->6099K(184320K), 0.0333260 secs] 180120K->11007K(1028096K), 0.0334418 secs] [Times: user=0.06 sys=0.00, real=0.03 secs]

2017-02-22T13:02:05.691+0000: 13161.243: [GC (Allocation Failure) 2017-02-22T13:02:05.691+0000: 13161.243: [ParNew: 169939K->6574K(184320K), 0.0226622 secs] 174847K->11482K(1028096K), 0.0227620 secs] [Times: user=0.02 sys=0.00, real=0.02 secs]

2017-02-22T14:48:05.716+0000: 19521.268: [GC (Allocation Failure) 2017-02-22T14:48:05.716+0000: 19521.268: [ParNew: 170414K->4950K(184320K), 0.0257478 secs] 175322K->9858K(1028096K), 0.0258255 secs] [Times: user=0.03 sys=0.00, real=0.03 secs]

2017-02-22T16:35:05.691+0000: 25941.243: [GC (Allocation Failure) 2017-02-22T16:35:05.691+0000: 25941.243: [ParNew: 168790K->4990K(184320K), 0.0348417 secs] 173698K->9897K(1028096K), 0.0349280 secs] [Times: user=0.06 sys=0.00, real=0.03 secs]

2017-02-22T18:23:05.687+0000: 32421.239: [GC (Allocation Failure) 2017-02-22T18:23:05.687+0000: 32421.239: [ParNew: 168830K->5548K(184320K), 0.0157105 secs] 173737K->10456K(1028096K), 0.0157992 secs] [Times: user=0.04 sys=0.00, real=0.02 secs]

2017-02-22T20:12:05.702+0000: 38961.255: [GC (Allocation Failure) 2017-02-22T20:12:05.702+0000: 38961.255: [ParNew: 169388K->3874K(184320K), 0.0370025 secs] 174296K->11968K(1028096K), 0.0371057 secs] [Times: user=0.06 sys=0.00, real=0.04 secs]

2017-02-22T22:02:26.452+0000: 45582.004: [GC (Allocation Failure) 2017-02-22T22:02:26.452+0000: 45582.004: [ParNew: 167714K->863K(184320K), 0.0127527 secs] 175808K->9077K(1028096K), 0.0128708 secs] [Times: user=0.03 sys=0.00, real=0.01 secs]

2017-02-22T23:53:05.688+0000: 52221.240: [GC (Allocation Failure) 2017-02-22T23:53:05.688+0000: 52221.240: [ParNew: 164703K->767K(184320K), 0.0179263 secs] 172917K->8995K(1028096K), 0.0180328 secs] [Times: user=0.03 sys=0.00, real=0.02 secs]

2017-02-23T01:46:19.757+0000: 59015.310: [GC (Allocation Failure) 2017-02-23T01:46:19.758+0000: 59015.310: [ParNew: 164607K->1096K(184320K), 0.0218749 secs] 172835K->9335K(1028096K), 0.0219638 secs] [Times: user=0.04 sys=0.00, real=0.03 secs]

2017-02-23T03:39:57.281+0000: 65832.833: [GC (Allocation Failure) 2017-02-23T03:39:57.281+0000: 65832.834: [ParNew: 164936K->985K(184320K), 0.0542463 secs] 173175K->9237K(1028096K), 0.0543975 secs] [Times: user=0.05 sys=0.00, real=0.05 secs]

Heap

par new generation total 184320K, used 141993K [0x00000000c0000000, 0x00000000cc800000, 0x00000000cc800000)

eden space 163840K, 86% used [0x00000000c0000000, 0x00000000c89b41f8, 0x00000000ca000000)

from space 20480K, 4% used [0x00000000ca000000, 0x00000000ca0f6418, 0x00000000cb400000)

to space 20480K, 0% used [0x00000000cb400000, 0x00000000cb400000, 0x00000000cc800000)

concurrent mark-sweep generation total 843776K, used 8252K [0x00000000cc800000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 29221K, capacity 29672K, committed 29796K, reserved 1075200K

class space used 3275K, capacity 3411K, committed 3428K, reserved 1048576K

==> /var/log/hadoop/hdfs/gc.log-201702230526 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(11811828k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-23T05:26:52.809+0000: 1.535: [GC (Allocation Failure) 2017-02-23T05:26:52.809+0000: 1.535: [ParNew: 104960K->11949K(118016K), 0.0491981 secs] 104960K->11949K(1035520K), 0.0492931 secs] [Times: user=0.08 sys=0.00, real=0.05 secs]

Heap

par new generation total 118016K, used 18714K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 6% used [0x00000000c0000000, 0x00000000c069b190, 0x00000000c6680000)

from space 13056K, 91% used [0x00000000c7340000, 0x00000000c7eeb760, 0x00000000c8000000)

to space 13056K, 0% used [0x00000000c6680000, 0x00000000c6680000, 0x00000000c7340000)

concurrent mark-sweep generation total 917504K, used 0K [0x00000000c8000000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 16312K, capacity 16606K, committed 17024K, reserved 1064960K

class space used 1994K, capacity 2093K, committed 2176K, reserved 1048576K

==> /var/log/hadoop/hdfs/gc.log-201702170817 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(2759528k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-17T08:17:21.766+0000: 1.342: [GC (Allocation Failure) 2017-02-17T08:17:21.766+0000: 1.342: [ParNew: 104960K->11747K(118016K), 0.0454467 secs] 104960K->11747K(1035520K), 0.0455246 secs] [Times: user=0.08 sys=0.00, real=0.05 secs]

Heap

par new generation total 118016K, used 29617K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 17% used [0x00000000c0000000, 0x00000000c1173980, 0x00000000c6680000)

from space 13056K, 89% used [0x00000000c7340000, 0x00000000c7eb8df0, 0x00000000c8000000)

to space 13056K, 0% used [0x00000000c6680000, 0x00000000c6680000, 0x00000000c7340000)

concurrent mark-sweep generation total 917504K, used 0K [0x00000000c8000000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 17344K, capacity 17542K, committed 17920K, reserved 1064960K

class space used 2113K, capacity 2193K, committed 2304K, reserved 1048576K

==> /var/log/hadoop/hdfs/hadoop-hdfs-namenode-HlseokNamenode.out.5 <==

ulimit -a for user hdfs

core file size (blocks, -c) unlimited

data seg size (kbytes, -d) unlimited

scheduling priority (-e) 0

file size (blocks, -f) unlimited

pending signals (-i) 56021

max locked memory (kbytes, -l) 64

max memory size (kbytes, -m) unlimited

open files (-n) 128000

pipe size (512 bytes, -p) 8

POSIX message queues (bytes, -q) 819200

real-time priority (-r) 0

stack size (kbytes, -s) 8192

cpu time (seconds, -t) unlimited

max user processes (-u) 65536

virtual memory (kbytes, -v) unlimited

file locks (-x) unlimited

==> /var/log/hadoop/hdfs/gc.log-201702210615 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(10657932k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-21T06:15:23.686+0000: 1.262: [GC (Allocation Failure) 2017-02-21T06:15:23.686+0000: 1.262: [ParNew: 104960K->11723K(118016K), 0.0485317 secs] 104960K->11723K(1035520K), 0.0486139 secs] [Times: user=0.08 sys=0.00, real=0.05 secs]

Heap

par new generation total 118016K, used 26968K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 14% used [0x00000000c0000000, 0x00000000c0ee35b0, 0x00000000c6680000)

from space 13056K, 89% used [0x00000000c7340000, 0x00000000c7eb2da8, 0x00000000c8000000)

to space 13056K, 0% used [0x00000000c6680000, 0x00000000c6680000, 0x00000000c7340000)

concurrent mark-sweep generation total 917504K, used 0K [0x00000000c8000000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 16817K, capacity 17062K, committed 17280K, reserved 1064960K

class space used 2051K, capacity 2161K, committed 2176K, reserved 1048576K

==> /var/log/hadoop/hdfs/hadoop-hdfs-datanode-HlseokNamenode.out.3 <==

ulimit -a for user hdfs

core file size (blocks, -c) unlimited

data seg size (kbytes, -d) unlimited

scheduling priority (-e) 0

file size (blocks, -f) unlimited

pending signals (-i) 56021

max locked memory (kbytes, -l) 64

max memory size (kbytes, -m) unlimited

open files (-n) 128000

pipe size (512 bytes, -p) 8

POSIX message queues (bytes, -q) 819200

real-time priority (-r) 0

stack size (kbytes, -s) 8192

cpu time (seconds, -t) unlimited

max user processes (-u) 65536

virtual memory (kbytes, -v) unlimited

file locks (-x) unlimited

==> /var/log/hadoop/hdfs/hadoop-hdfs-datanode-HlseokNamenode.out.2 <==

ulimit -a for user hdfs

core file size (blocks, -c) unlimited

data seg size (kbytes, -d) unlimited

scheduling priority (-e) 0

file size (blocks, -f) unlimited

pending signals (-i) 56021

max locked memory (kbytes, -l) 64

max memory size (kbytes, -m) unlimited

open files (-n) 128000

pipe size (512 bytes, -p) 8

POSIX message queues (bytes, -q) 819200

real-time priority (-r) 0

stack size (kbytes, -s) 8192

cpu time (seconds, -t) unlimited

max user processes (-u) 65536

virtual memory (kbytes, -v) unlimited

file locks (-x) unlimited

==> /var/log/hadoop/hdfs/hadoop-hdfs-namenode-HlseokNamenode.out.2 <==

ulimit -a for user hdfs

core file size (blocks, -c) unlimited

data seg size (kbytes, -d) unlimited

scheduling priority (-e) 0

file size (blocks, -f) unlimited

pending signals (-i) 56021

max locked memory (kbytes, -l) 64

max memory size (kbytes, -m) unlimited

open files (-n) 128000

pipe size (512 bytes, -p) 8

POSIX message queues (bytes, -q) 819200

real-time priority (-r) 0

stack size (kbytes, -s) 8192

cpu time (seconds, -t) unlimited

max user processes (-u) 65536

virtual memory (kbytes, -v) unlimited

file locks (-x) unlimited

==> /var/log/hadoop/hdfs/gc.log-201702170837 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(2720348k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-17T08:37:41.795+0000: 1.371: [GC (Allocation Failure) 2017-02-17T08:37:41.795+0000: 1.371: [ParNew: 104960K->11680K(118016K), 0.0155797 secs] 104960K->11680K(1035520K), 0.0156572 secs] [Times: user=0.02 sys=0.00, real=0.02 secs]

Heap

par new generation total 118016K, used 25957K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 13% used [0x00000000c0000000, 0x00000000c0df1438, 0x00000000c6680000)

from space 13056K, 89% used [0x00000000c7340000, 0x00000000c7ea8220, 0x00000000c8000000)

to space 13056K, 0% used [0x00000000c6680000, 0x00000000c6680000, 0x00000000c7340000)

concurrent mark-sweep generation total 917504K, used 0K [0x00000000c8000000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 16798K, capacity 17062K, committed 17280K, reserved 1064960K

class space used 2048K, capacity 2161K, committed 2176K, reserved 1048576K

==> /var/log/hadoop/hdfs/gc.log-201702230505 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(10345004k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-23T05:05:17.643+0000: 1.324: [GC (Allocation Failure) 2017-02-23T05:05:17.643+0000: 1.324: [ParNew: 104960K->11951K(118016K), 0.0314623 secs] 104960K->11951K(1035520K), 0.0315506 secs] [Times: user=0.06 sys=0.00, real=0.03 secs]

Heap

par new generation total 118016K, used 18716K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 6% used [0x00000000c0000000, 0x00000000c069b2e8, 0x00000000c6680000)

from space 13056K, 91% used [0x00000000c7340000, 0x00000000c7eebfd0, 0x00000000c8000000)

to space 13056K, 0% used [0x00000000c6680000, 0x00000000c6680000, 0x00000000c7340000)

concurrent mark-sweep generation total 917504K, used 0K [0x00000000c8000000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 16322K, capacity 16606K, committed 17024K, reserved 1064960K

class space used 1994K, capacity 2093K, committed 2176K, reserved 1048576K

==> /var/log/hadoop/hdfs/gc.log-201702230238 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(10436988k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-23T02:38:04.496+0000: 1.314: [GC (Allocation Failure) 2017-02-23T02:38:04.496+0000: 1.314: [ParNew: 104960K->11953K(118016K), 0.0463555 secs] 104960K->11953K(1035520K), 0.0464265 secs] [Times: user=0.08 sys=0.00, real=0.05 secs]

Heap

par new generation total 118016K, used 17668K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 5% used [0x00000000c0000000, 0x00000000c0594c28, 0x00000000c6680000)

from space 13056K, 91% used [0x00000000c7340000, 0x00000000c7eec6f8, 0x00000000c8000000)

to space 13056K, 0% used [0x00000000c6680000, 0x00000000c6680000, 0x00000000c7340000)

concurrent mark-sweep generation total 917504K, used 0K [0x00000000c8000000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 16292K, capacity 16542K, committed 16768K, reserved 1064960K

class space used 1991K, capacity 2093K, committed 2176K, reserved 1048576K

==> /var/log/hadoop/hdfs/gc.log-201702170918 <==

2017-02-17T09:47:49.199+0000: 1741.303: [GC (Allocation Failure) 2017-02-17T09:47:49.199+0000: 1741.303: [ParNew: 177632K->11374K(184320K), 0.0516474 secs] 177632K->16287K(1028096K), 0.0517568 secs] [Times: user=0.07 sys=0.00, real=0.05 secs]

2017-02-17T11:38:04.320+0000: 8356.425: [GC (Allocation Failure) 2017-02-17T11:38:04.321+0000: 8356.425: [ParNew: 175214K->4225K(184320K), 0.0100974 secs] 180127K->9138K(1028096K), 0.0101578 secs] [Times: user=0.02 sys=0.00, real=0.01 secs]

2017-02-17T13:40:42.557+0000: 15714.661: [GC (Allocation Failure) 2017-02-17T13:40:42.557+0000: 15714.662: [ParNew: 168065K->4776K(184320K), 0.0105439 secs] 172978K->9689K(1028096K), 0.0106118 secs] [Times: user=0.02 sys=0.00, real=0.01 secs]

2017-02-17T15:48:42.617+0000: 23394.721: [GC (Allocation Failure) 2017-02-17T15:48:42.617+0000: 23394.721: [ParNew: 168616K->4683K(184320K), 0.0098185 secs] 173529K->9596K(1028096K), 0.0098748 secs] [Times: user=0.02 sys=0.00, real=0.01 secs]

2017-02-17T18:00:42.552+0000: 31314.656: [GC (Allocation Failure) 2017-02-17T18:00:42.552+0000: 31314.656: [ParNew: 168523K->4676K(184320K), 0.0102810 secs] 173436K->9589K(1028096K), 0.0103623 secs] [Times: user=0.02 sys=0.00, real=0.01 secs]

2017-02-17T20:12:42.557+0000: 39234.661: [GC (Allocation Failure) 2017-02-17T20:12:42.557+0000: 39234.661: [ParNew: 168516K->4693K(184320K), 0.0454755 secs] 173429K->9606K(1028096K), 0.0455573 secs] [Times: user=0.07 sys=0.00, real=0.05 secs]

2017-02-17T22:26:35.476+0000: 47267.580: [GC (Allocation Failure) 2017-02-17T22:26:35.476+0000: 47267.580: [ParNew: 168533K->2951K(184320K), 0.0345116 secs] 173446K->11050K(1028096K), 0.0346182 secs] [Times: user=0.08 sys=0.00, real=0.04 secs]

2017-02-18T00:40:42.552+0000: 55314.656: [GC (Allocation Failure) 2017-02-18T00:40:42.552+0000: 55314.656: [ParNew: 166791K->946K(184320K), 0.0131052 secs] 174890K->9074K(1028096K), 0.0131685 secs] [Times: user=0.01 sys=0.00, real=0.01 secs]

2017-02-18T02:55:16.801+0000: 63388.905: [GC (Allocation Failure) 2017-02-18T02:55:16.801+0000: 63388.905: [ParNew: 164786K->779K(184320K), 0.0208462 secs] 172914K->8919K(1028096K), 0.0209261 secs] [Times: user=0.03 sys=0.00, real=0.02 secs]

2017-02-18T05:08:49.200+0000: 71401.304: [GC (Allocation Failure) 2017-02-18T05:08:49.200+0000: 71401.304: [ParNew: 164619K->791K(184320K), 0.0155251 secs] 172759K->8947K(1028096K), 0.0156145 secs] [Times: user=0.02 sys=0.00, real=0.01 secs]

2017-02-18T07:24:42.595+0000: 79539.431: [GC (Allocation Failure) 2017-02-18T07:24:42.595+0000: 79539.431: [ParNew: 164631K->777K(184320K), 0.0208760 secs] 172787K->8941K(1028096K), 0.0209703 secs] [Times: user=0.02 sys=0.00, real=0.03 secs]

2017-02-18T09:38:42.570+0000: 87579.405: [GC (Allocation Failure) 2017-02-18T09:38:42.570+0000: 87579.405: [ParNew: 164617K->746K(184320K), 0.0156596 secs] 172781K->8916K(1028096K), 0.0159435 secs] [Times: user=0.03 sys=0.00, real=0.01 secs]

2017-02-18T11:53:39.208+0000: 95676.043: [GC (Allocation Failure) 2017-02-18T11:53:39.208+0000: 95676.043: [ParNew: 164586K->749K(184320K), 0.0117129 secs] 172756K->8923K(1028096K), 0.0118359 secs] [Times: user=0.03 sys=0.00, real=0.01 secs]

2017-02-18T14:09:42.568+0000: 103839.403: [GC (Allocation Failure) 2017-02-18T14:09:42.568+0000: 103839.403: [ParNew: 164589K->761K(184320K), 0.0125504 secs] 172763K->8939K(1028096K), 0.0126372 secs] [Times: user=0.02 sys=0.00, real=0.01 secs]

2017-02-18T16:23:42.583+0000: 111879.419: [GC (Allocation Failure) 2017-02-18T16:23:42.583+0000: 111879.419: [ParNew: 164601K->694K(184320K), 0.0326446 secs] 172779K->8939K(1028096K), 0.0327497 secs] [Times: user=0.04 sys=0.00, real=0.04 secs]

2017-02-18T18:39:15.789+0000: 120012.624: [GC (Allocation Failure) 2017-02-18T18:39:15.789+0000: 120012.624: [ParNew: 164534K->645K(184320K), 0.0091868 secs] 172779K->8891K(1028096K), 0.0092659 secs] [Times: user=0.02 sys=0.00, real=0.01 secs]

2017-02-18T20:54:26.833+0000: 128123.668: [GC (Allocation Failure) 2017-02-18T20:54:26.833+0000: 128123.668: [ParNew: 164485K->644K(184320K), 0.0103986 secs] 172731K->8891K(1028096K), 0.0104645 secs] [Times: user=0.02 sys=0.00, real=0.01 secs]

2017-02-18T23:10:03.308+0000: 136260.143: [GC (Allocation Failure) 2017-02-18T23:10:03.308+0000: 136260.143: [ParNew: 164484K->692K(184320K), 0.0235456 secs] 172731K->8940K(1028096K), 0.0236487 secs] [Times: user=0.06 sys=0.00, real=0.02 secs]

2017-02-19T01:24:42.564+0000: 144339.400: [GC (Allocation Failure) 2017-02-19T01:24:42.565+0000: 144339.400: [ParNew: 164532K->666K(184320K), 0.0535007 secs] 172780K->8915K(1028096K), 0.0535909 secs] [Times: user=0.07 sys=0.00, real=0.06 secs]

2017-02-19T03:41:42.619+0000: 152559.454: [GC (Allocation Failure) 2017-02-19T03:41:42.619+0000: 152559.454: [ParNew: 164506K->679K(184320K), 0.0129135 secs] 172755K->8931K(1028096K), 0.0129965 secs] [Times: user=0.03 sys=0.00, real=0.01 secs]

2017-02-19T05:57:15.881+0000: 160692.717: [GC (Allocation Failure) 2017-02-19T05:57:15.881+0000: 160692.717: [ParNew: 164519K->704K(184320K), 0.0213292 secs] 172771K->8958K(1028096K), 0.0214142 secs] [Times: user=0.06 sys=0.00, real=0.02 secs]

2017-02-19T08:12:42.564+0000: 168819.399: [GC (Allocation Failure) 2017-02-19T08:12:42.564+0000: 168819.399: [ParNew: 164544K->638K(184320K), 0.0256431 secs] 172798K->8896K(1028096K), 0.0257233 secs] [Times: user=0.04 sys=0.00, real=0.03 secs]

2017-02-19T10:28:22.121+0000: 176958.956: [GC (Allocation Failure) 2017-02-19T10:28:22.121+0000: 176958.956: [ParNew: 164478K->665K(184320K), 0.0123641 secs] 172736K->8923K(1028096K), 0.0125127 secs] [Times: user=0.03 sys=0.00, real=0.01 secs]

2017-02-19T12:43:29.199+0000: 185066.034: [GC (Allocation Failure) 2017-02-19T12:43:29.199+0000: 185066.034: [ParNew: 164505K->694K(184320K), 0.0130997 secs] 172763K->8952K(1028096K), 0.0132488 secs] [Times: user=0.03 sys=0.00, real=0.01 secs]

2017-02-19T14:58:40.028+0000: 193176.863: [GC (Allocation Failure) 2017-02-19T14:58:40.028+0000: 193176.864: [ParNew: 164534K->705K(184320K), 0.0214847 secs] 172792K->8964K(1028096K), 0.0215825 secs] [Times: user=0.07 sys=0.00, real=0.02 secs]

2017-02-19T17:14:28.479+0000: 201325.315: [GC (Allocation Failure) 2017-02-19T17:14:28.479+0000: 201325.315: [ParNew: 164545K->651K(184320K), 0.0227993 secs] 172804K->8910K(1028096K), 0.0228973 secs] [Times: user=0.05 sys=0.00, real=0.02 secs]

2017-02-19T19:29:59.198+0000: 209456.034: [GC (Allocation Failure) 2017-02-19T19:29:59.198+0000: 209456.034: [ParNew: 164491K->687K(184320K), 0.0189731 secs] 172750K->8958K(1028096K), 0.0190882 secs] [Times: user=0.04 sys=0.00, real=0.02 secs]

2017-02-19T21:44:42.573+0000: 217539.409: [GC (Allocation Failure) 2017-02-19T21:44:42.574+0000: 217539.409: [ParNew: 164527K->695K(184320K), 0.0226171 secs] 172798K->8976K(1028096K), 0.0227411 secs] [Times: user=0.04 sys=0.00, real=0.03 secs]

2017-02-20T00:00:31.253+0000: 225688.088: [GC (Allocation Failure) 2017-02-20T00:00:31.253+0000: 225688.088: [ParNew: 164535K->702K(184320K), 0.0155603 secs] 172816K->8983K(1028096K), 0.0156817 secs] [Times: user=0.04 sys=0.00, real=0.02 secs]

2017-02-20T02:14:42.690+0000: 233739.525: [GC (Allocation Failure) 2017-02-20T02:14:42.690+0000: 233739.526: [ParNew: 164542K->661K(184320K), 0.0126119 secs] 172823K->8944K(1028096K), 0.0126830 secs] [Times: user=0.02 sys=0.00, real=0.01 secs]

2017-02-20T04:29:42.562+0000: 241839.397: [GC (Allocation Failure) 2017-02-20T04:29:42.562+0000: 241839.397: [ParNew: 164501K->659K(184320K), 0.0484084 secs] 172784K->8942K(1028096K), 0.0484953 secs] [Times: user=0.06 sys=0.00, real=0.05 secs]

2017-02-20T06:42:42.628+0000: 249819.463: [GC (Allocation Failure) 2017-02-20T06:42:42.628+0000: 249819.463: [ParNew: 164499K->806K(184320K), 0.0180155 secs] 172782K->9089K(1028096K), 0.0180759 secs] [Times: user=0.00 sys=0.00, real=0.02 secs]

Heap

par new generation total 184320K, used 163495K [0x00000000c0000000, 0x00000000cc800000, 0x00000000cc800000)

eden space 163840K, 99% used [0x00000000c0000000, 0x00000000c9ee0688, 0x00000000ca000000)

from space 20480K, 3% used [0x00000000cb400000, 0x00000000cb4c9920, 0x00000000cc800000)

to space 20480K, 0% used [0x00000000ca000000, 0x00000000ca000000, 0x00000000cb400000)

concurrent mark-sweep generation total 843776K, used 8283K [0x00000000cc800000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 29219K, capacity 29676K, committed 29796K, reserved 1075200K

class space used 3276K, capacity 3411K, committed 3428K, reserved 1048576K
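For anyone reading the `ParNew` lines above: a minimal sketch, assuming the log format produced by `-XX:+PrintGCDetails` with CMS/ParNew as in this output, that extracts the whole-heap figures from one line. The function name `parse_parnew` and the dictionary keys are illustrative only and are not part of any Hadoop or JVM tooling.

```python
import re

# Matches the ParNew part of a GC log line, for example
# "[ParNew: 168616K->4683K(184320K), 0.0098185 secs] 173529K->9596K(1028096K), 0.0098748 secs]".
# The first triple is the young generation, the second is the whole heap.
PARNEW_RE = re.compile(
    r"\[ParNew: (?P<young_before>\d+)K->(?P<young_after>\d+)K\((?P<young_cap>\d+)K\), "
    r"(?P<young_secs>[\d.]+) secs\] "
    r"(?P<heap_before>\d+)K->(?P<heap_after>\d+)K\((?P<heap_cap>\d+)K\), "
    r"(?P<pause_secs>[\d.]+) secs\]"
)


def parse_parnew(line):
    """Return heap figures for one ParNew line, or None if the line does not match."""
    match = PARNEW_RE.search(line)
    if match is None:
        return None
    heap_before = int(match.group("heap_before"))
    heap_after = int(match.group("heap_after"))
    heap_cap = int(match.group("heap_cap"))
    return {
        "heap_before_kb": heap_before,
        "heap_after_kb": heap_after,
        "heap_capacity_kb": heap_cap,
        # Memory freed by this minor collection.
        "reclaimed_kb": heap_before - heap_after,
        # Share of the total heap still in use after the collection.
        "occupancy_after_pct": 100.0 * heap_after / heap_cap,
        "pause_secs": float(match.group("pause_secs")),
    }


# One line copied from the gc.log output above.
SAMPLE = (
    "2017-02-17T15:48:42.617+0000: 23394.721: [GC (Allocation Failure) "
    "2017-02-17T15:48:42.617+0000: 23394.721: [ParNew: 168616K->4683K(184320K), "
    "0.0098185 secs] 173529K->9596K(1028096K), 0.0098748 secs] "
    "[Times: user=0.02 sys=0.00, real=0.01 secs]"
)

if __name__ == "__main__":
    print(parse_parnew(SAMPLE))
```

For the sample line this reports 163933 KB reclaimed and a heap that is under 1% occupied after the collection, with a pause of about 10 ms. Read that way, the `GC (Allocation Failure)` entries look like routine minor collections and do not by themselves point to memory pressure.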

==> /var/log/hadoop/hdfs/hadoop-hdfs-namenode-HlseokNamenode.out.1 <==

ulimit -a for user hdfs

core file size (blocks, -c) unlimited

data seg size (kbytes, -d) unlimited

scheduling priority (-e) 0

file size (blocks, -f) unlimited

pending signals (-i) 56021

max locked memory (kbytes, -l) 64

max memory size (kbytes, -m) unlimited

open files (-n) 128000

pipe size (512 bytes, -p) 8

POSIX message queues (bytes, -q) 819200

real-time priority (-r) 0

stack size (kbytes, -s) 8192

cpu time (seconds, -t) unlimited

max user processes (-u) 65536

virtual memory (kbytes, -v) unlimited

file locks (-x) unlimited

==> /var/log/hadoop/hdfs/hadoop-hdfs-namenode-HlseokNamenode.out <==

ulimit -a for user hdfs

core file size (blocks, -c) unlimited

data seg size (kbytes, -d) unlimited

scheduling priority (-e) 0

file size (blocks, -f) unlimited

pending signals (-i) 56021

max locked memory (kbytes, -l) 64

max memory size (kbytes, -m) unlimited

open files (-n) 128000

pipe size (512 bytes, -p) 8

POSIX message queues (bytes, -q) 819200

real-time priority (-r) 0

stack size (kbytes, -s) 8192

cpu time (seconds, -t) unlimited

max user processes (-u) 65536

virtual memory (kbytes, -v) unlimited

file locks (-x) unlimited

==> /var/log/hadoop/hdfs/gc.log-201702200726 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(2561336k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-20T07:26:21.879+0000: 1.348: [GC (Allocation Failure) 2017-02-20T07:26:21.879+0000: 1.348: [ParNew: 104960K->11841K(118016K), 0.0470115 secs] 104960K->11841K(1035520K), 0.0470899 secs] [Times: user=0.07 sys=0.01, real=0.05 secs]

Heap

par new generation total 118016K, used 27615K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 15% used [0x00000000c0000000, 0x00000000c0f67a60, 0x00000000c6680000)

from space 13056K, 90% used [0x00000000c7340000, 0x00000000c7ed0428, 0x00000000c8000000)

to space 13056K, 0% used [0x00000000c6680000, 0x00000000c6680000, 0x00000000c7340000)

concurrent mark-sweep generation total 917504K, used 0K [0x00000000c8000000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 16870K, capacity 17114K, committed 17280K, reserved 1064960K

class space used 2058K, capacity 2161K, committed 2176K, reserved 1048576K

==> /var/log/hadoop/hdfs/gc.log-201702230228 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(10460152k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC

2017-02-23T02:28:31.587+0000: 1.374: [GC (Allocation Failure) 2017-02-23T02:28:31.587+0000: 1.374: [ParNew: 104960K->11955K(118016K), 0.0181515 secs] 104960K->11955K(1035520K), 0.0182390 secs] [Times: user=0.03 sys=0.00, real=0.02 secs]

Heap

par new generation total 118016K, used 18719K [0x00000000c0000000, 0x00000000c8000000, 0x00000000c8000000)

eden space 104960K, 6% used [0x00000000c0000000, 0x00000000c069b2e8, 0x00000000c6680000)

from space 13056K, 91% used [0x00000000c7340000, 0x00000000c7eeccc0, 0x00000000c8000000)

to space 13056K, 0% used [0x00000000c6680000, 0x00000000c6680000, 0x00000000c7340000)

concurrent mark-sweep generation total 917504K, used 0K [0x00000000c8000000, 0x0000000100000000, 0x0000000100000000)

Metaspace used 16295K, capacity 16542K, committed 16768K, reserved 1064960K

class space used 1991K, capacity 2093K, committed 2176K, reserved 1048576K

==> /var/log/hadoop/hdfs/hadoop-hdfs-namenode-HlseokNamenode.out.4 <==

ulimit -a for user hdfs

core file size (blocks, -c) unlimited

data seg size (kbytes, -d) unlimited

scheduling priority (-e) 0

file size (blocks, -f) unlimited

pending signals (-i) 56021

max locked memory (kbytes, -l) 64

max memory size (kbytes, -m) unlimited

open files (-n) 128000

pipe size (512 bytes, -p) 8

POSIX message queues (bytes, -q) 819200

real-time priority (-r) 0

stack size (kbytes, -s) 8192

cpu time (seconds, -t) unlimited

max user processes (-u) 65536

virtual memory (kbytes, -v) unlimited

file locks (-x) unlimited

==> /var/log/hadoop/hdfs/gc.log-201702200732 <==

Java HotSpot(TM) 64-Bit Server VM (25.77-b03) for linux-amd64 JRE (1.8.0_77-b03), built on Mar 20 2016 22:00:46 by "java_re" with gcc 4.3.0 20080428 (Red Hat 4.3.0-8)

Memory: 4k page, physical 14361248k(2550572k free), swap 0k(0k free)

CommandLine flags: -XX:CMSInitiatingOccupancyFraction=70 -XX:ErrorFile=/var/log/hadoop/hdfs/hs_err_pid%p.log -XX:InitialHeapSize=1073741824 -XX:MaxHeapSize=1073741824 -XX:MaxNewSize=134217728 -XX:MaxTenuringThreshold=6 -XX:NewSize=134217728 -XX:OldPLABSize=16 -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:OnOutOfMemoryError="/usr/hdp/current/hadoop-hdfs-namenode/bin/kill-name-node" -XX:ParallelGCThreads=8 -XX:+PrintGC -XX:+PrintGCDateStamps -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -XX:+UseCMSInitiatingOccupancyOnly -XX:+UseCompressedClassPointers -XX:+UseCompressedOops -XX:+UseConcMarkSweepGC -XX:+UseParNewGC