kudu compaction did not run

Labels: Apache Kudu

Created on 12-26-2018 11:48 PM - edited 09-16-2022 07:00 AM

Kudu 1.7.0 on CDH 5.15

3 master nodes: 4 cores, 32 GB RAM, Ubuntu 16.04

3 data nodes: 8 cores, 64 GB RAM, 1.8 TB SSD, Ubuntu 16.04

The table in question, project_construction_record, has 62 columns and about 170k records, and is not partitioned.

The table receives many CRUD operations every day.

I ran a simple query on it (using Impala):

SELECT * FROM project_construction_record ORDER BY id LIMIT 1

It takes 7 seconds!

By checking the profile, I found this:

KUDU_SCAN_NODE (id=0) (6.06s)

- BytesRead: 0 byte

- CollectionItemsRead: 0

- InactiveTotalTime: 0 ns

- KuduRemoteScanTokens: 0

- NumScannerThreadsStarted: 1

- PeakMemoryUsage: 3.4 MB

- RowsRead: 177,007

- RowsReturned: 177,007

- RowsReturnedRate: 29188/s

- ScanRangesComplete: 1

- ScannerThreadsInvoluntaryContextSwitches: 0

- ScannerThreadsTotalWallClockTime: 6.09s

- MaterializeTupleTime: 6.06s

- ScannerThreadsSysTime: 48ms

- ScannerThreadsUserTime: 172ms

So I checked the scan for this query and found this:

| Column | Cells read | Bytes read | Blocks read |
| --- | --- | --- | --- |
| id | 176.92k | 1.91M | 19.96k |
| org_id | 176.92k | 1.91M | 19.96k |
| work_date | 176.92k | 2.03M | 19.96k |
| description | 176.92k | 1.21M | 19.96k |
| user_name | 176.92k | 775.9K | 19.96k |
| spot_name | 176.92k | 825.8K | 19.96k |
| spot_start_pile | 176.92k | 778.7K | 19.96k |
| spot_end_pile | 176.92k | 780.4K | 19.96k |
| ...... | ...... | ...... | ...... |

That is a very large number of blocks read for so few rows.
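A rough back-of-the-envelope check of the figures in the scan table, using the id column. This is only a sketch: the k and M suffixes are assumed to be decimal (thousands and millions), which the scan page does not state.

```python
# Figures copied from the scan table (id column).
cells_read = 176_920    # "176.92k", assumed to mean 176,920 cells
blocks_read = 19_960    # "19.96k", assumed to mean 19,960 blocks
bytes_read = 1.91e6     # "1.91M", assumed to mean 1.91 million bytes

# Average number of cells and bytes served by each block that was read.
rows_per_block = cells_read / blocks_read
bytes_per_block = bytes_read / blocks_read

print(f"{rows_per_block:.1f} cells per block")   # 8.9 cells per block
print(f"{bytes_per_block:.0f} bytes per block")  # 96 bytes per block
```

On these assumptions each block holds fewer than ten cells on average, which fits a tablet made up of a very large number of tiny rowsets.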

Then I ran the kudu fs list command, which produced about 70 MB of output. Here is the end of it:

0b6ac30b449043a68905e02b797144fc | 25024 | 40310988 | column

0b6ac30b449043a68905e02b797144fc | 25024 | 40310989 | column

0b6ac30b449043a68905e02b797144fc | 25024 | 40310990 | column

0b6ac30b449043a68905e02b797144fc | 25024 | 40310991 | column

0b6ac30b449043a68905e02b797144fc | 25024 | 40310992 | column

0b6ac30b449043a68905e02b797144fc | 25024 | 40310993 | column

0b6ac30b449043a68905e02b797144fc | 25024 | 40310996 | undo

0b6ac30b449043a68905e02b797144fc | 25024 | 40310994 | bloom

0b6ac30b449043a68905e02b797144fc | 25024 | 40310995 | adhoc-index

There are 25024 rowsets and more than 1 million blocks in the tablet.
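Counting rowsets and blocks by hand in a 70 MB report is impractical, so here is a minimal sketch of a summary script. It assumes every data row of the report has the four pipe-separated fields seen in the excerpt (tablet id, rowset id, block id, block kind); `summarize_fs_list` is a hypothetical helper, not part of the Kudu tooling.

```python
from collections import Counter


def summarize_fs_list(lines):
    """Count distinct rowsets and blocks per kind in `kudu fs list` rows.

    Assumes each data row looks like:
        <tablet-id> | <rowset-id> | <block-id> | <block-kind>
    Anything else (headers, separators, blank lines) is skipped.
    """
    rowsets = set()
    kinds = Counter()
    for line in lines:
        fields = [f.strip() for f in line.split("|")]
        if len(fields) != 4 or not fields[1].isdigit():
            continue
        tablet_id, rowset_id, _block_id, kind = fields
        rowsets.add((tablet_id, int(rowset_id)))
        kinds[kind] += 1
    return len(rowsets), kinds


# The nine rows quoted in the post, all belonging to rowset 25024.
tablet = "0b6ac30b449043a68905e02b797144fc"
sample = [f"{tablet} | 25024 | {40310988 + i} | column" for i in range(6)]
sample += [
    f"{tablet} | 25024 | 40310996 | undo",
    f"{tablet} | 25024 | 40310994 | bloom",
    f"{tablet} | 25024 | 40310995 | adhoc-index",
]

rowset_count, block_kinds = summarize_fs_list(sample)
print(rowset_count, dict(block_kinds))
```

For the full report, the same function can be fed an open file object, for example one opened on a file that the command output was redirected to.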

I left the maintenance and compaction flags at their defaults and only changed tablet_history_max_age_sec to one day:

--maintenance_manager_history_size=8

--maintenance_manager_num_threads=1

--maintenance_manager_polling_interval_ms=250

--budgeted_compaction_target_rowset_size=33554432

--compaction_approximation_ratio=1.0499999523162842

--compaction_minimum_improvement=0.0099999997764825821

--deltafile_default_block_size=32768

--deltafile_default_compression_codec=lz4

--default_composite_key_index_block_size_bytes=4096

--tablet_delta_store_major_compact_min_ratio=0.10000000149011612

--tablet_delta_store_minor_compact_max=1000

--mrs_use_codegen=true

--compaction_policy_dump_svgs_pattern=

--enable_undo_delta_block_gc=true

--fault_crash_before_flush_tablet_meta_after_compaction=0

--fault_crash_before_flush_tablet_meta_after_flush_mrs=0

--max_cell_size_bytes=65536

--max_encoded_key_size_bytes=16384

--tablet_bloom_block_size=4096

--tablet_bloom_target_fp_rate=9.9999997473787516e-05

--tablet_compaction_budget_mb=128

--tablet_history_max_age_sec=86400

This is a production environment, and many other tables have the same issue. Performance keeps getting slower.

So my questions are:

Why does compaction not run? Is it a bug? And can I trigger compaction manually?

Created 12-26-2018 11:52 PM

I found this for that tablet in the tablet server metrics:

{

"name": "compact_rs_duration",

"total_count": 0,

"min": 0,

"mean": 0,

"percentile_75": 0,

"percentile_95": 0,

"percentile_99": 0,

"percentile_99_9": 0,

"percentile_99_99": 0,

"max": 0,

"total_sum": 0

},

Compaction has never run!
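Since other tables show the same symptom, the same check can be applied to every tablet on a server at once. The sketch below assumes the tablet server's metrics output is a JSON array of entities, each carrying "type", "id" and a "metrics" list whose items look like the excerpt above; verify that layout against your own server before relying on it. `never_compacted_tablets` is a hypothetical helper and the tablet ids in the sample are made up.

```python
import json


def never_compacted_tablets(metrics_json):
    """Return ids of tablets whose compact_rs_duration histogram is empty.

    Assumes a JSON array of entities with "type", "id" and "metrics" keys.
    """
    result = []
    for entity in json.loads(metrics_json):
        if entity.get("type") != "tablet":
            continue
        for metric in entity.get("metrics", []):
            if (metric.get("name") == "compact_rs_duration"
                    and metric.get("total_count", 0) == 0):
                result.append(entity.get("id"))
    return result


# Made-up sample in the assumed layout: one tablet that has never been
# compacted, one that has, and a non-tablet entity that is ignored.
sample = json.dumps([
    {"type": "tablet", "id": "tablet-a",
     "metrics": [{"name": "compact_rs_duration", "total_count": 0}]},
    {"type": "tablet", "id": "tablet-b",
     "metrics": [{"name": "compact_rs_duration", "total_count": 12}]},
    {"type": "server", "id": "kudu.tabletserver", "metrics": []},
])

print(never_compacted_tablets(sample))  # ['tablet-a']
```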

Created 12-27-2018 12:54 PM

Hi huaj,

It looks like you are hitting KUDU-1400: before the fix, Kudu compacts rowsets based on overlap and not on other criteria such as on-disk size, so small rowsets that do not overlap are not merged.

Unfortunately, there is no way to fix the small rowsets that have already been flushed. However, you can rebuild the affected tables by creating new tables from the existing ones, and see if that helps. Before doing that, please check this doc to see which usage pattern caused you to hit this issue, and try to prevent it by following the recommendations.

FYI, the fix for KUDU-1400 should land in the next release, CDH 6.2.

Created 12-27-2018 06:34 PM

Thanks for the reply, but that is a sad answer 😞

I read the doc, and I am indeed hitting the 'Trickling inserts' case.

Small CRUD operations hit the table all the time, at a low rate.

According to the doc's suggestions, I should do the following:

1. Set --flush_threshold_secs to a long enough value, such as 1 day

2. Create a new table and copy all the data into it

3. Drop the old table

4. Rename the new table to the old table's name

Is that right?

Also, I see that CDH 6.1 was just released. When will 6.2 be released?
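Steps 2 to 4 above could be scripted roughly as follows. This is only a sketch that prints Impala statements; it has not been run against a cluster. It assumes the table is managed by Impala, that id is the primary key and the first column, and that your Impala version accepts a Kudu table created without a PARTITION BY clause (the original table is unpartitioned). `rebuild_statements` and the `_rebuilt` suffix are hypothetical names. Step 1, the --flush_threshold_secs flag, is a Kudu server setting and is not covered here.

```python
def rebuild_statements(table, key="id", suffix="_rebuilt"):
    """Return Impala statements that copy a Kudu table and swap it in.

    Assumptions: Impala-managed Kudu table, `key` is the primary key and
    comes first in the column order, no partitioning clause is required.
    """
    new_table = f"{table}{suffix}"
    return [
        # Step 2: create the new table and copy all rows in one statement.
        f"CREATE TABLE {new_table} PRIMARY KEY ({key}) "
        f"STORED AS KUDU AS SELECT * FROM {table}",
        # Step 3: drop the old table.
        f"DROP TABLE {table}",
        # Step 4: give the new table the old name.
        f"ALTER TABLE {new_table} RENAME TO {table}",
    ]


statements = rebuild_statements("project_construction_record")
for statement in statements:
    print(statement + ";")
```

Writes that arrive between the copy and the rename would be lost, so it is safer to pause writers for the duration of the swap.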

Created 12-28-2018 10:03 AM

Yes, but note that raising --flush_threshold_secs too high can affect tablet server restart time.

Currently, CDH 6.2 is scheduled for March.

Created 12-28-2018 10:43 AM

I just did it, and the problem is solved.

Thanks!

Created 12-28-2018 08:17 PM

The problem is not solved after all.

After a few hours, I checked the rowsets of that table, and there are many small rowsets again!

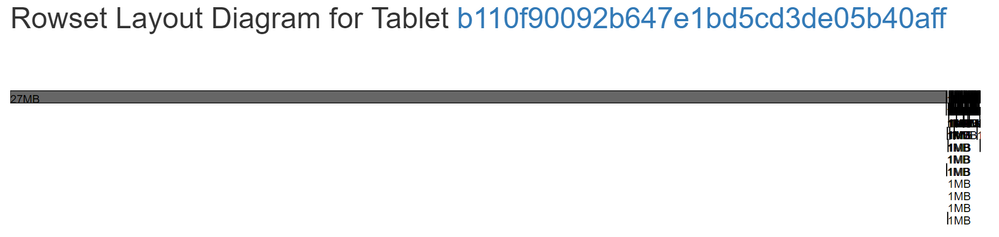

Running this command, I see 137 rowsets:

kudu fs list -fs_data_dirs=/data/1/kudu/data -fs_wal_dir=/data/1/kudu/wal -tablet_id=b110f90092b647e1bd5cd3de05b40aff

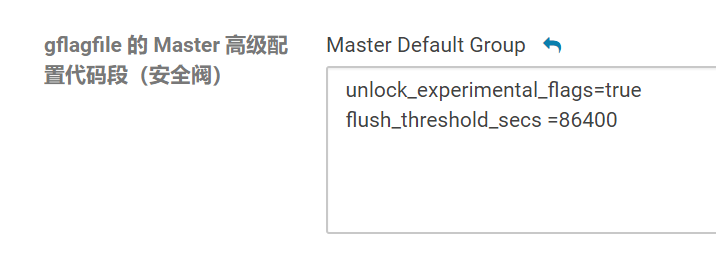

Here is my config in CM. I restarted the Kudu services after changing it.

Why is that? What can I do?

Created 12-29-2018 11:48 PM

Sorry, I made a mistake.

The flush_threshold_secs flag exists for both the master and the tablet server.

I had not set it on the tablet server. After setting it there, everything is OK.

Created 03-01-2019 12:58 PM

We hit the exact same issue, which was totally unexpected, a month before we were due to go live. @huaj, how is the fix working for you so far?

Created 03-08-2019 04:57 PM

I am waiting for Kudu 1.9 in the upcoming CDH 6.2 and hoping it solves the problem.

The flush_threshold_secs flag is a poor workaround.

When it is set to a long value, queries on records still in the MRS (not yet flushed) perform badly.