Support Questions

- Cloudera Community


Regex replace all newlines (\n) in a file at once

- Labels: Apache NiFi

Created 10-30-2017 04:02 PM


Hi,

I have a scenario where I need to replace all newline characters with '' (that is, remove all the newline characters and turn the content into a single string) in a big file (usually 50-500 MB).

I routed the file from GetFile to ReplaceText with Search Value = \n+, Replacement Value set to an empty string, Replacement Strategy = Regex Replace and Evaluation Mode = Entire text. It did not work.

Could you please suggest a solution?
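For reference, the transformation being asked for is easy to check outside NiFi. The following is a minimal Python sketch that applies the same `\n+` search with an empty replacement. Python's `re` module stands in for the Java regex engine that ReplaceText uses, and the sample text is made up:

```python
import re

def remove_newlines(text: str) -> str:
    # Same search value as in the question: one or more '\n' characters,
    # replaced with an empty string so all lines are joined together.
    return re.sub(r"\n+", "", text)

sample = "first line\nsecond line\n\nthird line\n"
print(remove_newlines(sample))  # first linesecond linethird line
```

If the input could have Windows line endings (an assumption, not something the question states), the `\r` characters would survive this pattern and would need to be matched as well.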

Created on 10-30-2017 05:38 PM - edited 08-18-2019 02:47 AM


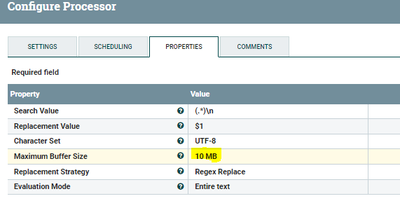

You need to increase the Maximum Buffer Size property of your ReplaceText processor; by default it is 1 MB.

With Evaluation Mode set to Entire text, a flowfile larger than the Maximum Buffer Size is routed to the failure relationship.

ReplaceText configuration:

- Search Value: (.*)\n
- Replacement Value: $1

For testing I set the buffer size to 10 MB, but you can change it to fit your files.

**Keep in mind that a larger buffer size can lead to out-of-memory issues.**
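The `(.*)\n` search works because `.` does not match a newline, assuming the pattern is compiled without the DOTALL flag. Each match is therefore one line plus its trailing `\n`, and `$1` writes back only the line itself. Below is a minimal Python sketch of the same substitution. Python's `re` writes the group reference as `\1` rather than Java's `$1`, and the sample text is made up. It shows that the result is the same as deleting every `\n`:

```python
import re

def join_lines(text: str) -> str:
    # Without re.DOTALL, '.' stops at '\n', so (.*)\n matches one line
    # plus its newline; the replacement keeps only the captured line.
    return re.sub(r"(.*)\n", r"\1", text)

text = "a,b\nc,d\ne,f\n"
print(join_lines(text))                          # a,bc,de,f
print(join_lines(text) == text.replace("\n", ""))  # True
```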

Created 10-31-2017 01:36 PM


Thanks for your response. I am sure that I will get bigger files.

I tried with a 100 MB file, and it takes a lot of time.

Is there an alternative way of doing this? I will get files this big every minute.

Created on 10-31-2017 03:06 PM - edited 08-18-2019 02:47 AM


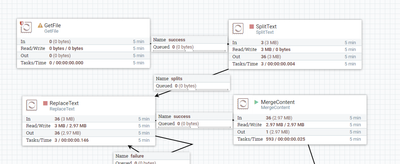

Instead of processing one 100 MB file in a single ReplaceText processor, an alternative is to split the file with SplitText, run ReplaceText on the small chunks, and then merge the chunks back into one file with the MergeContent processor.

Flow explanation:

1. GetFile
2. SplitText (splits relationship) // splits the text file into chunks of your required number of lines
3. ReplaceText (success relationship) // removes the newlines in each split so it becomes one line
4. MergeContent (merged relationship) // groups all the splits back into one file

In this process the input file is split into small chunks, and then the newlines in each chunk are removed.
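The split, replace and merge steps can be sketched in plain Python to show why splitting does not change the final result. The function names are hypothetical stand-ins for the three processors, not NiFi APIs. The sketch also simply assumes the chunks come back in their original order before they are concatenated:

```python
import re

def split_text(text: str, lines_per_split: int) -> list[str]:
    # Rough stand-in for SplitText: group the input into chunks of N lines.
    lines = text.splitlines(keepends=True)
    return ["".join(lines[i:i + lines_per_split])
            for i in range(0, len(lines), lines_per_split)]

def replace_text(chunk: str) -> str:
    # Rough stand-in for ReplaceText: drop the newlines inside one small chunk.
    return re.sub(r"\n+", "", chunk)

def merge_content(chunks: list[str]) -> str:
    # Rough stand-in for MergeContent: concatenate the chunks in order.
    return "".join(chunks)

big = "".join(f"line {i}\n" for i in range(10))
chunks = [replace_text(c) for c in split_text(big, 3)]
merged = merge_content(chunks)
print(len(chunks))                          # 4
print(merged == re.sub(r"\n+", "", big))    # True
```

Each chunk only needs a small buffer in ReplaceText, which is the point of the split. The merged output matches the single-pass replacement as long as the ordering assumption holds.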

Flow: see the screenshot above.

Created 10-31-2017 03:18 PM


Currently I am following the same approach, but I wanted to know whether I could do it all at once.

However, this approach is working fine. In MergeContent, what would you suggest for the Minimum Group Size? (I am not sure; I just set it to 5 MB.)

Thanks for your help

Created on 10-31-2017 07:18 PM - edited 08-18-2019 02:47 AM


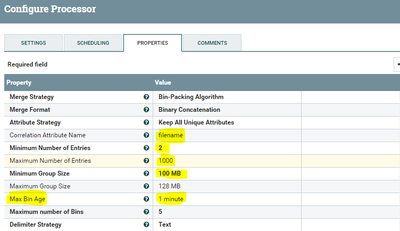

@Hadoop User, the MergeContent Minimum Group Size depends on your input file size.

In the MergeContent processor:

- **Correlation Attribute Name**: set it to filename. All the chunks that have the same filename are binned together and merged.
- **Minimum Number of Entries**: the minimum number of flowfiles to include in a bundle. It needs to be at least equal to the number of chunks you get from the SplitText processor.
- **Maximum Number of Entries**: the maximum number of flowfiles to include in a bundle.
- **Minimum Group Size**: the minimum size of the bundle. This should be at least your file size; if it is not, some of your data will not be merged into the same file.
- **Max Bin Age**: the maximum age of a bin before it is considered complete, i.e. after that much time the processor flushes out whatever flowfiles are waiting in front of it.

In the screenshot above, the Correlation Attribute Name property is set to filename, which means all the chunks that have the same filename are grouped into one file.

The processor waits for a minimum of 2 files and a maximum of 1000 files to merge, and it also checks the minimum and maximum group size properties. If your flow satisfies these properties, no files will be left waiting in front of the MergeContent processor.

If your flow does not meet the configuration above, use the Max Bin Age property to flush out all the files that are waiting in front of the processor.

As you can see, in my configuration I set it to 1 minute, so the processor waits up to 1 minute for more files with the same correlation attribute and then flushes out the bin. In your case, set the value according to your requirements.
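The rules above can be summed up as a small decision function. This is a simplified model of the behavior described in this answer, written in Python for illustration. It is not MergeContent's actual source: it ignores Maximum Group Size and other MergeContent settings, and the class and function names are hypothetical. The usage lines plug in the numbers from Ex1 and Ex2 in this answer:

```python
from dataclasses import dataclass
from typing import Optional

MB = 1024 * 1024  # assumption: sizes counted in binary megabytes

@dataclass
class MergeConfig:
    min_entries: int
    max_entries: int
    min_group_bytes: int
    max_bin_age_s: Optional[float] = None  # None: no Max Bin Age set

def merge_reason(cfg: MergeConfig, entries: int, size_bytes: int,
                 age_s: float) -> Optional[str]:
    """Why a bin would be merged now, or None if it keeps waiting.

    Simplified model of the rules described in this thread, not NiFi code.
    """
    if entries >= cfg.max_entries:
        return "maximum entries reached"
    if entries >= cfg.min_entries and size_bytes >= cfg.min_group_bytes:
        return "minimums met"
    if cfg.max_bin_age_s is not None and age_s >= cfg.max_bin_age_s:
        return "max bin age expired"
    return None

ex1 = MergeConfig(min_entries=1, max_entries=1000, min_group_bytes=100 * MB)
print(merge_reason(ex1, 1, 100 * MB, 0))     # minimums met (Ex1, case 1)
print(merge_reason(ex1, 1000, 10 * MB, 0))   # maximum entries reached (Ex1, case 2)
print(merge_reason(ex1, 900, 95 * MB, 600))  # None (Ex2 without Max Bin Age keeps waiting)

ex2 = MergeConfig(1, 1000, 100 * MB, max_bin_age_s=60)
print(merge_reason(ex2, 900, 95 * MB, 60))   # max bin age expired
```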

For reference:

Ex1: Let's say your file is 100 MB and after SplitText you have 1000 chunks. Your MergeContent configuration would then look like this:

- Minimum Number of Entries: 1
- Maximum Number of Entries: 1000
- Minimum Group Size: 100 MB // at least equal to your file size

Case 1: if one flowfile of 100 MB arrives, the Maximum Number of Entries property does not matter. The minimum number of entries is 1 and the minimum group size is 100 MB, so the minimum requirements are satisfied and the processor merges that file.

Case 2: if 1000 flowfiles arrive with a combined size of only 10 MB, the Minimum Group Size property does not matter. The maximum number of entries (1000) has been reached, so the processor merges those files.

Either way, the 1000 chunks are merged into one file.

Ex2: Let's say your file is 95 MB and after SplitText you have 900 chunks. The challenge here is that, with the configuration above, the processor will not merge the 900 chunks. The bin has reached neither the Minimum Group Size (100 MB, while we only have 95 MB) nor the Maximum Number of Entries (1000). We still need to merge the file, so in this case you need to use the Max Bin Age property.

Your MergeContent configuration stays the same as in Ex1:

- Minimum Number of Entries: 1
- Maximum Number of Entries: 1000 // equal to the number of chunks (1000 in Ex1)
- Minimum Group Size: 100 MB // at least equal to your file size

with one addition:

- Max Bin Age: 1 minute

We need to add Max Bin Age because it helps when files are left waiting in front of the processor. After 1 minute the processor flushes those files out and merges them according to the filename correlation attribute.

By analyzing your GetFile, SplitText and ReplaceText processors (flowfile sizes and counts), you can configure the MergeContent processor accordingly.
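As a rough sizing aid, the following sketch estimates the MergeContent values from the input file. It assumes SplitText is configured with a Line Split Count and no header lines, and that every line ends in exactly one `\n` byte (Unix line endings) that ReplaceText removes. The helper name and its inputs are hypothetical. It also shows a detail worth checking. After the newlines are removed, the merged content is smaller than the original file. A Minimum Group Size equal to the original file size may therefore not be reached by size alone, and the bin would depend on Maximum Number of Entries or Max Bin Age instead:

```python
import math

def suggest_merge_settings(file_bytes: int, line_count: int,
                           line_split_count: int) -> dict:
    """Hypothetical helper: estimate MergeContent values for one input file."""
    # SplitText with N lines per split yields ceil(lines / N) chunks.
    chunks = math.ceil(line_count / line_split_count)
    # Assumes one '\n' byte per line, all removed by ReplaceText.
    merged_bytes = file_bytes - line_count
    return {
        "expected_chunks": chunks,
        "maximum_number_of_entries": chunks,
        "merged_size_bytes": merged_bytes,
    }

settings = suggest_merge_settings(file_bytes=100_000_000,
                                  line_count=1_000_000,
                                  line_split_count=1_000)
print(settings)
# {'expected_chunks': 1000, 'maximum_number_of_entries': 1000, 'merged_size_bytes': 99000000}
```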