Archives of Support Questions (Read Only)

How to find long-running Hadoop/YARN jobs?

- Labels: Apache Hadoop, Apache YARN

Created 02-11-2017 12:48 PM

How can I find long-running Hadoop/YARN jobs from the command line?

Created on 02-11-2017 03:09 PM - edited 08-18-2019 03:42 AM

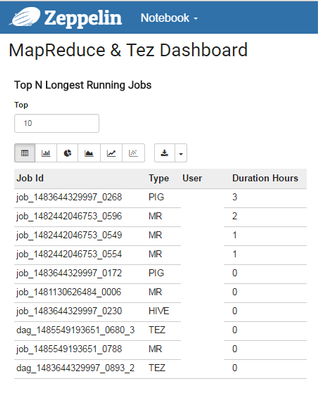

There is not a straight command to get long running jobs. In Ambari 2.4, they have provided the Zeppelin Dashboard in SmartSense Service(1.3) where we can see all long running jobs and job which has used maximum memory etc.,

Example Screenshot:

Option 2:

Before that dashboard was available, I wrote a bash script, which is a lengthier process. The script gathers application information from the ResourceManager URL using ResourceManager REST API calls and stores it in a CSV file. I then load that CSV file into HDFS and create a Hive external table on top of it. Finally, I run an INSERT to move the required columns into a final table and run simple Hive queries against it to get the list of all long-running jobs.
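The original script was not posted, so the following is only a minimal Python sketch of the first two steps (query the ResourceManager, write a CSV), not the script itself. It assumes the ResourceManager web address is `http://resourcemanager.example.com:8088` (a placeholder) and relies on the `elapsedTime` field, in milliseconds, that the Cluster Applications REST endpoint `/ws/v1/cluster/apps` returns for each application. The function names and the column selection are illustrative choices.

```python
import csv
import io
import json
from urllib.request import Request, urlopen

# Placeholder address: replace with your own ResourceManager host and port.
RM_URL = "http://resourcemanager.example.com:8088"

# Columns kept in the CSV; all are fields of the RM "app" object.
FIELDS = ["id", "user", "name", "queue", "state", "startedTime", "elapsedTime"]


def fetch_running_apps(rm_url):
    """Ask the ResourceManager REST API for all RUNNING applications."""
    req = Request(
        f"{rm_url}/ws/v1/cluster/apps?states=RUNNING",
        headers={"Accept": "application/json"},
    )
    with urlopen(req) as resp:
        return json.load(resp)


def long_running(payload, min_hours):
    """Return apps that have run for at least min_hours, longest first."""
    # "apps" is null in the response when no application matches.
    apps = (payload.get("apps") or {}).get("app", [])
    threshold_ms = min_hours * 3600 * 1000
    slow = [a for a in apps if a.get("elapsedTime", 0) >= threshold_ms]
    return sorted(slow, key=lambda a: a["elapsedTime"], reverse=True)


def to_csv(apps):
    """Render the selected fields as CSV text with a header row."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(FIELDS)
    for app in apps:
        writer.writerow([app.get(field, "") for field in FIELDS])
    return buf.getvalue()
```

For example, `print(to_csv(long_running(fetch_running_apps(RM_URL), 4)))` would print the applications that have been running for four hours or more. The resulting CSV could then be copied to HDFS and exposed through a Hive external table as described above. On a Kerberos-secured cluster the plain HTTP call shown here would need additional authentication.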

Hope this helps you.

Created 05-18-2018 07:55 AM

@Sridhar Reddy,

Could you please share the script, if possible?