- Apache Ambari Workflow Manager View for Apache Ooz...

Created on 08-29-2017 03:10 PM - edited 08-17-2019 11:28 AM

Part 9: https://community.hortonworks.com/articles/85091/apache-ambari-workflow-manager-view-for-apache-ooz-...

Part 11: https://community.hortonworks.com/articles/85361/apache-ambari-workflow-manager-view-for-apache-ooz-...

I get a lot of questions about distcp, so I figured I'd write yet another article in the WFM series. A common assumption is that the FS action should be able to copy data within a cluster. It can't, and it's not obvious that you can use the distcp action for that instead. The FS action has no copy functionality because a copy there would not be distributed and would effectively DoS your Oozie server until the action completes. What you need is the distcp action: it's meant for distributed operations and, being decoupled from the Oozie launcher, completes without the DoS. The functionality is the same, even if the naming convention is a bit off.

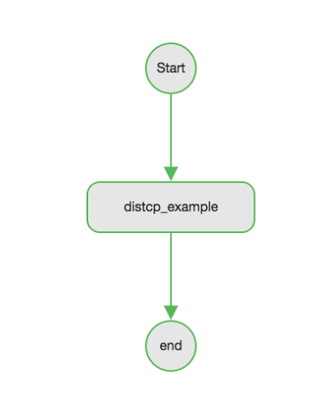

We're going to start with adding a new workflow and naming it distcp-wf.

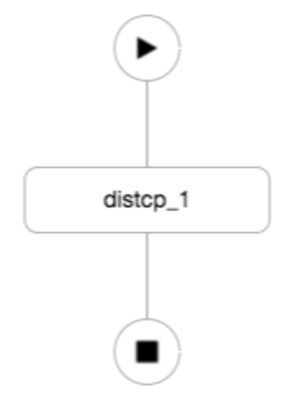

Now we're going to add a distcp node to the flow.

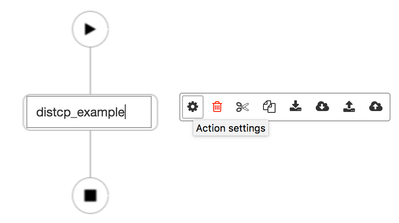

I prefer to name the nodes something other than default so I'll name it distcp_example and hit the gear button to configure it.

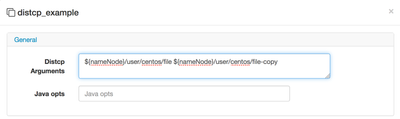

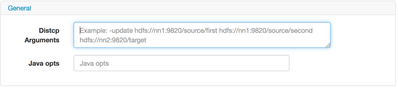

Now, in the distcp arguments field, I'm going to use Oozie XML variable replacement to add the full HDFS paths of the source and target, which happen to be in the same cluster. They could just as well be in two separate clusters.

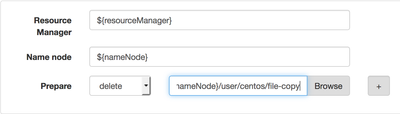
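For readers who prefer to see the XML behind the form, the two arguments end up as `<arg>` elements inside the distcp action. This is only a sketch: the paths under `/user/centos` are assumptions based on the directory listing later in the article, not values taken from the screenshots.

```xml
<!-- Sketch of the two distcp arguments; paths are hypothetical -->
<!-- ${nameNode} is replaced by Oozie with the value from the job properties -->
<arg>${nameNode}/user/centos/file</arg>            <!-- source -->
<arg>${nameNode}/user/centos/distcp-wf/file</arg>  <!-- target -->
```

For a copy between clusters, the second argument would start with the other cluster's NameNode URI instead of `${nameNode}`.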

Now if you're familiar with how Oozie and MapReduce work, you'll quickly realize that this workflow will run only once and fail the second time around. The reason is that my destination never changes, and if the output already exists, the next run fails. To handle that, we're going to add a prepare action that deletes the destination file/directory. Copy the second argument to the clipboard, paste it into advanced properties, and change the mkdir drop-down to delete.
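In the workflow XML, that prepare step corresponds to a `<prepare>` block placed before the arguments of the distcp action. A minimal sketch, assuming the same hypothetical target path as the second argument:

```xml
<!-- Runs before the action starts; removes the previous run's output -->
<!-- The path is an assumption and must match the distcp target -->
<prepare>
    <delete path="${nameNode}/user/centos/distcp-wf/file"/>
</prepare>
```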

We're almost ready to submit our workflow; I first have to create an HDFS directory (distcp-wf) that will contain my distcp workflow, and the file I'd like copied.

    hdfs dfs -mkdir distcp-wf
    hdfs dfs -touchz file
    hdfs dfs -ls
    Found 4 items
    drwx------   - centos hdfs          0 2017-08-29 14:35 .Trash
    drwx------   - centos hdfs          0 2017-08-29 14:33 .staging
    drwxr-xr-x   - centos hdfs          0 2017-08-29 14:35 distcp-wf
    -rw-r--r--   3 centos hdfs         10 2017-08-29 01:26 file

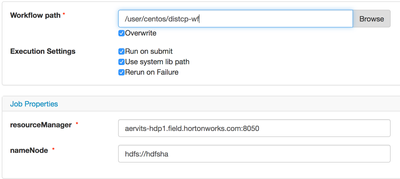

Now I'm ready to save and submit my workflow. Enter the HDFS path of the workflow directory you just created.

Notice that the job properties have the fully expanded nameNode and resourceManager addresses; that's what is used for variable substitution.
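To make the substitution concrete, here is roughly what such a job configuration could look like if written out as a Hadoop-style XML configuration file, which Oozie accepts as an alternative to a `.properties` file. The host names, ports, and application path are hypothetical; WFM fills in the real values for your cluster.

```xml
<!-- Sketch of a job configuration; all values are placeholders -->
<configuration>
    <property>
        <name>nameNode</name>
        <value>hdfs://namenode.example.com:8020</value>
    </property>
    <property>
        <name>resourceManager</name>
        <value>resourcemanager.example.com:8050</value>
    </property>
    <property>
        <!-- HDFS directory that holds workflow.xml -->
        <name>oozie.wf.application.path</name>
        <value>hdfs://namenode.example.com:8020/user/centos/distcp-wf</value>
    </property>
    <property>
        <!-- lets the action pick up the distcp jars from the Oozie sharelib -->
        <name>oozie.use.system.libpath</name>
        <value>true</value>
    </property>
</configuration>
```

Every `${nameNode}` and `${resourceManager}` in the workflow is resolved against these values at submission time.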

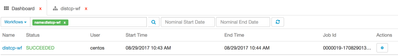

Now I am going to submit the job and use filtering in the dashboard to find the workflow by name.

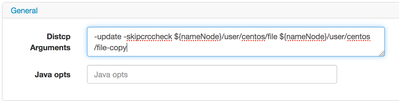

Now let's switch back to the distcp action, as I'd like to demonstrate a few other things about distcp that you can leverage. If you refer to the distcp user guide, you'll notice that there are many arguments we didn't cover, like -append, -update, etc. What if you would like to use them in your distcp? Well, WFM has got you covered. Eagle-eyed users will have seen the tool-tip the first time we configured the distcp action node: you can pass these arguments in the same field as the source and destination.

So in addition to the two arguments, I'm going to add -update and -skipcrccheck in front of the existing ones.

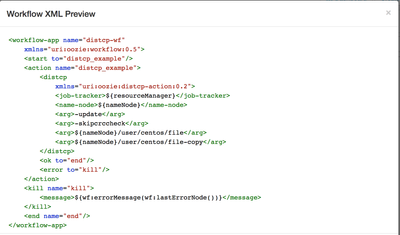

My workflow XML should now look like so
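A sketch of what the workflow definition might look like at this point is shown here. It is not a copy of the WFM-generated file: the schema versions, the kill-node name and message, and the `/user/centos` paths are assumptions, so compare it against what WFM produces on your cluster.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<!-- Sketch only; schema versions and paths are assumptions -->
<workflow-app xmlns="uri:oozie:workflow:0.5" name="distcp-wf">
    <start to="distcp_example"/>
    <action name="distcp_example">
        <distcp xmlns="uri:oozie:distcp-action:0.2">
            <job-tracker>${resourceManager}</job-tracker>
            <name-node>${nameNode}</name-node>
            <!-- delete the old target so reruns do not fail -->
            <prepare>
                <delete path="${nameNode}/user/centos/distcp-wf/file"/>
            </prepare>
            <!-- distcp options go first, one per arg element -->
            <arg>-update</arg>
            <arg>-skipcrccheck</arg>
            <!-- then source and target, in that order -->
            <arg>${nameNode}/user/centos/file</arg>
            <arg>${nameNode}/user/centos/distcp-wf/file</arg>
        </distcp>
        <ok to="end"/>
        <error to="kill"/>
    </action>
    <kill name="kill">
        <message>${wf:errorMessage(wf:lastErrorNode())}</message>
    </kill>
    <end name="end"/>
</workflow-app>
```

Each option is its own `<arg>`, in the same order you would type them on the `hadoop distcp` command line.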

So when I execute with the new arguments, everything should still be green.

On a side note, our documentation team has done a phenomenal job adding resources to our WFM section. I encourage everyone interested in WFM to review them. One caveat with distcp is that in some cases you cannot run it via Oozie from a secure cluster to an insecure one, or vice versa. There are parameters you can specify to make it work in some cases, but overall it is not supported in heterogeneous clusters. Other issues crop up when you distcp from HA-enabled clusters: you have to specify the nameservices for both clusters. Please leverage HCC to find resources on how to get that working. Hope this was useful!
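As a starting point for the HA caveat above, one commonly described approach is to pass the remote cluster's nameservice definition in the distcp action's `<configuration>` block. The following is a sketch under assumptions, not a verified recipe: `nsLocal`, `nsRemote`, the NameNode IDs, and the host names are hypothetical, and the exact set of properties required depends on your Hadoop and Oozie versions.

```xml
<!-- Sketch: goes inside the <distcp> element, after <prepare> and before <arg> -->
<configuration>
    <property>
        <!-- clusters the launcher should obtain delegation tokens for (secure clusters) -->
        <name>oozie.launcher.mapreduce.job.hdfs-servers</name>
        <value>hdfs://nsLocal,hdfs://nsRemote</value>
    </property>
    <property>
        <!-- make both nameservices known to the HDFS client -->
        <name>dfs.nameservices</name>
        <value>nsLocal,nsRemote</value>
    </property>
    <property>
        <name>dfs.ha.namenodes.nsRemote</name>
        <value>nn1,nn2</value>
    </property>
    <property>
        <name>dfs.namenode.rpc-address.nsRemote.nn1</name>
        <value>remote-nn1.example.com:8020</value>
    </property>
    <property>
        <name>dfs.namenode.rpc-address.nsRemote.nn2</name>
        <value>remote-nn2.example.com:8020</value>
    </property>
    <property>
        <name>dfs.client.failover.proxy.provider.nsRemote</name>
        <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
    </property>
</configuration>
```

With something like this in place, the target argument would use `hdfs://nsRemote/...` rather than a single NameNode host.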